Desktop Testing: Build Your Testing Strategy


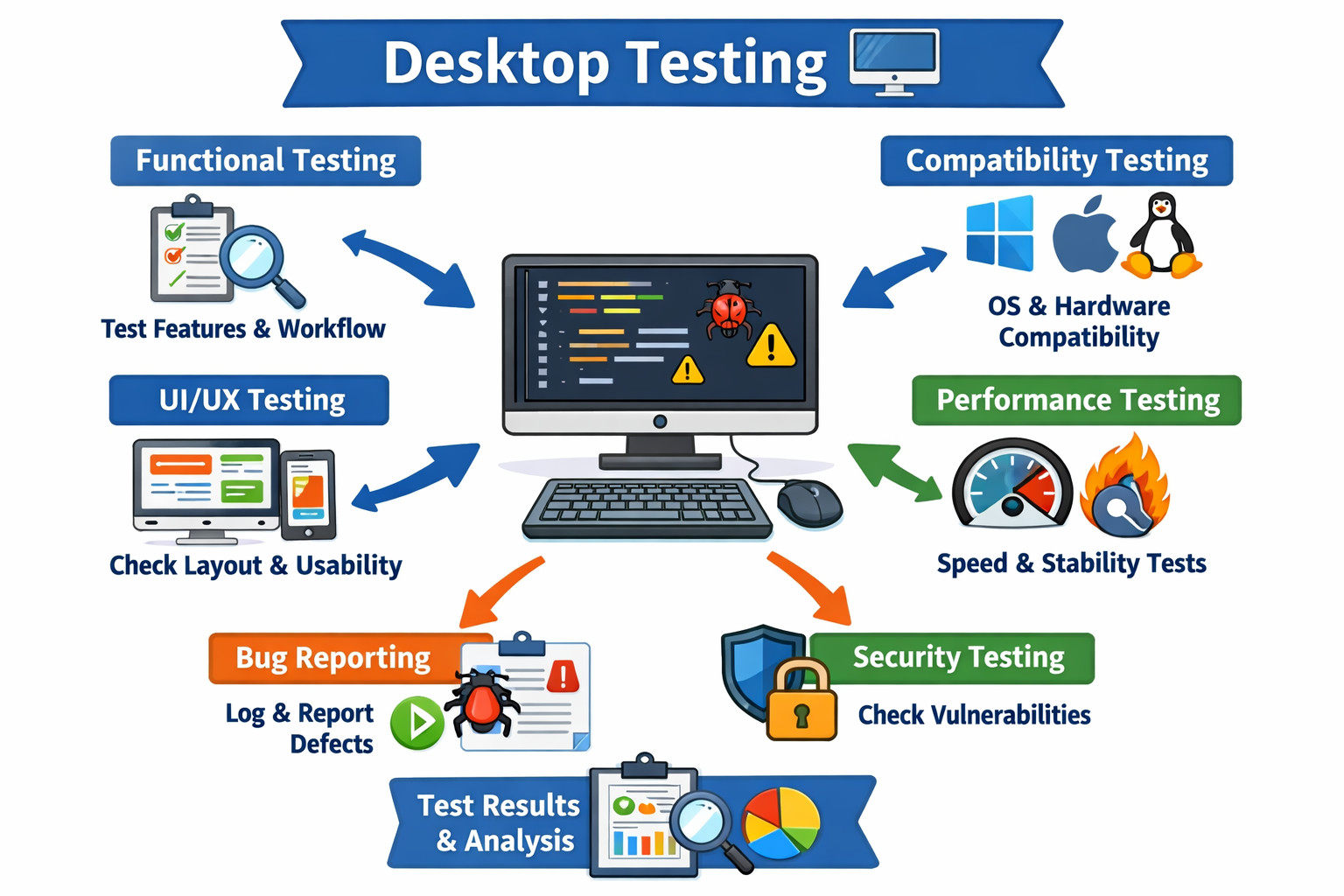

What’s Desktop Testing?

Desktop testing is a comprehensive evaluation of desktop applications to ensure they meet the desired standards for functionality, usability, performance, compatibility, and security. These applications run on a desktop computer or laptop and are installed locally, rather than being accessed through a web browser or as a mobile app.

Due to the platform-dependent nature of desktop applications, it is important for the quality assurance (QA) team to thoroughly test each application for compatibility with different operating systems, hardware configurations, and language requirements. This testing process can involve checking the installation and uninstallation of the application, verifying that version upgrades or patches do not break any functionalities, and testing the app’s performance under different workloads.

In some cases, desktop testing can be more challenging than web testing, as certain aspects of the application may not be amenable to automation. For example, security client applications often involve components with masked properties, which makes them difficult to test with traditional automation tools. In such cases, developing in-house automation tools may be necessary.

The goal of desktop testing is to deliver a high-quality and reliable user experience by ensuring that the application works as intended, provides a user-friendly interface, and is free of defects or security vulnerabilities.


Importance of Test Planning in Desktop Testing

Test planning plays a vital role in the software testing process. A good test plan acts as a blueprint for the entire testing process, outlining what needs to be tested, the approach to testing, resources required, who will conduct the testing, and how issues will be tracked and reported.

A well-structured test plan guides the testing team and provides clear direction for the testing activities. It reduces the risk of missing out on any critical tests and ensures all the application’s key functionalities are verified.

Moreover, a good test plan promotes effective communication within the team. It ensures everyone understands their roles, responsibilities, and the expected outcomes. It’s a point of reference that can help keep the team aligned and working together effectively. Furthermore, it also helps in estimating the time and effort required for testing, allowing for better project management.

How to Test Desktop Applications

There are different types of testing done on desktop applications. Since these applications are standalone, the testing types can differ from web testing. Let’s look in detail at the different types of testing that are performed on desktop applications:

- Installation/uninstallation testing

- Functional testing

- Patch testing

- Rollback testing

- Compatibility testing

- Performance testing

- UI (GUI) testing

- L10N / I18N testing

- Accessibility testing

- Disaster recovery testing

- Real user monitoring (RUM)

Installation/Uninstallation Testing

Installation testing verifies that the application can be successfully installed on a system without any issues. The QA team checks that all required files and components have been installed and that the application can be opened by clicking its icon. They also ensure that the installation only proceeds if the system meets the necessary requirements. The installation process is also tested under disruption and retry scenarios.

Uninstallation testing focuses on ensuring that all files, including system files and their folder structures, are completely removed from the system when the application is uninstalled. The QA team checks that no application or user data is left behind after the uninstallation process.

Both installation and uninstallation testing are performed on different operating systems with varying hardware options and different versions of the application. The stability of the system post-installation and post-uninstallation is also tested to ensure that there are no issues.
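One way to check for leftovers is to snapshot a directory before installation and again after uninstallation, then compare the two. The following is a minimal Python sketch of that idea. The function names `snapshot` and `leftovers` are hypothetical, and which directories to scan (install folder, user profile, and so on) is an assumption that depends on the application and the operating system.

```python
import os

def snapshot(root):
    """Return the set of file and directory paths under root, relative to root."""
    paths = set()
    for dirpath, dirnames, filenames in os.walk(root):
        for name in dirnames + filenames:
            full = os.path.join(dirpath, name)
            paths.add(os.path.relpath(full, root))
    return paths

def leftovers(before, after):
    """Paths present after uninstallation that did not exist before installation."""
    return sorted(after - before)
```

A test would call `snapshot` before installing, run the install and uninstall steps, call `snapshot` again, and assert that `leftovers` returns an empty list. Registry entries or other system stores are not covered by this sketch.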

Functional Testing

Functional testing is a crucial aspect of desktop testing, where the focus is on evaluating the application’s ability to perform its intended functions in accordance with specified requirements. Every function within the application is thoroughly tested to ensure it works as expected. Test cases can be designed to focus on a single component’s functionality or to test end-to-end user flows.

Depending on the nature of the application and the test cases, functional testing can be performed either manually or through automation. Automated functional testing is typically used for regression test cases, while manual testing can be used for new feature releases to ensure that they work as intended. The aim of functional testing is to ensure that the application functions correctly and provides an optimal user experience. Read: Functional Testing Types: An In-Depth Look

Manual Functional Testing

Manual testing refers to the process of testing desktop applications through the manual execution of test cases by a human tester. It is a hands-on approach to testing where the tester interacts with the application to verify its functionality and other aspects. In manual testing, the tester follows a set of test cases and uses their knowledge and experience to validate the application’s behavior. This may include verifying that all features work as expected, checking the application’s response to different inputs, and evaluating the overall user experience.

Manual testing is usually performed in conjunction with automated testing to provide comprehensive coverage of the application’s functionality. While automated testing is useful for quickly executing repetitive test cases, manual testing provides the human touch that is often necessary for uncovering unexpected behavior or discovering problems that are difficult to detect through automation. Read: Manual Testing: A Beginner’s Guide

Automated Functional Testing

Automated testing uses software tools to perform repetitive or time-consuming test cases on a desktop application. The goal of this testing is to save time and resources by automating the process, thereby freeing up testers to focus on expanding test coverage.

Automated testing can be performed on different operating systems and hardware configurations to ensure that the desktop application works correctly on all platforms.

The easiest way to create stable functional tests is by using testRigor. No programming skills are needed, and automated tests look like they’re manual test cases. Test maintenance is typically a big headache with most automated testing tools; however, testRigor completely solves this issue. testRigor supports not just desktop testing, but also web, mobile, and API – so it can be the only functional and end-to-end testing tool you will ever need. Read: How to Start with Test Automation Right Now?

AI Feature Validation in Desktop Applications

With AI becoming a core component of many desktop applications, traditional testing approaches are no longer sufficient. AI feature validation focuses on ensuring that intelligent behavior within the application is reliable, predictable, and safe for users.

This type of testing validates that AI-powered features produce accurate and relevant results, handle ambiguous or unexpected inputs gracefully, and fail safely when confidence is low. Testers must also verify that AI-driven decisions do not introduce bias, misleading outputs, or inconsistent behavior across sessions.

Unlike deterministic features, AI behavior can evolve over time due to model updates or data changes. Therefore, AI feature validation is not a one-time activity but an ongoing process that combines functional testing, exploratory testing, and monitoring in production-like environments. The goal is not only correctness, but also user trust and transparency. Read: What is AI Evaluation?
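Two of the checks described above, consistency across sessions and failing safely at low confidence, can be sketched as small helpers. This is only an illustration under stated assumptions: the response fields `confidence` and `used_fallback` and the default threshold of 0.6 are hypothetical, and a real application would expose this information in its own way.

```python
from collections import Counter

def consistency(outputs):
    """Fraction of runs that agree with the most common output (1.0 = fully consistent)."""
    most_common_count = Counter(outputs).most_common(1)[0][1]
    return most_common_count / len(outputs)

def unsafe_responses(responses, threshold=0.6):
    """Responses where confidence was low but no fallback was shown to the user."""
    return [
        r for r in responses
        if r["confidence"] < threshold and not r["used_fallback"]
    ]
```

A tester could send the same prompt several times, pass the collected outputs to `consistency`, and flag features whose score falls below an agreed level.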

Patch Testing

Patch testing refers to the process of evaluating and validating patches or updates to a desktop application before they are released to users. The goal of patch testing is to ensure that the changes made to the application do not negatively impact its existing functionality, performance, compatibility, or security.

When a patch or update is released for a desktop application, the QA team will test the application to ensure that the patch has been applied correctly and that the application continues to work as expected. This may include functional testing to ensure that all features are still working, compatibility testing to ensure that the application continues to work on different operating systems and hardware configurations, and performance testing to ensure that the application runs smoothly and efficiently.
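Checking that a patch "has been applied correctly" can start with comparing the version the application reports before and after the update. The sketch below assumes simple dotted numeric versions such as `2.4.1`. The helper names are hypothetical, and how the version string is read from the application is left out.

```python
def parse_version(text):
    """Convert a dotted version string such as '2.4.1' into a tuple of ints."""
    return tuple(int(part) for part in text.strip().split("."))

def patch_applied(version_before, version_after, expected):
    """True when the app reports the expected version and it is newer than before."""
    before = parse_version(version_before)
    after = parse_version(version_after)
    return after == parse_version(expected) and after > before
```

Comparing tuples of integers rather than raw strings matters here: as strings, `"2.4.10"` sorts before `"2.4.9"`, which would wrongly report a downgrade.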

Patch testing is an important part of the desktop application testing process, as it helps to ensure that users receive updates that improve the application without introducing new issues. This helps to maintain the reliability and stability of the application, ensuring that users can continue to use it with confidence.

Rollback Testing

Rollback testing is a type of recovery testing that validates the application’s ability to revert to a previous version in the event of an issue, a failure, or an unexpected error. The QA team verifies that this rollback completes successfully.

The aim of rollback testing is to ensure that the application can be returned to a known, stable state. Rollback testing can be performed manually or through automation and typically involves testing the rollback process using a range of scenarios and conditions.

In desktop application testing, rollback testing is a critical component of ensuring the overall reliability and stability of the application. By verifying that the application can be successfully rolled back to a previous version, testers can ensure that users have a fallback option in case of an issue with the latest version, reducing the risk of data loss or system instability.
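To check for data loss after a rollback, a test can compare the application’s settings or data captured in the known-good state with what is present after rolling back. The sketch below is one possible shape for that comparison. It assumes the settings can be loaded into plain dictionaries, and the function name is hypothetical.

```python
def settings_diff(before, after):
    """Describe how settings differ after a rollback compared with the known-good state."""
    missing = sorted(k for k in before if k not in after)
    added = sorted(k for k in after if k not in before)
    changed = sorted(k for k in before if k in after and before[k] != after[k])
    return {"missing": missing, "added": added, "changed": changed}
```

A rollback test would then assert that all three lists are empty, or that only explicitly allowed keys appear in them.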

Compatibility Testing

Compatibility testing ensures that the application works correctly on different hardware, software, and operating system configurations. This includes different versions of operating systems, different types of computers (such as desktops, laptops, and tablets), different screen sizes and resolutions, different hardware components (such as graphics cards, processors, and memory), and different browser versions. For example, the application might be tested on the minimum supported RAM configuration, such as 4 GB, to ensure it doesn’t freeze.

Compatibility testing is vital because it helps to identify issues that could affect the user experience or prevent the application from functioning properly. For example, if an application is not compatible with a certain version of an operating system, users with that version may not be able to install or use the application. By testing the application’s compatibility with a variety of configurations, the quality assurance (QA) team can ensure that the application works as expected for a wide range of users.
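The set of configurations to cover is often written down as a test matrix. The following sketch generates every combination of a few dimensions and drops combinations that are not supported. The dimension names and values are illustrative assumptions, not recommendations.

```python
from itertools import product

def build_matrix(dimensions, excluded=()):
    """Return every combination of the given dimensions, minus excluded combos."""
    names = list(dimensions)
    combos = []
    for values in product(*(dimensions[n] for n in names)):
        combo = dict(zip(names, values))
        # A rule excludes a combo when every key/value pair in the rule matches.
        if not any(all(combo.get(k) == v for k, v in rule.items()) for rule in excluded):
            combos.append(combo)
    return combos

# Illustrative values only.
dimensions = {
    "os": ["Windows 11", "macOS 14", "Ubuntu 24.04"],
    "ram_gb": [4, 16],
    "resolution": ["1366x768", "3840x2160"],
}
matrix = build_matrix(dimensions, excluded=[{"os": "macOS 14", "ram_gb": 4}])
```

Because the full product grows quickly as dimensions are added, teams typically prune it with exclusion rules like the one shown, or by sampling the combinations that real users are most likely to have.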

Offline-First and Data Synchronization Testing

Modern desktop applications are expected to function reliably even in unstable or disconnected network conditions. Offline-first and data synchronization testing ensure that users can continue working without connectivity and that their data remains consistent when connectivity is restored.

This testing focuses on scenarios such as creating, editing, or deleting data while offline, handling partial sync failures, resolving conflicts between local and remote data, and ensuring that no data is lost during reconnection. It also verifies that the application behaves predictably when switching between offline and online modes.
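A conflict-resolution scenario can be exercised against a small reference model. The sketch below uses last-write-wins, which is only one of several possible strategies and is an assumption here, since the strategy an application actually uses may differ. Records are modeled as `(timestamp, value)` pairs, and deletions (which would need tombstones) are not handled.

```python
def merge_records(local, remote):
    """Merge two {id: (timestamp, value)} maps using last-write-wins.

    Returns the merged map and the ids where both sides held different values.
    """
    merged, conflicts = {}, []
    for key in local.keys() | remote.keys():
        if key not in remote:
            merged[key] = local[key]
        elif key not in local:
            merged[key] = remote[key]
        else:
            if local[key][1] != remote[key][1]:
                conflicts.append(key)
            # Keep whichever side was written most recently; ties favor local.
            merged[key] = max(local[key], remote[key], key=lambda rec: rec[0])
    return merged, sorted(conflicts)
```

A sync test can compare the application’s state after reconnection with the output of such a model, and assert that every record id from both sides is still present so that no data was silently dropped.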

In 2026, this type of testing is essential for desktop applications used in enterprise environments, remote work scenarios, and geographically distributed teams. Proper offline and sync testing directly impacts user confidence and productivity.

Performance Testing

Performance testing evaluates the responsiveness, stability, and scalability of a desktop application under different load conditions. The goal is to identify bottlenecks and ensure that the application can handle the expected load and usage patterns.

Performance testing is critical for desktop applications, as it helps ensure that the application provides an optimal user experience, even when subjected to high levels of usage and demand. This can include testing the application’s memory usage, processor usage, disk I/O, network bandwidth, and response times, among other metrics.
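As a small illustration of collecting two of these metrics, response time and memory usage, the sketch below times a callable and records peak memory using Python’s standard `time` and `tracemalloc` modules. The `measure` helper is hypothetical, and `tracemalloc` only tracks memory allocated by the Python interpreter, so measuring a native desktop application would require operating-system-level tooling instead.

```python
import time
import tracemalloc

def measure(operation, repeats=5):
    """Run operation several times; report the slowest run and peak Python memory."""
    tracemalloc.start()
    durations = []
    for _ in range(repeats):
        start = time.perf_counter()
        operation()
        durations.append(time.perf_counter() - start)
    _, peak_bytes = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return {"worst_seconds": max(durations), "peak_bytes": peak_bytes}
```

A performance test could then assert that `worst_seconds` and `peak_bytes` stay under agreed budgets, so a regression fails the build rather than going unnoticed.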

Performance testing can be conducted using a range of tools, including load testing tools, performance monitoring tools, and stress testing tools, among others. Load testing tools can simulate a large number of users accessing the application at the same time, while performance monitoring tools can track the application’s performance in real time. Stress testing tools can be used to push the application to its limits, determine the maximum capacity, and identify any performance-related issues.

Overall, performance testing is a critical aspect of desktop application testing, as it helps ensure that the application provides a high level of performance and responsiveness, even under high levels of usage and demand. Read: What is Performance Testing: Types and Examples

UI (GUI) Testing

GUI (Graphical User Interface) testing verifies that the application’s graphical user interface functions correctly across different resolutions and hardware configurations. GUI testing mainly evaluates:

- Layout

- Color

- Font size

- Text boxes

- Position and alignment of texts, objects, and images.

- Clarity of the images

Testing is done by changing the screen resolution and evaluating how the application adjusts to the new resolution. GUI testing can be done via manual and automated testing. testRigor is also an excellent choice for this type of testing. Read: UI Testing: What You Need to Know to Get Started
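Position and alignment checks at different resolutions can be partly automated once the bounding boxes of the widgets are known. The sketch below flags widgets that are clipped by the screen edge or that overlap each other. How the rectangles are obtained (for example, from a UI automation framework) is outside the sketch, and the widget names are hypothetical.

```python
def layout_problems(widgets, screen_width, screen_height):
    """Return descriptions of widgets that fall outside the screen or overlap.

    widgets maps a name to (x, y, width, height) in pixels.
    """
    problems = []
    names = sorted(widgets)
    for name in names:
        x, y, w, h = widgets[name]
        if x < 0 or y < 0 or x + w > screen_width or y + h > screen_height:
            problems.append(f"{name} is clipped")
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            ax, ay, aw, ah = widgets[a]
            bx, by, bw, bh = widgets[b]
            # Two rectangles overlap when they intersect on both axes.
            if ax < bx + bw and bx < ax + aw and ay < by + bh and by < ay + ah:
                problems.append(f"{a} overlaps {b}")
    return problems
```

Running the same check against the layout captured at each target resolution gives a quick signal before a manual visual review.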

L10N / I18N Testing

Localization testing, also known as L10N testing, verifies that a desktop application is adapted to the cultural, linguistic, and regional requirements of a specific location. This type of testing checks if the application correctly displays text, currency, time format, and text alignment based on the selected country and language. It also ensures that the application does not contain any graphics that are sensitive to that region. The objective of localization testing is to make sure that the application is suitable for use in a specific region.

Internationalization testing, or I18N testing, evaluates whether a desktop application can adapt to different cultures and languages worldwide without requiring changes to its code. For example, suppose an application supports switching between English and French. In that case, the I18N testing will ensure that all text is properly translated when switching languages and that the look and feel of the application remains unchanged. The main goal of I18N testing is to verify that the application can be easily adapted to different cultures and languages without any issues. Read: Localization vs. Internationalization Testing Guide
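A common technique for I18N testing that the text above does not name is pseudo-localization: replacing each UI string with an accented, padded version so that hard-coded or truncated text becomes easy to spot before real translations exist. The following is a minimal sketch. The character mapping, the `~` padding, and the 30% expansion factor are illustrative assumptions.

```python
# Map plain vowels to accented look-alikes so translated strings stand out.
ACCENTED = str.maketrans("aeiouAEIOU", "àéîõüÀÉÎÕÜ")

def pseudo_localize(text, expansion=0.3):
    """Accent vowels, pad the string, and wrap it in brackets.

    Hard-coded (untranslated) strings stay unaccented in the UI, and truncated
    strings lose their closing bracket.
    """
    padding = "~" * max(1, round(len(text) * expansion))
    return "[" + text.translate(ACCENTED) + padding + "]"
```

For example, `pseudo_localize("Save")` returns `[Sàvé~]`. Any label that still reads `Save` in the pseudo-localized build is not going through the translation layer.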

Accessibility Testing

Accessibility testing is a critical component of desktop application testing that ensures the app is usable by people with disabilities. This includes individuals with visual, auditory, motor, or cognitive impairments. This form of testing ensures that the application is compatible with assistive technologies like screen readers, speech recognition software, and alternative input devices.

For example, a desktop application might be tested to confirm that all visual content is accompanied by alternative text descriptions that can be read by screen readers for visually impaired users. Similarly, applications might be checked to ensure they don’t rely solely on color to convey important information, as this could be inaccessible to color-blind users.

Conducting accessibility testing not only helps in creating an inclusive environment where your application can reach a wider audience but also aligns with various legal requirements and standards, such as the Web Content Accessibility Guidelines (WCAG) and Section 508 of the Rehabilitation Act. Read: Accessibility Testing: Ensuring Inclusivity in Software
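A related check that WCAG defines precisely is the contrast ratio between text and its background. The sketch below implements the WCAG 2.x relative luminance and contrast ratio formulas for 8-bit sRGB colors. It is offered as an illustration of one automatable accessibility check, not as a complete audit, and the function names are hypothetical.

```python
def _linear(channel):
    """Convert an 8-bit sRGB channel to linear light, per the WCAG 2.x definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) color, from 0.0 (black) to 1.0 (white)."""
    r, g, b = (_linear(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(foreground, background):
    """WCAG contrast ratio between two (R, G, B) colors, from 1.0 to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)
```

WCAG level AA asks for a ratio of at least 4.5:1 for normal-size text, so a test can assert `contrast_ratio(text_color, background_color) >= 4.5` for each text element it inspects.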

Disaster Recovery Testing

Disaster recovery testing is another component of desktop application testing. It ensures that the application can recover data and resume operations quickly and effectively in the event of a system failure, such as a hardware malfunction, power outage, or software bug.

To conduct disaster recovery testing, testers first simulate a disaster or failure scenario. They then follow the procedures outlined in the disaster recovery plan to recover the application and its data. Once the recovery process is complete, they verify that the application is functioning as expected and that all data has been restored accurately.

This form of testing involves multiple steps, such as:

- Creating backups of all application data and settings.

- Restoring the application from the backup.

- Verifying the integrity of the restored data and the functionality of the application.
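The integrity verification in the last step can be sketched by comparing checksums of the original and restored data. The example below assumes the application data lives in a directory tree that a test can read directly. The function names are hypothetical, and files are read fully into memory, which is acceptable for a sketch but not for very large files.

```python
import hashlib
import os

def checksums(root):
    """Map each file's path (relative to root) to the SHA-256 of its contents."""
    result = {}
    for dirpath, _, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            with open(full, "rb") as handle:
                digest = hashlib.sha256(handle.read()).hexdigest()
            result[os.path.relpath(full, root)] = digest
    return result

def restore_is_intact(original_root, restored_root):
    """True when the restored tree has the same files with identical contents."""
    return checksums(original_root) == checksums(restored_root)
```

In practice, the checksums of the original data would be recorded at backup time, since the original may no longer exist after the simulated failure.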

Disaster recovery testing can help uncover any flaws or weaknesses in the disaster recovery plan. It can also provide insights into how long the recovery process might take in a real-world scenario. This information can be used to improve the disaster recovery plan and reduce the impact of any future system failures. Read: Backup and Recovery Test Automation – How To Guide

Real User Monitoring (RUM)

Real User Monitoring (RUM) is an approach to application testing and performance monitoring that involves gathering data from users who are actively using your application in the real world. This is often achieved by embedding a small piece of code into the application, which collects data about user interactions and sends it back for analysis.

RUM can provide valuable insights into how the application performs under real-world conditions. It can show how quickly the application loads for users, where users encounter errors, and how they interact with the application. This can help identify performance bottlenecks, usability issues, or other potential problems that may not be apparent in a controlled testing environment.

For example, if RUM data shows that a certain feature of the application is rarely used, or that users frequently abandon the application at a certain point, this could indicate a usability issue that needs to be addressed. Similarly, if the data shows that the application is slow to load for users in a certain geographical location, this could point to a performance issue that needs to be resolved.
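The geographical example can be sketched as a small aggregation over collected events. The event fields `region` and `load_ms` and the threshold are assumptions for illustration. Real RUM data would come from whatever telemetry pipeline the application uses.

```python
from collections import defaultdict
from statistics import median

def median_load_time_by_region(events):
    """Group load-time samples (in ms) by region and return each region's median."""
    samples = defaultdict(list)
    for event in events:
        samples[event["region"]].append(event["load_ms"])
    return {region: median(values) for region, values in samples.items()}

def slow_regions(events, threshold_ms):
    """Regions whose median load time exceeds the threshold."""
    medians = median_load_time_by_region(events)
    return sorted(r for r, value in medians.items() if value > threshold_ms)
```

The median is used here rather than the mean so that a handful of extreme samples does not dominate. Teams often also track high percentiles to see the experience of the slowest sessions.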

In addition to identifying potential issues, RUM can also provide insights into how users interact with the application. This can inform future development efforts, helping to align them more closely with user needs and expectations. By understanding how the application is used in the real world, developers and testers can work to continuously improve the application and the user experience it provides.

Observability-Driven Performance and Experience Monitoring

Performance testing in 2026 extends far beyond traditional load and stress testing. Modern desktop testing strategies increasingly rely on observability-driven insights that combine performance metrics, behavioral data, and real-user signals.

This approach focuses on long-running application behavior, memory usage over extended sessions, background task efficiency, and responsiveness under real-world usage patterns. Instead of testing performance only in controlled environments, teams analyze runtime data to detect anomalies, regressions, and degradation trends early.
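Detecting a degradation trend in memory usage over an extended session can be approximated by fitting a straight line to periodic memory samples and looking at its slope. The sketch below computes an ordinary least-squares slope by hand. The sample format and the alert limit are assumptions, and at least two distinct timestamps are required.

```python
def growth_per_hour(samples):
    """Least-squares slope of memory (MB) over time (hours).

    samples is a list of (hours_since_start, memory_mb) pairs.
    """
    n = len(samples)
    mean_t = sum(t for t, _ in samples) / n
    mean_m = sum(m for _, m in samples) / n
    covariance = sum((t - mean_t) * (m - mean_m) for t, m in samples)
    variance = sum((t - mean_t) ** 2 for t, _ in samples)
    return covariance / variance

def looks_like_leak(samples, limit_mb_per_hour):
    """True when memory grows faster than the allowed rate."""
    return growth_per_hour(samples) > limit_mb_per_hour
```

A steady positive slope across a long soak test is a hint of a leak worth investigating. A flat or oscillating series usually is not.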

By combining performance testing with real user monitoring and analytics, QA teams gain a deeper understanding of how the application behaves in production-like conditions. This allows them to prioritize performance improvements that have the greatest impact on actual user experience.

Best Practices for Desktop Testing

Desktop application testing may vary depending on the nature of the application and the specific requirements of the project. However, certain best practices can guide a more efficient and effective testing process:

- Start testing early: Starting the testing process early in the development cycle can help to identify and address issues sooner, reducing the cost and complexity of fixing them later.

- Clear testing objectives: Each testing activity should have a clear objective. Knowing what you want to achieve with each test will guide your approach and make it easier to measure success.

- Update test cases regularly: As the application evolves, test cases should be updated, or new ones should be created. This ensures that the tests stay relevant and continue to provide value.

- Prioritize tests: Not all tests are equally critical. Prioritize your tests based on the importance of the features they cover, the risk associated with them, and their impact on the user experience.

- Use a mix of testing techniques: Manual testing and automated testing both have their strengths. A balanced approach that makes the most of both can deliver a more comprehensive assessment of the application.

- Implement continuous testing: Incorporate testing into every stage of the continuous integration/continuous deployment (CI/CD) pipeline. This helps to identify and address issues more quickly and ensures that the application is always in a releasable state.

- Record and track bugs: Use a bug tracking tool to record, track, and manage any bugs that are identified during testing. This helps to ensure that no issues are overlooked and provides a record that can be useful for future development and testing activities.

- Learn from each cycle: After each testing cycle, review the process and the outcomes. Look for any lessons learned and apply them to future testing activities.
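The "Prioritize tests" practice above can be made concrete with a simple risk score. The sketch below multiplies an estimated failure likelihood by the impact on users and sorts by the result. The field names and the 1 to 5 scales are assumptions, and teams may weight these factors differently.

```python
def prioritize(test_cases):
    """Order test cases by risk score (failure likelihood x user impact), highest first."""
    return sorted(
        test_cases,
        key=lambda case: case["likelihood"] * case["impact"],
        reverse=True,
    )
```

When time is short, the top of the resulting list is what gets executed first, and the scores themselves give the team something explicit to review and adjust after each cycle.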

How to Automate Desktop Testing

Automating your test cases is the most efficient way to ensure consistently high quality. The latest advancements in this field now allow virtually anyone to write automated UI tests from scratch without code. testRigor is an AI-driven cloud tool that simplifies test authoring as much as is technically possible.

Autonomous and Self-Maintaining Test Automation

Traditional desktop automation approaches often struggle with high maintenance costs due to frequent UI changes and platform updates. In 2026, automation strategies increasingly focus on autonomy and resilience rather than script-heavy frameworks.

Autonomous test automation emphasizes intent-based testing, where tests are written in human-readable language and focus on what the user is trying to achieve rather than how the UI is implemented. These tests are more stable, adapt better to UI changes, and require significantly less maintenance.

Modern automation solutions also support unified testing across desktop, web, mobile, and APIs, enabling true end-to-end validation. By reducing maintenance overhead and increasing test stability, autonomous automation allows teams to scale coverage, execute tests more frequently, and keep pace with faster release cycles.

With testRigor, you can:

- Write and edit end-to-end tests in plain English up to 15x faster than with other tools.

- Spend minimal time on test maintenance (due to smart algorithms that make tests extremely stable).

- Build tests not just for desktop, but also for web and mobile applications.

- Focus on automating repetitive tasks, expanding the coverage, and automating 90% of your test cases within a year.

- Execute your tests as often as needed, and get results in minutes instead of hours or days.

To learn more, read the blog: Automate Native Desktop Testing with testRigor


Wrapping Up

Desktop testing comprises various types of testing that can be done manually or through automation. Because testing desktop applications manually is quite expensive, it is advisable to automate the majority of the tasks. Make sure to opt for competent tools that simplify testing desktop applications for you.
