Acceptance Testing Automation – The Easy Way


“Technology is best when it brings people together” – Matt Mullenweg.

Acceptance testing plays a crucial role in ensuring that software meets business expectations before it reaches end users. As the final validation step, it focuses on real user scenarios and business workflows rather than technical implementation details. A failure at this stage can delay releases and impact customer confidence.

With faster release cycles and iterative Agile development, acceptance testing is no longer a one-time activity. The same scenarios often need to be validated repeatedly across sprints, making efficiency and repeatability essential. Automating acceptance testing becomes key to maintaining quality while keeping pace with modern software delivery.


What is Acceptance Testing?

Acceptance testing verifies whether a system meets its business requirements and is ready to be delivered to customers. It is the final stage of testing, following unit, integration, and system testing. The system is evaluated against a set of agreed acceptance criteria; once it passes, it can be handed over to the customer or deployed to a production environment.

Acceptance testing is typically carried out in an environment that closely mimics production. Whereas integration testing focuses on how components work together, acceptance testing focuses on business, risk, contract, and user perspectives, expressed as user scenarios. It is usually treated as black-box testing that concentrates on the end-user experience rather than the internal implementation. In the initial phase, requirements are converted into test scenarios or use cases, which are then validated during acceptance testing. If a product passes acceptance testing, it meets the agreed requirements.

In an Agile environment, a test scenario may be divided into multiple user stories. Some user stories may be developed in the current sprint, while others may be planned for future sprints. This means some acceptance scenarios may fail at the end of a sprint simply because the stories behind them are not yet fully developed. As a result, the same acceptance scenarios must be executed repeatedly, making acceptance testing an iterative process.

Read: What is Business Acceptance Testing (BAT)?

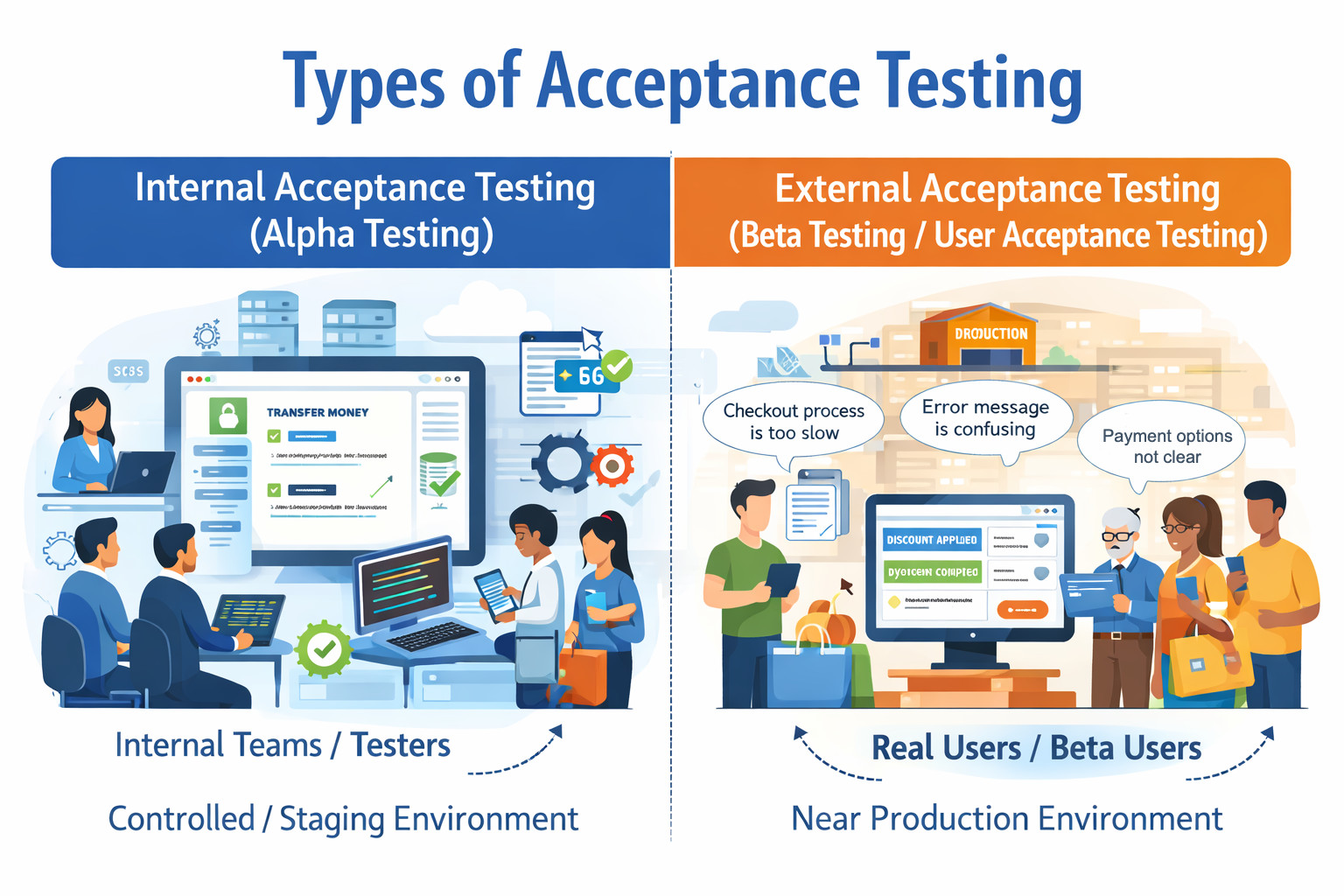

Types of Acceptance Testing

Acceptance testing can be categorized into two types:

Internal Acceptance Testing (Alpha Testing)

Internal acceptance testing, commonly referred to as alpha testing, is performed within the organization by internal teams who are not directly involved in the development of the feature. This testing usually takes place in a controlled development or staging environment before the product is exposed to real users.

The primary goal of internal acceptance testing is to validate that the application meets business requirements, functional expectations, and basic usability standards before external users interact with it.

During this phase:

- Testers execute predefined acceptance scenarios.

- Business workflows are validated end-to-end.

- Defects and gaps are documented and shared with the development team.

- Developers fix the identified issues and provide a revised build for re-validation.

Example: A company develops an online banking application with features such as fund transfers, bill payments, and account statements. Before releasing the application to customers, an internal QA or business validation team tests scenarios like:

- Transferring money between accounts

- Paying utility bills using saved beneficiaries

- Generating monthly account statements

If testers find issues such as incorrect balance calculations or broken workflows, these defects are reported and fixed internally. Only after successful internal acceptance testing is the application considered stable enough for external users.
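To make the fund-transfer scenario above concrete, here is a minimal sketch of how it could be written as an executable acceptance check. It is only an illustration: the `Account` class, the `transfer` function, and all amounts are hypothetical stand-ins, not the API of any real banking application.

```python
from dataclasses import dataclass


@dataclass
class Account:
    """Hypothetical in-memory account, standing in for the real system."""
    owner: str
    balance_cents: int


class InsufficientFunds(Exception):
    """Raised when a transfer would overdraw the source account."""


def transfer(source: Account, target: Account, amount_cents: int) -> None:
    """Move money between accounts, rejecting invalid or overdrawn transfers."""
    if amount_cents <= 0:
        raise ValueError("transfer amount must be positive")
    if amount_cents > source.balance_cents:
        raise InsufficientFunds(f"{source.owner} cannot cover {amount_cents}")
    source.balance_cents -= amount_cents
    target.balance_cents += amount_cents


def scenario_transfer_between_accounts() -> bool:
    """Given two funded accounts, when money is transferred,
    then both balances reflect the transfer and no money is lost."""
    checking = Account("checking", 50_000)
    savings = Account("savings", 10_000)
    transfer(checking, savings, 12_500)
    return (
        checking.balance_cents == 37_500
        and savings.balance_cents == 22_500
        and checking.balance_cents + savings.balance_cents == 60_000
    )


def scenario_overdraft_is_rejected() -> bool:
    """Given an account with too little money, when a larger transfer is
    attempted, then it is rejected and both balances stay unchanged."""
    checking = Account("checking", 1_000)
    savings = Account("savings", 0)
    try:
        transfer(checking, savings, 5_000)
    except InsufficientFunds:
        return checking.balance_cents == 1_000 and savings.balance_cents == 0
    return False


if __name__ == "__main__":
    print("transfer between accounts:", scenario_transfer_between_accounts())
    print("overdraft is rejected:", scenario_overdraft_is_rejected())
```

Each scenario is phrased from the business point of view (what the customer should observe) and checks outcomes only, which is what makes it an acceptance check rather than a unit test of internal logic.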

External Acceptance Testing (Beta Testing / User Acceptance Testing)

External acceptance testing is carried out by users outside the organization, such as real customers, business stakeholders, or selected beta users. This phase is often referred to as beta testing or User Acceptance Testing (UAT) and is usually conducted in a production-like environment.

The main objective of external acceptance testing is to ensure that the software:

- Meets real-world user needs

- Aligns with business expectations

- Works correctly in practical usage scenarios

- Delivers a satisfactory user experience

Unlike internal testing, external users focus less on technical correctness and more on usability, workflow suitability, and business value.

Example: An e-commerce company launches a new checkout feature that includes multiple payment options and discount codes. Selected customers are invited to participate in beta testing. They validate scenarios such as:

- Applying discount coupons during checkout

- Completing purchases using different payment methods

- Receiving order confirmation emails

Users may provide feedback like:

- The checkout flow feels too long

- Error messages are unclear

- Certain payment options are confusing

This feedback helps the organization refine the feature before a full-scale public release.

Read: Alpha vs. Beta Testing: A Comprehensive Guide.

Acceptance Testing as an Iterative Process

As mentioned earlier, acceptance testing is an iterative process. Test scenarios are written and executed against the current build. Some scenarios may pass, while others fail because they are not yet fully developed. To improve efficiency, acceptance scenarios can be automated and added to the CI/CD pipeline. This reduces redundant work, as the manual QA team does not need to repeatedly execute the same scenarios after every sprint. Automation also allows the manual team to focus more on other testing activities, such as smoke testing and exploratory testing.
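As a rough illustration of how automated acceptance scenarios can gate a CI/CD pipeline, the sketch below runs a set of scenarios and turns the result into a process exit code. The scenario names and the values they check are hypothetical; a real suite would drive the application instead of using hard-coded stand-ins.

```python
from typing import Callable, Dict, List, Tuple

# A scenario passes unless it raises AssertionError.
Scenario = Callable[[], None]


def run_suite(scenarios: Dict[str, Scenario]) -> Tuple[List[str], List[str]]:
    """Run every scenario and return (passed, failed) scenario names."""
    passed: List[str] = []
    failed: List[str] = []
    for name, scenario in scenarios.items():
        try:
            scenario()
        except AssertionError:
            failed.append(name)
        else:
            passed.append(name)
    return passed, failed


def exit_code(failed: List[str]) -> int:
    """Conventional exit code: 0 lets the pipeline continue, 1 stops it."""
    return 1 if failed else 0


# Hypothetical scenarios: the first is implemented, the second belongs to a
# user story planned for a later sprint and therefore still fails.
def login_shows_dashboard() -> None:
    page_title = "Dashboard"  # stand-in for a value read from the application
    assert page_title == "Dashboard"


def export_statement_as_pdf() -> None:
    export_available = False  # feature not built yet
    assert export_available, "statement export is not implemented"


if __name__ == "__main__":
    passed, failed = run_suite({
        "login shows dashboard": login_shows_dashboard,
        "export statement as PDF": export_statement_as_pdf,
    })
    print("passed:", passed)
    print("failed:", failed)
    raise SystemExit(exit_code(failed))
```

Because the same suite runs unchanged on every build, a scenario that fails in one sprint and passes in the next shows progress without anyone re-executing it by hand.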

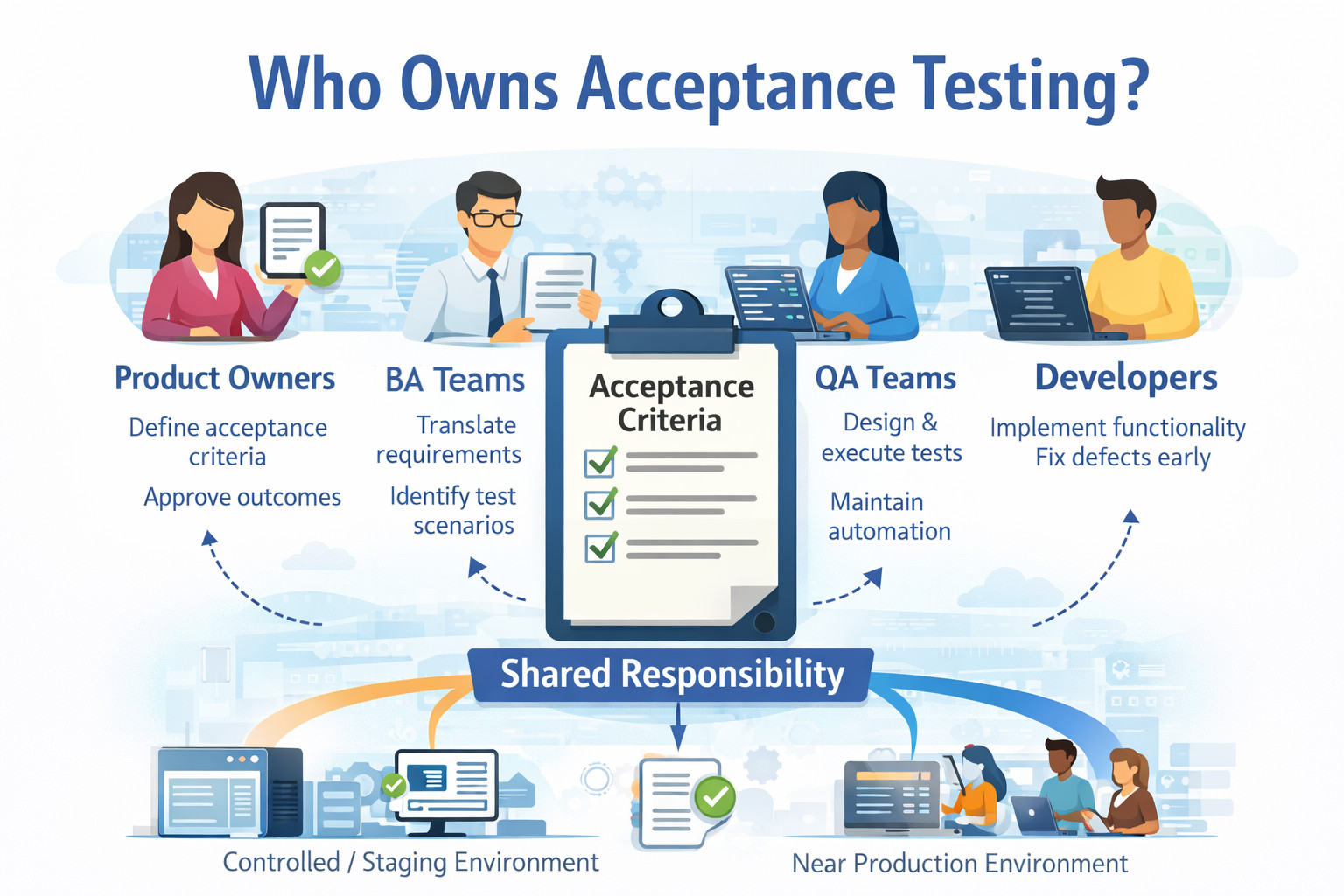

Who Owns Acceptance Testing?

Ownership of acceptance testing is often misunderstood because it does not belong to a single role. Instead, acceptance testing is a shared responsibility, where each role contributes a specific perspective to ensure the product meets business expectations and is ready for release.

- Role of Product Owners: They define the acceptance criteria and ensure that each feature delivers the intended business value. They review acceptance test outcomes and provide final approval, confirming that the implemented functionality meets user and stakeholder expectations.

- Role of Business Analysts: They translate high-level business requirements into clear, testable acceptance criteria. They help identify edge cases, alternative flows, and business rules, ensuring acceptance scenarios accurately reflect real-world usage.

- Role of QA Teams: They design, execute, and maintain acceptance test scenarios to validate end-to-end business workflows. They ensure acceptance tests are repeatable, reliable, and automated where possible to provide fast feedback without slowing down the sprint.

- Role of Developers: They implement functionality based on defined acceptance criteria and support acceptance testing by fixing defects early. Their involvement ensures technical implementation aligns with expected business behavior, reducing rework later in the sprint.

Read: Minimizing Risks: The Impact of Late Bug Detection.

Shared Ownership in Agile and ATDD

In Agile and ATDD environments, acceptance testing is a collaborative effort involving product, business, QA, and development teams. This shared ownership ensures acceptance criteria are agreed upon upfront and validated continuously, turning acceptance tests into a living representation of business requirements.

Acceptance Test Driven Development (ATDD)

Acceptance testing often involves multiple stakeholders, including business analysts, project managers, developers, testers, management, and sales teams. One widely adopted methodology is Acceptance Test Driven Development (ATDD), which is closely related to Behavior-Driven Development (BDD).

In ATDD:

- Business analysts, PMs, developers, and testers collaboratively define acceptance criteria for every new use case

- Based on these criteria, BDD-style tests are written and executed

- If a test fails, developers implement the required functionality and rerun the tests

- This cycle continues throughout every sprint
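The ATDD cycle described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a prescribed ATDD framework: the discount code `SAVE10`, the amounts, and the function names are all hypothetical. The acceptance criteria are written first as plain data, a stub shows the initial failing run, and the implementation is then written to make the failing criterion pass.

```python
from typing import Callable, List, Tuple

# Acceptance criteria agreed before any code exists, as
# (cart total in cents, discount code, expected total in cents).
# The code "SAVE10" and all amounts are hypothetical.
CRITERIA: List[Tuple[int, str, int]] = [
    (10_000, "SAVE10", 9_000),   # a valid code takes 10% off
    (10_000, "", 10_000),        # no code leaves the total unchanged
    (10_000, "BOGUS", 10_000),   # an unknown code is ignored
]

PriceFn = Callable[[int, str], int]


def failing_criteria(apply_discount: PriceFn) -> List[Tuple[int, str, int]]:
    """Return the criteria that the given implementation does not satisfy."""
    return [
        (total, code, expected)
        for total, code, expected in CRITERIA
        if apply_discount(total, code) != expected
    ]


def apply_discount_stub(total_cents: int, code: str) -> int:
    """Step 1: the feature is not built yet, so the total is returned as-is."""
    return total_cents


def apply_discount(total_cents: int, code: str) -> int:
    """Step 2: the implementation written to make the failing criterion pass."""
    if code == "SAVE10":
        return total_cents - total_cents // 10
    return total_cents


if __name__ == "__main__":
    print("before implementation:", failing_criteria(apply_discount_stub))
    print("after implementation:", failing_criteria(apply_discount))
```

Keeping the criteria as data separate from the implementation means business analysts and product owners can review or extend them without reading the pricing logic.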

Because acceptance testing involves non-technical stakeholders, legacy automation tools such as Selenium are often a poor fit. These tools require programming skills and significant effort to set up infrastructure and automation frameworks, which often causes delays.

When Selenium was more prominent, QA teams typically lagged at least one sprint behind development. Although manual testing was completed in the same sprint, automation teams required an additional sprint to create and stabilize scripts. This increased costs, extended timelines, and reduced the overall quality of automation outcomes.

Why No-Code Tools are Better for Acceptance Testing

Acceptance testing is inherently business-driven and scenario-focused. It validates user workflows, business rules, and real-world usage rather than technical implementation details. Because acceptance testing involves business analysts, product owners, QA engineers, and sometimes even sales or customer-facing teams, the tooling must be accessible to both technical and non-technical users.

Legacy automation tools were designed primarily for engineers and require programming skills, complex frameworks, and ongoing maintenance. This makes them poorly suited for acceptance testing, where clarity, collaboration, and speed matter more than technical flexibility. No-code and low-code automation tools address this gap by allowing teams to express acceptance scenarios in natural language, align tests directly with acceptance criteria, and execute them continuously without heavy technical overhead.

Scalable Automation Using testRigor

One of the biggest challenges with legacy automation tools is maintenance. Even small UI changes can break automation scripts because these tools rely heavily on XPath or ID-based locators. As test suites grow, legacy frameworks often become complex and tightly coupled, where a small change in one function can trigger multiple test failures. Over time, automation teams may end up maintaining multiple framework versions, increasing frustration, effort, and operational overhead.
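The fragility of identifier-based locators can be shown with a toy example. The sketch below models a page as a list of dictionaries and compares a lookup by `id` with a lookup by visible text after a hypothetical front-end refactor regenerates the ids. The page model, ids, and function names are invented for illustration; this is not how any particular tool identifies elements internally.

```python
from typing import Dict, List, Optional

Element = Dict[str, str]

# A toy page model: each element has an id and the text a user sees.
PAGE_BEFORE: List[Element] = [
    {"id": "btn-42", "tag": "button", "text": "Delete"},
    {"id": "btn-43", "tag": "button", "text": "Save"},
]

# The same page after a refactor: ids regenerated, visible text unchanged.
PAGE_AFTER: List[Element] = [
    {"id": "action-delete-7f3a", "tag": "button", "text": "Delete"},
    {"id": "action-save-91bc", "tag": "button", "text": "Save"},
]


def find_by_id(page: List[Element], element_id: str) -> Optional[Element]:
    """Locator tied to an implementation detail (the id attribute)."""
    return next((el for el in page if el["id"] == element_id), None)


def find_by_text(page: List[Element], tag: str, text: str) -> Optional[Element]:
    """Locator tied to what the user sees: element type plus visible text."""
    wanted = text.strip().lower()
    return next(
        (
            el
            for el in page
            if el["tag"] == tag and el["text"].strip().lower() == wanted
        ),
        None,
    )


if __name__ == "__main__":
    print("id lookup before refactor:", find_by_id(PAGE_BEFORE, "btn-42"))
    print("id lookup after refactor: ", find_by_id(PAGE_AFTER, "btn-42"))
    print("text lookup after refactor:", find_by_text(PAGE_AFTER, "button", "Delete"))
```

The id-based lookup stops finding the button once the ids change, while the text-based lookup keeps working because the label the user sees did not change.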

testRigor addresses these challenges through intelligent Gen AI-based element identification and a pure no-code approach. Let’s look into the features of testRigor.

- Eliminate Code Dependency: testRigor lets you create test scripts in parsed plain English, so no knowledge of programming languages is required. Any stakeholder, such as a manual tester, business analyst, or salesperson, can create test scripts and execute them, often faster than an automation engineer using legacy testing tools. Because tests are written in natural language, anyone can create or update test cases, which increases coverage.

- One Tool For All Testing Types: testRigor performs more than just web automation. It can be used for:

- Web and Mobile browser testing

- Mobile app testing

- Desktop app testing

- API testing

- Visual testing

- Database testing

- Accessibility testing

- Mainframe testing

- AI features testing

You don’t have to install different tools for different types of testing; testRigor covers all of them in a single tool.

- Stable Element Locators: Unlike traditional tools that rely on specific element identifiers, testRigor uses Vision AI and AI context to identify elements. You simply describe an element by the text you see on the screen, and the AI finds it automatically. This means your tests adapt to changes in the application’s UI, so you don’t have to keep updating fragile selectors, and the team can focus on creating new use cases instead of fixing flaky XPaths. Here is an example that identifies elements by the text shown for them on the screen:

    click "cart"
    click on button "Delete" below "Section Name"

- Integrations: testRigor offers built-in integrations with most of the popular CI/CD tools like Jenkins and CircleCI, test management systems like TestRail, defect tracking solutions like Jira and Pivotal Tracker, infrastructure providers like AWS and Azure, and communication tools like Slack and Microsoft Teams.

    login as customer //reusable rule
    click "Accounts"
    click "Manage Accounts."
    click "Enable International Transactions"
    enter stored value "daily limit value" into "Daily Limit"
    click "Save"
    click "Account Balance" roughly to the left of "Debit Cards"
    check the page contains "Account Balance"

In the above script, you can see the usage of reusable rules and datasets. To understand how to use testRigor for creating test cases and executing them, please refer to this guide:

You can go through more features of testRigor and its documentation as well.

Wrapping Up

In today’s highly competitive market, speed is no longer optional; it is a decisive advantage. With “First in Market” as the dominant strategy, acceptance testing must be fast, reliable, and efficient without becoming a bottleneck in the delivery pipeline.

testRigor enables organizations to achieve this balance by delivering high-quality acceptance test automation with minimal effort and overhead. By allowing teams to automate, execute, and maintain acceptance scenarios within the same sprint, testRigor accelerates release cycles while preserving quality. As a result, teams can move faster, release with confidence, and meet market demands without compromising user experience or business expectations.
