Cost-Aware Testing: When Every Test Case Costs Money


When you write tests for a software application, you usually treat them as if they were “free”. But have you considered that they come with a cost? We run tests on every commit, add more tests to increase coverage, and let the CI pipelines churn. What we often overlook is that in many real environments, every test case has a price tag. This makes testing an economic system in which the cost of every test case matters, and that is why we need cost-aware testing.


This article explores why tests cost money, how to model those costs, and practical strategies to maximize quality under constraints.

What is Cost-Aware Testing?

Cost-aware testing is a strategic software testing methodology that ensures efficient resource allocation by balancing testing quality with the financial and time-related costs of execution.

You can begin cost-aware testing with a question that is uncomfortable yet productive:

What does it cost us to keep this test case, and if it didn’t exist, what would that cost us in defects, incidents, support load, and reputational damage?

It involves prioritizing, reducing, or optimizing test suites, particularly in regression testing, to maximize fault detection while minimizing expenses. A cost-aware testing approach integrates cost considerations into the software development lifecycle (SDLC).

Key Aspects of Cost-Aware Testing

- Goal: Find and hold the “sweet spot” between high-quality testing and financial sustainability. This involves collaboration among QA, engineering, and finance teams.

- Test Suite Reduction/Minimization: Algorithms can identify and eliminate redundant test cases in large suites. This lowers execution time and resource use while maintaining high test coverage.

- Prioritization: High-risk or critical areas are tested first, and metrics like APFD (Average Percentage of Faults Detected) are used to evaluate how effective a given test order is.

- Cloud and Resource Optimization: Cost-aware testing ensures that testing does not overspend on infrastructure in cloud-based environments, balancing performance with budgetary constraints.

Examples

- Regression Testing: Instead of running all tests after a code change, a cost-aware team runs only the most critical and relevant ones to save time and money.

- AI/Machine Learning: Cost-aware AI (CoAI) frameworks help with accurate predictions using a small number of low-cost features, significantly reducing computational costs.

- System Testing: Services are placed on a cheaper, available infrastructure using cost-aware scheduling in edge-cloud scenarios without degrading performance.

Why is “Every Test Case Costs Money” More Common?

- Cloud CI Pricing: Hosted runners, container builds, parallel execution, artifact storage, and network egress all cost money, as does every API call, server spin-up, and unit of data storage in a CI/CD pipeline. A single inefficient test suite running on a large instance can accumulate high costs.

- Device and Browser Farms: Real devices are billed per minute, and the bill multiplies across OS versions and geographies.

- Hardware-in-the-loop (HIL) Labs: Scarce physical rigs, calibration time, and technician support also cost money.

- Third-party Integrations: Payment gateways, identity providers, SMS services, maps, and ML inference APIs often charge per request.

- Test Data and Environments: When used for testing, operations such as database restores, seeded datasets, anonymization, and staging replicas incur costs.

- Time-to-Feedback Cost: Slow pipelines delay merges, increase context switching, and reduce throughput. The waiting time adds to the cost.

- Maintenance & Technical Debt: Test cases are code and require maintenance, updates, and debugging. This upkeep often costs more in engineering time than the tests save in debugging.

- Other Costs: Manual verification, exploratory sessions, triage meetings, and flaky test investigations all add up. Beyond automated execution, testers also need time and training, which adds significantly to the total cost.

The reality is that test execution consumes resources, both physical and digital, and resources have a price, either on an invoice or in your team’s velocity.

The Economics of a Test Case

The economics of a test case come down to optimizing the cost-to-benefit ratio of finding software defects early, since fixing a defect before release is often cited as 10 to 100 times cheaper than fixing it in production.

Effective test economics prioritizes high-value test cases, reduces redundant tests, and manages test suite maintenance (which by some estimates makes up about half of software maintenance costs).

- The Cost of Late Bug Detection: The later in the SDLC a defect is fixed, the more it costs, so failing to test early makes defects far more expensive.

- Prevention vs. Appraisal: Costs are divided between prevention (e.g., training and standards) and appraisal (e.g., testing and code reviews).

- Test Case Prioritization (TCP): You select and execute test cases based on risk or coverage. With this, you can maximize fault detection within a budget and limited time.

- Regression Testing Costs: Optimize and automate regression test execution to reduce costs, especially in large projects.

You can also use the following model, which treats tests like assets in a portfolio.

- Fixed Costs: To create and enable a test

- Variable Costs: Scale with each execution

- Maintenance and “tax”: Recurring cost for stable tests

- Opportunity Cost (the silent budget killer)

For cost-aware teams, pipeline time is a billable resource because every additional minute is multiplied across every engineer, every merge, and every day.
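The portfolio model above can be sketched in a few lines of Python. This is a minimal illustration, not a standard formula: the class name `CaseCost`, its fields, and the dollar figures are all assumptions made up for the example, and opportunity cost is left out because it is hard to express as a single number.

```python
from dataclasses import dataclass


@dataclass
class CaseCost:
    """Hypothetical cost record for one test case (all figures illustrative)."""
    fixed: float                  # one-time cost to create and enable the test
    per_run: float                # variable cost of a single execution
    runs_per_month: int           # how often the test executes
    maintenance_per_month: float  # recurring upkeep, even when the test is stable

    def monthly_cost(self) -> float:
        # Variable cost scales with executions; maintenance is a flat "tax".
        return self.per_run * self.runs_per_month + self.maintenance_per_month

    def total_cost(self, months: int) -> float:
        # Fixed cost is paid once, recurring cost every month.
        return self.fixed + months * self.monthly_cost()


e2e_checkout = CaseCost(fixed=400.0, per_run=0.25,
                        runs_per_month=2000, maintenance_per_month=100.0)
print(e2e_checkout.monthly_cost())   # 600.0
print(e2e_checkout.total_cost(12))   # 7600.0
```

Even with a per-run cost of only a quarter, the yearly total in this made-up example is dominated by execution volume, which is why run frequency is usually the first lever to look at.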

Value: What Else Cost-Aware Testing Brings?

A cost-aware testing approach doesn’t only measure the expense (in terms of money); it measures the reduced risk and keeps the tests high-value.

- Catches defects before production: This is the most obvious return on testing, in which defects, especially high-severity or high-frequency defects, are caught before they reach users. High-value tests protect against high-impact failures. If the test is able to prevent expensive production incidents, you can say it justifies the cost.

- Reduces uncertainty quickly: A good test reduces fear, and fast feedback enables safe refactoring with rapid delivery. Merging is performed with less hesitation, and manual verification is reduced. In a sense, you are buying engineering velocity.

- Enforces contracts and invariants: High-value tests protect:

- Security boundaries

- Compliance requirements

- Core user workflows

- Authentication and authorization

- Data integrity

When you spend on tests in these areas, you’re actually buying business continuity and reputation protection.

- Improves observability of quality: Tests provide visibility into system health and help detect drift, flaky behavior, or environment instability. A good test suite tells you:

- What is broken

- Where it broke

- Why it broke

- Whether it’s safe to release

High-value tests provide greater clarity and support decision-making. To judge how much value a test provides, ask about its:

- Impact (if it fails in production, what’s the damage?)

- Likelihood (how often do changes break this area?)

- Detectability (would other tests or monitoring catch it anyway?)

- Time-to-signal (how quickly does it tell you something trustworthy?)

- Actionability (can engineers quickly interpret and fix it?)
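One way to make these five questions comparable across tests is to turn them into a rough score. The sketch below is an assumption, not an established formula: each factor is rated from 1 to 5, "detectability" is expressed as `uniqueness` (5 means nothing else would catch the failure), and the weighting that lets risk dominate is an arbitrary choice.

```python
def value_score(impact: int, likelihood: int, uniqueness: int,
                signal_speed: int, actionability: int) -> float:
    """Combine 1-5 ratings into a 0..1 score (hypothetical weighting).

    uniqueness is the inverse of detectability: 5 means no other test or
    monitor would catch the failure, 1 means it is fully redundant.
    """
    ratings = [impact, likelihood, uniqueness, signal_speed, actionability]
    if any(not 1 <= r <= 5 for r in ratings):
        raise ValueError("ratings must be between 1 and 5")
    risk = impact * likelihood / 25                      # how much is at stake
    quality = (uniqueness + signal_speed + actionability) / 15  # how good the signal is
    return risk * quality


checkout_e2e = value_score(impact=5, likelihood=4, uniqueness=4,
                           signal_speed=3, actionability=4)
tooltip_ui = value_score(impact=1, likelihood=2, uniqueness=2,
                         signal_speed=5, actionability=5)
print(checkout_e2e > tooltip_ui)  # True
```

The absolute numbers mean little; the point is to rank tests so that the expensive ones with low scores become visible candidates for removal or re-layering.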

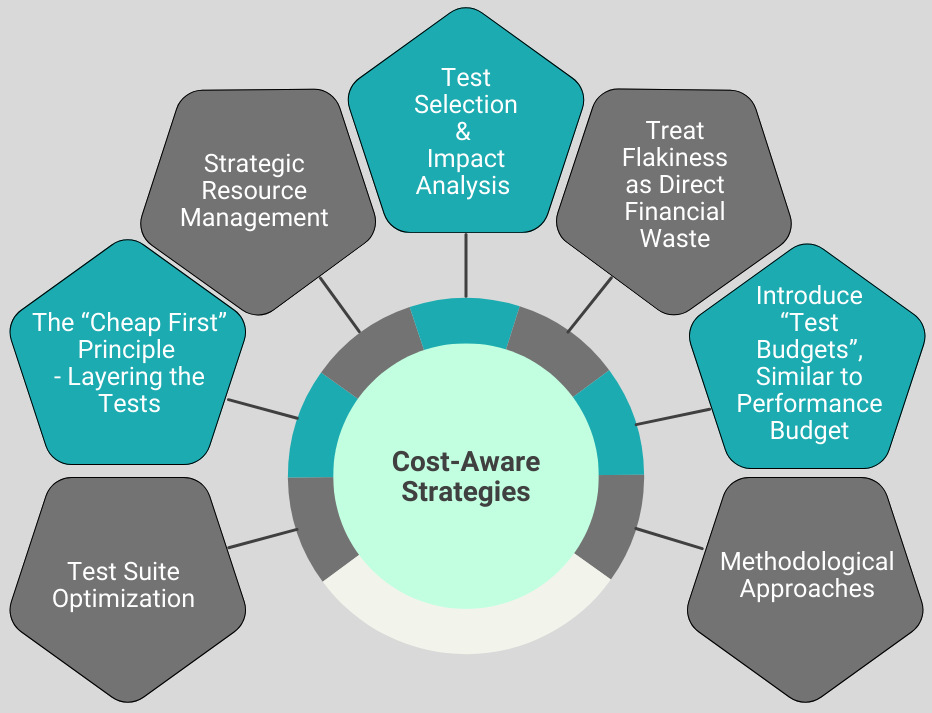

Cost-Aware Strategies that Work in Practice

These are methodologies designed to optimize the software testing process and its resources: maximizing fault detection and maintaining high software quality while, most importantly, reducing costs. The strategies discussed next involve prioritizing, reducing, or optimizing test cases to achieve maximum code coverage with fewer resources.

Here are the key cost-aware testing strategies:

Test Suite Optimization

- Test Suite Reduction: Identify and remove redundant test cases from the test suite. This decreases the execution time and resource consumption.

- Test Case Prioritization: Order and prioritize test cases based on specific criteria, such as risk, severity, or, in regression testing, changes in code to ensure critical tests are executed earlier. Also, run expensive tests more selectively. For example, run tests that historically catch regressions frequently or deprioritize tests for stable, low-impact modules.

- Maximum Fault Coverage (MFTS Algorithm): Use this technique to reduce test suites while ensuring maximum code coverage. This improves the efficiency of fault detection.
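As an illustration of test suite reduction, here is a minimal sketch of a generic greedy set-cover heuristic. It is not the MFTS algorithm named above, only a simple stand-in that shows the idea; the test names and the coverage map are hypothetical.

```python
def reduce_suite(coverage: dict[str, set[str]]) -> list[str]:
    """Greedy reduction: keep picking the test that covers the most
    still-uncovered requirements until everything is covered."""
    remaining = set().union(*coverage.values())
    selected = []
    while remaining:
        # sorted() makes tie-breaking deterministic (alphabetical).
        best = max(sorted(coverage), key=lambda t: len(coverage[t] & remaining))
        selected.append(best)
        remaining -= coverage[best]
    return selected


# Hypothetical map of test name -> requirements (or code areas) it covers.
coverage = {
    "test_login": {"auth", "session"},
    "test_login_remember_me": {"auth", "session", "cookies"},
    "test_checkout": {"cart", "payment"},
    "test_cart_only": {"cart"},
}
print(reduce_suite(coverage))  # ['test_login_remember_me', 'test_checkout']
```

The greedy result is not guaranteed to be the smallest possible suite, and equal coverage does not guarantee equal fault detection, so a reduced suite is worth validating against historical failures before the dropped tests are deleted.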

You can use search algorithms (such as genetic algorithms) to order the suite so that the most critical, high-risk tests run first, maximizing the rate of fault detection (measured by APFD) early in the cycle.
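APFD can be computed directly from a test order and a record of which tests detect which faults. The sketch below uses the commonly cited definition, APFD = 1 - (TF1 + ... + TFm) / (n * m) + 1 / (2n), where n is the number of tests, m the number of faults, and TFi the 1-based position of the first test that reveals fault i. The fault data is invented for illustration, and the function assumes every fault is detected by at least one test in the order.

```python
def apfd(order: list[str], detects: dict[str, set[str]]) -> float:
    """Average Percentage of Faults Detected for a given test order."""
    faults = set().union(*detects.values())
    n, m = len(order), len(faults)
    first_pos: dict[str, int] = {}
    for pos, test in enumerate(order, start=1):
        for fault in detects.get(test, set()):
            first_pos.setdefault(fault, pos)  # keep only the earliest position
    if m == 0 or set(first_pos) != faults:
        raise ValueError("every fault must be detected by a test in the order")
    return 1 - sum(first_pos.values()) / (n * m) + 1 / (2 * n)


# Hypothetical history: which test reveals which fault.
detects = {"t1": set(), "t2": {"f1"}, "t3": {"f2", "f3"}, "t4": {"f1"}}

original = apfd(["t1", "t2", "t3", "t4"], detects)
prioritized = apfd(["t3", "t2", "t1", "t4"], detects)
print(round(original, 4), round(prioritized, 4))  # 0.4583 0.7917
```

Running the fault-revealing tests first raises APFD from about 0.46 to about 0.79 in this toy example, without adding or removing a single test.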

The “Cheap First” Principle: Layering the Tests

- Unit Tests: Cheapest per run, fast signal, and high volume.

- Component/Contract Tests: Moderate cost, strong isolation, and great for API boundaries.

- Integration Tests: Typically higher cost, and validate real wiring (integration).

- End-to-end (E2E) Tests: Often the most expensive and most flaky, but they validate real user journeys.

- Production Monitoring/Canaries: Continuous cost, but excel in high realism and early detection.

Cost-aware teams must push as much validation as possible into cheaper layers, reserving expensive E2E runs for the most valuable flows.

Strategic Resource Management

- Test Automation: Use AI-powered tools like testRigor to automate repetitive, time-consuming manual tests, which reduces labor costs and increases accuracy.

- Risk-Based Testing: Focus testing efforts on high-risk, critical, or frequently used components to maximize ROI and prevent costly defects in production.

- Outsourcing/Offshoring: Utilize third-party or offshore teams as and when required for specific testing tasks to maintain quality while reducing overhead costs.

Test Selection and Impact Analysis

When only a small portion of the code changes, there is no need to run every test. Select the tests to run based on impact, dependency, and coverage.

- Dependency-based Selection: Map tests to modules/packages and execute only affected tests.

- Coverage-based Selection: Execute tests covering changed lines (more complex).

- Ownership Tags: You can skip the integration suite for a service that has not changed.

In addition to the above approaches, even a basic mapping (tests → folders/services) can dramatically reduce the test spend.
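A basic folder-to-tests mapping could look like the following sketch. The directory names and the `TEST_MAP` contents are hypothetical; in a real repository, the list of changed files would typically come from the version control system. Any change outside the mapped folders falls back to the full suite, which keeps the selection logic conservative.

```python
from pathlib import PurePosixPath

# Hypothetical mapping from source folders to the test suites that cover them.
TEST_MAP = {
    "services/payments": ["tests/payments", "tests/e2e/checkout"],
    "services/auth": ["tests/auth", "tests/e2e/login"],
    "libs/common": ["tests/payments", "tests/auth"],
}


def select_tests(changed_files, test_map=TEST_MAP, fallback=("tests",)):
    """Return the sorted test paths affected by the changed files."""
    selected = set()
    for path in changed_files:
        parents = {str(p) for p in PurePosixPath(path).parents}
        owners = [folder for folder in test_map if folder in parents]
        if not owners:
            # Unmapped change: run everything rather than risk a gap.
            return sorted(fallback)
        for folder in owners:
            selected.update(test_map[folder])
    return sorted(selected)


print(select_tests(["services/payments/api.py"]))
# ['tests/e2e/checkout', 'tests/payments']
print(select_tests(["README.md"]))
# ['tests']
```

The fallback is a deliberate design choice: a mapping that silently skips tests for unknown paths saves money until the first missed regression.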

Treat Flakiness as Direct Financial Waste

Flaky tests are expensive in several ways:

- Reruns cost compute.

- Investigating the failures costs engineering time.

- Teams lose trust and start ignoring signals.

A cost-aware policy for flaky tests could be:

- If a test’s flake rate exceeds a threshold, it is quarantined (not included in the current release gate).

- Quarantined tests must have an owner and a fixed SLA.

- The goal is to restore trust in the signal.

A suite with even a minor 1% flake at scale can become an enormous hidden expense. One option is to run the full matrix less frequently (nightly or pre-release) and keep the PR gate lean.
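To see how a small flake rate compounds, here is a back-of-the-envelope calculation. It assumes that each test flakes independently with the same probability, which real suites only approximate, and the run counts and prices are made up.

```python
def pipeline_flake_probability(num_tests: int, flake_rate: float) -> float:
    """Probability that at least one of num_tests independent tests flakes."""
    return 1 - (1 - flake_rate) ** num_tests


def monthly_rerun_cost(runs_per_month: int, num_tests: int,
                       flake_rate: float, cost_per_run: float) -> float:
    """Expected spend on one rerun for every pipeline run hit by a flake."""
    p = pipeline_flake_probability(num_tests, flake_rate)
    return runs_per_month * p * cost_per_run


# 100 tests that each flake 1% of the time:
p = pipeline_flake_probability(num_tests=100, flake_rate=0.01)
print(round(p, 3))  # 0.634

# Hypothetical: 1,000 pipeline runs a month at $2 per run.
cost = monthly_rerun_cost(1000, 100, 0.01, 2.0)
print(round(cost))  # 1268
```

Under these assumptions, nearly two out of three pipeline runs see at least one spurious failure, and that is before counting the engineering time spent investigating them.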

Introduce “Test Budgets”, Similar to Performance Budgets

A powerful cultural shift in reducing test costs is to treat pipeline time and test cost as budgets.

For example, the PR pipeline budget might be ≤ 12 minutes at p95, the E2E budget ≤ 30 device-minutes per PR, and the flake budget a rerun rate of ≤ 0.3%.

Within these thresholds, when a new test is added, it competes for budget like any other resource. This forces prioritization and encourages the use of cheaper alternatives.
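A budget only changes behavior if something checks it. The sketch below shows one way a CI step could compare measured values against the example thresholds above. The metric names, the nearest-rank percentile method, and the measurements are assumptions for illustration.

```python
# Budgets taken from the example thresholds in the text.
BUDGETS = {
    "pr_pipeline_p95_minutes": 12.0,
    "e2e_device_minutes_per_pr": 30.0,
    "rerun_rate": 0.003,
}


def p95(values):
    """95th percentile using the nearest-rank method."""
    if not values:
        raise ValueError("need at least one measurement")
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


def budget_violations(measured, budgets=BUDGETS):
    """Return {metric: (measured, limit)} for every budget that is exceeded."""
    return {name: (measured[name], limit)
            for name, limit in budgets.items()
            if measured[name] > limit}


# Hypothetical measurements from the last 20 PR pipeline runs.
durations = [9.0] * 18 + [11.5, 25.0]
measured = {
    "pr_pipeline_p95_minutes": p95(durations),
    "e2e_device_minutes_per_pr": 42.0,
    "rerun_rate": 0.002,
}
print(budget_violations(measured))
# {'e2e_device_minutes_per_pr': (42.0, 30.0)}
```

Using p95 rather than the maximum means that a single 25-minute outlier does not fail the pipeline budget, while a sustained slowdown would.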

Methodological Approaches

- Cost-Aware ERO (Expected Reward Optimal) Investing: This strategy is used in sequential testing to manage a limited budget. It adapts the number of samples for each test to optimize the rate of true positives and false discoveries.

- Cost-Aware Calibration: This is a technique for machine learning classifiers, and it adjusts the model to minimize the cost of miscalibration. The technique is especially useful when false negatives are more expensive than false positives (e.g., in cancer detection).

- Cost-Aware Retraining (Cara): The Cara strategy maintains machine learning models by determining the optimal time to retrain them based on the trade-off between model staleness costs and retraining costs.

Cost-Effectiveness in Manual vs. Automation

To understand the cost implications of manual vs. automated testing, we must consider the entire SDLC. Manual testing methods have low initial setup costs and are great for short-term projects. Manual testing is also cost-effective for exploratory testing or when there are small, frequent changes.

Automation testing, on the other hand, is more cost-effective for long-term, large-scale, or repetitive tasks. It offers higher efficiency and is more accurate than manual testing. Automation reduces human effort and saves costs, but it requires a high upfront investment.

- Initial Costs: Manual testing has low upfront costs, whereas automation initially requires significant investment in tools, infrastructure, and training.

- Test Case Maintenance: Manual test cases require continuous updates as the software changes, which increases maintenance costs. Automated tests incur costs for script creation and maintenance up front, but they provide long-term savings through reuse.

- Testing Frequency: The frequency of manual testing is limited by the number of testers and their availability. Automated testing can be run as often as needed and reduces overall time and costs.

- Test Coverage: Manual test coverage depends heavily on the tester’s expertise and attention to detail. Automated testing, by contrast, applies the same checks consistently on every run, which makes broad coverage easier to sustain.

- Regression Testing: When it comes to regression testing, automation testing is more efficient, saving a significant amount of time and effort, and ultimately the cost. Manual testing is time-consuming.

- Human Error & Precision: Manual testing is prone to human errors and often leads to missed defects, increasing the costs. Automation offers consistency and precision if the test scripts are stable.

- Long-Term Savings: Automation becomes cheaper over time due to the reusability of scripts, while manual testing costs continue to rise with repetitive human effort.

- Efficiency and Speed: Automation is much faster and allows for 24/7 testing, improving ROI on large projects.

- Best Use Cases: Manual testing is ideal for ad-hoc, usability, or exploratory testing. Automation excels in performance, load, and repetitive functional testing.

Maximizing QA Efficiency and Minimizing Costs

- Strategic Test Selection: Critical areas of the application are identified for automation testing, such as regression tests and repetitive tasks. Manual testing is reserved for exploratory, usability, and ad-hoc scenarios.

- Continuous Training: QA teams are trained to adapt to automated testing tools and methodologies, allowing them to work more efficiently.

- Test Automation Frameworks: Choose frameworks that facilitate script creation with minimum maintenance, reducing the overall cost of automation.

- Regular Review: Testing strategies are continuously reviewed to ensure they align with the evolving needs of your project, and the balance between manual and automated testing is adjusted accordingly.

What are the Cost-Aware Metrics to Measure?

- Cost per signal: How much does it cost to get a trustworthy pass/fail?

- Cost per defect prevented (approximate): Use historical data. Which tests have historically caught regressions?

- Mean time to feedback (MTTF): For PR gates, this is often more valuable than running “everything”.

- Flake rate and rerun rate: Direct proxy for wasted compute and human attention.

- Escape rate: Defects reaching production per release, normalized by change volume.

- Test maintenance ratio: Time spent maintaining tests vs. building product changes.

- Test cost per transaction/API call: Measures how much a specific functional test costs in cloud infrastructure.

- Defect detection percentage: Percentage of bugs found by tests versus those found in production.

- Return on Investment (ROI): Calculates the savings from bugs caught early vs. the cost of running the tests.

With cost-aware testing, QA transforms from a “cost center” into a “value driver,” ensuring that the software is reliable without breaking the bank.
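Several of these metrics are simple ratios. The sketch below shows one plausible way to compute three of them. The formulas are common conventions rather than definitions taken from a standard, and all input figures are invented.

```python
def cost_per_signal(total_run_cost: float, trustworthy_results: int) -> float:
    """Spend divided by the number of pass/fail results you could act on."""
    return total_run_cost / trustworthy_results


def defect_detection_percentage(found_by_tests: int, found_in_production: int) -> float:
    """Share of all known defects that were caught before release."""
    total = found_by_tests + found_in_production
    return 100 * found_by_tests / total


def roi(savings_from_early_bugs: float, testing_cost: float) -> float:
    """Net return per unit of money spent on testing."""
    return (savings_from_early_bugs - testing_cost) / testing_cost


# Hypothetical quarter: $1,200 of CI spend, 4,800 trustworthy results,
# 45 defects caught by tests, 5 escaped, $50,000 saved for $20,000 spent.
print(cost_per_signal(1200.0, 4800))        # 0.25
print(defect_detection_percentage(45, 5))   # 90.0
print(roi(50_000.0, 20_000.0))              # 1.5
```

The savings figure in the ROI calculation is the hardest input to obtain, so it is usually an estimate based on the historical cost of comparable production incidents.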


Example: Shrinking an Expensive E2E Suite Without Getting Riskier

Consider a team starting from this position:

- E2E tests = 1,500

- Time on PR gates = 45 minutes

- Heavy device-farm costs

- Frequent reruns due to flakiness

A cost-aware approach:

- Categorize tests:

  - 150 critical journeys (auth, checkout, refunds)

  - 500 medium-risk flows

  - 850 low-risk UI permutations

- Re-layer:

  - Convert low-risk UI permutations into component tests.

  - Add contract tests for service boundaries that E2E was implicitly validating.

- Change execution schedule:

  - PR gate: 150 critical E2E + unit/component suite (≤ 12 minutes)

  - Post-merge: medium-risk E2E

  - Nightly: full E2E and broader device matrix

- Deflake aggressively:

  - Quarantine unstable tests.

  - Fix root causes: waiting strategies, test data coupling, and environment instability.

The outcome:

- PR feedback becomes faster and more predictable.

- Total coverage remains high, but the expensive portion is used strategically.

- Device-farm spend drops because matrix runs move out of the PR path and become analytics-driven.

Common Pitfalls in Cost-Aware Testing

- Cost-aware becomes an excuse to cut quality: If the only KPI for testing is to reduce CI cost, more defects are likely to be shipped.

- Optimize execution cost but ignore maintenance cost: A test that’s cheap to execute but expensive to maintain is still costly. Brittle tests create drag.

- Over-mocking creates false confidence: Replacing all integration/E2E checks with mocked tests can make the suite pass while the real system breaks. Cost-aware does not mean simulating everything or using only mock data. It means using realism where it buys down risk.

- Selection logic becomes a new source of bugs: If test selection mapping is incorrect, you’ll miss needed coverage. Selection should be simple at first, validated properly, and then maintained like production code.

- Teams don’t trust quarantined tests: Quarantines become a graveyard if not paired with ownership and deadlines.

Conclusion

Test cases cost money, but the objective is not to minimize testing. It is to maximize confidence per unit cost. Test suites should be treated like living systems, which are measurable, maintainable, and aligned with actual product risk.

You will get a healthier suite, faster pipelines, fewer flakes, and higher trust in the signal if you build a culture where tests must justify both their runtime and their value. And ironically, this often leads to better coverage, not because more tests are run but because you invest in the right kinds of verification.

Frequently Asked Questions (FAQs)

- What role does flakiness play in testing costs?

Flaky tests significantly increase costs through reruns, investigation time, delayed merges, and loss of trust in test results. Treating flakiness as a measurable and fixable cost is central to cost-aware testing.

- Is cost-aware testing only relevant for large enterprises?

No. Startups and small teams often feel more cost pressure because CI minutes, device labs, and engineering time directly impact runway and delivery speed. Cost-aware testing benefits teams of all sizes.

- What is the ultimate goal of cost-aware testing?

The goal is to maximize confidence per dollar (or per minute) spent, ensuring that every test contributes meaningful risk reduction without unnecessarily slowing development or increasing infrastructure costs.

- How often should expensive test suites be run?

It depends on risk and feedback needs. Critical tests should run in PR pipelines, while broader regression and full device matrices can run post-merge, nightly, or before major releases to balance cost and confidence.
