Ready for Meta’s Just-in-Time Tests?


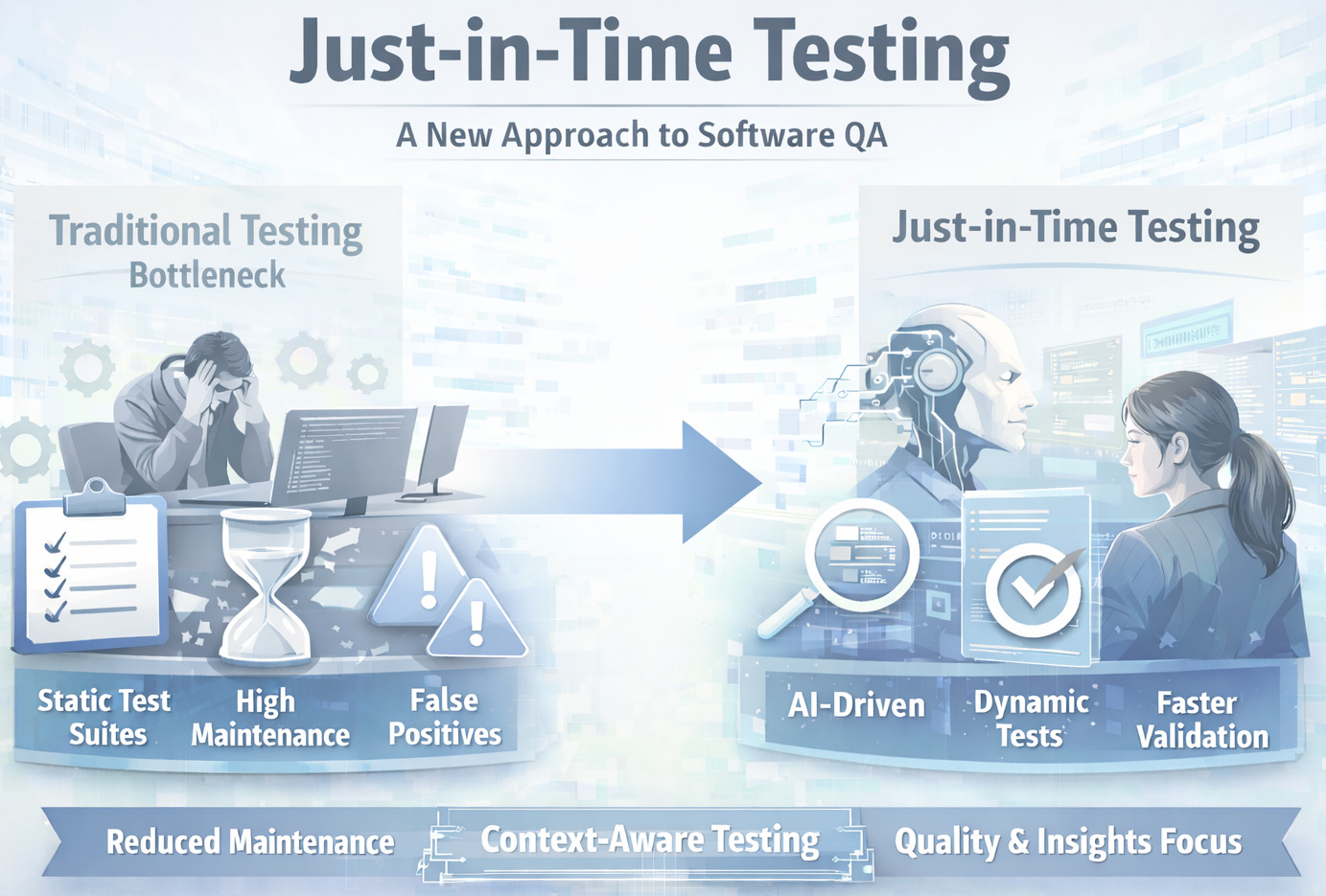

Traditional testing has been the backbone of software quality for decades. Test cases were defined manually, and the industry subsequently built automated regression suites to cover increasingly complex systems.

To be clear, this model hasn’t suddenly “stopped working”. It is still valuable in many situations. But something fundamental has changed: with the rise of AI-driven and agentic development, code is being created, modified, and deployed at unprecedented speed. What was once a structured, incremental process is now fast, fluid, and constantly evolving. And for the first time, development is no longer the bottleneck – testing is.

This brings us to an interesting approach to testing – Just-in-Time tests.


Your Test Suite Is Becoming the Bottleneck

Across modern engineering teams, a new frustration is emerging: keeping test suites up to date is now harder than writing the code itself. It’s a striking reversal of what testing was meant to achieve.

Test suites were built to accelerate development by providing confidence. Today, they often do the opposite. Every major change can trigger a cascade of failing tests – whether the code is actually broken or not. Engineers are increasingly stuck in a loop of fixing tests instead of building new features.

At the heart of the problem is the rise of false positives. Tests fail not because of real defects, but due to UI tweaks, workflow changes, or shifting expectations. Over time, this erodes trust. Engineers begin to question whether a failure signals a real issue – or just another outdated test. The consequences follow quickly:

- Teams start ignoring test failures

- Test suites grow larger but less reliable

- Maintenance costs spiral out of control

At scale, this becomes unsustainable.

There’s also a deeper limitation. Traditional regression testing is inherently backward-looking – it verifies what worked in the past. While that remains important, it doesn’t answer the most critical question in today’s fast-moving environments:

Did this particular change cause the problem?

That’s not a question regression suites are designed to answer. They confirm that past behavior still holds, not whether the current change is correct. This disconnect becomes starker as development races ahead. As the test suite grows, maintenance becomes cumbersome and the feedback loop gets slower. Over time, testing becomes a bottleneck rather than a safety net.

The Shift to Just-in-Time Testing

To tackle these challenges, a new practice is taking shape, one that rethinks where tests live and how long they last. Instead of maintaining a static suite of test cases, Just-in-Time (JiT) testing generates tests dynamically for each code change.

This generally involves examining the code change, inferring its intent, and targeting likely failure points. AI systems can model multiple scenarios, generate targeted tests, and check whether the change produces the desired behavior. The approach has three main benefits:

- First, it eliminates the need for ongoing test maintenance. Since tests are not stored or reused, they do not become outdated. There is no need to update assertions or refactor test logic when the system evolves.

- Second, it reduces noise. Because tests are context-aware, they are less likely to flag expected changes as failures. This improves the signal-to-noise ratio, making test results more actionable.

- Third, it aligns testing with development speed. Tests are generated as quickly as code changes occur, ensuring that validation keeps pace with creation.

What Are Catching JiT Tests?

At the heart of this new model is the concept of catching Just-in-Time (JiT) tests – a sharp departure from how teams have traditionally approached testing.

Unlike conventional test cases that are written once and maintained over time, catching JiT tests are created on demand, specifically for a single code change. Their purpose is simple: to determine whether that particular change introduces a bug.

This marks a fundamental shift in thinking. Traditional tests are designed to “harden” a system by continuously validating existing functionality. Catching JiT tests, on the other hand, are designed to “catch” issues in the moment. They don’t aim to live forever or become part of a growing regression suite.

As described by Meta, these tests are often generated by simulating potential failures – introducing controlled variations or “mutations” in the code – and then creating tests that would detect those issues if they existed. The result is a set of highly targeted tests that focus only on the risk introduced by the latest change. The workflow runs roughly as follows:

- The developer submits a code change.

- The system infers the intent of the change.

- It creates “fake bugs” (mutants) to simulate risks.

- AI generates tests to catch those bugs.

- These tests are then run on the real code.

- Only meaningful failures are shown to engineers.
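The steps above can be sketched in a few lines of Python. This is only a toy illustration under stated assumptions, not Meta’s implementation: the discount function, the two hand-written mutants, and the candidate tests are all hypothetical stand-ins for what an AI system would infer and generate.

```python
from typing import Callable, List, Tuple

Fn = Callable[[float, float], float]
Test = Tuple[str, Tuple[float, float], float]  # (name, inputs, expected result)

# Hypothetical code change under review: clamp the discount to 0..100 percent.
def apply_discount_new(price: float, percent: float) -> float:
    percent = max(0.0, min(100.0, percent))
    return price * (1 - percent / 100)

# Hand-written "fake bugs" (mutants) standing in for generated ones.
def mutant_no_upper_clamp(price: float, percent: float) -> float:
    percent = max(0.0, percent)  # upper bound dropped
    return price * (1 - percent / 100)

def mutant_wrong_sign(price: float, percent: float) -> float:
    percent = max(0.0, min(100.0, percent))
    return price * (1 + percent / 100)  # discount added instead of subtracted

# Candidate tests; the expected values encode the assumed intent of the change.
CANDIDATES: List[Test] = [
    ("half_off", (200.0, 50.0), 100.0),
    ("over_100_clamped", (200.0, 150.0), 0.0),
    ("zero_percent", (200.0, 0.0), 200.0),
]

def passes(fn: Fn, test: Test) -> bool:
    _, args, expected = test
    return abs(fn(*args) - expected) < 1e-9

def select_catching_tests(mutants: List[Fn], candidates: List[Test]) -> List[Test]:
    """Keep only tests that fail on at least one mutant, i.e. catch a fake bug."""
    return [t for t in candidates if any(not passes(m, t) for m in mutants)]

def run_on_change(fn: Fn, tests: List[Test]) -> List[str]:
    """Run the selected tests on the real change and return the failing names."""
    return [t[0] for t in tests if not passes(fn, t)]

mutants = [mutant_no_upper_clamp, mutant_wrong_sign]
catching = select_catching_tests(mutants, CANDIDATES)
failures = run_on_change(apply_discount_new, catching)
print([t[0] for t in catching], failures)
```

In this sketch, `zero_percent` is discarded because it cannot tell any mutant apart from the real code, so it adds no signal for this change. If the submitted change had behaved like `mutant_no_upper_clamp`, the `over_100_clamped` test would have been the one surfaced to the engineer.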

However, this approach also raises new challenges. Since these tests are generated dynamically, ensuring their accuracy and filtering out noise becomes critical. Without that, you risk replacing one form of test fatigue with another!

Still, catching JiT tests highlight where software testing is heading: away from static, long-lived test suites, and toward dynamic, change-aware validation that keeps pace with modern development.

A Word of Caution

One of the biggest concerns with this type of testing is trust. Automatically generated tests can produce noise, especially if they are not well-calibrated. False positives – already a problem in traditional testing – don’t disappear entirely. If anything, poorly tuned JiT systems could overwhelm teams with low-quality signals, leading to the same fatigue they are trying to escape.
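One way to picture this kind of calibration is a small triage step that decides whether a generated test’s failure deserves an engineer’s attention. The sketch below is an assumption for illustration, not a description of any real JiT system: it reruns each test a few times against the pre-change code (`parent`) and the changed code (`change`), and reports only failures that are stable and that appear with the change.

```python
from typing import Callable, List

# A generated test takes the implementation under test and returns True on pass.
GeneratedTest = Callable[[Callable[[int], int]], bool]

def consistent(outcomes: List[bool]) -> bool:
    """True when every run produced the same outcome."""
    return len(set(outcomes)) == 1

def triage(test: GeneratedTest,
           parent: Callable[[int], int],
           change: Callable[[int], int],
           runs: int = 3) -> str:
    """Classify a generated test as 'flaky', 'pre-existing', 'report' or 'pass'."""
    parent_results = [test(parent) for _ in range(runs)]
    change_results = [test(change) for _ in range(runs)]
    if not consistent(parent_results) or not consistent(change_results):
        return "flaky"          # unstable outcome: too noisy to show
    if not parent_results[0]:
        return "pre-existing"   # already fails before the change
    return "pass" if change_results[0] else "report"

# Hypothetical implementations before and after a code change.
def parent_impl(x: int) -> int:
    return x * 2

def changed_impl(x: int) -> int:
    return x * 3

print(triage(lambda fn: fn(2) == 4, parent_impl, changed_impl))  # report
print(triage(lambda fn: fn(0) == 0, parent_impl, changed_impl))  # pass
print(triage(lambda fn: fn(1) == 5, parent_impl, changed_impl))  # pre-existing
```

Only the `report` bucket would reach engineers. Whether such a failure is a real bug or an intended behavior change still needs a judgment call, which is where intent inference or human review comes in.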

There’s also the question of coverage. Disposable tests are excellent at catching issues in specific changes, but they don’t guarantee long-term system stability. Core workflows, compliance requirements, and business-critical paths still need persistent validation. Relying entirely on ephemeral tests could leave gaps if not carefully managed.

Another challenge is visibility. With traditional test suites, teams have a clear, documented set of checks that define system behavior. JiT testing, by contrast, is more dynamic and less transparent. Without proper tooling and reporting, it can become harder to answer simple questions like: What exactly is being tested right now?

What This Means for Testers

This change can be exciting, and at the same time, quite disruptive for testers.

On one level, it takes aim at many of the core activities that have defined the role for decades. Writing detailed test cases, building large regression suites, and maintaining automation frameworks might become less central in a JiT-driven world. On the flip side, it gives testers new opportunities to contribute more than before:

- Define quality benchmarks and risk areas.

- Ensure AI-generated tests reflect real-world behavior.

- Develop deep system understanding.

- Evaluate AI outputs critically.

- Transition from gatekeepers to quality strategists.

This shift also places greater emphasis on understanding risk. Since JiT testing is centered around validating specific changes, testers will need to think in terms of impact analysis – what could break, where, and why. Domain knowledge, product understanding, and the ability to anticipate edge cases will become more valuable than maintaining large test libraries.

For organizations considering this shift, a hybrid approach is likely the safest path forward. JiT testing can complement existing strategies by providing fast, targeted feedback, while stable regression tests continue to guard critical functionality.

The takeaway for testers is clear: this is less about replacement and more about evolution.

The tools and techniques may change, but the core mission remains the same – ensuring quality in systems that are becoming faster, more complex, and less predictable.

