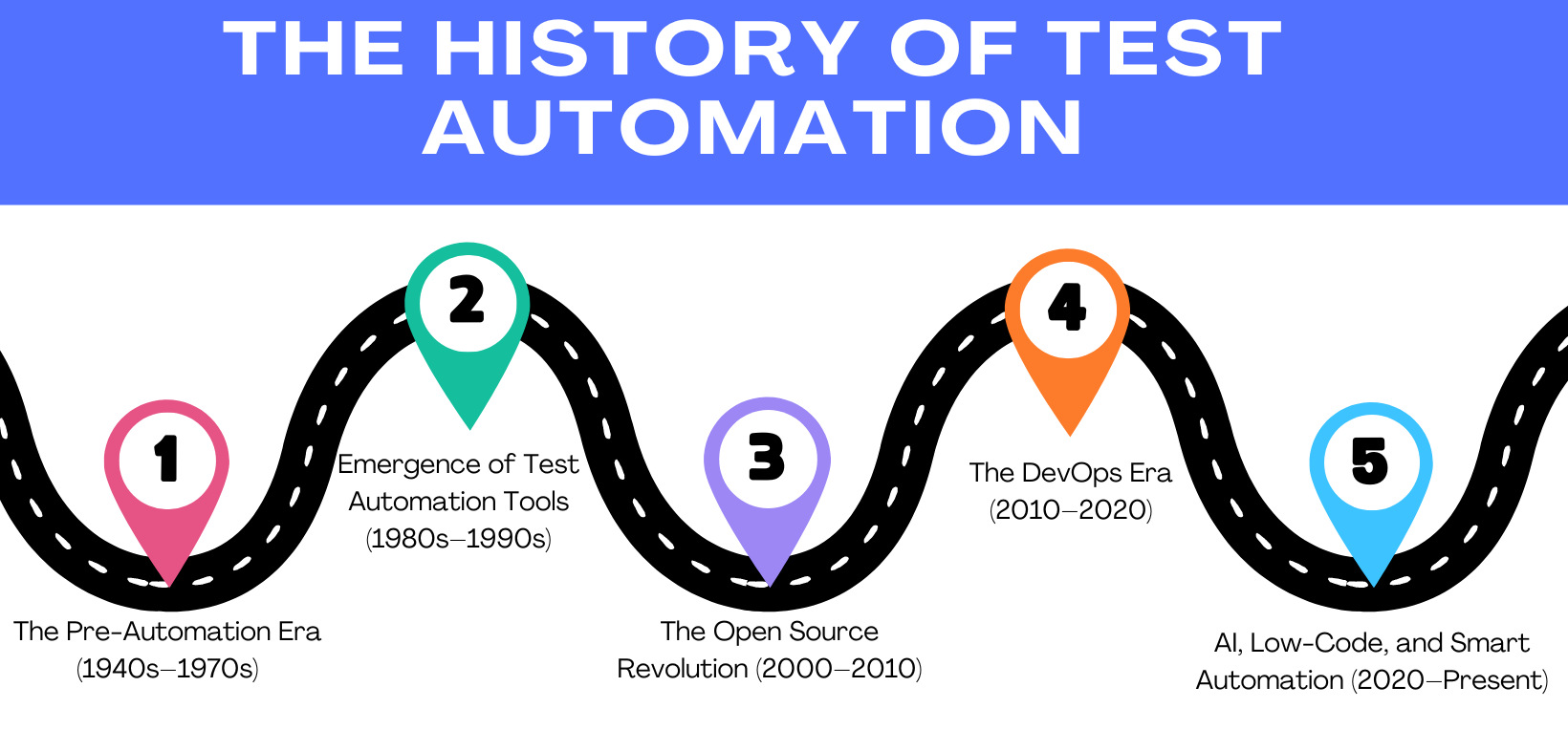

The History of Test Automation

Software testing dates back to the 1940s and 1950s, when programmers used ad hoc methods to check their code for errors by hand. The first dedicated software testing team is commonly said to have been formed in the late 1950s at IBM, led by computer scientist Gerald M. Weinberg. This team was responsible for testing the operating system for the IBM 704 computer, which was one of the first commercial mainframe computers.

The concept of test automation can be traced back to the early days of computing, but the first practical implementation of test automation occurred in the 1970s. One of the earliest examples of test automation is the Automated Test Engineer (ATE) system developed by IBM in the early 1970s. The ATE system was designed to automate the testing of mainframe software applications and was considered a significant breakthrough.

Let’s dive into the various eras of test automation in the following sections.

The Pre-Automation Era (1940s-1970s)

In the very early days of computing during the 1940s and 1950s, software development and testing were tightly tied to the hardware. Programs were written on punch cards or in machine language, and the execution would happen on big, expensive computing machines like the ENIAC or UNIVAC. Developers, who were also the testers, had to check outputs by studying printed logs or inspecting memory dumps.

Testing was primitive and completely manual. Debugging meant printing logs, checking memory dumps, and verifying each line of output by hand. Since computing power was scarce and expensive, every bug was costly in terms of time and access to the machine. There were no test methodologies, no documentation, and absolutely no automation. Testing was reactive, trying to fix errors after they were discovered.

Edsger W. Dijkstra’s remark that “Program testing can be used to show the presence of bugs, but never to show their absence” captured the mindset of that era: testing was more about debugging than about quality assurance.

By the 1970s, with mainframe computing taking off and software systems becoming much more complex, organizations started to formalize testing roles. The origins of test automation date back to this period, with the first seeds typically consisting of simple batch or shell scripts that automated repetitive test tasks. While primitive, these scripts were the building blocks for automating parts of the software testing lifecycle.

During this period, the same person who wrote the software also tested it. As systems grew in size and complexity, this dual role became unsustainable, and dedicated manual testers emerged to support overwhelmed developers. But even these testers worked in isolation, manually stepping through procedures and verifying outcomes.

Lesson Learned: Back in the day, testing meant punching cards, praying to the mainframe gods, and hoping your log didn’t betray you.

- Testing was manual, tedious, and reactive; there was no automation, no documentation.

- Manual testing involved testers operating in silos without collaboration with development teams.

- Developers doubled as testers, often checking logs and memory dumps by hand.

- The idea of automation was born from necessity, but it was just starting, mostly via simple scripts and batch jobs.

Emergence of Test Automation Tools (1980s-1990s)

In the 1980s, as GUI-based applications started to gain popularity, manual testing became painful, slow, and inefficient. This gave rise to the first test automation tools that were designed to simulate user activity.

1980s: The First Generation Tools

- Introduction of Record-and-Playback Tools: These early tools allowed testers to record interactions with applications and replay them to validate consistent behavior across versions.

- The Emergence of Unit Testing Tools: The creation of SUnit, a unit testing framework for Smalltalk, laid the groundwork for modern frameworks like JUnit in later years.

However, these tools had multiple challenges. They were especially brittle; even a tiny change to the UI (for instance, a label on a button or a layout on a screen) could break the test scripts. The weak identification of elements, in addition to reliance on screen coordinates, made maintenance a nightmare.
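The brittleness described above can be illustrated with a toy model. The following is a minimal sketch, not a reconstruction of any real 1980s tool: the `Widget` class, the `name` identifier, and both screen layouts are hypothetical. It shows how a click replayed by screen coordinates silently hits the wrong control after a layout change, while a lookup by a stable identifier still finds the intended one.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Widget:
    name: str    # hypothetical stable identifier
    label: str   # text shown to the user
    x: int
    y: int
    width: int
    height: int

    def contains(self, px: int, py: int) -> bool:
        """Return True if the point (px, py) falls inside this widget."""
        return (self.x <= px < self.x + self.width
                and self.y <= py < self.y + self.height)


def widget_at(screen: list[Widget], px: int, py: int) -> Optional[Widget]:
    """Coordinate-based lookup: whatever sits under the recorded click."""
    for widget in screen:
        if widget.contains(px, py):
            return widget
    return None


def widget_named(screen: list[Widget], name: str) -> Optional[Widget]:
    """Identifier-based lookup: independent of position on screen."""
    for widget in screen:
        if widget.name == name:
            return widget
    return None


# Version 1 of the screen, on which the test was recorded.
v1 = [Widget("save_btn", "Save", 10, 10, 80, 30),
      Widget("delete_btn", "Delete", 100, 10, 80, 30)]

# Version 2: the two buttons swapped places.
v2 = [Widget("delete_btn", "Delete", 10, 10, 80, 30),
      Widget("save_btn", "Save", 100, 10, 80, 30)]

recorded_click = (20, 20)  # recorded over the Save button in v1

print(widget_at(v1, *recorded_click).name)   # save_btn
print(widget_at(v2, *recorded_click).name)   # delete_btn (wrong control)
print(widget_named(v2, "save_btn").name)     # save_btn
```

The coordinate-based replay does not even fail loudly in this sketch; it clicks a different button, which is arguably worse than a broken script.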

Lesson Learned: Testers in the ’80s invented ‘record-and-pray’ before ‘record-and-play’ was cool.

- GUI-based apps made manual testing too slow and painful.

- Record-and-playback tools emerged, but were fragile—one button label change could wreck the test.

- Early frameworks like SUnit laid the groundwork for unit testing.

1990s: The Rise of Commercial Tools

The 1990s saw a major leap with the introduction of commercial, enterprise-grade automation tools. Businesses started investing in proprietary tools to accommodate the growing demand for software testing. Tools offered more advanced scripting engines, improved recognition of UI elements, and support for test management platforms.

- WinRunner by Mercury Interactive (later acquired by HP)

- Rational Robot by Rational Software (later acquired by IBM)

- SilkTest by Segue Software

These tools focused on Windows-based desktop applications, which were prevalent in the software market back then. They added more powerful scripting functionality with languages such as TSL (Test Script Language) as well as improved reporting, test case management, and integration with defect tracker systems. Testers were no longer just clicking through applications; they were now coding. This shift brought new responsibilities and challenges as testers had to acquire scripting skills.

These tools were less fragile than their 1980s predecessors, but they were still too expensive for smaller organizations. Their steep learning curves and dependence on proprietary scripting languages also meant that only dedicated QA engineers could use them effectively.

- Testers transitioned into coding, increasing test coverage and reliability but adding to complexity.

- Rise of commercial tools like WinRunner, SilkTest, and Rational Robot.

- Improved scripting with languages like TSL.

- Better UI recognition and integration with defect trackers.

- Still expensive, complex, and inaccessible to smaller teams.

The Open Source Revolution (2000-2010)

The early 2000s were a game-changer for software testing, with two primary catalysts: the advent of Agile processes and a new influx of open-source testing tools. Conventional, slow-moving testing approaches could not keep up with Agile's demand for fast releases, continuous feedback, and tight collaboration between developers and testers.

The Impact of Agile

Agile brought shorter development cycles with continuous integration (CI) and iterative releases. This shift required testing methods that were faster, more adaptive, and developer-centric. Legacy automation tools, which were not designed for Agile workflows, were slow, expensive, and hard to maintain. The industry needed capable yet lightweight tools that could be deeply embedded in the software development process.

Open-source Tools

Agile was complemented by open-source solutions. These tools were free, community-supported, and highly customizable, which made them a good fit for Agile teams.

- JUnit (Java): Made unit testing a standard practice and popularized Test-Driven Development (TDD), where tests are written before code.

- Selenium (2004): ThoughtWorks built Selenium, which is widely used to automate browser interaction through real user workflows. It became the de facto tool for web UI testing due to its flexibility and multi-language support.

- TestNG: Inspired by JUnit, TestNG offered powerful features such as parallel test execution, flexible test configuration, and test grouping, which suited large and complex test suites.
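To make the test-first idea mentioned above concrete, here is a minimal sketch using Python's built-in `unittest` module, which follows the same xUnit style as JUnit. The `leap_year` function and the `run_tests` helper are hypothetical examples chosen for illustration. In a TDD cycle, the test class would be written first and would fail until the function is implemented.

```python
import unittest


def leap_year(year: int) -> bool:
    """Written second: just enough logic to make the tests below pass."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


class LeapYearTest(unittest.TestCase):
    """Written first: these tests define the expected behavior."""

    def test_year_divisible_by_four_is_leap(self):
        self.assertTrue(leap_year(2024))

    def test_century_is_not_leap(self):
        self.assertFalse(leap_year(1900))

    def test_every_fourth_century_is_leap(self):
        self.assertTrue(leap_year(2000))


def run_tests() -> unittest.TestResult:
    """Run the test case and return the result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(LeapYearTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    run_tests()
```

Each new requirement starts as a failing test, then the smallest code change that makes it pass, followed by refactoring with the tests as a safety net.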

Despite Agile adoption, many teams struggled with fully embracing its principles. They maintained traditional waterfall mindsets beneath the surface, delaying test automation until late in the cycle or isolating QA from the rest of the team.

Lesson Learned: Agile said ‘move fast’, old tools said ‘not today’—so open-source came to the rescue.

- Agile and CI/CD demanded faster, more collaborative testing.

- Testing became a shared responsibility and shifted left.

- Rise of JUnit, TestNG, and the legendary Selenium for web UI testing.

- Open-source meant freedom, community support, and customization.

- Testing became developer-friendly, with test-driven development (TDD) gaining traction.

- Despite Agile slogans, many teams clung to waterfall-style testing processes.

The DevOps Era (2010-2020)

The 2010s introduced DevOps, a cultural and technical movement that brings development and operations together to deliver software faster. DevOps proposed automating every stage of the software lifecycle to ship quality software faster and more frequently, making test automation a cornerstone of the movement.

Test Automation as a DevOps Essential

With the rise of Continuous Integration and Continuous Delivery (CI/CD) pipelines, automated testing went from being an optional QA check to a requisite task in every build and every release. This shift ensured that bugs were caught earlier, deployments became safer, and feedback loops tightened.

- CI/CD Integration: Tools like Jenkins, CircleCI, and Travis CI enabled the running of automated tests on every code change, build, or deployment. This resulted in faster, more trustworthy, and deeply integrated testing in the development pipeline.

- Mobile Testing Goes Mainstream: With the global dominance of mobile apps, tools such as Appium and Espresso made automation possible for Android and iOS testing, allowing device-level verification on a massive scale.

Layered Testing Strategies

- Unit Tests: Written by developers to check core logic at the function or class level. They are fast, simple, and highly reliable.

- Integration Tests: Verify that modules, services, and external dependencies such as databases or APIs work correctly together. They run slower than unit tests and are more sensitive to the environment.

- UI Tests: Simulate real user interactions with browser or mobile frontends, often across multiple browsers or in headless mode (without visible rendering).

This era also brought new challenges:

- Flaky Tests: Tests, especially in UI automation, became flaky because of asynchronous elements, timing issues, and differing environments.

- Test Maintenance Overhead: Applications changed so rapidly that tests failed frequently, and test scripts needed to be modified continuously.
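One common way to reduce the timing-related flakiness mentioned above is to poll for a condition instead of sleeping for a fixed duration. The following is a minimal sketch of that idea, assuming nothing about any particular framework: `wait_until` and `FakePage` are hypothetical names, and `FakePage` merely simulates an element that appears after a delay.

```python
import time
from typing import Callable


def wait_until(condition: Callable[[], bool],
               timeout: float = 5.0,
               interval: float = 0.1) -> bool:
    """Poll `condition` until it returns True or `timeout` seconds elapse.

    Returns True if the condition was met in time, False otherwise.
    """
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)


class FakePage:
    """Hypothetical stand-in for a page whose banner renders asynchronously."""

    def __init__(self, ready_after: float) -> None:
        self._ready_at = time.monotonic() + ready_after

    def banner_visible(self) -> bool:
        return time.monotonic() >= self._ready_at


page = FakePage(ready_after=0.3)

# A fixed sleep of 0.1s would check too early; polling adapts to the delay.
appeared = wait_until(page.banner_visible, timeout=2.0, interval=0.05)
print(appeared)
```

The test proceeds as soon as the element is ready and fails only when the timeout is exceeded, so it is both faster on quick machines and more tolerant of slow ones.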

Lesson Learned: If your test suite didn’t run in CI/CD, did it even exist?

- DevOps promoted automated testing across every stage of the lifecycle.

- Testers transitioned into quality advocates, shaping product strategy and delivery culture.

- CI/CD tools like Jenkins and CircleCI allowed auto-testing with every code push.

- Emergence of mobile testing tools like Appium and Espresso.

- The testing pyramid strategy was emphasized: unit tests (fast, reliable), integration tests (somewhat flaky), UI tests (very flaky and high-maintenance).

- Test maintenance became a major challenge due to rapid product evolution.

AI, Low-Code, and Smart Automation (2020-Present)

The 2020s marked the dawn of a new age for test automation, powered by the surge in artificial intelligence, the emergence of low-code/no-code platforms, and an increasing need for fast, smart, and widely accessible testing. This era has been characterized by innovations aimed at lowering maintenance overhead, giving non-technical users access to advanced functionality, and making testing a core part of the entire software lifecycle.

AI-Powered Testing Becomes Mainstream

Traditional scripting-based automation tools began to break down as applications became more complex and release cycles accelerated. To catch up, the industry adopted AI-based automation tools that embraced change, reducing brittleness and enhancing accuracy.

- Natural Language Test Creation: Perhaps one of the biggest changes was writing test scripts in plain English. Tools like testRigor let not only testers but any stakeholder in the project create or update test scripts. testRigor uses Natural Language Processing to convert the plain-English script into steps it can execute.

- Self-Healing Tests: Tools like testRigor automatically update test scripts when a UI element changes, drastically reducing the cost of maintenance and increasing the reliability of tests.

- Generative AI: With Generative AI, testRigor can generate test cases or unique test data from a description of the tests. Testers just need to provide the description, and the tool generates the possible test cases. Here is an All-Inclusive Guide to Test Case Creation in testRigor.

- AI Vision: Using its AI Vision, testRigor can not only detect but also understand and analyze elements visually on the screen. For images, this means testing far more than the presence of an image. testRigor can identify what the image is. For example, we can check if the image is a red rose or white shoes, etc. It can analyze colors, size, placement, and alt text. It can also identify fuzzy, distorted, or missing images. Read Vision AI and how testRigor uses it.

- Stable Element Locators: Unlike traditional tools that use XPath or CSS selectors, testRigor identifies elements based on visible text on the screen. This AI-powered approach minimizes maintenance efforts and allows teams to focus on creating meaningful use cases instead of fixing flaky tests. Example commands include:

    click "cart"
    enter "Peter" into "Section" below "Type" and on the right of "Description"

- Comprehensive Testing: testRigor supports different types of testing, like native desktop, web, mobile, API, visual, exploratory, AI features, mainframe, and accessibility testing.

- Reduced Test Maintenance: By focusing on the end-user perspective and avoiding dependency on traditional locators, testRigor significantly reduces test maintenance time, especially for frequently changing products. It supports up to a 99.5% reduction in maintenance effort.

- Seamless Integrations: testRigor integrates effortlessly with popular CI/CD tools like Jenkins and CircleCI, test management platforms like TestRail, defect tracking systems like Jira, and communication tools such as Slack and Microsoft Teams, making it a natural fit for existing workflows.
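The self-healing and visible-text ideas in the list above can be sketched in a few lines. This is a toy illustration only and does not represent how testRigor or any other product works internally: the page model (a list of dictionaries), the element ids, and the `locate` helper are all hypothetical. The locator tries the recorded id first and falls back to the visible text when the id no longer matches.

```python
from typing import Dict, List, Optional, Tuple

Element = Dict[str, str]


def find_by_id(page: List[Element], element_id: str) -> Optional[Element]:
    """Traditional lookup by an internal attribute that may change."""
    return next((e for e in page if e.get("id") == element_id), None)


def find_by_text(page: List[Element], text: str) -> Optional[Element]:
    """Lookup by what the user sees, ignoring case and outer whitespace."""
    wanted = text.strip().lower()
    return next(
        (e for e in page if e.get("text", "").strip().lower() == wanted),
        None,
    )


def locate(page: List[Element], element_id: str,
           visible_text: str) -> Tuple[Optional[Element], str]:
    """Try the recorded id, then fall back to visible text.

    Returns the element (or None) and the strategy that found it.
    """
    found = find_by_id(page, element_id)
    if found is not None:
        return found, "id"
    found = find_by_text(page, visible_text)
    if found is not None:
        return found, "text"
    return None, "not found"


old_page = [{"id": "btn-cart", "text": "Cart"},
            {"id": "btn-help", "text": "Help"}]

# After a release, the cart button's id changed but its label did not.
new_page = [{"id": "nav-basket-7", "text": "Cart"},
            {"id": "btn-help", "text": "Help"}]

print(locate(old_page, "btn-cart", "cart")[1])  # id
print(locate(new_page, "btn-cart", "cart")[1])  # text
```

A real tool would weigh many more signals, but the sketch shows why anchoring on user-visible text tends to survive refactoring of internal attributes.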

Will AI Replace Testers?

As AI takes over more tasks, like test generation, maintenance, and even analysis, one concern is on every tester’s mind: Will AI replace testers? The answer lies not in replacement but in evolution. Testers are shifting roles, from manual execution to strategic oversight, training AI models, interpreting results, and ensuring ethical test coverage. AI augments human capability; it does not erase it.

Lesson Learned: We went from scripting like wizards to speaking plain English like Muggles—and the tests still run!

- Explosion of AI-powered tools and low/no-code platforms.

- Natural Language Testing lets users write test cases in English (hello, testRigor!).

- Self-healing tests adapt to UI changes without human interference.

- AI is reshaping testing roles but not replacing testers; humans remain essential for context, strategy, and oversight.

The Future of Test Automation

Looking ahead, test automation will likely be smarter, more autonomous, and more integrated than ever. We are headed toward a future in which AI doesn’t just support testing; it drives it. Tools will generate, execute, and maintain tests automatically based on real-user behaviors, application changes, and production data.

Testers will become AI trainers and quality strategists, training ML models to find regressions, prioritize which test scenarios are executed, and continuously monitor quality. Test creation through natural language and voice will be the new normal, paving the way for non-engineers to create tests. AI will take over mundane tasks, enabling testers to focus on optimizing workflows, shaping product quality, and ensuring user satisfaction. The rise of no-code tools means testers will collaborate more with business users, developers, and designers in a shared quality culture.

Automation will be closely integrated into development workflows, providing immediate feedback as code is written. Meanwhile, AI will create synthetic data, simulate complex edge cases, and perform advanced visual, security, and compliance testing in real time.

The QA ecosystem will be more modular, decentralized, and collaborative. Testing won’t just be the responsibility of one team; it’ll be a shared responsibility across developers, designers, product managers, and support teams, thanks to no-code and AI-powered platforms. And we’ll also start to see broader coverage across emerging tech such as AR/VR, voice assistants, IoT, and even blockchain-based applications.

In the future, automation systems will be context-aware, business-intelligent, and continuously self-improving, driven not just by the technical correctness of the software but by real-world needs. To sum up, test automation will be more than an era or a tool; it will be an integral, intelligent partner in building quality software.
