MCPs vs. APIs: Differences


APIs have been the foundation of modern technical infrastructure for decades. They enabled modular architectures, microservices, SaaS ecosystems, and cloud-native platforms. Almost every integration story in software engineering has rightly been an API story.

The advent of Large Language Models (LLMs) and AI agents, however, has fundamentally changed how software systems are consumed and orchestrated. Clients are no longer only deterministic programs coded by developers. We now have agents: more or less autonomous systems that can reason, at least to some extent, plan, seek out capabilities, and decide on the fly how to use tools or services.

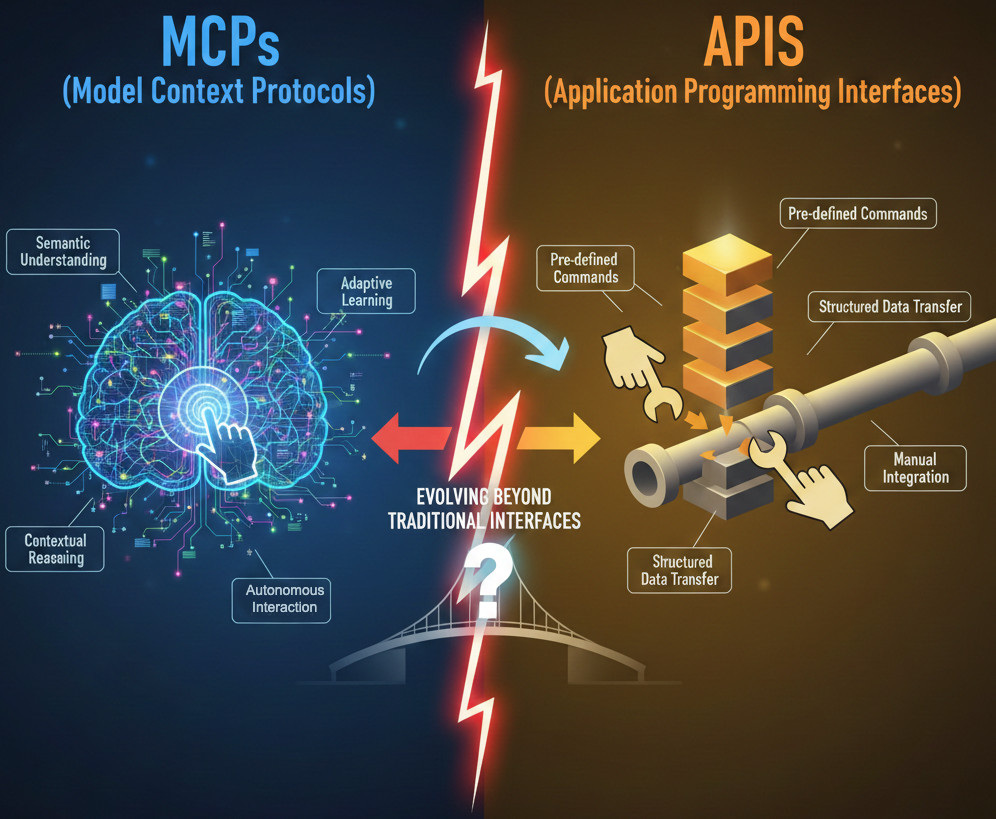

This transition revealed a key missing element: APIs were never built for AI agents. They expect preconfigured endpoints, handwritten client code, static schemas, and manual integration updates. To fill this gap, a new standard was introduced: the Model Context Protocol (MCP).

This progression has led many engineers to ask a seemingly simple question: ‘So, are MCPs just APIs?’ The short answer is no, although the two are closely related.


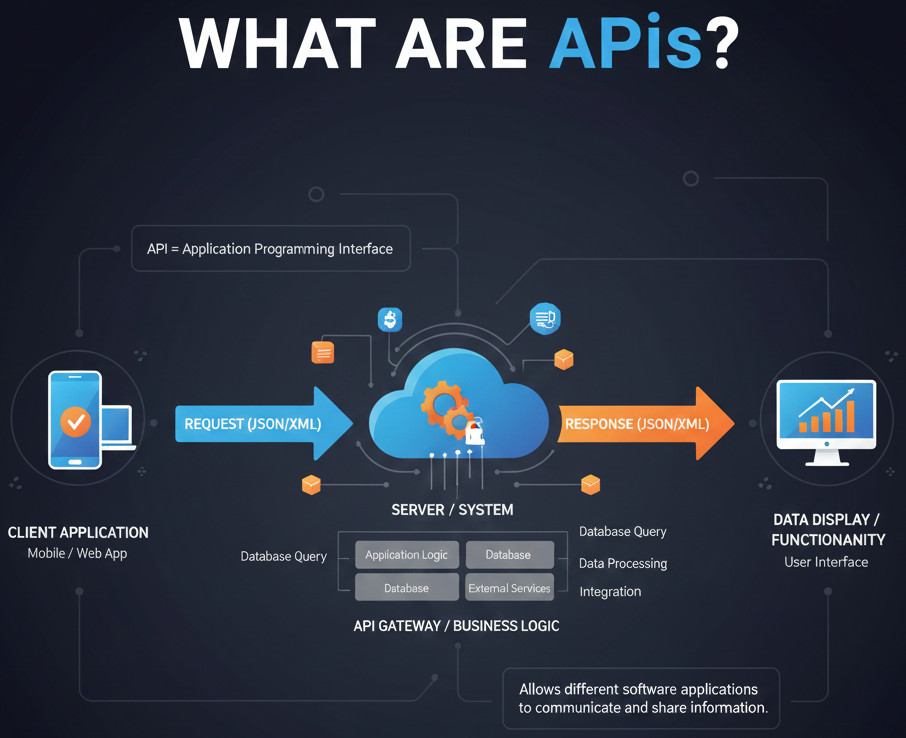

What are APIs? A Foundational Perspective

APIs were created to solve one very specific problem: letting one piece of software communicate with another and work with it predictably. At a fundamental level, APIs are contracts that specify endpoints, parameters, data structures, and authentication mechanisms. This makes data exchange between systems predictable.
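To make the idea of a contract concrete, here is a minimal Python sketch of the client side of a hypothetical `GET /users/{user_id}` endpoint. The endpoint, the `User` shape, the base URL, and the bearer-token scheme are all illustrative assumptions; the point is that every detail is fixed in advance and encoded by a developer.

```python
from dataclasses import dataclass


# Illustrative data structure the (hypothetical) contract promises to return.
@dataclass(frozen=True)
class User:
    id: int
    email: str


def build_get_user_request(base_url: str, user_id: int, token: str) -> dict:
    """Build the request exactly as the contract prescribes: fixed path, typed parameter, bearer auth."""
    if user_id <= 0:
        raise ValueError("user_id must be a positive integer")
    return {
        "method": "GET",
        "url": f"{base_url.rstrip('/')}/users/{user_id}",
        "headers": {"Authorization": f"Bearer {token}"},
    }


def parse_user(payload: dict) -> User:
    """Map the response body onto the agreed data structure; a missing field raises KeyError."""
    return User(id=int(payload["id"]), email=str(payload["email"]))


if __name__ == "__main__":
    request = build_get_user_request("https://api.example.com", 42, "secret-token")
    print(request["method"], request["url"])  # GET https://api.example.com/users/42
    print(parse_user({"id": 42, "email": "ada@example.com"}))
```

Nothing here is discovered at runtime: the path, the parameter type, and the response fields all come from a developer reading the documentation.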

However, APIs rest on a crucial assumption: somewhere along the line, a human developer is ultimately responsible. The developer reads the documentation, understands how the API works, writes the integration, and keeps it up to date when the API changes. This human-in-the-loop approach has worked very well for decades, but it was not designed with autonomous reasoning systems in mind.

Why APIs Still Matter

APIs are especially good at deterministic behavior, performance-critical interactions, and service-to-service communication that scales. They provide transparent versioning, strong validation, and clear contracts leading to reliable and efficient integrations. These traits are why today’s software systems are built on APIs.

From a testing perspective, APIs are highly predictable and can be verified with request-response validation, contract tests, load tests, and security checks. Their structure makes failures easier to debug and automation easier to maintain. APIs aren't broken; they are simply designed for a world in which humans, not autonomous systems, make the decisions.
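A contract test, in its simplest form, checks a response body against the fields and types the contract promises. The sketch below uses no real framework; `USER_CONTRACT` and `contract_violations` are hypothetical names, and in practice teams often rely on JSON Schema or a dedicated contract-testing tool instead.

```python
# Hypothetical contract: field name -> expected Python type.
USER_CONTRACT: dict[str, type] = {"id": int, "email": str}


def contract_violations(payload: dict, contract: dict[str, type]) -> list[str]:
    """Return human-readable ways `payload` breaks `contract`; an empty list means the check passed."""
    problems = []
    for field, expected_type in contract.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected_type):
            actual = type(payload[field]).__name__
            problems.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    return problems


if __name__ == "__main__":
    print(contract_violations({"id": 1, "email": "ada@example.com"}, USER_CONTRACT))  # []
    print(contract_violations({"id": "1"}, USER_CONTRACT))
    # ['id: expected int, got str', 'missing field: email']
```

Because the expected shape is fixed, the same check gives the same verdict on every run, which is exactly what makes API tests deterministic.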

The AI Shift Beyond Human Clients

With AI, software is no longer consumed only by human-written clients, and this fundamentally changes how systems are used. Systems now have to support reasoning agents that discover capabilities and select them dynamically, rather than passively relying on pre-orchestrated integrations.

An AI agent interprets intent flexibly, chooses tools at runtime, and adapts to changing capabilities. Unlike a human-written client, it does not know in advance which endpoints exist; instead, it reasons about which actions are currently available. Traditional APIs, by contrast, require the client to already know which endpoint to call and what each operation means, and they depend on integration logic written in advance by a developer.

AI agents need to discover which tools are available, understand what each tool can do, and determine which one best fits the goal at hand. That is why MCPs exist.

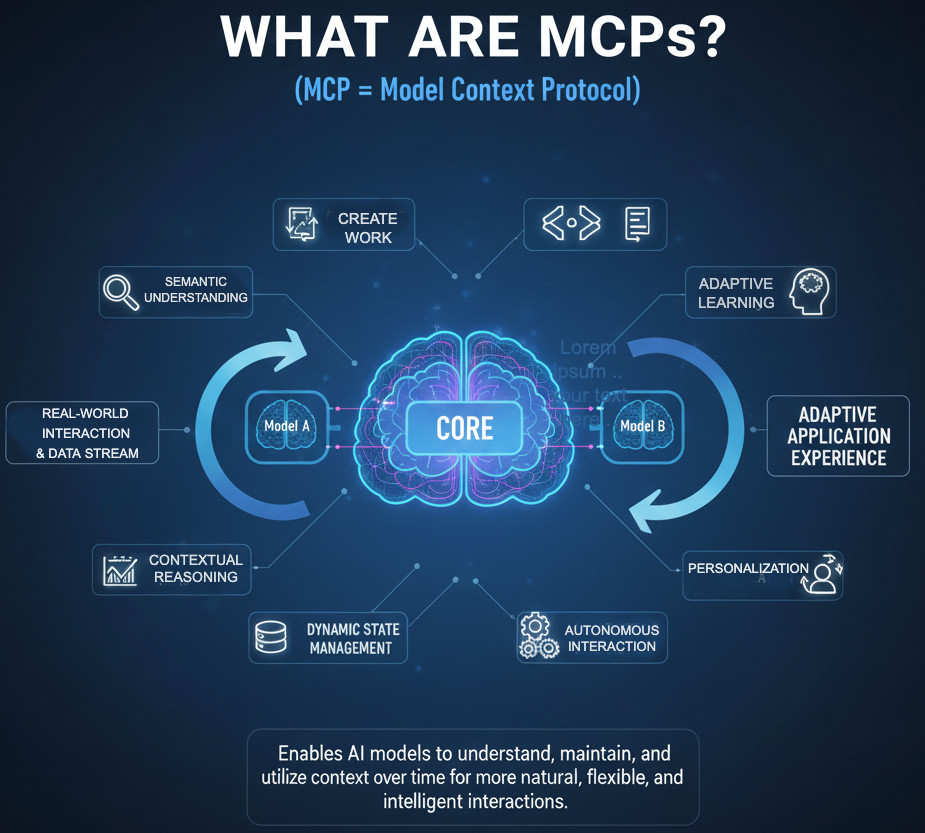

What Are MCPs?

MCPs are interfaces built on the Model Context Protocol (MCP), a standard that allows AI models and agents to connect with external tools, services, and data. Unlike plain function calls, they focus on structured context that AI systems can understand and reason over. This lets agents decide how to act based on what they understand, rather than only executing actions a developer has predefined.

MCPs address capability discovery, semantic descriptions, standard interfaces, and AI-friendly context exchange. Together, these let AI agents learn which tools are available, what they do, and how to use them effectively. In short, MCPs are built for reasoning systems, not for deterministic clients.
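In MCP, a server advertises each tool with a name, a natural-language description, and a JSON Schema (`inputSchema`) for its arguments. The sketch below shows one such definition as a Python dict plus a small sanity check. The `search_orders` tool is hypothetical, and the check covers only a few basics rather than the full specification.

```python
# A hypothetical tool definition in the shape MCP servers return from "tools/list".
SEARCH_ORDERS_TOOL = {
    "name": "search_orders",
    "description": (
        "Search a customer's past orders by status. Read-only; "
        "use this before issuing refunds to confirm an order exists."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "customer_id": {"type": "string", "description": "Internal customer ID"},
            "status": {"type": "string", "enum": ["open", "shipped", "cancelled"]},
        },
        "required": ["customer_id"],
    },
}


def basic_tool_problems(tool: dict) -> list[str]:
    """Minimal sanity check: a name, a description, and an object-typed input schema."""
    problems = []
    if not tool.get("name"):
        problems.append("tool needs a non-empty name")
    # The description is optional in the spec, but without it a model cannot tell what the tool does.
    if not tool.get("description"):
        problems.append("tool has no description, so a model cannot tell what it does")
    if tool.get("inputSchema", {}).get("type") != "object":
        problems.append('inputSchema must be a JSON Schema with "type": "object"')
    return problems


if __name__ == "__main__":
    print(basic_tool_problems(SEARCH_ORDERS_TOOL))  # []
```

The description carries semantics a model can use ("read-only", "use this before issuing refunds"), while the schema tells it how to call the tool.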

Difference Between MCPs and APIs: Comparing the Purpose

Let’s start with purpose, because this is the root of all other differences.

MCPs Built for Reasoning Models

MCPs are designed specifically to connect Large Language Model (LLM) applications with external tools and data. Their primary objective is to help AI agents discover, reason about, and invoke capabilities on demand.

They standardize how context is provided to AI models and describe tools semantically. They also prescribe a consistent way to invoke actions and return results in a form a model can reason about. Finally, they assume that the client is an AI agent rather than a program written by a human, and that this client needs to understand what a tool does and adjust its behavior at runtime. Read: AI Assistants vs AI Agents: How to Test?
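MCP messages use JSON-RPC 2.0, and tool invocation goes through a `tools/call` request whose result carries a list of content items (for example `{"type": "text", ...}`) and an `isError` flag. The sketch below builds such a request and flattens the result's text for a model to read. The transport is omitted, and the `get_weather` tool and its arguments are hypothetical.

```python
import json


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 'tools/call' request in the shape MCP uses."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


def result_text(result: dict) -> str:
    """Join the text items of a tool result; mark errors so the model can react to them."""
    text = "\n".join(
        item["text"] for item in result.get("content", []) if item.get("type") == "text"
    )
    return f"[tool error] {text}" if result.get("isError") else text


if __name__ == "__main__":
    print(make_tool_call(1, "get_weather", {"city": "Berlin"}))
    # A hypothetical result a server might send back:
    print(result_text({"content": [{"type": "text", "text": "12°C, light rain"}], "isError": False}))
```

Because every tool is invoked and answered in the same shape, the agent needs no tool-specific parsing code.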

APIs Built for Software Integration

APIs are ubiquitous, general-purpose interfaces that expose capabilities through a well-defined contract with predictable behavior, while developers assemble the integration logic themselves. They were designed long before AI models became consumers of software services, so they rely on documentation, static schemas, and manual wiring. This doesn't make APIs inferior; they were simply built for a different kind of client.

APIs are human-centric. MCPs are AI-centric.

| MCPs | APIs |
|---|---|
| MCPs are designed for AI agents that reason about tools dynamically. | APIs are designed for human-written software that follows predefined logic. |
| MCPs support capability discovery at runtime. | APIs assume the client already knows which capability to invoke. |
| MCPs describe tools semantically so models understand what they do. | APIs describe endpoints syntactically so developers know how to call them. |
| MCPs treat context as a first-class concept for decision-making. | APIs rely on manually constructed context outside the interface. |
| MCPs allow clients to adapt behavior as capabilities change. | APIs require developers to update code when integrations change. |
| MCPs optimize for understanding and reasoning. | APIs optimize for determinism and performance. |
| MCPs are AI-centric by design. | APIs are human-centric by design. |

Why Dynamic Self-Discovery Matters

Dynamic self-discovery is one of the features that most clearly sets MCPs apart from standard APIs. MCPs let AI agents learn which tools exist and what they do at runtime, instead of requiring that knowledge in advance. This enables more agile decisions in environments where capabilities change over time.

How MCP Self-Discovery Works

An MCP client can query an MCP server at runtime to learn which capabilities it supports, which tools are available, what inputs those tools expect, and what outputs they produce. None of this is hardcoded in advance, which fundamentally changes how integrations work for AI agents.
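The discovery step can be sketched as a loop over `tools/list` requests. MCP supports paginated listings through a `nextCursor` field, so the loop keeps asking until no cursor comes back. `send` is a hypothetical stand-in for whatever transport (stdio, HTTP) the client actually uses, and the fake two-page server is purely illustrative.

```python
import itertools
from typing import Callable, Optional

_request_ids = itertools.count(1)


def discover_tools(send: Callable[[dict], dict]) -> dict[str, dict]:
    """Ask an MCP server for all of its tools at runtime, following pagination cursors."""
    tools: dict[str, dict] = {}
    cursor: Optional[str] = None
    while True:
        request = {"jsonrpc": "2.0", "id": next(_request_ids), "method": "tools/list"}
        if cursor:
            request["params"] = {"cursor": cursor}
        result = send(request)  # assumed to return the JSON-RPC "result" object
        for tool in result.get("tools", []):
            tools[tool["name"]] = tool
        cursor = result.get("nextCursor")
        if not cursor:
            return tools


if __name__ == "__main__":
    # Hypothetical two-page server response, keyed by cursor.
    pages = {
        None: {"tools": [{"name": "search_orders", "description": "Find orders"}], "nextCursor": "p2"},
        "p2": {"tools": [{"name": "refund_order", "description": "Refund an order"}]},
    }

    def fake_send(request: dict) -> dict:
        return pages[request.get("params", {}).get("cursor")]

    print(sorted(discover_tools(fake_send)))  # ['refund_order', 'search_orders']
```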

This makes it possible to give an agent new capabilities without redeploying it: tools added to a server become visible the next time the agent queries that server. Agents can adapt to evolving systems and pick their tools based on the task at hand rather than fixed logic. This enables highly flexible tool use and, ultimately, autonomous behavior.
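The "no redeploy" property can be illustrated with a toy in-process server: the agent's code stays the same, yet a tool registered after startup shows up on the next listing. `ToyToolServer` and `agent_tool_names` are hypothetical names, not part of any MCP SDK; real MCP servers can additionally send a `notifications/tools/list_changed` message so connected clients know to list again.

```python
class ToyToolServer:
    """Hypothetical in-memory stand-in for an MCP server (not a real SDK class)."""

    def __init__(self) -> None:
        self._tools: dict[str, dict] = {}

    def register(self, name: str, description: str) -> None:
        # Adding a tool changes only the server; no client code is touched.
        self._tools[name] = {
            "name": name,
            "description": description,
            "inputSchema": {"type": "object", "properties": {}},
        }

    def handle(self, request: dict) -> dict:
        if request["method"] == "tools/list":
            return {"tools": list(self._tools.values())}
        raise ValueError(f"unsupported method: {request['method']}")


def agent_tool_names(server: ToyToolServer) -> list[str]:
    """The agent's discovery logic: identical before and after the server changes."""
    result = server.handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
    return [tool["name"] for tool in result["tools"]]


if __name__ == "__main__":
    server = ToyToolServer()
    server.register("search_orders", "Find a customer's orders")
    print(agent_tool_names(server))  # ['search_orders']
    server.register("export_invoice", "Export an invoice as PDF")  # added at runtime
    print(agent_tool_names(server))  # ['search_orders', 'export_invoice']
```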

Why APIs Fall Short Here

Most existing REST APIs offer no real runtime discovery. Specifications such as OpenAPI do exist, but they are static documents, mostly written by humans, and propagating changes into client integrations is still a manual step. As a result, the client has to be adapted each time the API changes.

When an API is modified, existing clients often break, and developers have to step in and upgrade them. This human-driven workflow works for traditional software development, but not for AI agents that are expected to run continuously on their own.
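The failure mode is easy to reproduce. In this hypothetical example, a client written against version 1 of an API reads a `full_name` field; when version 2 renames it to `name`, the hardcoded client fails until a developer updates it. The field names and payloads are invented for illustration.

```python
def display_name_v1_client(payload: dict) -> str:
    """Client code written against v1 of a hypothetical API: the field name is hardcoded."""
    return payload["full_name"].title()


v1_response = {"id": 7, "full_name": "ada lovelace"}
v2_response = {"id": 7, "name": "ada lovelace"}  # v2 renamed the field


if __name__ == "__main__":
    print(display_name_v1_client(v1_response))  # Ada Lovelace
    try:
        display_name_v1_client(v2_response)
    except KeyError as missing:
        # Nothing in the client can recover on its own; a human has to ship a fix.
        print(f"client broke: missing field {missing}")
```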

Reducing Integration Dialects

The last major difference between APIs and MCPs is interface standardization. MCPs aim to reduce the number of integration dialects by offering a uniform way to describe tools, context, and interactions. This uniformity makes it easier for AI agents to learn and operate across disparate systems without special-purpose logic.

MCP Standardization

Every MCP server uses the same protocol patterns to describe capabilities, invoke tools, and share context. This standardization lets AI agents interact with different systems in a uniform and predictable way. As a result, agents do not need custom logic for every new integration.

The effect is powerful: a system can be built once and integrated many times. AI agents can reuse the same reasoning and decision logic across several environments with minimal glue code. From an AI point of view, MCPs act as a common integration language.

API Fragmentation

APIs are naturally fragmented: every service defines its own endpoints, parameter schemas, authentication schemes, and error-handling conventions. These differences produce many integration dialects that vary from system to system. There are no shared rules of the road for an API client.

Human developers cope with this complexity by writing custom glue code and tweaking integrations by hand. AI agents, however, struggle with this fragmentation because it requires implicit knowledge and hardcoded assumptions. Without standardization, autonomous reasoning is difficult and fragile.

An AI interacting with ten different APIs often needs ten different adapters. With MCPs, it needs one: a single protocol implementation can talk to every service that exposes an MCP server.
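The "one adapter" idea can be sketched as a single function that talks to any MCP server in the same way: list the tools, then call one by name. The two servers below are hypothetical in-process fakes standing in for, say, a ticketing system and a CI system, and `Send`, `call_any_tool`, and `make_fake_server` are illustrative names rather than SDK APIs. With plain APIs, each of these systems would typically need its own client code.

```python
from typing import Callable

Send = Callable[[dict], dict]  # hypothetical transport: JSON-RPC request in, result out


def call_any_tool(send: Send, tool_name: str, arguments: dict) -> str:
    """One generic adapter: the same two protocol methods work against every server."""
    listing = send({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
    if tool_name not in {tool["name"] for tool in listing["tools"]}:
        raise LookupError(f"server does not offer {tool_name!r}")
    result = send({
        "jsonrpc": "2.0", "id": 2, "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })
    return " ".join(item["text"] for item in result["content"] if item["type"] == "text")


def make_fake_server(tools: dict[str, Callable[[dict], str]]) -> Send:
    """Build a hypothetical server whose tools are plain Python functions."""
    def send(request: dict) -> dict:
        if request["method"] == "tools/list":
            return {"tools": [{"name": n, "inputSchema": {"type": "object"}} for n in tools]}
        if request["method"] == "tools/call":
            params = request["params"]
            text = tools[params["name"]](params["arguments"])
            return {"content": [{"type": "text", "text": text}], "isError": False}
        raise ValueError(f"unsupported method: {request['method']}")
    return send


if __name__ == "__main__":
    tickets = make_fake_server({"create_ticket": lambda a: f"ticket created: {a['title']}"})
    ci = make_fake_server({"run_pipeline": lambda a: f"pipeline started on {a['branch']}"})
    print(call_any_tool(tickets, "create_ticket", {"title": "Login fails"}))
    print(call_any_tool(ci, "run_pipeline", {"branch": "main"}))
```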

Context Handling as a Core Advantage

APIs are good at transferring data, but not at conveying context. An API may provide endpoints for creating a user, charging a credit card, or generating a report, but it doesn't communicate when or why to perform each operation; that reasoning stays implicit.

Documentation, rather than the interface, tends to explain limitations, trade-offs, and best practices. Human developers absorb this information through experience and reading. AI agents need this context too, but in an explicit, structured form.

MCPs Treat Context as a First-Class Citizen

MCPs let rich context be embedded directly in the interface, context in its broadest sense: a tool's intent, semantic meaning, constraints, assumptions, and expectations. This information isn't out-of-band documentation of the interaction; it is part of the interaction itself. This helps AI agents understand what each tool actually does and the role it plays.
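Part of that context can travel as structured metadata. Recent revisions of the MCP specification define optional tool `annotations`, such as `readOnlyHint` and `destructiveHint`, that describe a tool's behavior; they are hints rather than guarantees, and the exact fields depend on the spec version you target. The sketch below shows two hypothetical invoice tools and a hypothetical `tools_allowed_for` helper that uses those hints to keep a read-only task away from destructive actions.

```python
# Two hypothetical tools whose context travels with the definition itself.
TOOLS = [
    {
        "name": "get_invoice",
        "description": "Fetch an invoice by ID. Safe to call repeatedly.",
        "inputSchema": {"type": "object", "properties": {"invoice_id": {"type": "string"}}},
        "annotations": {"readOnlyHint": True},
    },
    {
        "name": "void_invoice",
        "description": "Permanently void an invoice. Cannot be undone; confirm with a human first.",
        "inputSchema": {"type": "object", "properties": {"invoice_id": {"type": "string"}}},
        "annotations": {"readOnlyHint": False, "destructiveHint": True},
    },
]


def tools_allowed_for(task_is_read_only: bool, tools: list[dict]) -> list[str]:
    """Keep only tools annotated as read-only when the task must not change state.

    Annotations are server-provided hints, so a missing hint is treated as "not read-only".
    """
    if not task_is_read_only:
        return [tool["name"] for tool in tools]
    return [
        tool["name"]
        for tool in tools
        if tool.get("annotations", {}).get("readOnlyHint", False)
    ]


if __name__ == "__main__":
    print(tools_allowed_for(True, TOOLS))   # ['get_invoice']
    print(tools_allowed_for(False, TOOLS))  # ['get_invoice', 'void_invoice']
```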

Given this embedded context, AI agents can consider whether a tool is appropriate for the situation at hand, predict the likely outcomes of using it, and assess how well it matches their goals. Choices are deliberate rather than the product of trial and error. Read: AI Context Explained: Why Context Matters in Artificial Intelligence.

Are MCPs Replacing APIs?

No. MCPs do not make APIs obsolete; they add a new abstraction layer for a new type of client, the AI agent, while APIs remain the foundation for deterministic, performance-critical integration. What MCPs do change is how testing and quality engineering work. Intelligent agents can choose their validation strategies and adjust them as system dynamics evolve. The result is a more trustworthy, self-managing, and context-driven quality process.

From Scripts to Intent-based Testing

At testRigor, we are seeing this transformation firsthand as AI fundamentally reshapes software testing. Traditional test automation was built on assumptions such as fixed user flows, deterministic interfaces, and human-authored scripts that encode behavior in a relatively straightforward way. These assumptions become less and less realistic for fast-changing, AI-driven systems.

AI-based testing opens up an entirely new playing field of dynamic exploration, intent-based behavior, and consolidated actions. Rather than following a predetermined script, AI agents decide on the fly what to test and how. Accommodating this shift requires an interface that supports reasoning, not just execution.

API-Centric Testing Limitations

API-only testing depends on static contracts and predefined integration logic. API changes tend to break tests, which then need ongoing maintenance and constant fixing. This approach is hard to sustain for systems that evolve over time.

MCP-Enabled Testing Opportunities

MCPs unlock a new set of testing capabilities: dynamic test discovery and intent-driven tool selection instead of hardcoded flows. They reduce test maintenance, simplify failure analysis, and support context-aware validation that evolves with the system. AI can check whether the system does what it is intended to do, rather than how it does it.
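In a real agent, an LLM reads the tool descriptions and decides which tool serves a given test intent. As a deliberately naive stand-in for that reasoning, the sketch below ranks discovered tools by word overlap between a plain-English intent and each tool's description. The tools, the intent, the helper names, and the scoring rule are all hypothetical; the point is only that selection is driven by descriptions discovered at runtime, not by a hardcoded flow.

```python
import re


def words(text: str) -> set[str]:
    """Lower-case word set, ignoring punctuation."""
    return set(re.findall(r"[a-z]+", text.lower()))


def pick_tool(intent: str, tools: list[dict]) -> str:
    """Return the tool whose description shares the most words with the intent (first wins on ties)."""
    intent_words = words(intent)
    best = max(tools, key=lambda tool: len(intent_words & words(tool.get("description", ""))))
    return best["name"]


if __name__ == "__main__":
    discovered = [
        {"name": "submit_login_form", "description": "Log in with a username and password"},
        {"name": "add_to_cart", "description": "Add a product to the shopping cart"},
        {"name": "read_page_text", "description": "Read the visible text on the current page"},
    ]
    print(pick_tool("check that a user can log in with a valid password", discovered))
    # submit_login_form
```

Word overlap is far weaker than model reasoning (it ignores synonyms and counts filler words), but it keeps the sketch self-contained while preserving the shape of the decision: intent in, discovered tool out.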

This aligns perfectly with testRigor’s philosophy: test what the user wants, not how the code is written.

Choosing Between APIs and MCPs

APIs are the recommended choice for performance-critical situations where behavior must stay deterministic. They fit scenarios where integration logic changes infrequently and human developers can manage flow and sequencing. These qualities keep APIs one of the foundational pieces of modern software.

MCPs excel when AI agents are the primary clients and system capabilities change frequently. They are particularly useful in scenarios that need runtime flexibility, rich context, and reasoning-based decisions. In short, MCPs enable a new degree of flexibility and independence.

| APIs | MCPs |
|---|---|
| Best suited for performance-critical scenarios | Best suited when AI agents are the primary clients |
| Designed for deterministic and predictable behavior | Designed for reasoning and adaptive behavior |
| Work well when integration logic changes infrequently | Excel when system capabilities evolve frequently |
| Assume human developers manage flow and sequencing | Assume autonomous decision-making at runtime |
| Foundational infrastructure in modern software | Enable flexibility and independence for AI-driven AQE |

Wrapping Up

MCPs are not APIs 2.0, nor are they a replacement or a competing standard; rather, MCP is a new abstraction layer designed for a new type of client. If APIs enabled microservices, MCPs enable autonomous AI by giving systems the ability to reason, discover, and adapt. As AI transforms software development and testing, this distinction becomes critical knowledge for architects, QA directors, and test engineers. The future of testing is not just automated but adaptive, contextual, and AI-driven, and MCPs are a key part of that future.
