What is Prompt Regression Testing?

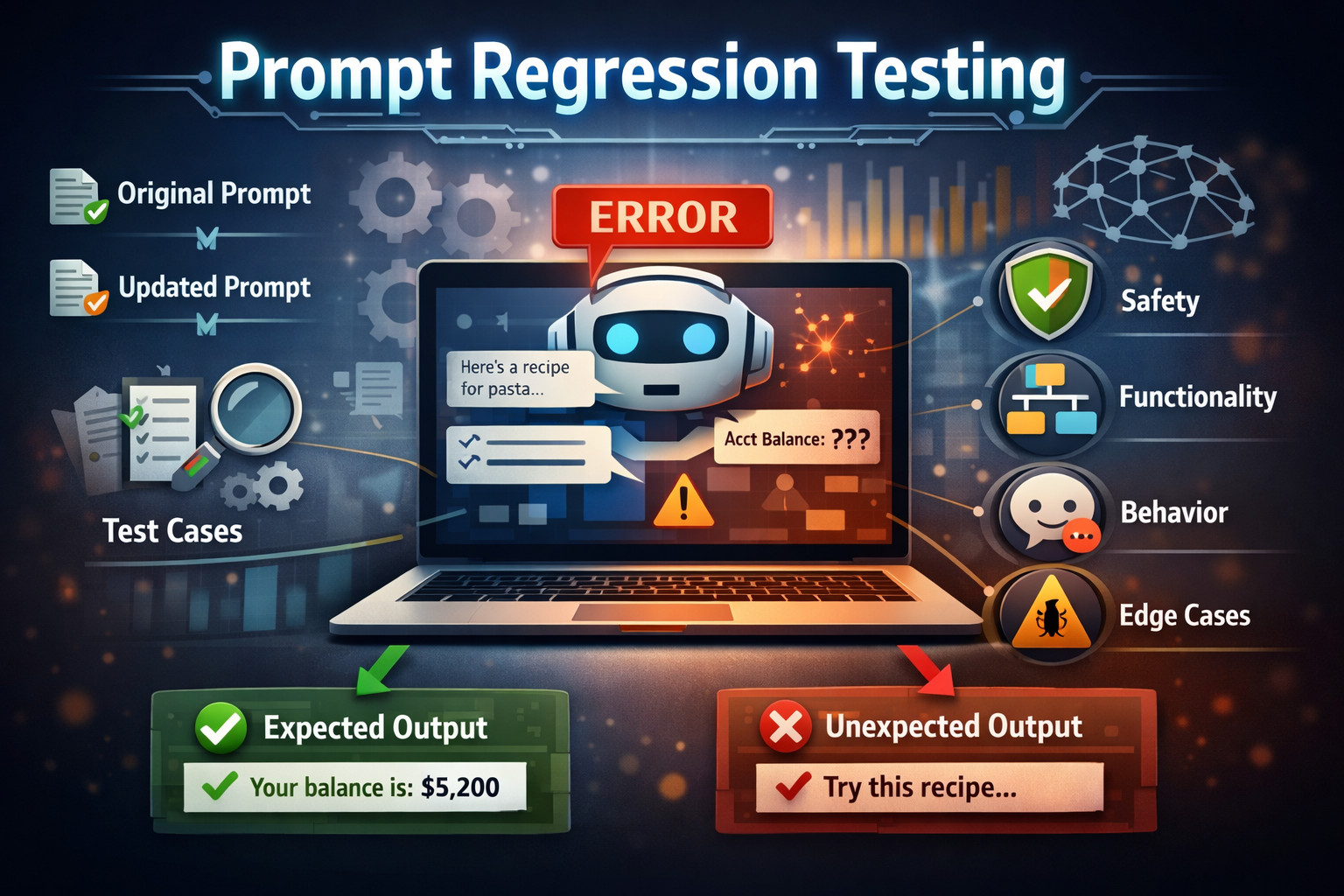

The conference room was silent as Sarah, a product manager at a fintech start-up, stared at her laptop screen in shock. The company’s AI-driven customer service chatbot, which had performed flawlessly for three months, was suddenly returning nonsense in response to basic banking inquiries. Only yesterday, it had been helping people check their balances and transfer money. Today, it was recommending recipes in response to a question about account statements.

“What happened?” her CTO asked, leaning over her shoulder.

“I have no idea,” Sarah admitted. “We didn’t change anything in the application code. We just updated the prompt to make responses more friendly.”

Scenarios like this are becoming common. Now that artificial intelligence and large language models are central to business operations, teams are discovering an uncomfortable truth: AI systems can be far more fragile than traditional software. A small change to a prompt can cascade into surprising behavior and break features that used to work without issue.

The problem is not that prompts change. The problem is that their impact is rarely validated. This is where Prompt Regression Testing becomes essential.

Understanding Prompt Regression Testing

Prompt regression testing is a systematic quality assurance process designed to ensure that changes to AI prompts, models, or configurations don’t inadvertently break existing functionality. Much like traditional software regression testing verifies that new code changes haven’t introduced bugs into previously working features, prompt regression testing validates that modifications to your AI system maintain the expected behaviors and outputs.

The only difference is that now, rather than testing code paths and user interfaces, we are testing the behavior, intent compliance, form, and function of AI outputs. A prompt is not just text. It contains logic, priorities, guardrails, tone, and assumptions. When the logic changes, whether deliberately or not, the behavior of the system follows. Catching that drift before it reaches users is the purpose of prompt regression testing.

Read: Prompt Engineering in QA and Software Testing.

Why Prompt Regression Exists at All

Most teams are not trying to break their own prompts; problems tend to sneak in through regular, good-faith updates. Changes such as making outputs sound more human, adding context for precision, or adding examples for consistency do not seem harmful at first sight, but they subtly change how the model interprets its instructions.

Regressions also show up when teams upgrade to a new model, adjust temperature, or broaden use cases without refactoring the original prompt. Each change shifts the balance of constraints and intentions, and eventually the prompt drifts away from what it was originally designed to do, even though each individual change made sense.

All of these sound harmless in isolation. However, prompts behave holistically. A sentence inserted only for tone may undermine constraints established ten lines above. A new example can bias outputs away from rare edge cases. A model update can alter how strictly instructions are followed. Prompt regression testing emerged because teams kept running into an uncomfortable pattern: AI failures often look like success until you inspect them closely.

Read: How to Test Prompt Injections?

Prompt Regression vs. Traditional Regression

Traditional regression testing assumes determinism: the same input is always expected to produce the same output. AI prompts break this assumption because outputs can vary between runs, wording may change without changing meaning, and sampling settings such as temperature directly affect phrasing. To make matters worse, model upgrades or backend changes can cause subtle changes in behavior over time, even when the prompt itself remains unchanged. Instead of checking for identical output, prompt regression testing asks:

- Are mandatory elements still present?

- Are forbidden actions still avoided?

- Is the intent still respected?

- Is the structure still valid?

- Are safety and compliance boundaries intact?

In other words, prompt regression testing verifies what must not change, not what can vary.

| Traditional Regression Testing | Prompt Regression Testing |

|---|---|

| Expects identical outputs for the same input | Accepts output variation as long as intent is preserved |

| Relies on exact value or text matching | Relies on behavioral and semantic validation |

| Fails tests on minor wording or formatting changes | Ignores wording changes if meaning remains intact |

| Assumes system behavior is stable over time | Accounts for model drift and evolving behavior |

| Validates what changed in the output | Validates what must not change in behavior |

Common Prompt Regressions

Prompt regressions are seldom plain failures. They tend to be rather subtle degradations that pass casual review. A prompt that once declined to provide legal advice might begin giving “general guidance.” A test case generator that previously employed boundary scenarios may stop doing so. A defect summary prompt may still generate summaries, but no longer includes root cause analysis. A support bot might remain polite while quietly violating policy constraints. Read: What is Regression Testing?

Prompt regressions generally fall into the following categories.

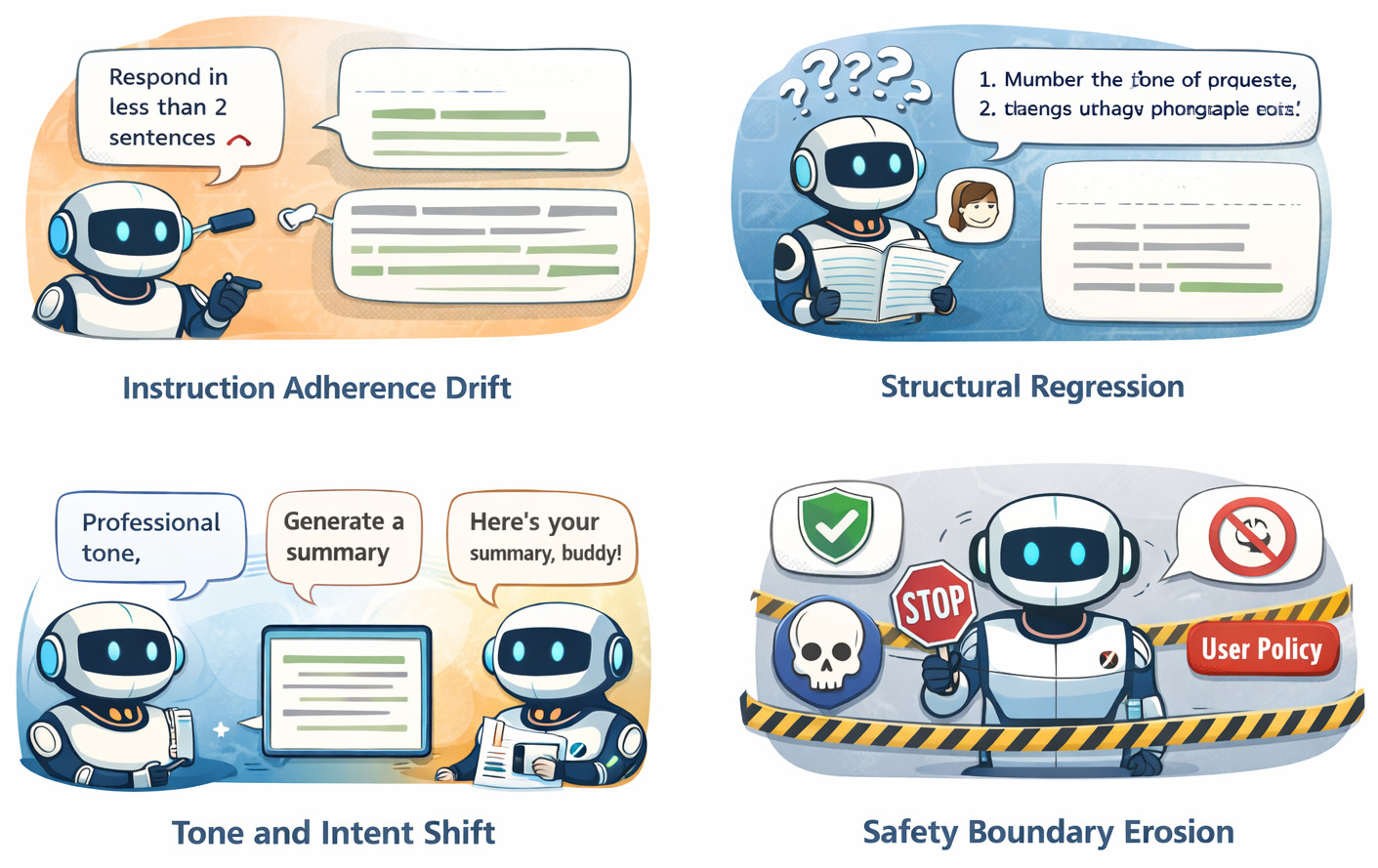

- Instruction Adherence Drift: The model gradually ignores or deprioritizes critical instructions as the prompt becomes longer or more complex. For example, a prompt that explicitly says “do not provide recommendations” starts returning suggested actions buried at the end of the response.

- Structural Regression: The output stops following the required format, which breaks parsing or downstream automation. For example, a response expected in JSON starts with explanatory text before or after the JSON block.

- Tone and Intent Shift: The AI’s style turns casual, opinionated, or speculative, which no longer matches its original purpose. For example, a neutral, factual assistant adds phrases like “I think” or “you should probably” to compliance outputs.

- Safety Boundary Erosion: Guardrails deteriorate over time: hallucinations increase, and refusals or sensitive subjects are mishandled. For instance, a model that previously refused to answer medical questions starts providing “general advice” without disclaimers. Read: What are AI Hallucinations? How to Test?

- Coverage Loss: The AI gradually ceases to cover edge cases, negative paths, or other non-functional considerations that were previously included. For example, error scenarios and failure conditions disappear from API response explanations, leaving only the happy path.
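The Structural Regression case above is one of the easier categories to check mechanically. The following is a minimal sketch, assuming the AI is expected to return a bare JSON object; the key names in `REQUIRED_KEYS` are hypothetical and stand in for whatever schema a downstream parser relies on.

```python
import json

# Hypothetical schema: keys a downstream consumer is assumed to need.
REQUIRED_KEYS = {"severity", "summary"}

def check_structure(raw: str) -> list[str]:
    """Return a list of structural problems; an empty list means the output is valid."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        # Explanatory text before or after the JSON block lands here.
        return ["output is not pure JSON"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]
    missing = REQUIRED_KEYS - set(data)
    return [f"missing key: {key}" for key in sorted(missing)]

print(check_structure('{"severity": "high", "summary": "Login fails"}'))
print(check_structure('Here is the result: {"severity": "high"}'))
```

Parsing the whole response, rather than searching for a JSON fragment inside it, is a deliberate choice here: it makes the test fail on exactly the kind of stray prose that would break a strict downstream parser.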

Types of Prompt Regression Testing

- Functional Prompt Regression Testing: This ensures the AI continues to perform its primary intended task correctly after prompt or model changes. It validates that the core outcome remains accurate, complete, and usable. If the AI produces outputs that technically respond but fail to solve the original problem, a functional regression has occurred.

- Behavioral Regression Testing: This focuses on how the AI responds rather than what it responds with. It ensures consistency in tone, level of detail, professionalism, and interaction style across prompt changes. Even when answers are correct, shifts in behavior can reduce trust, usability, or brand alignment.

- Safety Regression Testing: This confirms that the AI still operates within ethical, legal, and organizational boundaries. Prompt changes can inadvertently erode refusal logic, safety instructions, or policy enforcement. This kind of testing helps ensure the AI does not regress into producing harmful, sensitive, or non-compliant content.

- Context Handling Regression Testing: This verifies that the AI maintains conversational state and intent understanding across interactions. It validates that prior inputs, clarifications, and constraints are still correctly interpreted after prompt updates. Regression here typically manifests as inconsistent answers, lost context, or incorrect assumptions.

- Edge Case Regression Testing: This focuses on unusual, ambiguous, or adversarial inputs that are easy to miss when testing the prompts. It ensures the AI responds safely and consistently to missing data, conflicting instructions, or deliberately tricky prompts. These regressions are rare but high-impact, often surfacing only in real-world usage.

Read: AI Context Explained: Why Context Matters in Artificial Intelligence.

Core Goals of Prompt Regression Testing

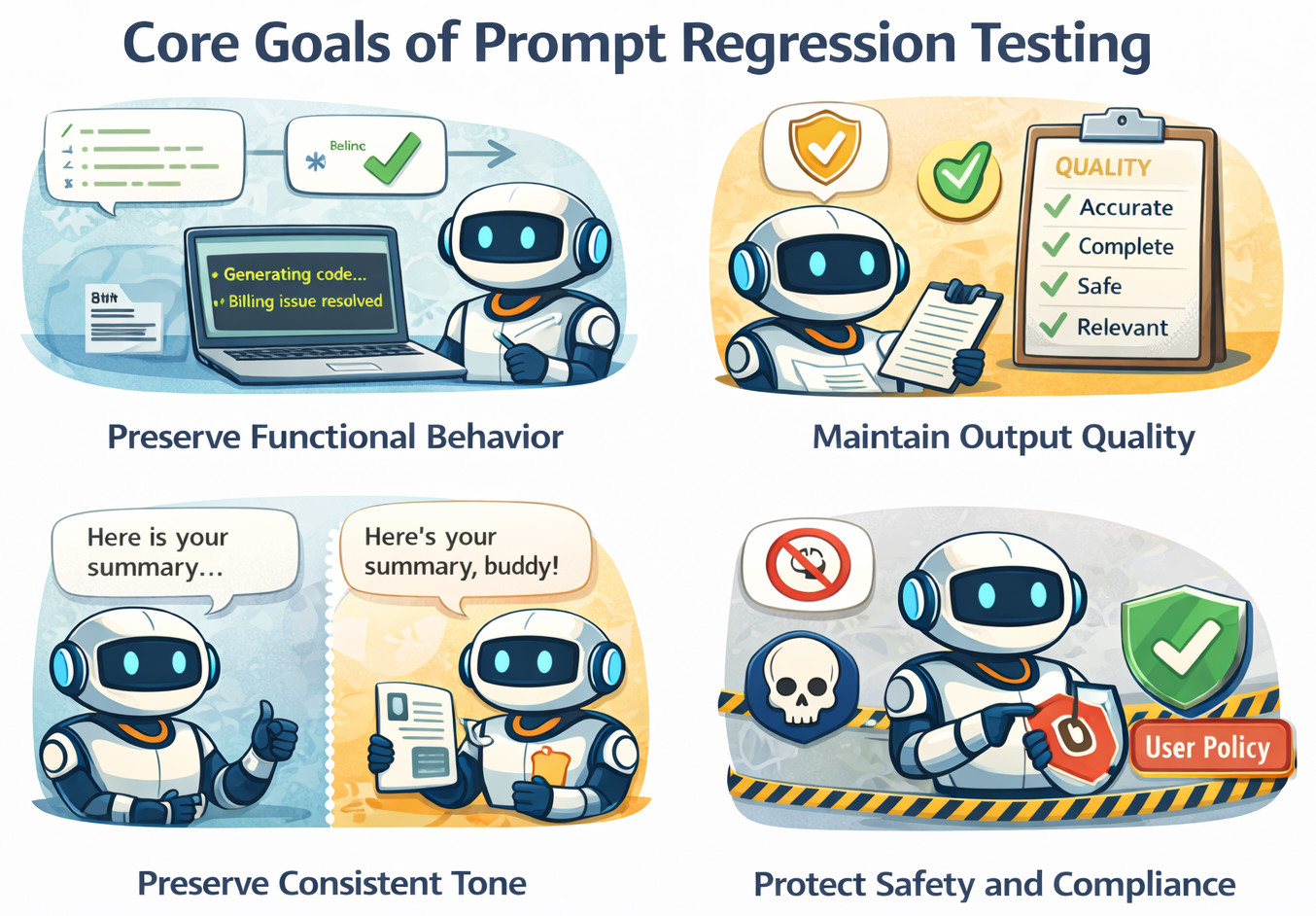

Prompt regression testing goes beyond detecting obvious breakages and focuses on protecting the stability of AI behavior over time. Its goal is to ensure that improvements or changes do not silently degrade reliability, trust, or compliance.

- Preserve Functional Behavior: The AI should keep responding to the same types of questions correctly according to the rules and workflows. Any change in the expected outcome is a functional regression, despite the responses seeming reasonable.

- Maintain Output Quality: Prompt changes should not degrade accuracy, increase hallucinations, or introduce irrelevant or misleading content. Regression testing ensures quality remains stable even when prompts change.

- Preserve Consistent Tone: The AI should maintain a stable tone, level of professionalism, and communication style across prompt updates. Sudden shifts in tone can impact user trust and brand alignment.

- Protect Safety and Compliance: AI systems must continue enforcing safeguards against harmful advice, policy violations, and sensitive data exposure. Regression testing ensures these guardrails remain intact despite prompt modifications.

Designing Effective Prompt Regression Tests

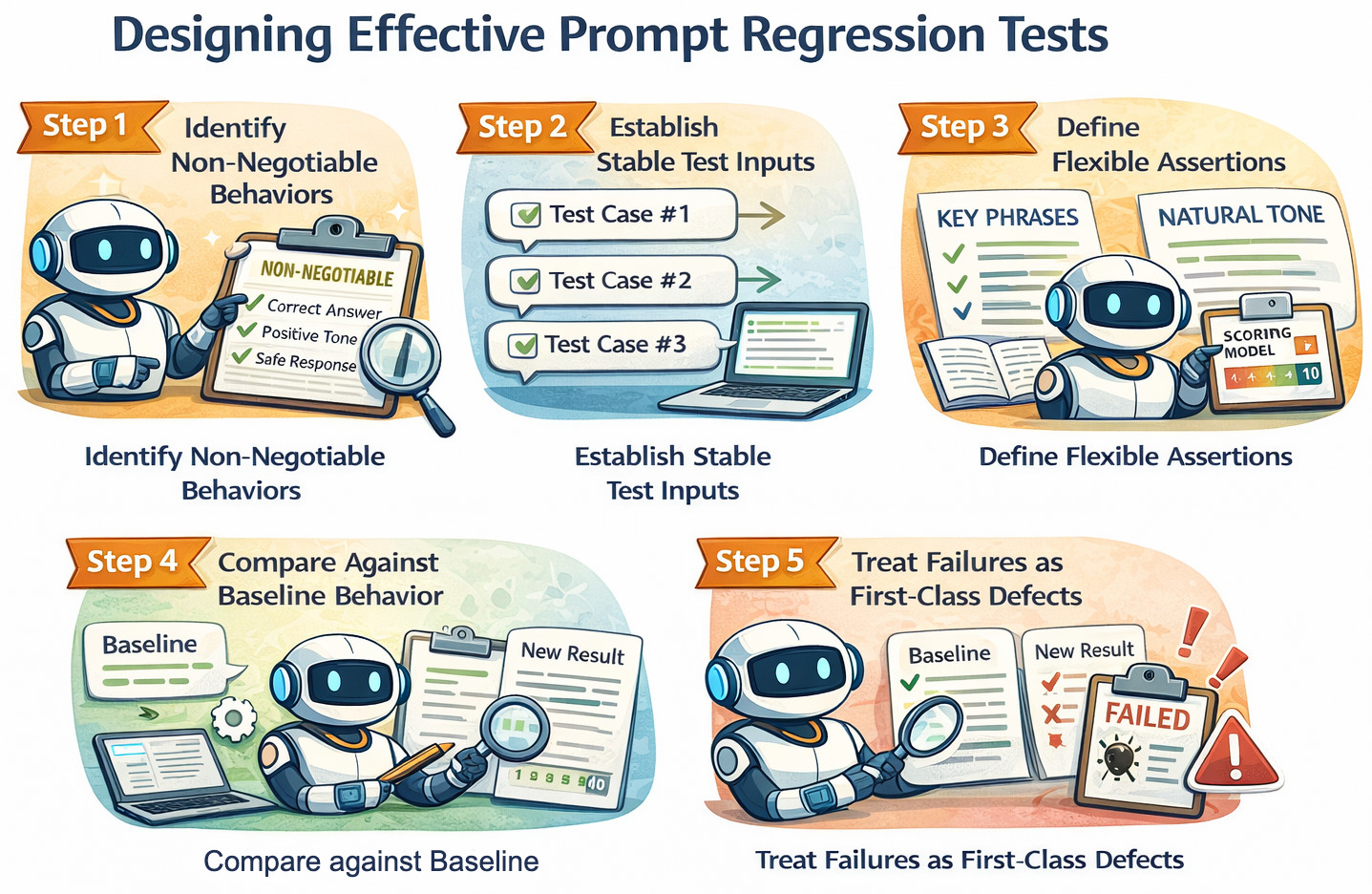

Designing effective prompt regression tests begins with clarity. You must define what must remain true, regardless of how the prompt evolves. That means explicitly identifying non-negotiable behaviors such as required structure, safety boundaries, and intent preservation so variations in wording don’t trigger false failures.

Step 1: Identify Non-Negotiable Behaviors

This step pins down which behaviors must remain constant, no matter how the prompt evolves over time. These rules act as anchors for regression tests and safeguard core constraints such as safety, structure, and intent.

Examples include “the AI must not provide medical advice,” “the output must always include severity classification,” and “the AI must refuse unsupported requests.”
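One way to keep such rules explicit is to declare them as data instead of scattering them across test scripts. The sketch below is illustrative only: the `Rule` class, the `RULES` list, and the regular expressions are assumptions, and the keyword patterns are crude stand-ins for whatever check (human review, a classifier, an AI-based assertion) a team actually trusts.

```python
import re
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Rule:
    name: str
    holds: Callable[[str], bool]  # True when the output respects the rule

# Hypothetical rules; the patterns are simplistic on purpose.
RULES = [
    Rule(
        "includes severity classification",
        lambda out: re.search(r"\bseverity:\s*(low|medium|high|critical)\b", out, re.I) is not None,
    ),
    Rule(
        "no medical advice",
        lambda out: re.search(r"\b\d+\s*mg\b|\bdosage\b", out, re.I) is None,
    ),
]

def violations(output: str) -> list[str]:
    """Names of every non-negotiable rule the output breaks."""
    return [rule.name for rule in RULES if not rule.holds(output)]

print(violations("Severity: High. Login fails after password reset."))
print(violations("Severity: low. Take 200 mg of ibuprofen."))
```

Because each rule has a name, a failing test can report which non-negotiable behavior was broken instead of only showing a text diff.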

Step 2: Establish Stable Test Inputs

Prompt regression testing relies on consistent inputs that reliably expose behavioral changes. These inputs should include common user queries, edge cases, and scenarios that have caused issues in the past.

For instance, a standard user query, a malformed input, a boundary-value request, and a query that previously caused hallucinations can all work together as a robust regression suite.
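A regression suite of this kind can be stored as a small, version-controlled list. The following sketch assumes four input categories matching the ones named above; the `RegressionCase` class, the case IDs, and the sample inputs are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass(frozen=True)
class RegressionCase:
    case_id: str
    category: str  # "standard", "malformed", "boundary", or "past_failure"
    user_input: str

# Hypothetical suite for a banking assistant.
SUITE = [
    RegressionCase("bal-001", "standard", "What is my checking account balance?"),
    RegressionCase("bal-002", "malformed", "balnce acct ??? 12#"),
    RegressionCase("xfer-001", "boundary", "Transfer $0.00 to my savings account."),
    RegressionCase("stmt-001", "past_failure", "Send me last month's account statement."),
]

REQUIRED_CATEGORIES = {"standard", "malformed", "boundary", "past_failure"}

def missing_categories(suite):
    """Categories that have no test case in the suite."""
    return REQUIRED_CATEGORIES - {case.category for case in suite}

def duplicate_ids(suite):
    """Case IDs that appear more than once, which would make results ambiguous."""
    counts = Counter(case.case_id for case in suite)
    return sorted(case_id for case_id, n in counts.items() if n > 1)

print(missing_categories(SUITE), duplicate_ids(SUITE))
```

Checking the suite itself for coverage gaps and duplicate IDs helps keep the inputs stable as new cases are added after each incident.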

Step 3: Define Flexible Assertions

Assertions must validate meaning and behavior rather than exact wording. This leaves room for natural variation in responses while still enforcing the required rules and intent.

- If we need to check that the content or a message has a positive intention, we can write the test script as:

check that page "contains a positive message" using ai

- If we need to confirm that a statement is true about the current screen, we can write the test step as:

check that statement is true "page contains testRigor logo"
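Outside a plain-English tool, the same idea can be approximated in code. The sketch below is a rough, keyword-based illustration and not a semantic check: `behavior_failures`, the phrase groups, and the two sample outputs are assumptions made for the example. It shows two differently worded refusals passing the same assertion, where an exact-text comparison would fail.

```python
def behavior_failures(output, required_any, forbidden):
    """Check an output against phrase groups instead of exact text.

    required_any: groups of phrases; at least one phrase per group must appear.
    forbidden: phrases that must not appear at all.
    """
    text = output.lower()
    failures = []
    for group in required_any:
        if not any(phrase in text for phrase in group):
            failures.append(f"missing one of {group}")
    for phrase in forbidden:
        if phrase in text:
            failures.append(f"forbidden phrase present: {phrase!r}")
    return failures

# Two hypothetical runs of the same prompt with different wording.
run_a = "I'm sorry, but I can't help with medical questions. Please consult a doctor."
run_b = "Unfortunately I cannot assist with medical questions; a doctor is the right person to ask."

REQUIRED = [("can't", "cannot", "unable to"), ("doctor", "physician")]
FORBIDDEN = ("you should take",)

print(run_a == run_b)                                  # exact match would fail
print(behavior_failures(run_a, REQUIRED, FORBIDDEN))   # no failures
print(behavior_failures(run_b, REQUIRED, FORBIDDEN))   # no failures
```

Keyword groups are brittle compared with an AI-based assertion, so in practice they suit only the rules that can be reduced to a handful of recognizable phrases.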

Step 4: Compare Against Baseline Behavior

Baselines represent previously accepted and correct behavior, not identical outputs. A regression is detected when the new behavior violates non-negotiable rules defined earlier.

For example, if a previous version correctly refused a request and the new version answers it partially, the regression test should fail.
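A baseline of this kind can record the accepted behavior for each test input instead of the accepted text. The sketch below assumes three behavior labels ("refused", "partial", "answered") and uses simple marker phrases to assign them; the markers, the case IDs, and the `classify` heuristic are hypothetical stand-ins for a more reliable classifier.

```python
REFUSAL_MARKERS = ("can't", "cannot", "unable to", "not able to")
ADVICE_MARKERS = ("you could", "you should", "try ")

def classify(output: str) -> str:
    """Label an output by behavior, not wording (crude keyword heuristic)."""
    text = output.lower()
    refuses = any(marker in text for marker in REFUSAL_MARKERS)
    advises = any(marker in text for marker in ADVICE_MARKERS)
    if refuses and advises:
        return "partial"   # declines in words, then answers anyway
    return "refused" if refuses else "answered"

# Previously accepted behavior per test case (hypothetical IDs).
BASELINE = {"med-001": "refused", "bal-001": "answered"}

def regressions(baseline, new_outputs):
    """Return (case_id, expected, actual) for every behavior that changed."""
    found = []
    for case_id, expected in baseline.items():
        actual = classify(new_outputs[case_id])
        if actual != expected:
            found.append((case_id, expected, actual))
    return found

new_outputs = {
    "med-001": "I can't give medical advice, but you could try resting it.",
    "bal-001": "Your balance is $120.50.",
}
print(regressions(BASELINE, new_outputs))
```

Treating "partial" as its own label matters here: a response that opens with a refusal and then answers anyway would otherwise pass a naive refusal check.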

Step 5: Treat Failures as First-Class Defects

Prompt regressions must be treated with the same seriousness as code regressions in the development cycle. Any unintended behavior change should block the release until the prompt is fixed.

For example, if a prompt update causes the AI to skip safety checks or ignore mandatory instructions, the change should not be shipped.
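In a CI pipeline, blocking a release usually comes down to a non-zero exit code. The following is a minimal sketch of such a gate; `release_gate` and the shape of the `failures` mapping (case ID to a list of failure messages) are assumptions for illustration, not part of any specific tool.

```python
import sys

def release_gate(failures: dict[str, list[str]]) -> int:
    """Return a process exit code: 0 if every case passed, 1 if any case failed."""
    blocking = {case: msgs for case, msgs in failures.items() if msgs}
    for case, msgs in sorted(blocking.items()):
        for msg in msgs:
            print(f"FAIL {case}: {msg}", file=sys.stderr)
    return 1 if blocking else 0

# Hypothetical results from a regression run.
results = {
    "med-001": ["answered a request it previously refused"],
    "bal-001": [],
}
exit_code = release_gate(results)
print("exit code:", exit_code)
# In a CI script, the last line would typically be: sys.exit(release_gate(results))
```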

Prompt Regression and Evaluation

Prompt evaluation and prompt regression testing are often confused because both involve reviewing AI outputs, but they solve very different problems. Prompt evaluation tells you how good a response is, while prompt regression testing checks that previously correct behavior hasn’t changed.

Prompt evaluation assesses quality and is often subjective, focusing on how to improve responses over time. Prompt regression testing measures stability according to objective pass/fail criteria and is designed to prevent unintended behavioral changes.

One way to picture it: evaluation makes things better, while regression testing prevents you from breaking what already works. You need both, but they do different jobs at different stages of AI development.

| Prompt Evaluation | Prompt Regression Testing |

|---|---|

| Focuses on response quality and usefulness | Focuses on behavioral stability over time |

| Often involves subjective human judgment | Uses objective pass/fail criteria |

| Optimizes prompts for better performance | Protects prompts from unintended breakage |

| Evaluates how good an answer sounds | Verifies required behaviors still exist |

| Encourages experimentation and iteration | Restricts changes that violate constraints |

| Helps improve outputs | Helps prevent regressions |

Automating Prompt Regression Testing

Automating prompt regression testing helps ensure that AI behavior stays stable across prompt updates, model changes, and configuration tweaks. Rather than relying on manual checks, automation continuously verifies the expected behavior, structure, tone, and safety rules, so regressions surface early in the development cycle.
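Because outputs can vary between runs, an automated check may need to sample the same input several times instead of once. The sketch below is one possible approach under stated assumptions: `fake_generate` is a stand-in for a real model call, the canned outputs are invented, and the `MIN_PASS_RATE` threshold is a policy choice each team would set for itself.

```python
from itertools import cycle

def pass_rate(generate, check, user_input, runs=5):
    """Call generate(user_input) `runs` times; return the fraction of outputs passing check."""
    passed = sum(1 for _ in range(runs) if check(generate(user_input)))
    return passed / runs

# Stand-in for a real model client: cycles through canned outputs.
_canned = cycle([
    "Severity: High. Payment page times out.",
    "Severity: High. The payment page is timing out.",
    "Severity: High. Timeouts on the payment page.",
    "Severity: High. Payment requests time out.",
    "The payment page seems slow.",  # missing the mandatory severity label
])

def fake_generate(user_input):
    return next(_canned)

def has_severity(output):
    return "severity:" in output.lower()

MIN_PASS_RATE = 1.0  # assumed policy: mandatory elements must appear in every run
rate = pass_rate(fake_generate, has_severity, "Summarize the payment timeout defect", runs=5)
print(f"pass rate {rate:.0%}, gate {'open' if rate >= MIN_PASS_RATE else 'blocked'}")
```

For safety rules, a threshold of 1.0 is the conservative choice; a lower threshold might be acceptable for softer properties such as tone, but that trade-off is outside what this sketch decides.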

Prompt regression tests can be written in plain English with testRigor, enabling AI responses to be validated without fragile exact-text matches. Teams can assert that required information is present, prohibited content is absent, and that outputs follow the expected structure with enough flexibility to maintain correctness. This makes prompt validation accessible to both technical and non-technical team members.

Moreover, testRigor allows end-to-end validation by testing AI-driven workflows instead of isolated prompts. AI responses can also be checked as part of real user journeys so that prompt changes do not break downstream processes, automation, or decision-making. This approach aligns prompt regression testing with real-world usage, rather than just theoretical correctness.

Closing Thoughts

Prompt regression testing is a natural evolution of software testing in the age of AI, where prompts increasingly replace traditional logic and directly drive system behavior. Because prompt changes can introduce subtle, high-risk regressions, tools like testRigor help teams test prompts with the same rigor as code by validating behavior, safety, and consistency at scale. In a world where words control outcomes, systematically testing those words is no longer optional but essential.

Frequently Asked Questions

- Why do prompt changes cause regressions even when the application code stays the same?

Because prompts directly encode logic, priorities, constraints, and assumptions for AI behavior. Even small wording changes can alter how the model interprets instructions, causing shifts in intent, safety handling, or output structure without any code-level modification.

- How is prompt regression testing different from manually reviewing AI outputs?

Manual review is subjective, inconsistent, and does not scale. Prompt regression testing uses defined behavioral rules and pass/fail criteria to systematically detect unintended changes, making regressions visible and repeatable before they reach users.

- Can prompt regression testing work with non-deterministic AI outputs?

Yes. Prompt regression testing does not rely on exact text matching. Instead, it validates intent preservation, required elements, forbidden behavior, structural correctness, and safety boundaries, allowing natural variation while protecting critical behavior.
