Can You Trust an AI That Can’t Explain Its Decisions? A Guide to Explainable AI Testing

Modern AI systems now make critical decisions: who gets hired, who is approved for a loan, and who is flagged for health risks. Yet when someone asks why one of these systems acted a certain way, the honest answer is often uncertain; its exact reasoning isn’t always recoverable.

This raises a hard question: can you trust an AI that can’t explain its decisions?

At first glance, the answer seems obvious: no. When results depend on hidden logic, discomfort follows, especially when the consequences are serious.

But in practice, things are messier.

The same complexity that makes some AI so powerful (large language models, deep neural networks, reinforcement learning agents) also hides how it works. These systems often outperform simpler, more interpretable methods, sometimes by wide margins, yet their inner workings remain hard to understand. Teams are caught in a tradeoff: accuracy versus explainability, speed versus insight, automation versus control.

This article takes an honest look at the push and pull around transparency in machine learning.

We’ll look at what explainable AI really means, why black box AI creates trust issues, where theory breaks down in real projects, and how organizations can make better decisions about when and how to trust AI. AI-powered testing tools face this challenge especially sharply.

Explainable AI (XAI): What it is and What it is not

Explainable AI (XAI) is the field focused on making AI systems more transparent, interpretable, and understandable to humans. Common forms of explanation include:

- Feature importance scores

- Natural language rationales

- Visualizations of model behavior

- Counterfactual explanations (“If X were different, the outcome would change”)
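To make two of these explanation types concrete, here is a minimal sketch assuming a hypothetical linear loan-scoring model; the feature names, weights, bias, and threshold are invented for illustration. For a linear model, each feature's contribution to the score is simply weight times value, which works as a local feature importance score. The counterfactual search asks how far one feature would have to move before the decision flips.

```python
import math
from typing import Dict, Optional

# Hypothetical toy loan model: feature names, weights, bias, and threshold
# are invented for illustration, not taken from any real system.
WEIGHTS: Dict[str, float] = {"income_k": 0.04, "debt_ratio": -3.0, "late_payments": -0.8}
BIAS = -1.1
THRESHOLD = 0.5  # approve when the predicted probability is at least 0.5


def approval_probability(applicant: Dict[str, float]) -> float:
    """Logistic model: sigmoid of the bias plus the weighted sum of features."""
    z = BIAS + sum(WEIGHTS[name] * applicant[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


def contributions(applicant: Dict[str, float]) -> Dict[str, float]:
    """Local feature importance for a linear model: weight times feature value."""
    return {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}


def counterfactual(applicant: Dict[str, float], feature: str, step: float,
                   max_steps: int = 1000) -> Optional[float]:
    """Change one feature by `step` repeatedly until the decision flips.

    Returns the first value that flips the decision, or None if none is
    found within `max_steps`. The input dict is not modified.
    """
    original = approval_probability(applicant) >= THRESHOLD
    candidate = dict(applicant)
    for _ in range(max_steps):
        candidate[feature] += step
        if (approval_probability(candidate) >= THRESHOLD) != original:
            return candidate[feature]
    return None
```

For the applicant `{"income_k": 50, "debt_ratio": 0.4, "late_payments": 2}`, this toy model rejects the application, `late_payments` has the most negative contribution, and `counterfactual(applicant, "income_k", 1)` returns `98`. In plain terms: “if income were 98k instead of 50k, the outcome would change.” Black box models can’t be read this directly, which is why they need approximation methods to answer the same questions.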

Here’s the twist, though: not all explanations are equally useful.

Real projects reveal something odd: explainability often becomes performative. Charts look clear, yet leave people guessing why a decision was made.

One thing many teams overlook: explanations are audience-dependent. What is clear to a data scientist might baffle a manager focused on outcomes, and details useful to a regulator could overwhelm someone just trying to use the tool.

Explainable AI is not a silver bullet. It’s a design problem, not just a technical one.

Levels of Explainability: What Kind of Answers Do You Actually Need?

A common mistake in the explainable AI conversation is treating explainability as binary: some models get labeled transparent, others get tossed into the black box pile. In reality, explainability is a spectrum.

Different stakeholders need different types of answers, and mixing them up frustrates everyone.

A data scientist may care about which inputs drove a model’s output (technical explainability). A tester checking outputs may need to know how the system interpreted an instruction (operational explainability). Executives often just need a clear story they can stand behind when decisions are questioned (narrative explainability).

Real projects often fall apart when people talk past each other. One person demands “visibility,” while others care only about speed. Rarely does anyone stop to ask: explainable for whom, and for what purpose?

AI Decision Making and the Trust Problem

Most AI systems choose outputs based on probability. Models learn patterns from large numbers of examples, then apply those patterns to new cases. This is not how human reasoning works: there is no real understanding behind these choices, only outputs based on learned correlations.

This works well enough, until you’re suddenly left wondering why.

An AI system rejects a loan application. A customer finds their account blocked without a clear reason. An automated test run breaks something in a live environment. Questions come fast: what went wrong here? If all anyone hears is “that’s what the model chose,” trust in the system drops. Fast.

In real projects, trust usually breaks down when AI outputs collide with accountability.

This matters because people are now questioning whether AI can be trusted. Doing the job fairly well is no longer enough; AI systems need to be understandable enough for humans to rely on them responsibly.

Black Box AI: Powerful, Opaque, and Uncomfortable

In black box AI, such as deep learning models, data passes through hidden layers whose transformations carry no obvious meaning to humans. These systems don’t reveal how their decisions are formed.

What makes black box AI tricky is not its performance. It works well, sometimes better than anything else available. The catch is that people tend to overlook how difficult it becomes to debug its behavior when something goes wrong.

If a rule-based system fails, you can trace the logic. If a black box model fails, you’re often left guessing which data patterns caused the issue or whether it will happen again.
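To illustrate the traceability point, here is a minimal sketch of a hypothetical rule-based loan check; the rules, thresholds, and field names are assumptions made up for this example. Because every rule is explicit, each decision can carry the name of the rule that produced it, which is exactly the trace a black box model cannot hand you directly.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Rule:
    name: str
    condition: Callable[[Dict[str, float]], bool]  # True when the rule fires
    decision: str


# Hypothetical rules, checked in order; the first rule that fires decides.
RULES: List[Rule] = [
    Rule("too_many_late_payments", lambda a: a["late_payments"] > 3, "reject"),
    Rule("high_debt_ratio", lambda a: a["debt_ratio"] > 0.5, "reject"),
    Rule("sufficient_income", lambda a: a["income_k"] >= 40, "approve"),
]


def decide(applicant: Dict[str, float]) -> Tuple[str, str]:
    """Return (decision, name of the rule that produced it) as an audit trail."""
    for rule in RULES:
        if rule.condition(applicant):
            return rule.decision, rule.name
    # No rule matched: escalate to a human instead of guessing.
    return "manual_review", "no_rule_matched"
```

For example, `decide({"income_k": 50, "debt_ratio": 0.6, "late_payments": 1})` returns `("reject", "high_debt_ratio")`, so an unexpected result can be traced to one named rule. The tradeoff is the one described earlier: hand-written rules are easy to audit but rarely match the accuracy of learned models.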

Can AI Be Trusted Without Full Transparency?

Here’s where the debate starts getting interesting.

It’s true that you don’t need to understand something fully to trust it. Take flying: people board planes every day with no idea how jet engines work. Their trust rests on reliability rather than knowledge: clean safety records, regular inspections, and consistent outcomes. That pattern builds confidence over time.

The same holds for AI to a degree. Human trust in AI systems often develops through repeated exposure and reliability, not deep explanations. A steady record of solid results can win acceptance even when the inner logic stays hidden.

However, fairness becomes hard to guarantee when AI systems make decisions without explaining them. People want to know why a decision was made, not just that the system usually works.

Here’s the thing: without clear lines of responsibility, AI accountability drifts. Trust doesn’t come only from results; it also depends on the ability to audit, contest, and correct decisions when necessary.

Ethics, Bias, and the Limits of Trustworthy Artificial Intelligence

What makes AI unfair? Bias often hides in training data and model behavior, surfacing only when certain groups are disproportionately affected.

Without explainability, biased AI decisions can persist unnoticed. Teams may not realize there’s a problem until damage has already been done. This is exactly why ethical and responsible AI frameworks emphasize transparency, fairness, and governance. In practice, though, teams often ship systems because they work well enough, even if they can’t fully explain them.

Read more: AI Model Bias: How to Detect and Mitigate.

When Explainability Becomes a Legal and Business Requirement

In regulated industries, explainability matters more than ever. Banks, hospitals, care providers, and even job screening tools are increasingly expected to explain their reasoning. Where oversight exists, the basis for decisions can’t stay hidden.

But even outside regulation, business leaders are starting to care.

Things often fall apart when real projects face audits, outages, or user escalations. Only then do teams realize they can’t explain how the AI made its choices, and that gap becomes a real risk.

This shift reframes the debate. The danger isn’t AI itself; it’s being unable to explain it.

Read more: AI Compliance for Software.

AI Trust Issues in Software and Test Automation

This problem becomes especially visible in AI-powered test automation.

Modern testing tools increasingly use AI to generate tests, identify failures, and adapt to UI changes. The catch is that you can’t always see how those decisions are made. Hidden logic replaces clear steps, and that opacity is the risk.

When an AI testing tool decides what to test or reports why a test failed, engineers need to understand how it reached those conclusions. Without that clarity, debugging turns into guesswork.

In day-to-day work, when tests fail in CI, it’s often unclear whether the cause is a real bug, a random environment glitch, a flaky test, or an AI misinterpretation. Without clear reasons for what went wrong, confidence erodes before anyone notices.

Read more: Generative AI in Software Testing: Reshaping the QA Landscape.

Explainable AI in Testing: Why it Actually Matters

Testing is where release confidence comes from. When teams decide whether to ship, they look at test results. With AI in the loop, that decision inherits the AI’s opacity.

In testing, what really matters isn’t taking the AI apart to inspect its internals. It’s getting clear answers to practical questions:

- Why was this test generated?

- Why did the AI interpret this step a certain way?

- What assumptions did the system make?

In the absence of these answers, AI-driven testing feels risky, even if it’s powerful.

How testRigor Approaches Explainability in AI-Driven Testing

Some intelligent test automation tools, such as testRigor, take a more grounded path here.

What if tests read like plain English? That idea shapes how testRigor works: clarity comes first, not complex code tricks. Tests are written in natural language that anyone can follow. Instead of positioning AI as a magical black box, testRigor emphasizes human-readable logic and intent-based testing.

When a test fails, teams can see what the AI was trying to test and how it arrived at each choice. The system’s actions are tied to clear reasons rather than hidden rules.

This sounds simple, but in practice it dramatically improves trust. When engineers can see the reasoning laid out clearly, they spend far less time reverse engineering the tool’s behavior.

With testRigor, clarity doesn’t vanish when automation kicks in. Because the tool is grounded in explainable AI principles, teams keep visibility into what the AI is doing while speeding up testing.

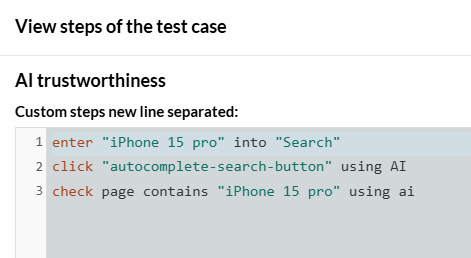

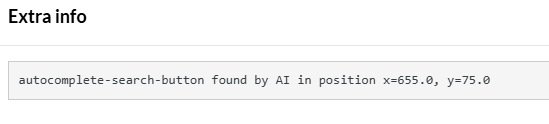

Let us see this simple example where testRigor attempts to use AI to perform an action.

So, the next screenshot highlights why the AI engine behaved the way it did, when it was given the instruction.

This highlights how testRigor is able to maintain explainability of its AI model.

When Explainability is Optional, and When it is Non-Negotiable

| Context | Stakes | Can You Rely on AI Without Explanations? | Why |
|---|---|---|---|
| Content recommendations | Low | Yes | Errors are reversible and low impact |
| UI personalization | Low | Mostly | Users can ignore or override |
| Test case generation | Medium | Sometimes | Failures must be debuggable |
| Automated bug classification | Medium | Risky | Misclassification slows teams |
| Hiring or promotion decisions | High | No | Fairness and accountability required |
| Financial approvals | High | No | Legal and ethical consequences |
| Healthcare diagnostics | Very High | No | Human lives are affected |
| Autonomous production releases | Very High | No | Failures cascade rapidly |

Final Thoughts: Trust is a Design Choice

Trust in AI is not something you “add” at the end. It is shaped most by decisions made at the start: how models are built, how outputs are presented, and how people interact with the technology.

Explainable AI won’t solve every problem. But ignoring explainability creates risks that compound over time. True confidence comes not from speed or power, but from consistency under scrutiny.

Frequently Asked Questions (FAQs)

What is the difference between transparency and explainability in AI?

A: Transparency refers to visibility into how a system is built or trained. Explainability focuses on whether humans can understand and reason about its decisions. A system can be transparent but still impossible to explain meaningfully. A limitation teams often underestimate: access to model internals does not automatically lead to understanding, and it usually doesn’t.

How does explainability apply to AI-powered testing and automation?

A: In testing, explainability directly affects confidence. When an AI-generated test fails, teams need to understand why it failed before they can decide what to do next. This sounds obvious on paper, but in practice, opaque testing tools slow teams down instead of helping them. Tools like testRigor focus on intent-based, human-readable logic so teams can reason about AI behavior instead of guessing at it.

Is explainable AI always better than black box AI?

A: Not always. Inherently interpretable models often trade some raw performance for interpretability. In some domains, that tradeoff is acceptable, even desirable. In others, it may limit what the system can do. The key question isn’t “Is this AI explainable?” but rather “Is it explainable enough for the decisions it supports?” This sounds simple, but in practice, teams rarely define that threshold upfront.
