What is Symbolic AI in Testing? (Use Cases and Examples)


A requirement reads, “the user must be able to log on quickly.” A product manager says, “This report should have the right appearance.” A ticket simply reads, “it didn’t work.” A tester remarks, “It failed a few times.”

Even our most advanced test automation frameworks struggle, not because they can’t press buttons, but because they don’t really understand what the system is doing (or should be doing), what “correct” means in a particular instance, and what must not happen.

This is exactly where Symbolic AI becomes interesting: not as a buzzword, but as a practical toolset for representing, reasoning about, and verifying rules, constraints, and logic with auditability, explainability, and certainty. In other words, symbolic AI is a strong match for the parts of testing that require clarity, traceability, and strict correctness rather than probabilistic prediction.

For at least the past decade, AI has been dominated by Machine Learning (ML) and, more recently, deep learning and generative AI. These methods excel when patterns are too complex to code explicitly, when you have lots of data, when approximate answers are “good enough,” and when it’s acceptable for the model to be wrong now and then.


Understanding Symbolic AI

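Symbolic AI represents knowledge as explicit symbols, rules, and logical relationships, and reasons over them with inference. In testing, it gives you:

- A way to represent requirements as structured logic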

- A way to derive test cases from rules

- A way to detect contradictions and gaps in requirements

- A way to prove properties of a system or workflow

- A way to explain why something is a failure

Symbolic AI does not “guess” whether something is right. It derives the answer from explicit rules through logical reasoning.

Consider a shipping policy written informally: premium members always get free shipping, carts of $50 or more ship free, and free shipping does not apply to international orders. There is a contradiction unless you define precedence: “premium is always free” conflicts with “international means free shipping does not apply” for premium international orders.

You can represent the policy as explicit logic with a clear override: international orders never ship free. For domestic orders, delivery is free if the user is a premium member or if the cart total is at least $50; in all other cases, it is paid. Because each condition is symbolic, the system can justify every outcome by pointing to the specific rule it applied.

With the override stated, test design follows from partitioning the total into < $50 vs. ≥ $50 and combining it with premium vs. non-premium and domestic vs. international, yielding 2 × 2 × 2 = 8 cases. Those 8 scenarios exhaustively cover all decision paths and validate both the override and the two free-shipping triggers.
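A minimal Python sketch of the shipping policy, assuming precedence is encoded as rule order (first match wins). The rule names, the `order` dictionary shape, and the representative totals $49.99 and $50.00 are illustrative assumptions, not part of any specific tool.

```python
from itertools import product

# Hypothetical encoding of the shipping policy. Rules are checked in
# priority order and the first match decides, so the international
# override always wins over the two free-shipping triggers.
RULES = [
    ("R1: international orders never ship free", lambda o: not o["domestic"], "paid"),
    ("R2: domestic premium members ship free", lambda o: o["premium"], "free"),
    ("R3: domestic carts of $50 or more ship free", lambda o: o["total"] >= 50, "free"),
    ("R4: everything else is paid", lambda o: True, "paid"),
]


def decide(order):
    """Return (outcome, rule_name) so every decision carries its explanation."""
    for name, condition, outcome in RULES:
        if condition(order):
            return outcome, name
    raise AssertionError("unreachable: R4 always matches")


# One representative value per partition of the total: below $50 vs. at/above $50.
TOTALS = [49.99, 50.00]


def all_cases():
    """Yield all 2 x 2 x 2 = 8 combinations with their expected outcome and rule."""
    for total, premium, domestic in product(TOTALS, [False, True], [False, True]):
        order = {"total": total, "premium": premium, "domestic": domestic}
        outcome, rule = decide(order)
        yield order, outcome, rule


if __name__ == "__main__":
    for order, outcome, rule in all_cases():
        print(order, "->", outcome, "|", rule)
```

Each printed line is both a test input and its oracle, because the expected outcome comes with the rule that produced it.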

Read: Generative AI in Software Testing.

Symbolic AI vs. Rule-Based: Same Thing?

Symbolic AI and rule-based systems overlap, but they aren’t the same thing. Rule-based systems are one slice of Symbolic AI, while Symbolic AI also includes logic, constraints, knowledge representations, and formal verification that go beyond simple if-else logic.

| Rule-based systems | Symbolic AI |
|---|---|
| Built mainly on explicit if-then / production rules | Umbrella for rules + logic + constraints + knowledge + formal methods |
| Often executes rules via forward/backward chaining | Can prove, infer, optimize, and verify using multiple reasoning paradigms |
| Typically answers: “Which rule fires next?” | Can answer: “What must be true?”, “Is this state reachable?”, “Is this impossible?” |
| Harder to scale cleanly to large rule sets without conflicts | Designed to handle hundreds/thousands of constraints and relationships systematically |
| Limited inference: mostly what you explicitly encoded | Strong inference: can derive consequences you didn’t write as direct rules |
| Testing support is usually manual (pick inputs, check outcomes) | Can generate tests from constraints and explain failures with logical traces |
| Explanations often: “Rule X matched” | Explanations can include proof steps, counterexamples, and a minimal unsatisfiable core (MUC) |
| Best for stable decision logic (eligibility, routing, policies) | Best for behavior spaces: correctness under constraints, safety, reachability, consistency |

Read: Prompt Engineering in QA and Software Testing.

Why Symbolic AI Matters in Testing

Symbolic AI belongs in testing because so much “correct behavior” in real software is, by definition, specified by rules, permissions, workflows, pricing, policies, and state machines. When expectations are rule-based, symbolic reasoning can check edge cases, find contradictions, and describe failures in a precise and auditable way.

Rules Drive Behavior

Even modern AI-heavy products have a ton of symbolic logic in them, like permissions and access control, workflows and approvals, validations and compliance checks, plus all the pricing/tax/discount math. You also see it in routing decisions, feature and policy flags, and enforcement across security, privacy, and governance.

On top of that, many core flows (orders, tickets, onboarding, refunds) are state machines in disguise, and there are data integrity constraints that must always hold. When “correct behavior” is rule-defined like this, Symbolic AI is a natural fit because it can reason about the rules, detect conflicts, and explain outcomes in plain terms.

Symbolic AI Properties That Strengthen Testing

- Determinism: With the same inputs and rules, you always get the same outcome, making tests stable instead of flaky.

- Explainability: You can trace the exact rule path that produced a result, so failures come with a clear “why.”

- Auditability: Every decision can be logged as applied rules and evidence, which supports compliance and traceability.

- Precision: Results either satisfy the constraints or they don’t, which reduces the “mostly correct” behavior that slips through assertions.

- Coverage reasoning: You can systematically spot untested branches, combinations, and boundary conditions in the rule space.

- Contradiction detection: Conflicting requirements can be surfaced early, before they become bugs in code or tests.

Read: Top 10 Generative AI-Based Software Testing Tools.

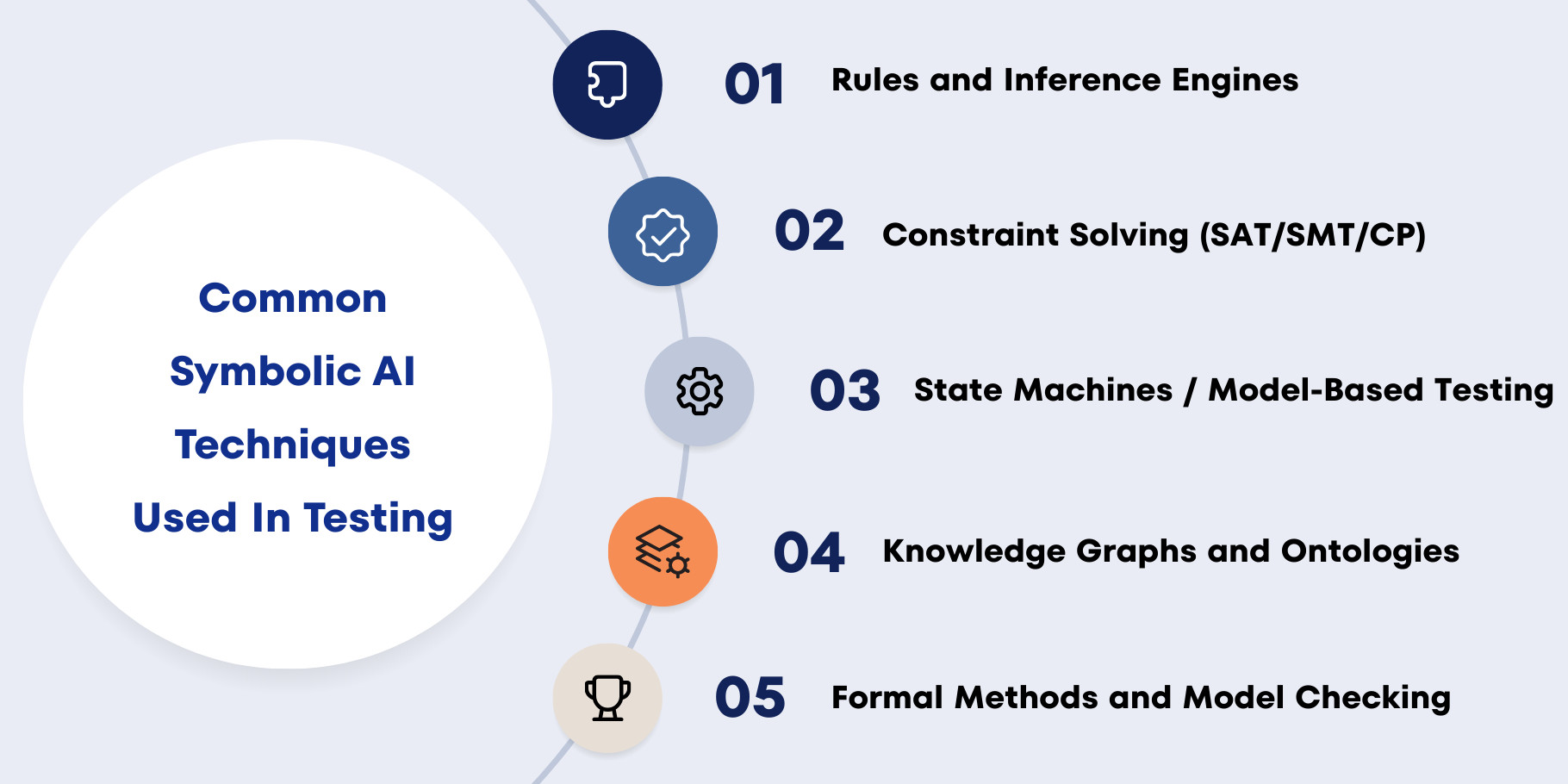

Common Symbolic AI Techniques Used in Testing

Let’s go through the main symbolic techniques and translate them into testing applications.

Rules and Inference Engines

How to Apply in Testing:

- Compute expected outcomes for complex business logic

- Validate workflows like eligibility, approvals, and routing

- Generate scalable decision-table style tests from rule sets

Example: A rules engine can calculate the expected discount for each cart/user category on demand. Your automated test asserts against the engine’s results rather than hard-coding numbers, reducing brittle expectations.
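To make the idea concrete, here is a hedged sketch of a tiny forward-chaining engine used as a test oracle. The rules, fact names such as `member_3y`, and the "highest discount wins" resolution are hypothetical; production engines (Drools or CLIPS, for example) provide far richer rule languages and conflict-resolution strategies.

```python
# Minimal forward-chaining engine: a rule fires when all of its premises are
# known facts, adding its conclusion; passes repeat until nothing new is derived.
def forward_chain(facts, rules):
    known = set(facts)
    trace = []  # (premises, conclusion) in the order the rules fired
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                trace.append((sorted(premises), conclusion))
                changed = True
    return known, trace


# Hypothetical discount rules: (premises, conclusion).
RULES = [
    (frozenset({"member_3y"}), "loyal"),
    (frozenset({"cart_100"}), "discount_5"),
    (frozenset({"loyal", "cart_100"}), "discount_15"),
    (frozenset({"loyal", "student"}), "discount_10"),
]


def base_facts(years, cart_total, student=False):
    """Translate raw inputs into the symbolic facts the rules talk about."""
    facts = set()
    if years >= 3:
        facts.add("member_3y")
    if cart_total >= 100:
        facts.add("cart_100")
    if student:
        facts.add("student")
    return facts


def expected_discount(years, cart_total, student=False):
    """Oracle: highest derived discount percentage (assumed policy), plus the trace."""
    known, trace = forward_chain(base_facts(years, cart_total, student), RULES)
    rates = [int(f.split("_")[1]) for f in known if f.startswith("discount_")]
    return max(rates, default=0), trace
```

A test would assert that the application's discount for a given input equals `expected_discount(...)[0]`, and print the trace when they differ, so the failure message names the rules that fired.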

Constraint Solving (SAT/SMT/CP)

How to Apply in Testing:

- Generate input data that satisfies complex validation rules

- Generate boundary and edge-case sets systematically

- Find minimal failing combinations (shrinking)

- Explore combinations intelligently without brute force

Example: Model a loan form with constraints on age, income, debt-to-income ratio (DTI), and conditional requirements for self-employment or high loan amounts. Then ask the solver for valid cases, targeted invalid cases per constraint, and a minimal set that covers key logical branches.
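The sketch below shows the shape of that workflow under simplifying assumptions. The constraints, field names, and value domains are hypothetical, and a brute-force search over small finite domains stands in for a real SAT/SMT/CP solver such as Z3, which would reason symbolically instead of enumerating.

```python
from itertools import product

# Hypothetical finite domains; boundary values (17/18, 70/71) are included on purpose.
DOMAINS = {
    "age": [17, 18, 45, 70, 71],
    "income": [0, 30_000, 120_000],
    "debt": [0, 15_000, 60_000],
    "self_employed": [False, True],
    "tax_returns": [0, 2],
    "amount": [10_000, 500_000],
    "collateral": [False, True],
}

# Hypothetical loan-form constraints, each a predicate over one candidate form.
CONSTRAINTS = {
    "adult_age": lambda c: 18 <= c["age"] <= 70,
    "has_income": lambda c: c["income"] > 0,
    "dti_limit": lambda c: c["debt"] <= 0.4 * c["income"],
    "self_employed_docs": lambda c: not c["self_employed"] or c["tax_returns"] >= 2,
    "large_loan_collateral": lambda c: c["amount"] <= 250_000 or c["collateral"],
}


def candidates():
    """Generate-and-test stand-in for a solver: every combination of domain values."""
    names = list(DOMAINS)
    for values in product(*(DOMAINS[n] for n in names)):
        yield dict(zip(names, values))


def find_valid():
    """First assignment that satisfies every constraint, or None."""
    return next((c for c in candidates() if all(f(c) for f in CONSTRAINTS.values())), None)


def find_invalid(target):
    """Assignment that violates exactly `target` and satisfies all other constraints."""
    for c in candidates():
        if not CONSTRAINTS[target](c) and all(
            f(c) for name, f in CONSTRAINTS.items() if name != target
        ):
            return c
    return None
```

Because `find_invalid` returns a case that breaks exactly one rule, a rejected form can be attributed to a single constraint. If it returns `None`, the target constraint cannot be violated independently, which is itself worth reviewing.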

State Machines / Model-Based Testing

How to Apply in Testing:

- Verify workflow correctness end-to-end

- Generate multi-step sequences (not just single actions)

- Detect unreachable states or missing transitions

- Validate ordering and concurrency rules

Example: For an order flow, the model can enforce “no shipping before payment” and constrain when refunds or cancellations are valid. It can generate sequences that hit rare edge paths, like cancel-after-ship leading into a returns workflow.
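A minimal sketch of that order model, assuming a simple dictionary of transitions. The states and action names are hypothetical; dedicated model-based testing tools add guards, data, and coverage criteria on top of this idea.

```python
from collections import deque

# Hypothetical order lifecycle: state -> {action: next_state}.
TRANSITIONS = {
    "created": {"pay": "paid", "cancel": "cancelled"},
    "paid": {"ship": "shipped", "refund": "refunded"},
    "shipped": {"deliver": "delivered", "cancel": "return_requested"},
    "delivered": {"return": "return_requested"},
    "return_requested": {"refund": "refunded"},
    "refunded": {},
    "cancelled": {},
    "archived": {},  # deliberately unreachable, to show gap detection
}


def sequences(start="created", max_len=4):
    """Breadth-first enumeration of multi-step action sequences up to max_len."""
    queue = deque([(start, [])])
    while queue:
        state, path = queue.popleft()
        if path:
            yield path, state
        if len(path) < max_len:
            for action, nxt in TRANSITIONS[state].items():
                queue.append((nxt, path + [action]))


def reachable(start="created"):
    """All states reachable from start; anything else is dead or missing a transition."""
    seen, stack = {start}, [start]
    while stack:
        for nxt in TRANSITIONS[stack.pop()].values():
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


def ship_before_pay(path):
    """Property check: True if 'ship' appears without a preceding 'pay'."""
    return "ship" in path and ("pay" not in path or path.index("ship") < path.index("pay"))
```

Each enumerated path (for example `pay, ship, cancel, refund`) is a multi-step test, and `archived` shows up as unreachable, which points to a missing transition or a dead state.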

Knowledge Graphs and Ontologies

How to Apply in Testing:

- Verify access control and permissions at scale

- Detect inconsistent entitlement definitions

- Generate tests from role-feature-permission matrices

- Run an impact analysis to see which tests a change affects

Example: If “Manager” inherits “Employee” permissions plus approvals access, the graph can compute the full effective permission set automatically. Tests can validate those derived permissions without duplicating inheritance logic across dozens of test cases.
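Here is a hedged sketch of that derivation, with a plain dictionary standing in for the knowledge graph. The role names, permission names, and the "union of inherited grants" semantics are assumptions for illustration.

```python
# Hypothetical role graph: each role lists its parent roles and the permissions
# it grants directly. A real system might store this in a knowledge graph or
# an ontology; a dict keeps the sketch self-contained.
ROLES = {
    "Employee": {"inherits": [], "grants": {"view_profile", "submit_expense"}},
    "Manager": {"inherits": ["Employee"], "grants": {"approve_expense"}},
    "Director": {"inherits": ["Manager"], "grants": {"approve_budget"}},
    "Auditor": {"inherits": ["Employee"], "grants": {"view_all_expenses"}},
}


def effective_permissions(role, path=frozenset()):
    """Direct grants plus everything inherited, followed transitively."""
    if role in path:
        raise ValueError(f"inheritance cycle through {role}")
    perms = set(ROLES[role]["grants"])
    for parent in ROLES[role]["inherits"]:
        perms |= effective_permissions(parent, path | {role})
    return perms


def permission_matrix():
    """Role x permission table of expected allow (True) / deny (False) results."""
    all_perms = sorted(set().union(*(r["grants"] for r in ROLES.values())))
    return {role: {p: p in effective_permissions(role) for p in all_perms} for role in ROLES}
```

Each cell of `permission_matrix()` becomes one expected result, so tests check derived permissions without re-implementing inheritance, and an inheritance cycle is reported instead of looping forever.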

Formal Methods and Model Checking

How to Apply in Testing:

- Verify safety-critical workflows and invariants

- Validate authentication/session protocols

- Check concurrency properties (deadlocks, ordering hazards)

- Prove “never” and “always” statements about the system

Example: You can assert properties like “an authenticated user must always have a valid session” or “every submitted ticket eventually reaches resolved/closed.” A model checker either proves the property in the modeled space or returns a concrete counterexample trace you can turn into a regression test.
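A minimal sketch of explicit-state model checking for the session property, assuming a toy model where a state is the pair (authenticated, session_valid). The model and its deliberately buggy `expire` action are hypothetical. Real model checkers such as TLA+'s TLC, SPIN, or NuSMV handle much larger state spaces and also liveness properties like "eventually reaches closed", which this breadth-first search for safety violations does not cover.

```python
from collections import deque

INITIAL = (False, False)  # (authenticated, session_valid)


def actions(state):
    """Hypothetical session model. Bug: 'expire' leaves the user authenticated."""
    auth, valid = state
    yield "login", (True, True)
    if auth:
        yield "logout", (False, False)
    if valid:
        yield "expire", (auth, False)


def invariant(state):
    """Safety property: an authenticated user always has a valid session."""
    auth, valid = state
    return not auth or valid


def check(actions_fn=actions, initial=INITIAL):
    """BFS over reachable states. Returns the shortest counterexample trace
    (list of actions) or None if the invariant holds in every reachable state."""
    parent = {initial: None}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            trace = []
            while parent[state] is not None:
                state, action = parent[state]
                trace.append(action)
            return list(reversed(trace))
        for action, nxt in actions_fn(state):
            if nxt not in parent:
                parent[nxt] = (state, action)
                queue.append(nxt)
    return None
```

Here `check()` returns `['login', 'expire']`, a shortest counterexample trace that can be replayed directly as a regression test.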

Use Cases of Symbolic AI in Testing

In practice, teams use Symbolic AI wherever behavior is rule-defined and the test space is too large to cover manually. It turns fuzzy requirements into executable models that can generate tests, compute expected results, and explain failures with clear, auditable logic.

Test Case Generation from Business Rules

When business rules keep growing (discounts, eligibility, exceptions), manual suites inevitably miss interactions and edge cases. A symbolic model can generate a minimal set of tests that covers every rule, override, and boundary, without enumerating every combination of conditions. This gives you scalable coverage that stays aligned with the actual policy logic.
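A hedged sketch of rule-coverage test selection, assuming a first-match-wins discount policy with hypothetical rule names. It keeps one test per rule that can fire and reports rules that never fire, which is how a shadowed (dead) rule surfaces as a requirements gap.

```python
from itertools import product

# Hypothetical discount policy, evaluated first-match-wins.
RULES = [
    ("employee_flat_20", lambda c: c["employee"]),
    ("vip_big_cart_15", lambda c: c["vip"] and c["total"] >= 200),
    ("big_cart_10", lambda c: c["total"] >= 200),
    ("vip_5", lambda c: c["vip"]),
    ("vip_employee_25", lambda c: c["vip"] and c["employee"]),  # shadowed by rule 1
    ("no_discount", lambda c: True),
]

# One representative value per partition, including the $200 boundary.
PARTITIONS = {"employee": [False, True], "vip": [False, True], "total": [199.99, 200.00]}


def fired_rule(case):
    """Name of the first rule whose condition matches the case."""
    return next(name for name, cond in RULES if cond(case))


def rule_covering_suite():
    """Keep the first case that fires each rule; list rules no case can fire."""
    suite = {}
    names = list(PARTITIONS)
    for values in product(*(PARTITIONS[n] for n in names)):
        case = dict(zip(names, values))
        suite.setdefault(fired_rule(case), case)
    dead = [name for name, _ in RULES if name not in suite]
    return suite, dead
```

With these partitions, five tests cover every reachable rule instead of all eight combinations, and `vip_employee_25` is reported as dead because the employee rule always matches first.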

Read: Creating Your First Codeless Test Cases.

Oracle Generation (Computing Expected Results)

The “oracle problem” is brutal when outputs depend on many rules (tax, pricing, entitlements, compliance), because hard-coded expected values become fragile and outdated. A symbolic oracle computes expected results from the same rule definitions, so tests compare system output vs model output. When rules change, you update the model once, and the whole suite stays consistent.
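A minimal sketch of a symbolic oracle, assuming tax rules kept as a lookup table for two fictional regions (the rates are illustrative, not real tax law). `system_total` is a hypothetical stand-in for the application under test, with a planted bug so the comparison has something to find.

```python
from decimal import Decimal, ROUND_HALF_UP
from itertools import product

CENT = Decimal("0.01")

# Hypothetical tax rules, kept as data so tests never hard-code expected totals.
TAX_RATES = {
    ("REGION_A", "goods"): Decimal("0.0725"),
    ("REGION_A", "food"): Decimal("0"),
    ("REGION_B", "goods"): Decimal("0.05"),
    ("REGION_B", "food"): Decimal("0"),
}


def oracle_total(region, category, price):
    """Expected total computed from the rule table, plus the rule used."""
    rate = TAX_RATES[(region, category)]
    total = (price * (1 + rate)).quantize(CENT, rounding=ROUND_HALF_UP)
    return total, f"rate {rate} for {region}/{category}"


def system_total(region, category, price):
    """Stand-in for the system under test, with a deliberate bug:
    it ignores the food exemption and always applies the goods rate."""
    rate = TAX_RATES[(region, "goods")]
    return (price * (1 + rate)).quantize(CENT, rounding=ROUND_HALF_UP)


def compare(prices=(Decimal("0.99"), Decimal("100.00"))):
    """Run every (rule, price) combination and collect explained mismatches."""
    mismatches = []
    for (region, category), price in product(TAX_RATES, prices):
        expected, why = oracle_total(region, category, price)
        actual = system_total(region, category, price)
        if actual != expected:
            mismatches.append((region, category, price, expected, actual, why))
    return mismatches
```

When a rate changes, only `TAX_RATES` is edited; every comparison picks up the new expectation automatically.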

Security and Authorization Testing

Authorization is rule-dense and high-stakes: RBAC/ABAC policies, inheritance, context constraints (time/location/device), and exceptions can create subtle privilege leaks. Symbolic reasoning can generate a thorough permission matrix and also detect contradictions, accidental allows, and potential escalation paths. The key advantage is explainability—every allow/deny is traceable to specific rules.
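A hedged sketch of policy evaluation and conflict detection, assuming a deny-overrides combining strategy (one of the standard combining algorithms in XACML, but an assumption here). The roles, attributes, and rule names are hypothetical.

```python
from itertools import product

# Hypothetical ABAC policy: (effect, rule name, condition over request attributes).
POLICY = [
    ("allow", "users_read_own", lambda r: r["action"] == "read" and r["own"]),
    ("allow", "admins_anything", lambda r: r["role"] == "admin"),
    ("deny", "no_delete_off_vpn", lambda r: r["action"] == "delete" and not r["vpn"]),
    ("allow", "support_read_any", lambda r: r["role"] == "support" and r["action"] == "read"),
]

SPACE = {"role": ["user", "support", "admin"], "action": ["read", "delete"],
         "own": [False, True], "vpn": [False, True]}


def decide(request):
    """Deny-overrides: any matching deny wins, else allow if any allow matches,
    else default deny. Returns (decision, names of matched rules)."""
    matched = [(eff, name) for eff, name, cond in POLICY if cond(request)]
    if any(eff == "deny" for eff, _ in matched):
        decision = "deny"
    elif matched:
        decision = "allow"
    else:
        decision = "deny"
    return decision, [name for _, name in matched]


def all_requests():
    """Every combination of attribute values in the modeled request space."""
    keys = list(SPACE)
    for values in product(*(SPACE[k] for k in keys)):
        yield dict(zip(keys, values))


def conflicts():
    """Requests matched by both an allow and a deny rule: candidates for review."""
    return [r for r in all_requests() if {e for e, _, c in POLICY if c(r)} == {"allow", "deny"}]


def counterexamples(must_hold):
    """Every request whose decision violates a stated security property."""
    return [r for r in all_requests() if not must_hold(r, decide(r)[0])]
```

`conflicts()` surfaces the two admin delete requests made off VPN, and `counterexamples` checks a property such as "non-admins can never delete" against every request in the modeled space, returning concrete requests when it fails.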

Read: Security Testing.

Comparing Symbolic AI and ML

Symbolic AI and machine learning both bring intelligence into testing, but they work in fundamentally different ways. Understanding what each approach is good at and where it falls short helps you choose the right technique or blend them for more reliable, scalable test automation.

Read: Machine Learning to Predict Test Failures.

Source Of Intelligence: Rules Vs. Data

Symbolic AI encodes knowledge explicitly as rules and constraints, so it works well even when you have little or no historical data. ML learns from examples, so it can generalize without hand-written rules but needs training data and continuous evaluation. In testing, rule-defined behavior favors symbolic, while fuzzy or pattern-defined behavior favors ML.

Explainability and Traceability

Symbolic AI is usually easy to explain because outcomes can be traced to specific rules, constraints, and inference steps. ML explanations are often probabilistic and approximate, especially with deep models, making “why” harder to pin down. If you need audit trails, requirement traceability, or compliance evidence, Symbolic tends to fit better.

Determinism and Stability

Symbolic systems are deterministic given the same inputs and rule set, so failures are reproducible and easier to debug. ML outputs can shift with retraining, data drift, sampling variation, or model updates, and predictions are inherently probabilistic. For automation stability and reduced flakiness, symbolic approaches are typically safer.

Coverage and Completeness

With symbolic models, you can reason about logical completeness: untested rules, unreachable states, missing transitions, and contradictory constraints. With ML, coverage usually means dataset diversity rather than guaranteed logical branch coverage, and confidence scores do not imply correctness. For verification-style testing where you must prove behavior, Symbolic has a strong advantage.

Handling Ambiguity and Fuzzy Correctness

Symbolic AI struggles when correctness is subjective or noisy, such as visual “looks right,” changing UIs, natural language tone, or OCR-like variability. ML excels when patterns are hard to formalize, you can learn from examples, and approximate correctness is acceptable. That makes ML strong for visual anomaly detection, failure clustering, flaky-test prediction, and natural language-based classification.

Effort Tradeoffs

Symbolic AI tends to have an upfront modeling cost and needs updates as rules evolve, but it pays back with stability and transparency. ML shifts effort to data collection, labeling, training, and monitoring, which can scale well in messy domains but requires ongoing governance. In practice, both require maintenance: symbolic maintains rules, ML maintains data and models.

Symbolic AI vs. ML in Testing: Where Each Dominates

| Where ML dominates | Where Symbolic AI dominates |
|---|---|
| Visual testing and UI perception: judge layout breakage, chart rendering, alignment, visibility | Business logic correctness: discounts, eligibility, pricing, tax, compliance rules, workflows |
| Log anomaly detection: spot unusual patterns in logs, metrics, traces, and detect new failure modes early | Policy enforcement and access control: RBAC/ABAC, entitlements, rule overrides, exceptions |
| Failure clustering and triage: group similar failures, identify common root causes, and suggest likely components | Complex validation and configuration: constraints, compatibility matrices, feature flags, option rules |
| Predictive quality analytics: predict risky builds, likely test failures, and defect likelihood from change patterns | System state correctness over time: state machines, protocol correctness, lifecycle, and transition rules |
| Pattern learning in messy domains: handle ambiguity where “good enough” classification is acceptable | Compliance and audit trails: prove correctness with traceable, explainable decisions rather than probabilities |

Hybrid Approaches: Symbolic + ML in Modern Testing

Hybrid testing works best when you let ML do what it’s good at (perception and pattern spotting) and let symbolic reasoning do what it’s good at (deterministic decisions and proofs). For instance, ML can detect UI elements, visual discrepancies, or suspicious outliers, while symbolic rules determine whether those changes are actually allowed by the requirements. This keeps the system useful on messy real-world signals without giving up the ability to justify and audit decisions.

Another strong pattern is using ML to suggest rules and symbolic models to enforce them as a reliable oracle. Symbolic solvers can also generate a large pool of valid and invalid tests, and ML can prioritize which ones to run first based on change risk and historical failures. Finally, symbolic invariants can define “must never happen” safety properties while ML monitors telemetry for “weird” behavior, giving both rigor and early warning.
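A minimal sketch of the invariant-plus-monitoring pattern, assuming a hypothetical refund event schema. The invariant is the symbolic part; a simple z-score on latency stands in for a trained ML anomaly model and is only a statistical heuristic, not ML.

```python
from statistics import mean, stdev


def invariant_ok(event):
    """Symbolic 'must never happen' rule (hypothetical): a refund may never exceed the charge."""
    return event["refund"] <= event["charge"]


def anomaly_flags(latencies, baseline, k=3.0):
    """Statistical stand-in for an ML model: flag values more than k standard
    deviations away from the mean of a baseline window."""
    mu, sigma = mean(baseline), stdev(baseline)
    return [abs(x - mu) > k * sigma for x in latencies]


def triage(events, baseline):
    """Invariant violations are definite failures; anomalies are early warnings."""
    flags = anomaly_flags([e["latency_ms"] for e in events], baseline)
    report = []
    for event, odd in zip(events, flags):
        if not invariant_ok(event):
            report.append((event["id"], "FAIL", "refund exceeds charge"))
        elif odd:
            report.append((event["id"], "WARN", "unusual latency"))
    return report
```

The split matters: a `FAIL` is backed by a rule and is always reproducible, while a `WARN` only says something looks unusual and needs investigation.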

Conclusion

Symbolic AI brings hard correctness back into testing by turning fuzzy expectations into explicit rules, constraints, and models that can be reasoned about deterministically. That makes it uniquely valuable for generating thorough tests, computing reliable expected outcomes, and explaining failures with traceable logic instead of probabilities. In modern QA, the most effective approach is often hybrid: let ML handle messy perception and pattern detection while symbolic reasoning enforces what must be true and proves when it is not.
