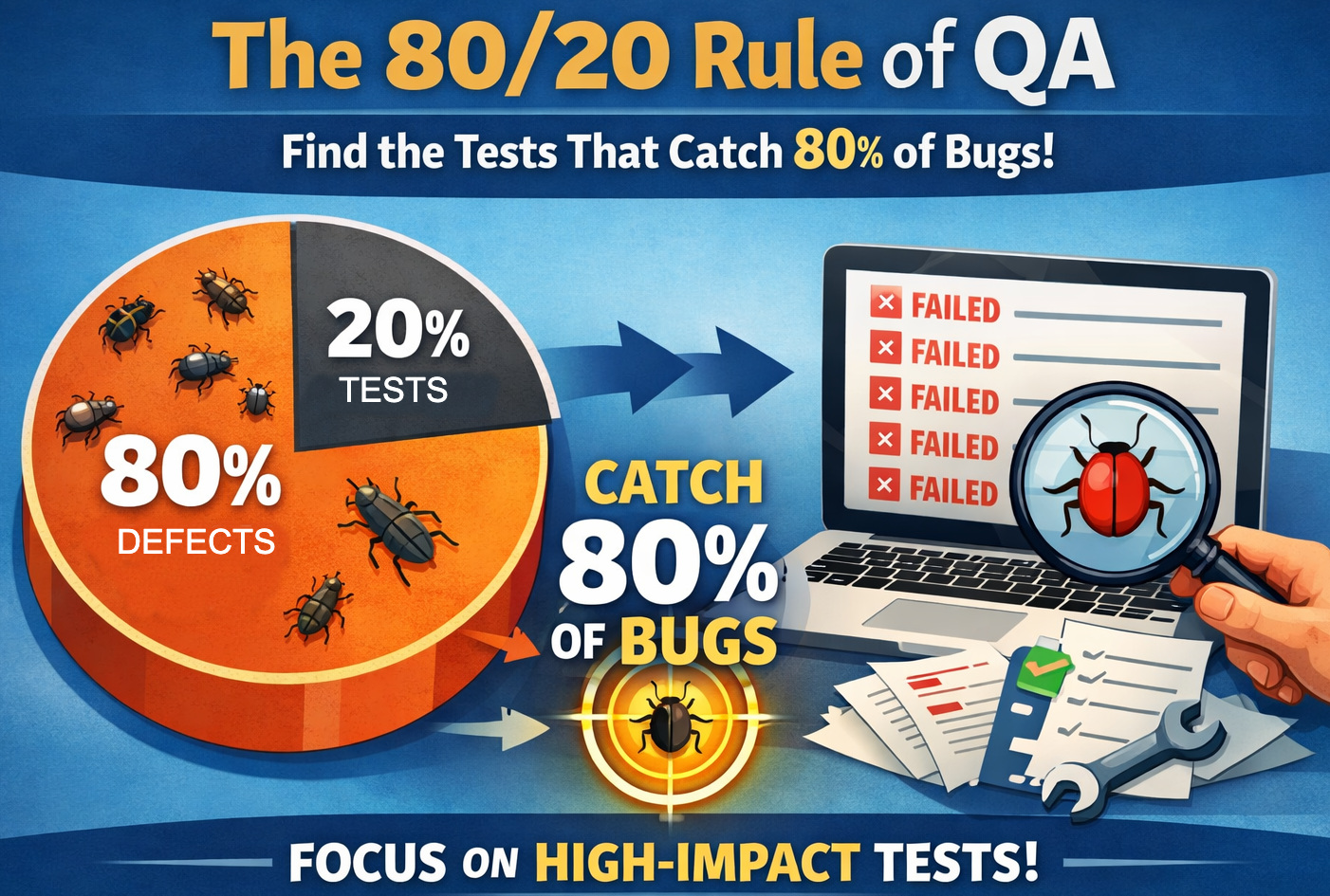

The 80/20 Rule of QA: Find the Tests That Catch 80% of Bugs

|

|

In software testing, there is a hard truth that isn’t often discussed: the value provided by tests is very uneven. A small minority of tests always catch important defects early, and a much larger test set quietly saps time and energy without any meaningful return.

Over time, test suites tend to become bloated and inefficient, leading quality assurance teams to reconsider the definition of effective testing.

The 80/20 rule in software testing provides a powerful lens with which to tackle this imbalance, directing attention toward the handful of tests that yield the greatest results. Teams can optimize their execution, minimize maintenance costs, and speed up releases by figuring out which 20% of tests find 80% of defects.

Such a focused methodology seamlessly empowers a more efficient AI-centric development environment that encourages sharper, data-backed testing initiatives.

| Key Takeaways: |

|---|

|

What is the 80/20 Rule in Software Testing

The 80/20 Rule (also known as the Pareto Principle) explains a critical imbalance in outputs, which is remarkably valid for QA. In software testing, it implies that a tiny fraction of inputs, like tests or modules or features, account for most output, especially negatives like defects and failures.

It means that a narrow set of modules usually creates the majority of bugs for QA teams, while a handful of features consistently bring up almost all production issues. The same applies to the test cases; a tiny number of them are responsible for unearthing most failures.

It is important to understand that this is not a rigid formula but a recurring pattern observed across projects and systems. The actual ratio may vary, but the imbalance consistently exists, making it a valuable lens for optimizing testing efforts.

Read: 7 Most Common Engineering Bugs and How to Avoid Them.

Why This Happens

- Risk Concentration: Complex components like payment processing, authentication, and data transformations involve intricate logic, making them more error-prone. These areas often become hotspots for defects due to their criticality and interconnected nature.

- Change Frequency: Modules that are updated frequently carry a higher risk of regression and unintended side effects. Continuous changes increase the probability of introducing new bugs, even in previously stable areas.

- User Impact: Features that are heavily used by users tend to surface more defects simply due to higher interaction volume. Increased exposure leads to quicker discovery of issues compared to rarely used functionalities.

- Test Redundancy: Over time, test suites accumulate duplicate or overlapping tests that validate the same behavior. These redundant tests add execution and maintenance overhead without significantly improving defect detection.

Read: Risk-based Testing: A Strategic Approach to QA.

The Problem with Traditional Test Suites

Let us have a look at the issues we usually face with traditional test suites.

Losing Efficiency Over Time

Traditional test suites have a tendency to bloat and can become less efficient over time, as tests are added without consideration for removing other tests (i.e., they may not be cut when they would no longer be useful). Though they look complete at first glance, they usually don’t lead to either defect detection or maximization of testing value. It gives a false sense of security but adds to execution and maintenance effort.

Symptoms of Inefficient Test Suites

You are trained to verify that many teams generate thousands of test cases, but they fail rarely, showing low effectiveness in capturing real problems. Long run times slow feedback loops, making it difficult to enable rapid releases. Moreover, flaky tests add noise, and the suite can still miss important defects despite high coverage.

The Illusion of Coverage

Often, indicators of quality, such as code coverage, number, and frequency of tests, are wrongly used. If all vertices are covered, then coverage is high, but this does not guarantee that the important scenarios will be tested (if we focus on the most predictable paths). So there is a failure to thoroughly test edge cases and real-world user behavior, resulting in critical bugs that make it into production.

Read: Code Coverage vs. Test Coverage.

Defining High-Value Tests

Before we can identify the most critical 20%, we first need to have a clear understanding of “value” as it relates to testing. Each test is not equally beneficial in terms of discovering defects, decreasing risk, or instilling user confidence. High-value tests are those that will consistently give useful feedback, reveal important problems that must be addressed, and have a cost-benefit ratio worthwhile of this maintenance effort.

Characteristics of High-impact Tests

- Catch Critical Bugs: High-value tests focus on detecting defects that impact core functionality and directly affect users. They also help prevent issues that could lead to revenue loss or significant business disruption.

- Cover High-risk Areas: These tests are designed to target parts of the system that are inherently complex or frequently modified. They also prioritize integration points where multiple components interact, and failures are more likely.

- Fail Meaningfully: When high-value tests fail, they clearly indicate genuine issues rather than false positives. They provide actionable insights, helping teams quickly identify and resolve the root cause.

- Represent Real User Behavior: Such tests simulate realistic end-to-end workflows that users actually perform. They emphasize business-critical scenarios to ensure the system behaves correctly in real-world conditions.

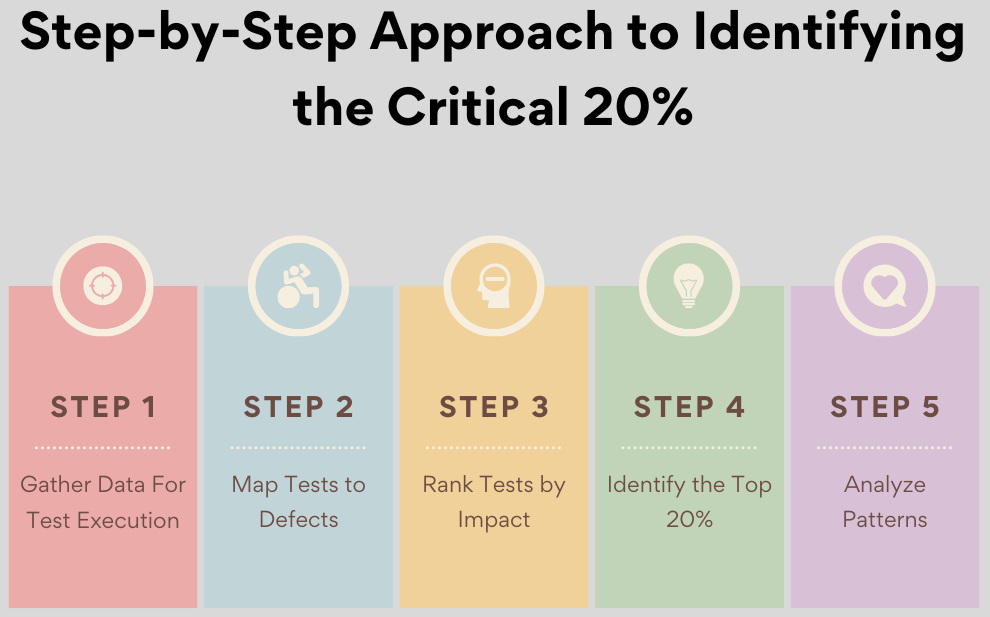

Step-by-Step Approach to Identifying the Critical 20%

To discover the most valuable 20% of tests, there is no getting around it; you will have to build a methodical data-driven effort rather than rely on intuition. It requires looking at the real impact a given test has on catching defects, business risk, and maintenance cost. But employing a pragmatic-based model, QA can decouple the true high-value tests from the sea of low-impact or redundant ones.

Step 1: Gather Data For Test Execution

First and foremost, collect historical data like pass/fail results (with failure frequency), linked defects, runtime, and flakiness metrics. This can help understand test behavior over time. You might discover, for instance, that a payment test fails 18 times in one month and is associated with 10 defects, while a UI color validation test does not fail ever and adds (to your best guess) zero value.

Step 2: Map Tests to Defects

Provide clear traceability between test cases and the defects they discover, so you can correlate them to production incidents if applicable. A “Cart Checkout” test associated with 22 critical bugs, for instance, is significantly more impactful than a “Profile Update” test that’s turned up only two low-severity issues.

Step 3: Rank Tests by Impact

It’s important to assign some degree of test impact score based on criteria like frequency of bug detection, bug severity, business impact, and trust level for maximum gain. A test that detects 10 high-severity defects with low flakiness will score much better than a test that detects minor problems inconsistently.

Step 4: Identify the Top 20%

Sort the tests by impact score to see a small handful of tests that consistently provide the most value. In other words, you could find that out of 500 tests, only 80 are catching 85% of the total critical defects.

Step 5: Analyze Patterns

Look at the most successful tests and see if you can derive common patterns based on test type, what modules are being tapped into, or systems interacted with. For instance, you might find that the majority of high-impact tests are end-to-end scenarios that focus on payment, authentication, and API integrations.

Techniques to Optimize Around the 20%

- Strengthen High-value Tests: Make the critical 20% even stronger by augmenting those with edge cases and additional test data for other scenarios. Second, favor them by running earlier and more often in CI/CD.

- Reduce Low-value Tests: Review the other tests (the remaining 80%) with a critical lens to spot overlap or limited influence. Eliminate duplicate tests, consolidate overlapping scenarios, and archive no longer referenced.

- Shift Left with High-impact Tests: Shift high-value tests left in the development lifecycle to identify defects earlier. For instance, execute them as part of pull request validation and get them into developer workflows for early feedback.

- Automate Intelligently: You can not automate everything; you need to balance the cost with the benefits. Which tests have a higher ROI? Automate scenarios that are frequent, risk-prone, and regress easily, and do so with utmost sincerity.

Role of AI in Identifying the 20%

- AI-driven Test Analytics: With AI-driven analytics, QA teams can handle huge volumes of test data and detect patterns that might not be easy to spot manually. It can identify recurring failure patterns, indicate modules with high instability, suggest deleting unpredictable areas, and predict troublesome modules based on their historical behavior. It would help the teams make better decisions on where to direct their testing efforts.

- Intelligent Test Selection: AI can, therefore, intelligently decide which of the test cases in a given test suite need to be run by looking at the changes made in the code recently, instead of scanning through all available tests. It will always run tests that are most likely to be affected, leading to its significantly reduced execution time whilst producing the same coverage as before. It allows shorter feedback loops without losing the ability to find defects.

Conclusion

The entire perspective on how QA teams should think about testing changes right when they come into work, as the 80/20 Rule, NOT by quantity, but by impact!

Rather than carrying all of their test suites around, teams can optimize their processes by finding the small number of really important tests to run. Using data, structured analysis, and AI-powered insights, organizations can systematically improve this 20% for relevance with ever-changing risks and business objectives.

In the end, this results in quick feedback loops, increased defect identification, and more valuable, efficient test approaches.

Frequently Asked Questions (FAQs)

- How do you convince stakeholders to reduce the number of test cases?

Use data to demonstrate inefficiency, highlight tests that never fail, have no defect linkage, or increase execution time without value. Showing ROI in terms of faster releases and better defect detection helps gain buy-in.

- Can focusing on only 20% of tests increase the risk of missing defects?

Yes, if done carelessly. The goal is not to ignore the remaining 80% entirely but to continuously evaluate, refine, and keep only those tests that add measurable value.

- Does the 80/20 rule apply to all types of testing (UI, API, performance, etc.)?

Yes, but differently. For example, in UI testing, a few end-to-end flows may catch most issues, while in API testing, core endpoints may carry the highest risk.

| Achieve More Than 90% Test Automation | |

| Step by Step Walkthroughs and Help | |

| 14 Day Free Trial, Cancel Anytime |