Testing Prompt Robustness Against User Variations

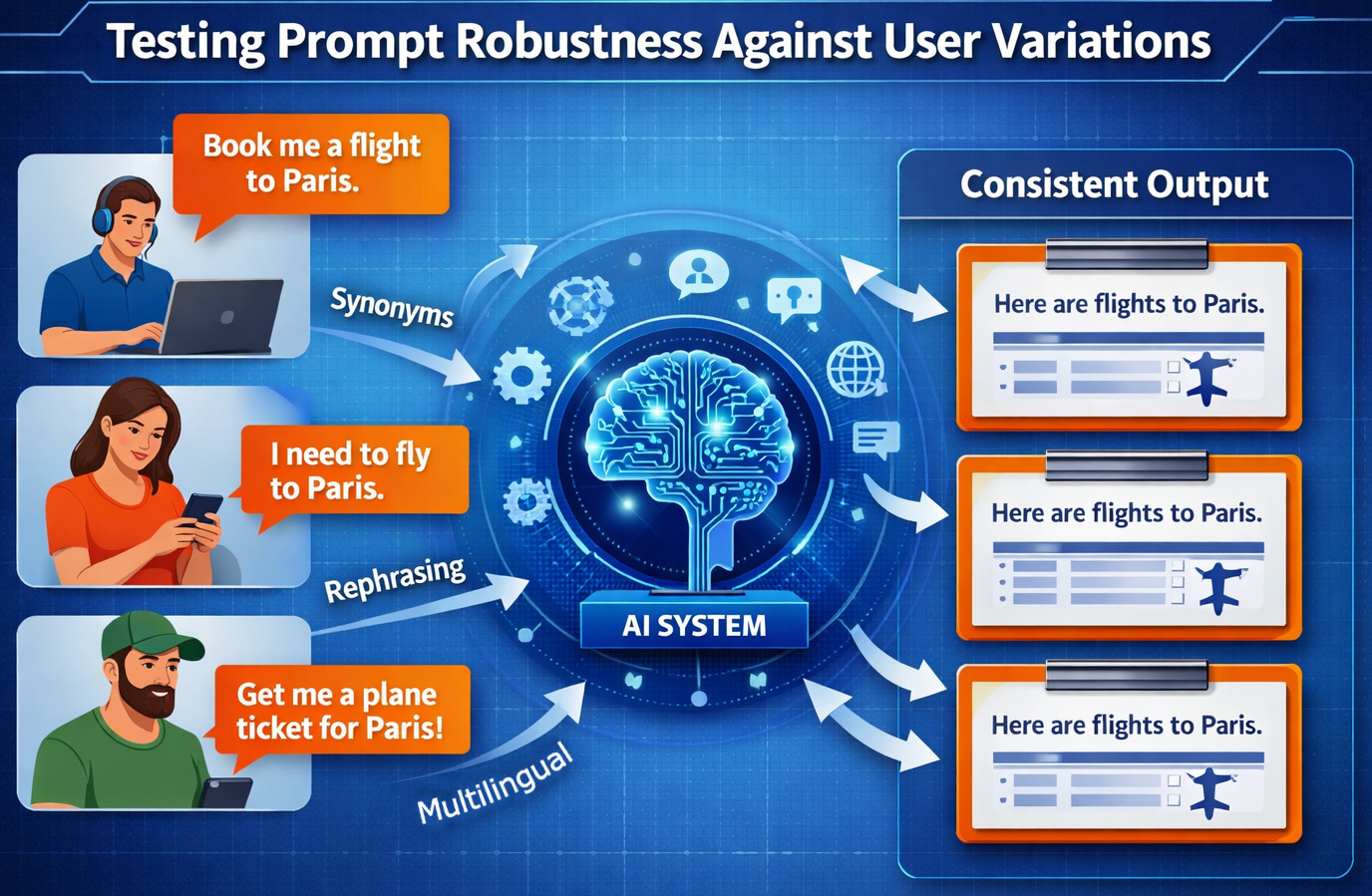

AI systems based on large language models (LLMs) are changing the way humans communicate with computers. Rather than clicking buttons and navigating complex menus, users now interact with applications through natural language prompts. This paradigm shift brings a new testing challenge for quality engineers: ensuring that AI systems behave reliably when users phrase their requests differently.

Traditional software interfaces constrain users to a predictable set of actions that are easy to measure, but prompt-based systems must handle the full variety of human language. Two users can ask the same question using completely different words, sentence structures, or tones. One user might say, “Show me my recent transactions,” while another writes, “Can you list the payments I made this week?” A third might type, “What have I spent money on recently?”

All three prompts ask for the same result but differ considerably in structure and vocabulary. If an AI system gives contradictory or incorrect responses across these variations, the user experience suffers. That is why prompt robustness testing has become an essential part of software testing for AI systems.

An AI system is robust to prompt variation when it returns equivalent responses to the same question phrased in slightly different ways. QA engineers and AI testers must assess prompt robustness to ensure that AI-powered applications stay reliable despite variations in human language.

What is Prompt Robustness?

Prompt robustness is the ability of an AI system to consistently understand a request and generate a correct response when the same prompt is phrased in different ways. This is what makes AI systems harder to test: unlike traditional software, they cannot restrict their input to a fixed format.

Traditional software testing focuses on validating systems with controlled inputs and rigid workflows, such as entering a username and a password into the fields of a login form. When the input format differs from what the system expects, it usually rejects the request, because it cannot interpret unstructured variations.

AI systems, on the other hand, need to comprehend meaning rather than match fixed patterns, allowing users to submit requests in many different forms. Prompt robustness testing verifies that the AI model recognizes these variations as expressing the same intent and produces consistent outputs regardless of changes in phrasing, tone, or vocabulary.

Consider a chatbot designed to answer travel-related questions. One user asks, “Find me flights from New York to London,” while others phrase the same request as:

- “Are there flights from NYC to London?”

- “I want to travel from New York to London.”

- “Any airlines flying between NY and London?”

- “Show me plane tickets to London from New York.”

Each variation uses different vocabulary, grammar, and tone. A robust AI system must understand all these prompts as equivalent requests.

Why Prompt Robustness Matters

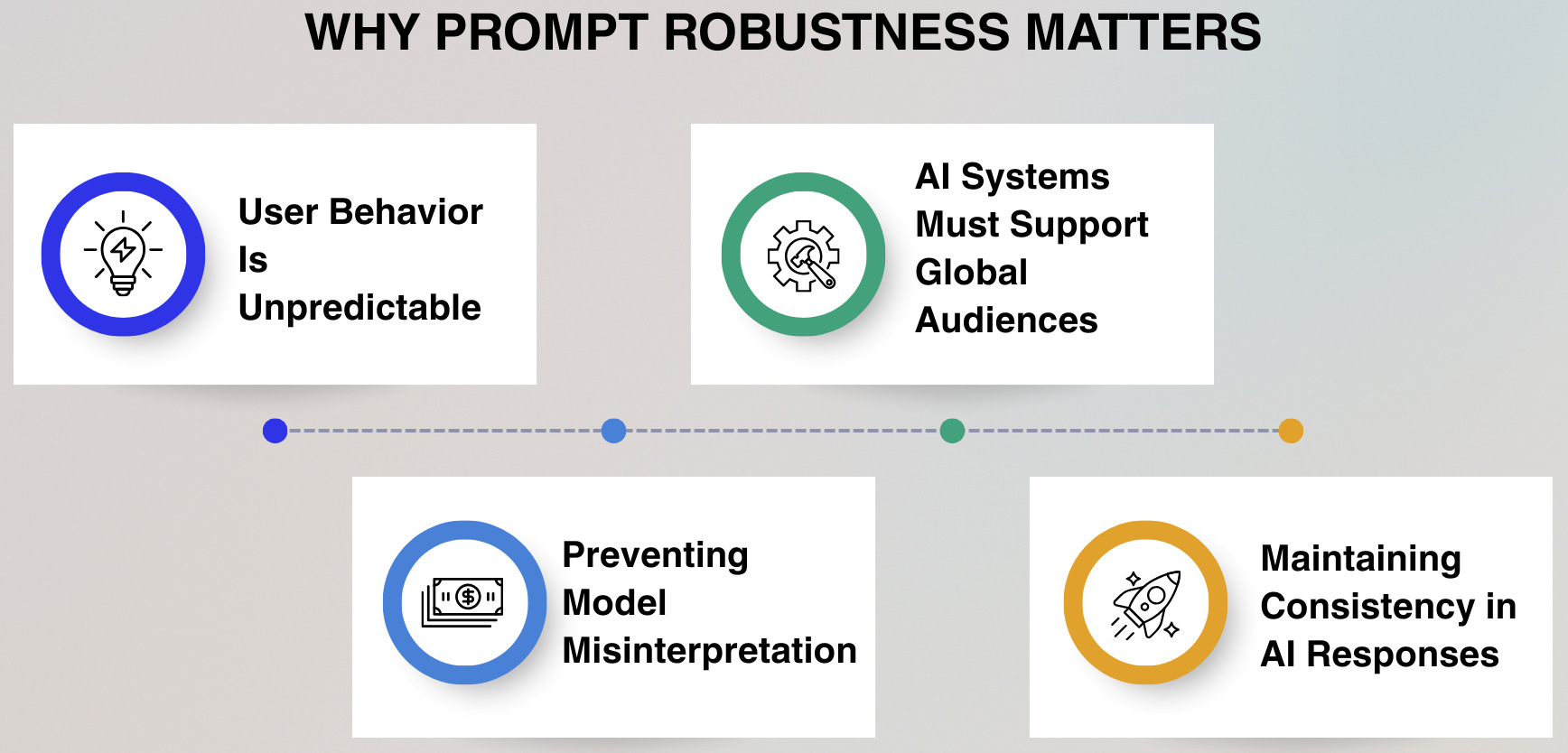

Prompt robustness is essential for reliable AI behavior in the hands of real users. When an AI model correctly interprets different ways of requesting the same thing, it is easier to use, earns users’ trust, and makes the application more effective.

User Behavior is Unpredictable

Users rarely interact with AI systems using carefully structured sentences or predefined scripts. Instead, they communicate naturally using slang, abbreviations, short phrases, or incomplete sentences, which introduces significant variability in how requests are expressed. Because of this unpredictability, AI systems must focus on understanding intent rather than relying on exact wording.

For example, a user requesting transportation might say “Book me a cab,” “Get me a ride,” or “Call a taxi.” Another person could simply type “Need an Uber,” which still reflects the same intent. A robust AI system should recognize all these variations as equivalent requests and provide the same relevant response.

AI Systems Must Support Global Audiences

AI-powered applications serve users across many countries, cultures, and languages, so sentence structure and phrasing vary significantly. Non-native speakers might use simplified grammar or unexpected word order, yet their meaning is still clear. A robust AI system must therefore interpret requests correctly even when the grammar is imperfect.

For example, a user could type “I want booking hotel tomorrow.” Even though the grammar is wrong, the intent (booking a hotel for tomorrow) is clear. A system with strong prompt robustness should still recognize the request and return appropriate hotel booking options.

Preventing Model Misinterpretation

A slight variation in a prompt can lead an AI system to generate radically different output. Language models are highly sensitive to wording and context, so minor tweaks can significantly change the depth or complexity of a response. Prompt robustness testing exposes such inconsistencies so they can be fixed.

For example, the prompt “Explain quantum computing simply” signals that the user wants a beginner-level explanation, whereas “Explain quantum computing” could yield a highly technical response. Well-built AI systems should remain clear and relevant even when the wording changes slightly.

Maintaining Consistency in AI Responses

Consistency is essential for building trust in AI-driven applications. If the system gives detailed and helpful answers for one prompt but produces irrelevant or shallow responses for a similar prompt, users may perceive the system as unreliable. Prompt robustness testing ensures that the quality of responses remains stable across different phrasing variations.

For example, a user might ask, “What are the benefits of cloud computing?” while another asks, “Why should companies use cloud computing?” Both questions seek similar information. A well-tested AI system should provide consistent and relevant explanations for both prompts rather than drastically different answers.

Types of User Prompt Variations

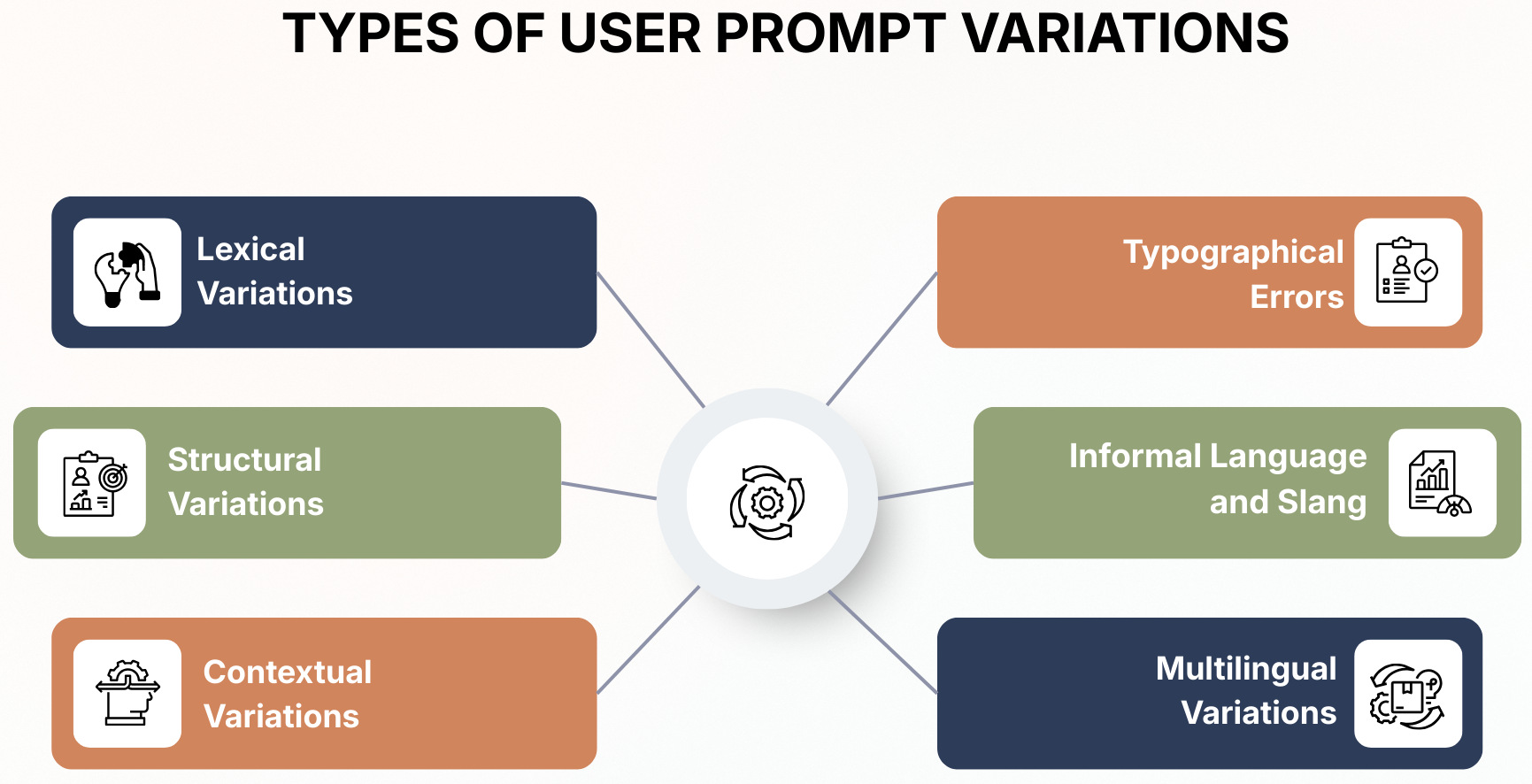

To test prompt robustness, we first need to identify the different language patterns users employ to express the same intent. Human communication is extremely flexible: a single request can take many forms, varying in vocabulary, structure, context, and cultural background. Understanding these variation types is critical for evaluating how well an AI system captures user intent.

Lexical Variations

Lexical variations replace words with synonyms or similar phrases while keeping the same meaning. A well-designed AI should understand that a request can be expressed with different wording.

- “Purchase a laptop”

- “Get a laptop”

- “Order a laptop”
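A simple way to produce lexical variations for a test suite is synonym substitution. The sketch below is a minimal example, assuming a hand-maintained synonym table; `SYNONYMS` and `lexical_variants` are hypothetical names used for illustration, not part of any specific testing tool.

```python
from itertools import product

# Hypothetical synonym table; in practice it might be curated by domain
# experts or drawn from a thesaurus. Keys are lowercase words.
SYNONYMS: dict[str, list[str]] = {
    "purchase": ["get", "order", "buy"],
}


def lexical_variants(prompt: str, synonyms: dict[str, list[str]]) -> list[str]:
    """Return every rewording obtained by swapping words for listed synonyms."""
    words = prompt.split()
    # Each word can stay as-is or be replaced by one of its synonyms.
    options = [[word] + synonyms.get(word.lower(), []) for word in words]
    variants = [" ".join(choice) for choice in product(*options)]
    # Exclude the unchanged original prompt.
    return [v for v in variants if v != prompt]


print(lexical_variants("Purchase a laptop", SYNONYMS))
# ['get a laptop', 'order a laptop', 'buy a laptop']
```

Because every combination is generated, the output grows multiplicatively with the number of words that have synonyms, which is one reason teams sample variations rather than test all of them.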

Structural Variations

Structural variations occur when users rearrange a sentence or change its grammatical form while keeping a similar meaning. The same request can be written as a question, a statement, or a short fragment. An AI system needs to understand meaning beyond grammatical patterns to process such prompts correctly.

- “Tell me tomorrow’s weather in Paris.”

- “Will it rain in Paris tomorrow?”

- “Paris weather forecast for tomorrow.”
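Structural variations can be generated by rendering the same slot values (a city and a day) into several sentence templates. This is a minimal sketch; the templates and the `structural_variants` helper are hypothetical examples for a weather-forecast intent.

```python
# Hypothetical templates for a "weather forecast" intent: same slots,
# three different sentence structures.
STRUCTURAL_TEMPLATES = [
    "Tell me {day}'s weather in {city}.",    # imperative statement
    "What is the weather in {city} {day}?",  # question
    "{city} weather forecast for {day}.",    # short fragment
]


def structural_variants(city: str, day: str) -> list[str]:
    """Render the same slot values into every sentence structure."""
    return [t.format(city=city, day=day) for t in STRUCTURAL_TEMPLATES]


for prompt in structural_variants("Paris", "tomorrow"):
    print(prompt)
```

Templates keep the intent fixed while only the structure changes, so any difference in the system's answers can be attributed to sentence form.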

Contextual Variations

A contextual variation is a prompt that relies on information from an earlier request instead of repeating the entire request. In conversational AI systems, users frequently ask follow-up questions that only make sense in light of the earlier prompt. To respond correctly to such prompts, robust systems must maintain conversational memory.

Example:

User: “Find restaurants near me.”

Follow-up prompt: “Which ones are open now?”
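A contextual variation has to be tested as a multi-turn conversation rather than as a single prompt. The sketch below assumes a hypothetical `StubAssistant` that stands in for the real system under test; it keeps the previous turn's results in memory so the follow-up “Which ones are open now?” can be resolved. A real test would call the actual chatbot instead of the stub.

```python
from dataclasses import dataclass, field


@dataclass
class Restaurant:
    name: str
    open_now: bool


@dataclass
class StubAssistant:
    """Toy stand-in for a conversational system under test (hypothetical)."""
    catalog: list[Restaurant]
    # Conversational memory: the results returned on the previous turn.
    last_results: list[Restaurant] = field(default_factory=list)

    def ask(self, prompt: str) -> list[str]:
        text = prompt.lower()
        if "restaurant" in text:
            self.last_results = list(self.catalog)
            return [r.name for r in self.last_results]
        if "open now" in text:
            # "Which ones" only makes sense relative to the previous turn.
            return [r.name for r in self.last_results if r.open_now]
        return []


def test_follow_up_uses_context() -> None:
    bot = StubAssistant([Restaurant("Luigi's", True), Restaurant("Sakura", False)])
    assert bot.ask("Find restaurants near me.") == ["Luigi's", "Sakura"]
    assert bot.ask("Which ones are open now?") == ["Luigi's"]
    # Without the first turn, the follow-up has nothing to refer back to.
    fresh = StubAssistant(bot.catalog)
    assert fresh.ask("Which ones are open now?") == []


test_follow_up_uses_context()
```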

Typographical Errors

Typographical variations occur when users misspell words or make other typing mistakes in their prompts. Because people often type quickly or on phone keyboards, such errors are common in real-world usage. A good AI system should still infer the correct intent despite minor typos.

- “Recomend a moive.”

- “Recomendation for resturants”

- “wether tday”
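Typo variations can be generated automatically by applying random character-level edits to clean prompts. The following sketch is one simple approach (swapping, dropping, or duplicating a letter), not a model of real keyboard error patterns; `inject_typos` is a hypothetical helper, and the fixed seed keeps test runs reproducible.

```python
import random


def inject_typos(text: str, n_typos: int = 1, seed: int = 0) -> str:
    """Apply n_typos random character edits: swap, drop, or duplicate a letter."""
    rng = random.Random(seed)  # fixed seed -> reproducible test prompts
    chars = list(text)
    for _ in range(n_typos):
        # Edit positions are always letters; a swap may still move the
        # character that follows the chosen letter.
        positions = [i for i, c in enumerate(chars) if c.isalpha()]
        if not positions:
            break
        i = rng.choice(positions)
        op = rng.choice(["swap", "drop", "duplicate"])
        if op == "swap" and i + 1 < len(chars):
            # Transpose with the next character, e.g. "movie" -> "moive".
            chars[i], chars[i + 1] = chars[i + 1], chars[i]
        elif op == "drop":
            del chars[i]
        else:
            # Duplicate the letter (also the fallback when a swap would
            # run past the end of the string).
            chars.insert(i, chars[i])
    return "".join(chars)


for seed in range(3):
    print(inject_typos("Recommend a movie", seed=seed))
```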

Informal Language and Slang

Users often write casually, using colloquialisms and conversational phrases. Such expressions might not follow strict grammatical rules, but they still communicate the user’s intention. AI systems have to interpret this casual language correctly to give relevant responses.

- “Hook me up with good pizza places.”

- “Any cool movies to watch tonight?”

Multilingual Variations

Multilingual variations occur when users mix languages or translate phrases word by word from their native language. These prompts might use simplified grammar or unusual word order, but they still express a clear request. To serve global users, robust AI systems must be able to understand such inputs.

- “Book hotel tomorrow Paris”

- “Flight ticket Delhi to Dubai tomorrow.”

Challenges in Testing Prompt Robustness

- Non-Deterministic Responses: AI models generate output probabilistically rather than by fixed rules, so the same or a similar prompt can produce different responses on different runs.

- Infinite Prompt Variations: Natural language allows words and sentence structures to be combined in endless ways, so it is impossible to test every possible prompt variation.

- Difficulty in Test Validation: An AI can word its answer differently each time while still being correct, so exact output comparison is not a reliable way to validate responses.

- Context Dependency: The meaning of a prompt may depend on earlier conversation context, which complicates isolated prompt testing.

- Influence of Training Data: An AI model may respond better to certain prompting styles because similar phrasing appeared more often in its training data.

- Prompt Sensitivity: Small changes in wording, tone, or structure can drastically change how the AI understands and responds to your prompt.

- Need for Intelligent Sampling Strategies: Because exhaustive testing is impossible, QA teams need to select representative samples of prompt variations to evaluate robustness.
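One common way to keep the number of test prompts manageable is stratified sampling: draw a fixed number of prompts from each variation category so that no category is left out. The sketch below assumes variations are stored as `(category, prompt)` pairs; `stratified_sample` is an illustrative name, not a library function.

```python
import random
from collections import defaultdict


def stratified_sample(
    variants: list[tuple[str, str]], per_category: int, seed: int = 42
) -> list[tuple[str, str]]:
    """Draw up to `per_category` prompts from each variation category."""
    by_category: dict[str, list[str]] = defaultdict(list)
    for category, prompt in variants:
        by_category[category].append(prompt)

    rng = random.Random(seed)  # seeded so the same sample is reproducible
    sample: list[tuple[str, str]] = []
    for category in sorted(by_category):  # sorted for a stable order
        prompts = by_category[category]
        k = min(per_category, len(prompts))  # small categories are taken whole
        sample.extend((category, p) for p in rng.sample(prompts, k))
    return sample


pool = [
    ("lexical", "Get a laptop"),
    ("lexical", "Order a laptop"),
    ("lexical", "Buy a laptop"),
    ("typo", "Recomend a moive"),
]
print(stratified_sample(pool, per_category=2))
```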

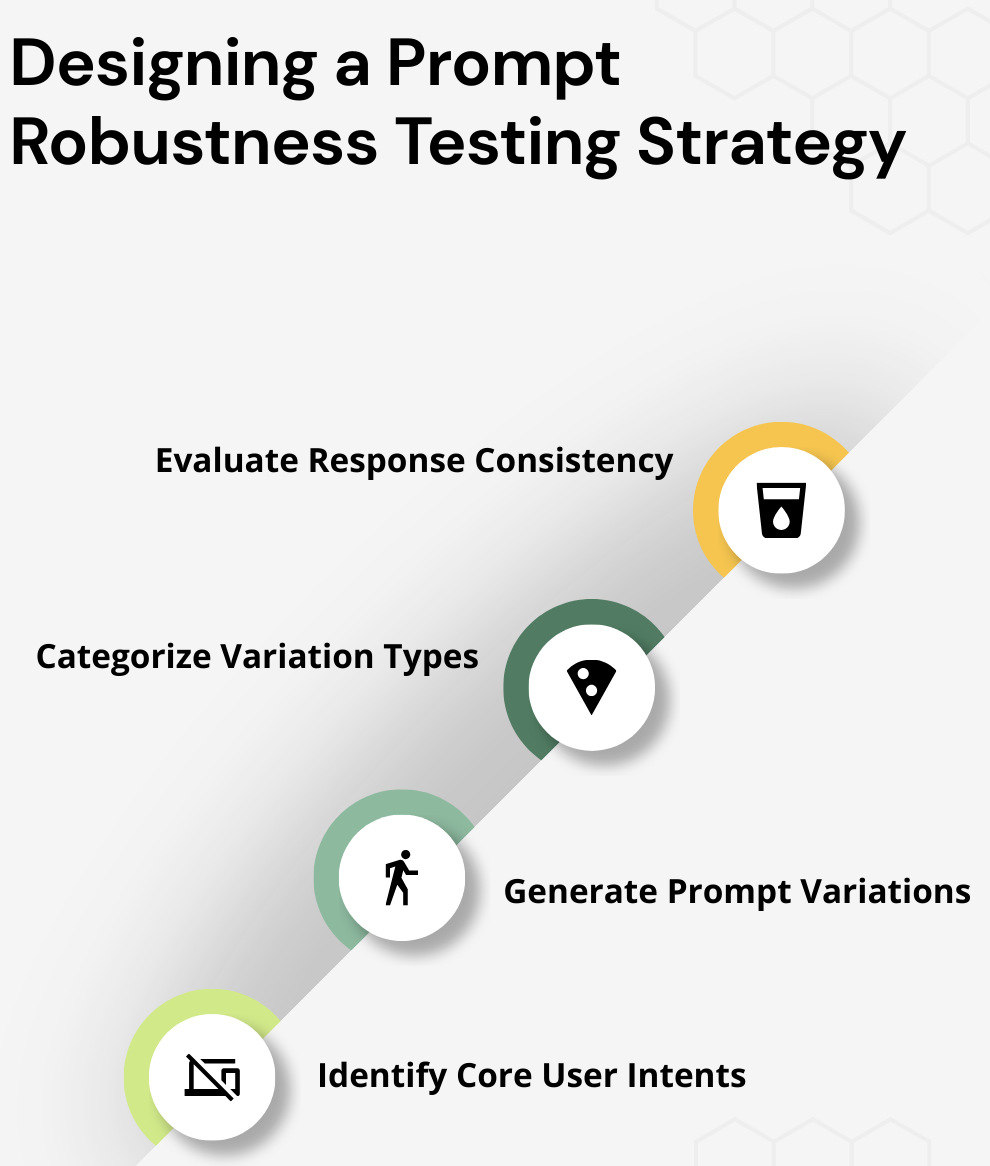

Designing a Prompt Robustness Testing Strategy

Testing prompt robustness requires a systematic approach to ensure AI systems consistently understand different ways of expressing the same request. A well-designed strategy helps QA teams evaluate reliability, detect weaknesses, and improve the model’s ability to interpret diverse user inputs.

Identify Core User Intents

Prompt robustness testing begins by identifying the key tasks users perform in an application. These tasks represent the primary goals users pursue when interacting with the AI system.

Each of these intents becomes a baseline scenario for testing prompt variations. Typical intent types include product search, service booking, information requests, and transactions.

Generate Prompt Variations

After defining the user intents, testers write several versions of the same prompt, each representing a different way users might phrase their request. This step ensures that the testing process mirrors real-world language diversity.

For instance, the intent “check account balance” could be expressed as “What’s my account balance?”, “Show my bank balance,” “How much money do I have in my account?”, or “Please show the balance in my account.” Testing all of these helps assess whether the AI understands different ways of phrasing the same request.
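Intents and their variations can be kept as plain test data. This sketch assumes a hypothetical `IntentCase` structure, populated with the account-balance phrasings above, plus a small check that each intent has enough distinct phrasings. The label `check_account_balance` and the minimum of three variations are arbitrary choices for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IntentCase:
    """A core user intent and phrasings that should all map to it."""
    intent: str
    variations: tuple[str, ...]


# Hypothetical suite built from the account-balance example above.
SUITE = [
    IntentCase(
        intent="check_account_balance",
        variations=(
            "What's my account balance?",
            "Show my bank balance",
            "How much money do I have in my account?",
            "Please show the balance in my account",
        ),
    ),
]


def check_suite(suite: list[IntentCase], min_variations: int = 3) -> list[str]:
    """Report intents with duplicate or too few distinct phrasings."""
    problems = []
    for case in suite:
        # Normalize case and surrounding spaces/punctuation before comparing.
        normalized = {v.lower().strip(" ?.!") for v in case.variations}
        if len(normalized) < len(case.variations):
            problems.append(f"{case.intent}: duplicate phrasings")
        if len(normalized) < min_variations:
            problems.append(f"{case.intent}: fewer than {min_variations} distinct phrasings")
    return problems


print(check_suite(SUITE))  # [] means every intent passes both checks
```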

Categorize Variation Types

Grouping prompt variations into structured categories increases test coverage and makes the evaluation process more systematic. Organizing these linguistic patterns also prevents the same pattern from being tested multiple times.

Typical categories include synonym replacement, sentence rearrangement, typos, slang, abbreviations, and questions versus statements. Testing the AI system across these categories helps ensure that it can handle a diverse set of user inputs.
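Tagging each test prompt with its variation category makes coverage gaps easy to spot. In this sketch, the `Variation` enum simply mirrors the categories listed above; the names and the `coverage_gaps` helper are hypothetical.

```python
from enum import Enum


class Variation(Enum):
    """Variation categories mirroring the list above."""
    SYNONYM = "synonym replacement"
    REARRANGEMENT = "sentence rearrangement"
    TYPO = "typo"
    SLANG = "slang"
    ABBREVIATION = "abbreviation"
    QUESTION_VS_STATEMENT = "question vs. statement"


def coverage_gaps(tagged: list[tuple[str, Variation]]) -> list[Variation]:
    """Return the categories that have no tagged test prompt yet."""
    covered = {category for _, category in tagged}
    return [v for v in Variation if v not in covered]


tagged_prompts = [
    ("Get a laptop", Variation.SYNONYM),
    ("Recomend a moive", Variation.TYPO),
]
for gap in coverage_gaps(tagged_prompts):
    print("No test prompts for:", gap.value)
```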

Evaluate Response Consistency

Testers then run each prompt variation through the system and review the responses generated by the AI model. The meaning, accuracy, and relevance of the responses should not change based on how the prompt is phrased.

When one variation of a prompt produces correct information and another produces incorrect or unrelated content, that indicates a robustness issue. Identifying such discrepancies helps teams adjust the model and improve its reliability.
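The consistency check can be automated by running each variation through the system several times (to account for non-deterministic output) and recording how often it resolves to the expected intent. In this sketch, `toy_system` is a hypothetical keyword-matching stand-in for the model under test, deliberately naive so that one phrasing fails; the function names are illustrative only.

```python
from typing import Callable


def evaluate_consistency(
    system: Callable[[str], str],
    expected_intent: str,
    variations: list[str],
    runs: int = 3,
) -> dict[str, float]:
    """Fraction of runs in which each variation yields the expected intent.

    Repeating each prompt several times accounts for non-deterministic output.
    """
    return {
        prompt: sum(system(prompt) == expected_intent for _ in range(runs)) / runs
        for prompt in variations
    }


def flag_failures(scores: dict[str, float], threshold: float = 1.0) -> list[str]:
    """Variations whose success rate falls below the threshold."""
    return [prompt for prompt, score in scores.items() if score < threshold]


def toy_system(prompt: str) -> str:
    # Hypothetical stand-in for the model under test: naive keyword matching.
    return "check_balance" if "balance" in prompt.lower() else "unknown"


variations = [
    "What's my account balance?",
    "Show my bank balance",
    "How much money do I have in my account?",
    "Please show the balance in my account",
]
scores = evaluate_consistency(toy_system, "check_balance", variations)
print(flag_failures(scores))  # ['How much money do I have in my account?']
```

The flagged phrasing is exactly the kind of robustness issue described above: the request is clear, but the system only recognizes it when a particular word appears.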

Techniques for Generating Prompt Variations

- Manual Linguistic Analysis: Domain experts study how users in their community phrase prompts and hand-write alternative phrasings. This technique produces realistic variations but can be time-consuming and labor-intensive.

- User Data Analysis: Analyzing actual user queries in application logs shows how people really phrase their requests. This data allows testers to create prompt variations that closely match real-world patterns.

- Paraphrasing Models: AI-based paraphrasing models can generate many rewordings of the same prompt. These augmentations help cover various language styles while producing grammatically valid sentences.

- Adversarial Prompt Generation: Testers deliberately craft prompts that make the system struggle, using ambiguous wording or unusual sentence structure. This method helps uncover flaws and vulnerabilities in how the system interprets prompts.
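For the user data analysis technique, a starting point is to group logged queries by the intent the system resolved and keep the most frequent phrasings as test variations. This sketch assumes a hypothetical log format of `(query, intent)` pairs that has already been labeled; real logs would need their own parsing and labeling step, and `phrasings_from_logs` is an illustrative name.

```python
from collections import Counter, defaultdict


def phrasings_from_logs(
    log: list[tuple[str, str]], top_n: int = 3
) -> dict[str, list[str]]:
    """Group logged queries by resolved intent and keep the most frequent phrasings."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for query, intent in log:
        # Light normalization: lowercase and collapse whitespace so trivial
        # differences are counted as the same phrasing.
        counts[intent][" ".join(query.lower().split())] += 1
    # most_common() orders ties by first occurrence.
    return {intent: [q for q, _ in c.most_common(top_n)] for intent, c in counts.items()}


# Hypothetical, already-labeled log excerpt.
log = [
    ("Book me a cab", "book_ride"),
    ("book me a  cab", "book_ride"),
    ("Need an Uber", "book_ride"),
    ("Get me a ride", "book_ride"),
    ("weather today", "weather"),
]
print(phrasings_from_logs(log, top_n=2))
# {'book_ride': ['book me a cab', 'need an uber'], 'weather': ['weather today']}
```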

Evaluating AI Responses

- Human Review: Human evaluators check whether the AI response appropriately addresses the user’s intent.

- Semantic Similarity Analysis: Natural language processing methods measure how similar the meanings of two responses are.

- Intent Classification: Test systems check whether the AI correctly recognizes the user’s underlying intent.
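Semantic similarity is commonly measured with sentence embeddings from a language model. To stay self-contained, the sketch below substitutes a much simpler lexical proxy, cosine similarity over word counts, which only illustrates the scoring idea. Two paraphrases that share no words will score 0 even if they mean the same thing, which is why embedding-based comparison is preferred in practice; `bow_cosine` is a hypothetical helper name.

```python
import math
import re
from collections import Counter


def bow_cosine(a: str, b: str) -> float:
    """Cosine similarity between word-count vectors of two texts.

    A lexical stand-in for semantic similarity: it only rewards shared words.
    """
    va = Counter(re.findall(r"[a-z']+", a.lower()))
    vb = Counter(re.findall(r"[a-z']+", b.lower()))
    dot = sum(count * vb[word] for word, count in va.items())
    norm = math.sqrt(sum(c * c for c in va.values())) * math.sqrt(
        sum(c * c for c in vb.values())
    )
    return dot / norm if norm else 0.0


print(bow_cosine("cloud computing saves money", "cloud computing reduces cost"))  # 0.5
```

A team might route response pairs scoring below a chosen threshold to human review; the threshold is a judgment call, not a standard value.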

Improving Prompt Robustness

- Expanding Training Data: Expand training datasets to include a wide variety of speech and language patterns.

- Prompt Engineering: By writing structured prompts and crafting effective system messages, you can steer the AI for better consistency in its output.

- Feedback Loops: Over time, user interactions and feedback can help refine how the system understands prompts.

- Continuous Testing: To ensure the reliability of systems, prompt robustness testing must form part of standard ongoing evaluation practices for AI.
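For continuous testing, robustness results can act as a regression gate in a CI pipeline. This is a minimal sketch assuming each prompt variation has already been marked pass or fail; the function names, the 0.80 baseline, and the 0.02 tolerance are hypothetical values a team would choose for itself.

```python
def robustness_score(results: dict[str, bool]) -> float:
    """Fraction of prompt variations that produced the expected behavior."""
    return sum(results.values()) / len(results) if results else 0.0


def regression_gate(current: float, baseline: float, tolerance: float = 0.02) -> bool:
    """Pass unless the score dropped more than `tolerance` below the baseline."""
    return current >= baseline - tolerance


# Hypothetical results from the latest run (variation -> passed?).
latest = {
    "What's my account balance?": True,
    "Show my bank balance": True,
    "How much money do I have in my account?": False,
    "Please show the balance in my account": True,
}
score = robustness_score(latest)
print(f"score={score:.2f}, gate passed={regression_gate(score, baseline=0.80)}")
# score=0.75, gate passed=False
```

In a CI job, the script could exit with a non-zero status (for example `raise SystemExit(1)`) when the gate fails, so a model or prompt change that degrades robustness is caught before release.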

Frequently Asked Questions (FAQs)

- How often should prompt robustness testing be performed?

Prompt robustness testing should be performed at every stage of the AI development lifecycle. It is especially important after model updates, prompt engineering changes, or significant modifications to training data, since even small changes can alter how a system interprets user prompts.

- How is prompt robustness different from prompt engineering?

Prompt robustness testing measures how well an AI system interprets different variants of user prompts. Prompt engineering, by contrast, is the practice of crafting specific prompts that guide the AI toward better outputs. Although prompt engineering can improve the quality of AI responses, prompt robustness testing verifies that the system still performs as intended when prompts are not well crafted.

- What risks arise if prompt robustness is not tested properly?

When prompt robustness is not tested, AI systems may interpret similar prompts differently, leading to inconsistent responses, incorrect outputs, or user frustration. This erodes trust in the AI system and hurts user adoption, particularly in sensitive domains such as healthcare, finance, or customer support.

Conclusion

AI-powered interfaces let users interact with software in flexible, natural ways, but that flexibility also introduces variance that can affect system reliability. Prompt robustness testing makes sure that an AI system still responds correctly regardless of phrasing style, spelling mistakes, slang, or context carried over from earlier requests. As AI adoption grows, companies that test for prompt robustness will build more reliable and trustworthy systems, making this one of the most essential skills for QA engineers in the future.
