AI Predictions for 2026: How to Test?

“The only way to predict the future is to have power to shape the future.” - Eric Hoffer

In 2026, AI is present in almost every corner of our lives. From the apps on your phone to how whole organizations plan and run, AI is no longer a theoretical future concept; it is already reshaping how we live and work.

But what is all this really leading to next?

Will machines become more autonomous and context-aware? Will AI and machine learning continue to redefine the nature of jobs? And how profoundly will AI shape how we live, work, and even think?

This is not another list of speculative predictions. Instead, we’ll dive into what experts are actually seeing and predicting about the next phase of AI and, crucially, how we can test it.

How do we get beyond hype and make these advances real, reliable and beneficial? What is needed to test, measure and confirm that AI is headed in the right direction?


Autonomous AI Ecosystems

The emerging paradigm of 2026 is autonomous AI ecosystems, where multiple AI agents collaborate with one another rather than working in silos. Such integrated systems can run complex workflows end to end, for example software delivery pipelines, where responsibilities are delegated to different specialized agents that coordinate in real time.

But this evolution also creates real testing challenges, such as communication failures between agents, conflicting decisions that lead to cascading failures, and unexpected emergent behavior. Validating such systems is much harder: verifying individual components is no longer enough, because you also need to verify their interactions and collective outcomes.

Read: Trusting AI Test Automation: Where to Draw the Line.

Testing Approach

- System-of-systems Validation: Shift focus from individual AI outputs to evaluating how multiple agents interact and collaborate as a unified system. This requires validating end-to-end workflows rather than isolated components.

- Simulation Environments: Build realistic environments that mimic production scenarios to observe how AI agents behave under different conditions. These simulations help uncover hidden interaction issues and emergent behaviors.

- Interaction Testing: Test communication and decision exchanges between AI components to ensure alignment and prevent conflicts. This involves validating protocols, dependencies, and coordination logic.

- Decision Chain Monitoring: Track how decisions flow across agents instead of validating outputs in isolation. This helps identify where inconsistencies or failures originate and how they propagate.

- Failure Containment Validation: Ensure that when one agent fails, the impact is contained and does not cascade across the ecosystem. This requires designing safeguards and validating system resilience under stress conditions.
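
Failure containment and decision chain monitoring can be tried out in a very small simulation. The sketch below is only an illustration under stated assumptions: the `Agent` class, the `run_pipeline` function, and the fallback values are hypothetical names invented for this example, not part of any real agent framework.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Agent:
    """Hypothetical agent: a name, a step function, and a safe fallback value."""
    name: str
    step: Callable[[str], str]
    fallback: str


def run_pipeline(agents: List[Agent], task: str) -> Tuple[str, List[str]]:
    """Run agents in order and contain any failure by using the fallback.

    Returns the final output and the decision chain (one entry per agent).
    """
    chain = []
    payload = task
    for agent in agents:
        try:
            payload = agent.step(payload)
            chain.append(f"{agent.name}:ok")
        except Exception as exc:  # contain the failure at this agent
            payload = agent.fallback
            chain.append(f"{agent.name}:failed({type(exc).__name__})")
    return payload, chain


def broken_reviewer(_: str) -> str:
    """Simulated fault: the reviewer agent never answers."""
    raise TimeoutError("reviewer did not respond")


agents = [
    Agent("planner", lambda t: f"plan({t})", fallback="plan(default)"),
    Agent("reviewer", broken_reviewer, fallback="needs-human-review"),
    Agent("notifier", lambda p: f"notify[{p}]", fallback="notify[skipped]"),
]

result, chain = run_pipeline(agents, "release-1.2")
print(result)
print(chain)
```

The test here is about the chain, not a single output: the injected reviewer failure should appear in the log, and the downstream agent should still run on a safe fallback instead of crashing or acting on bad data.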

Read: How to Test Fallbacks and Guardrails in AI Apps.

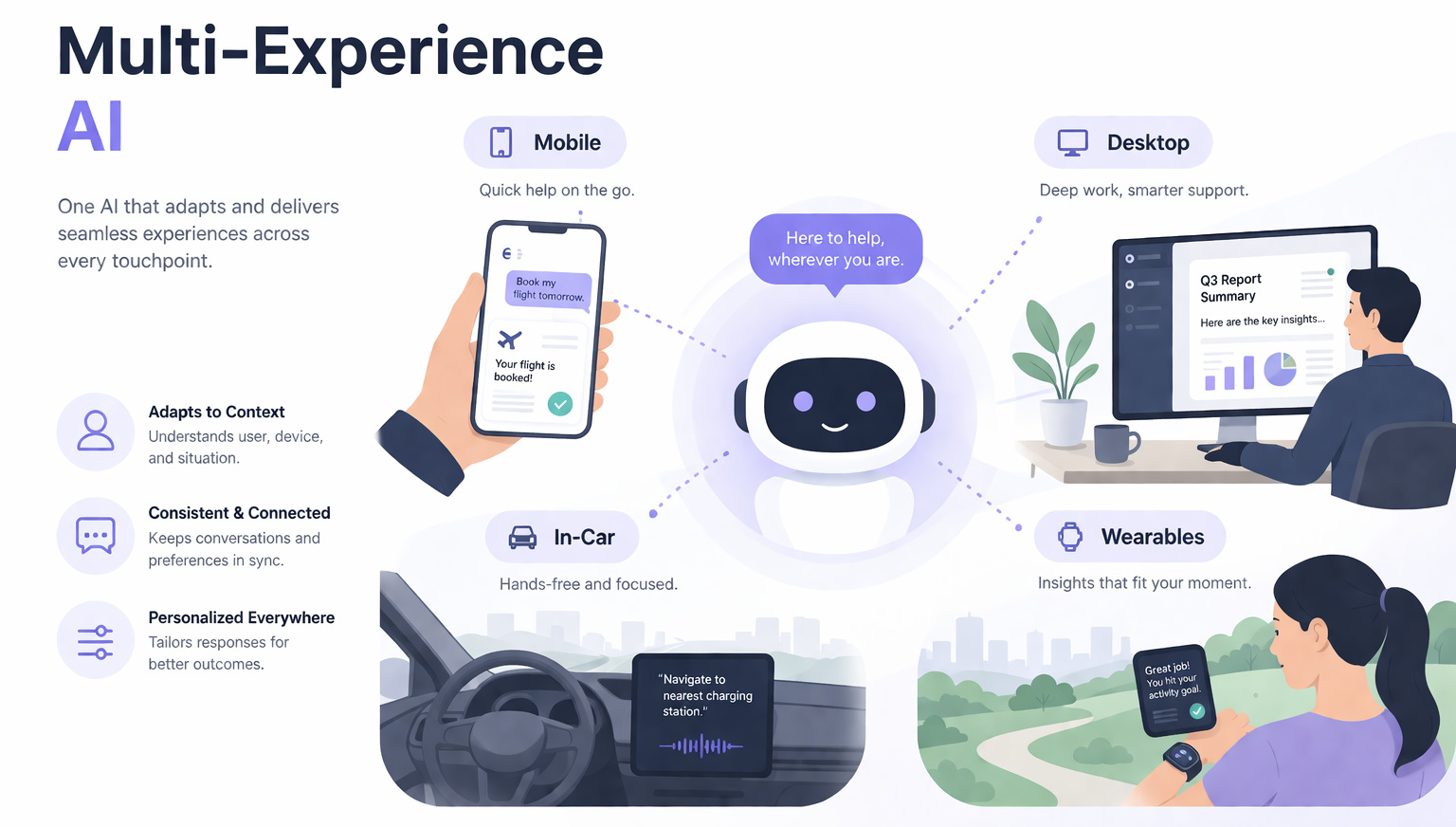

Multi-Experience AI: Beyond Multimodal Inputs

Multi-experience AI is an evolution beyond multimodal systems: it enables AI to understand and process not only text, images, audio, and video, but also layers of context such as user intent, emotional tone, and environment. In 2026, such systems are expected to respond in highly customized ways, recalibrating on the fly according to who you are, what you’ve interacted with before, and even how you’re feeling.

This shift comes with major challenges for testing, since the same input can yield different results depending on user history, emotional cues, and context. Predictability is reduced, and testers must now verify a range of acceptable results instead of a single expected result; traditional test case design built around one exact output is no longer sufficient.

Read: MCPs vs. APIs: Differences.

Testing Approach

- Context-aware Validation: Testing must move beyond fixed input-output validation and focus on whether responses are appropriate for the given context. This requires defining expected behavior ranges instead of exact outputs.

- Persona-based Testing: Testers should create multiple user personas with distinct backgrounds, preferences, and histories to evaluate how AI adapts responses. This ensures the system behaves correctly across diverse user profiles.

- Scenario-based Testing: Different environmental and situational contexts must be simulated to observe how AI adjusts its behavior. This requires building test scenarios that reflect real-world variability.

- Emotional and Tone Testing: AI responses must be validated for tone sensitivity, ensuring that emotional cues are correctly interpreted and responded to appropriately. This involves testing variations in sentiment and emotional input.

- Contextual Test Matrices: Testers need to design matrices that explore variations such as the same input across different users, histories, and emotional states. This structured approach helps uncover inconsistencies while ensuring behavioral reliability.
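
A contextual test matrix can be generated rather than written by hand. The following is a minimal sketch, assuming a stand-in `fake_assistant` function in place of the real system and an invented `acceptable_tones` rule that encodes an expected behavior range; the persona, history, and emotion labels are also made up for illustration.

```python
from itertools import product

personas = ["new_user", "power_user"]
histories = ["no_history", "recent_complaint"]
emotions = ["neutral", "frustrated"]


def build_matrix(prompt):
    """Cross the same input with every persona, history, and emotional state."""
    return [
        {"prompt": prompt, "persona": p, "history": h, "emotion": e}
        for p, h, e in product(personas, histories, emotions)
    ]


def fake_assistant(case):
    """Stand-in for the system under test (hypothetical).

    It reacts to the current emotion but ignores the user's history.
    """
    tone = "empathetic" if case["emotion"] == "frustrated" else "neutral"
    return {"tone": tone, "text": f"Reply to {case['persona']}"}


def acceptable_tones(case):
    """Expected behavior range rather than a single exact output."""
    if case["emotion"] == "frustrated" or case["history"] == "recent_complaint":
        return {"empathetic"}
    return {"neutral", "friendly"}


matrix = build_matrix("Where is my order?")
failures = [
    case for case in matrix
    if fake_assistant(case)["tone"] not in acceptable_tones(case)
]

print(len(matrix), "cases,", len(failures), "outside the expected range")
for case in failures:
    print(case)
```

In this toy setup the matrix exposes a gap that a single test case would miss: the stand-in ignores a recent complaint when the current message sounds neutral.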

Real-Time Adaptive AI: The End of Static Behavior

Real-time adaptive AI marks a shift from static models to continuously evolving systems that learn in real time rather than through periodic updates. These systems dynamically tune to user behavior, update responses on the fly, and optimize decisions in real time instead of waiting for traditional update cycles.

That creates a new challenge for testing, because AI behavior becomes a moving target: a test that passes today could fail tomorrow, not because of a defect but because of entirely legitimate evolution. To stay reliable, testing needs to differentiate between expected adaptation and unforeseen drift or instability.

Read: RAG vs. Agentic RAG vs. MCP: Key Differences Explained

Testing Approach

- Temporal Testing: Testing must validate system behavior across different time stages, such as initial state, post-learning, and continued adaptation. This requires maintaining checkpoints to compare how the AI evolves over time.

- Drift Detection: Continuous monitoring is needed to identify changes in output patterns and deviations from expected behavior. This helps ensure that learning remains aligned with intended goals and does not degrade performance.

- Snapshot Testing: Capture system behavior at specific moments and compare it with future states to track changes. This requires storing baseline outputs and using them as references for controlled evolution.

- Controlled Evolution Validation: Instead of enforcing static correctness, testing must ensure that changes are intentional, explainable, and within acceptable boundaries. This requires defining thresholds for acceptable variation in behavior.
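
Snapshot testing and drift detection can be combined into a simple threshold check. This is a rough sketch, assuming behavior has already been scored per metric on a 0 to 1 scale by some evaluation step; the `drift_report` function, the metric names, and the `max_shift` threshold of 0.10 are all illustrative assumptions.

```python
from statistics import mean


def drift_report(baseline, current, max_shift=0.10):
    """Compare current scores with a stored snapshot, metric by metric.

    `baseline` and `current` map a metric name to a list of scores in [0, 1].
    A metric is flagged when its mean moves by more than `max_shift`.
    """
    report = {}
    for metric, base_scores in baseline.items():
        shift = mean(current[metric]) - mean(base_scores)
        report[metric] = {
            "shift": round(shift, 3),
            "within_bounds": abs(shift) <= max_shift,
        }
    return report


# Stored snapshot from an earlier checkpoint vs. scores observed today.
snapshot = {"relevance": [0.80, 0.82, 0.78], "politeness": [0.90, 0.92, 0.91]}
today = {"relevance": [0.84, 0.85, 0.83], "politeness": [0.70, 0.72, 0.68]}

report = drift_report(snapshot, today)
for metric, result in report.items():
    print(metric, result)
```

The small improvement in relevance stays within the allowed band and counts as acceptable adaptation, while the drop in politeness exceeds it and would be escalated for review. Choosing the threshold is a product decision, not something the code can decide.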

AI as Decision Authority

AI is quickly transitioning from a decision-support tool to an actual decision-making authority: systems autonomously approve transactions, screen candidates, and run complex operations such as supply chains. By 2026, that shift means AI isn’t merely assisting humans; it is driving outcomes, directly influencing businesses and people.

This raises important testing challenges, because wrong decisions can cause financial losses, ethical issues, and legal problems rather than simple technical defects. Testing therefore has to go beyond functional accuracy and also confirm that decisions are trustworthy, compliant, and justifiable under real-world conditions.

Read: AI Agents in Software Testing

Testing Approach

- Explainability Testing: Validate whether the AI can clearly justify its decisions by explaining the reasoning and data behind them. This requires mechanisms to surface decision logic in a human-understandable way.

- Auditability Testing: Ensure that every decision can be traced, reproduced, and independently verified when needed. This involves maintaining detailed logs and traceability of inputs, outputs, and decision paths.

- Boundary Testing: Test decisions against defined business rules, ethical standards, and regulatory requirements to ensure compliance. This requires building constraints and validation checks into the testing framework.

- Decision Consistency Validation: Verify that similar inputs produce logically consistent decisions across scenarios. This helps prevent unpredictable or biased behavior in critical decision-making systems.

- Risk-based Testing: Prioritize testing for high-impact decision areas where failures could cause significant damage. This requires identifying critical decision points and applying deeper validation strategies.
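
Boundary, consistency, and auditability checks can be expressed as ordinary assertions around the decision function. The example below is a sketch only: `decide` is a hypothetical rule-based stand-in for an AI decision system, and the loan scenario, score cutoff, and amount limit are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoanRequest:
    applicant_id: str
    amount: float
    credit_score: int


def decide(req):
    """Stand-in for the AI decision system (hypothetical rule)."""
    approved = req.credit_score >= 650 and req.amount <= 50_000
    reason = (
        "meets score and amount limits" if approved
        else "outside score or amount limits"
    )
    return {"approved": approved, "reason": reason}


def check_boundaries(req, decision, max_amount=50_000):
    """Business-rule boundaries every decision must respect."""
    violations = []
    if decision["approved"] and req.amount > max_amount:
        violations.append("approved above amount limit")
    if not decision.get("reason"):
        violations.append("missing explanation")
    return violations


def consistent(req_a, req_b):
    """Requests that differ only in applicant_id should get the same outcome."""
    return decide(req_a)["approved"] == decide(req_b)["approved"]


# Audit trail: input, decision, and boundary check results for each request.
audit_log = []
for req in [LoanRequest("a1", 20_000, 700), LoanRequest("a2", 80_000, 720)]:
    decision = decide(req)
    audit_log.append({
        "input": req,
        "decision": decision,
        "violations": check_boundaries(req, decision),
    })

for entry in audit_log:
    print(entry)
```

With a real model behind `decide`, the same harness would be run over many paired inputs, with the most effort spent on the high-impact decision points identified by risk-based prioritization.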

AI Governance and Regulation

With AI systems exerting greater control over critical decisions and societal outcomes, governance and regulation are emerging as core themes in AI. In 2026, compliance is no longer optional: organizations have to make sure their AI systems meet strict standards for fairness, transparency, privacy, and accountability.

This poses new verification challenges: validation must now go beyond technical correctness to cover legal and ethical correctness. Part of a tester’s job is to ensure that AI systems don’t break the rules, discriminate against users, or misuse sensitive data.

Read: AI Compliance for Software

Testing Approach

- Compliance Testing: Validate that AI systems adhere to regional laws and industry-specific regulations. This requires mapping system behavior against legal requirements and continuously updating tests as regulations evolve.

- Bias Testing: Detect and measure discrimination in outputs by analyzing how different user groups are treated. This involves creating diverse datasets and scenarios to uncover hidden biases.

- Privacy Testing: Ensure that user data is handled securely and used responsibly throughout the system. This requires validating data collection, storage, and processing practices against privacy standards.

- Accountability Validation: Verify that AI systems maintain clear ownership and traceability for decisions and actions. This includes ensuring logs, reports, and escalation paths are properly defined.

- Ethical Boundary Testing: Test whether AI behavior aligns with defined ethical guidelines and organizational values. This requires setting measurable criteria for acceptable and unacceptable outcomes.
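
Bias testing needs a measurable criterion. One common starting point is to compare positive-outcome rates across groups; the sketch below computes those rates and their ratio. The group labels, the sample data, and the 0.8 cutoff are assumptions made for this example, and a real program would choose metrics and thresholds together with legal and domain experts.

```python
from collections import defaultdict


def selection_rates(records):
    """Share of positive outcomes per group. Each record is (group, approved)."""
    totals, positives = defaultdict(int), defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        positives[group] += int(approved)
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by highest group rate (1.0 means parity).

    Assumes at least one group has a non-zero rate.
    """
    return min(rates.values()) / max(rates.values())


# Hypothetical decisions collected from the system under test.
records = (
    [("group_a", True)] * 8 + [("group_a", False)] * 2
    + [("group_b", True)] * 5 + [("group_b", False)] * 5
)

rates = selection_rates(records)
ratio = disparate_impact_ratio(rates)
flagged = ratio < 0.8  # assumed threshold for this sketch

print(rates, round(ratio, 3), "flagged" if flagged else "ok")
```

A ratio well below 1.0 does not prove discrimination on its own, but it gives the team a concrete signal to investigate and a number to track across releases.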

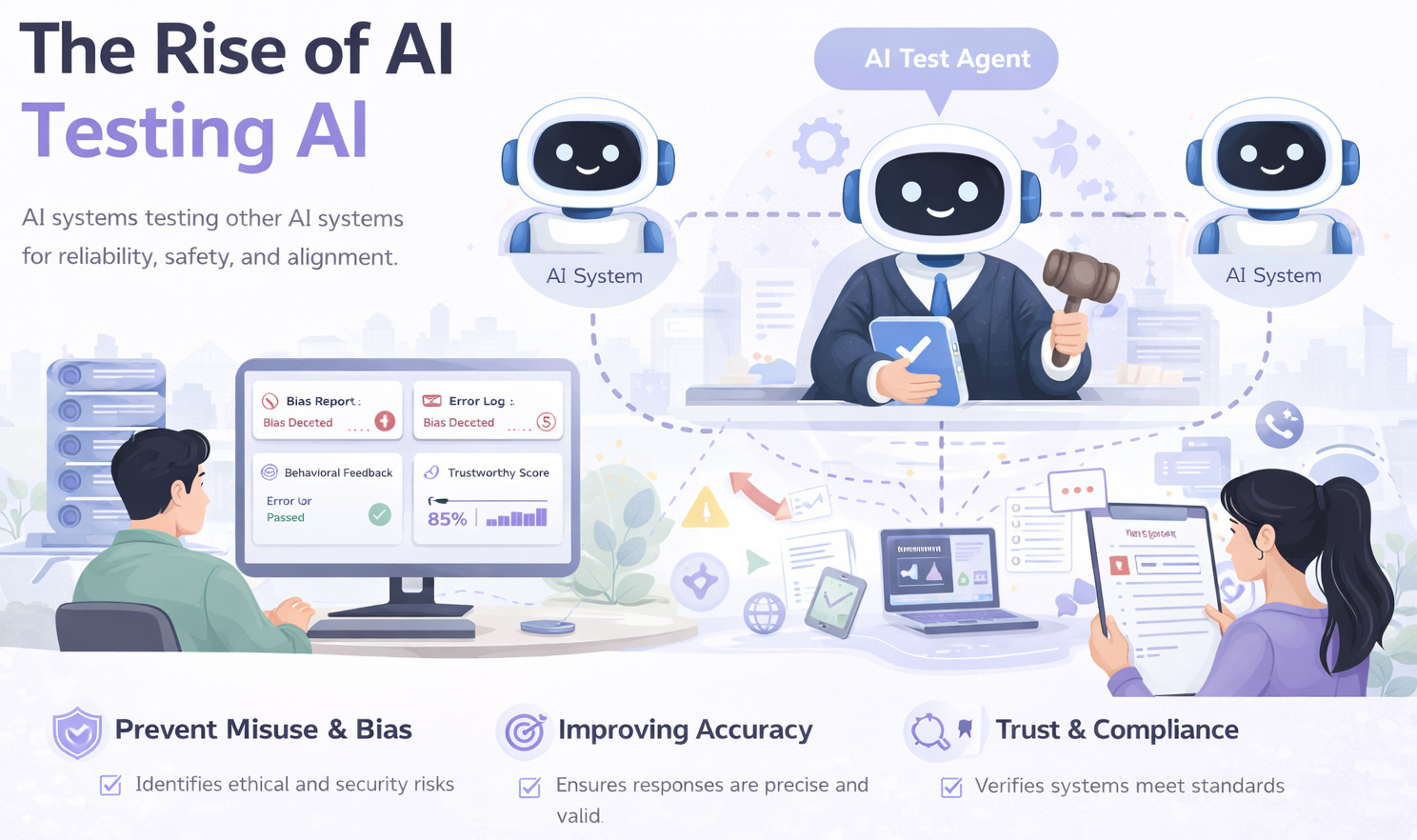

The Rise of AI Testing AI

AI testing AI will be among the most transformative shifts in 2026, with intelligent systems generating test cases, finding anomalies, and even predicting potential failures. This creates a powerful feedback loop and allows for much faster and more scalable testing.

But this evolution creates a major testing problem: how do you test the reliability of a system whose only job is to test another system? To trust AI-driven testing, you have to verify that the testing AI itself is accurate, complete, and unbiased, in addition to verifying the application under test.

Testing Approach

- Meta-testing: Validate the effectiveness of AI-generated tests by assessing their accuracy, relevance, and coverage. This requires benchmarking AI-generated test suites against known scenarios and expected outcomes.

- Cross-model Validation: Use multiple AI systems to validate the same outputs and identify inconsistencies or gaps. This approach reduces reliance on a single model and helps uncover hidden errors.

- Human-in-the-loop (HITL) Validation: Maintain human oversight for critical decisions, complex edge cases, and ethical considerations. This ensures that AI-driven testing remains aligned with real-world expectations and accountability.

- Test Quality Auditing: Continuously evaluate the quality of generated tests, including redundancy, coverage gaps, and false positives. This requires defining metrics to measure the effectiveness of AI-driven testing.

- Layered Trust Validation: Build a multi-layered validation framework where AI systems, cross-model checks, and human review work together. This ensures that trust is not placed in a single layer but distributed across the system.
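
One way to benchmark an AI-generated test suite is to seed known defects and count how many the suite catches, in the spirit of mutation testing. The sketch below assumes a tiny discount function as the code under test and a hard-coded `generated_tests` list standing in for generator output; all names are hypothetical.

```python
def reference_discount(total):
    """Known-good implementation: 10% off orders of 100 or more."""
    return total * 0.9 if total >= 100 else total


# Deliberately faulty variants (seeded defects) that a good suite should catch.
def mutant_wrong_boundary(total):
    return total * 0.9 if total > 100 else total


def mutant_wrong_rate(total):
    return total * 0.8 if total >= 100 else total


# Pretend these (input, expected) pairs came from an AI test generator.
generated_tests = [(50, 50), (200, 180.0)]


def suite_passes(func, tests):
    """True when every test's expected value matches the function's output."""
    return all(abs(func(x) - expected) < 1e-9 for x, expected in tests)


def mutation_score(mutants, tests):
    """Fraction of seeded defects that make at least one test fail."""
    killed = sum(1 for mutant in mutants if not suite_passes(mutant, tests))
    return killed / len(mutants)


mutants = [mutant_wrong_boundary, mutant_wrong_rate]
score = mutation_score(mutants, generated_tests)
improved = mutation_score(mutants, generated_tests + [(100, 90.0)])

print("generated suite:", score)
print("with boundary test added:", improved)
```

Here the generated suite passes against the correct code yet misses the boundary defect, which is exactly the kind of coverage gap a human reviewer or a second model would be asked to close.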

Hallucinations and Trust Deficits

AI hallucinations, where systems produce confident but wrong results, will remain a major issue throughout 2026, only in a quieter and subtler form. These errors come across as coherent and convincing, which makes them much harder to detect using traditional validation techniques.

This poses a real challenge for testing: identifying incorrect outputs is no longer trivial and requires more sophisticated verification mechanisms. The problem is not just that the system might be wrong; being convincingly wrong directly erodes user trust and makes the decisions users base on those outputs less reliable.

Read: What are AI Hallucinations? How to Test?

Testing Approach

- Ground Truth Validation: Compare AI outputs against verified data sources and trusted knowledge bases to ensure factual correctness. This requires maintaining high-quality reference datasets for validation.

- Confidence Scoring Validation: Evaluate the confidence level provided by the AI and check whether it aligns with actual accuracy. This helps detect cases where the system is overly confident despite being incorrect.

- Adversarial Testing: Challenge the system with ambiguous inputs, edge cases, and misleading prompts to expose hidden weaknesses. This requires designing inputs specifically aimed at triggering hallucinations.

- Consistency Checking: Validate whether the AI provides consistent answers across similar queries or repeated interactions. This helps identify instability or unreliable reasoning patterns.

- Trustworthiness Evaluation: Move beyond correctness to assess how reliable and dependable the system is in real-world usage. This involves combining accuracy, confidence, and consistency into a broader trust metric.
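
Confidence scoring validation can be quantified by comparing stated confidence with observed accuracy against ground truth. The sketch below computes a simple binned calibration gap, often called expected calibration error. The sample data is made up, and it assumes the system exposes a confidence value between 0 and 1 for each answer, which not every model does.

```python
def expected_calibration_error(confidences, correct, n_bins=5):
    """Weighted average gap between stated confidence and observed accuracy."""
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in last bin
        bins[index].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(conf for conf, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += (len(bucket) / total) * abs(avg_conf - accuracy)
    return ece


# Hypothetical evaluation results: model confidence, and whether each answer
# matched the ground-truth reference.
confidences = [0.95, 0.95, 0.95, 0.95, 0.55, 0.55]
correct = [True, True, False, False, True, False]

ece = expected_calibration_error(confidences, correct)
print(round(ece, 3))
```

In this sample the answers given with 95% confidence are right only half the time, so the gap is large. That is the overconfident-but-wrong pattern this kind of validation is meant to surface.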

The Future of Trust in AI

In 2026, the purpose of AI testing goes well beyond quality; it becomes the bedrock of trust. As we increasingly rely on AI to inform us and act on our behalf, the expectation of reliable behavior that users can easily understand rises significantly.

How reliable AI products and applications are perceived to be comes down to the trust users have in the system, which in turn depends on how transparent and consistent it has been across scenarios. Testing is critical to ensuring both and is a key mechanism for building trust in how AI behaves in the wild.

Final Thoughts

The evolution of AI is pushing us into uncharted territory, where testing is no longer limited to code, features, or functions but extends to validating intelligence, behavior, and autonomy.

In 2026, the most successful organizations will not be those that adopt AI the fastest, but those that test it the smartest, understanding the deeper complexities of intelligent systems. As AI continues to influence critical decisions and actions, the definition of quality itself is changing in a fundamental way. It is no longer just about correctness; it is about confidence.

Frequently Asked Questions (FAQs)

- Can AI ever be fully “tested” or certified as completely reliable?

No. AI systems cannot be fully “certified” in the traditional sense, because many of them continue to learn and adapt after deployment. Instead of absolute correctness, the goal is to establish acceptable boundaries of behavior, monitor drift, and ensure ongoing reliability through continuous validation.

- How can organizations measure trust in AI systems?

Trust in AI is measured through a combination of factors such as consistency of outputs, explainability of decisions, accuracy over time, bias detection, and user confidence. Unlike traditional metrics, trust is multi-dimensional and evolves with usage.

- How can teams balance automation and human oversight in AI testing?

While AI can automate large parts of testing, human oversight is essential for interpreting results, handling edge cases, and ensuring ethical alignment. The most effective approach is a hybrid model where AI accelerates testing and humans validate critical decisions.
