Hyper-personalization Testing: Automating AI-Driven UIs


Software is no longer static. It no longer behaves the same way for every user, nor does it follow predictable, deterministic flows that can be captured in a set of predefined test cases. Today’s applications, particularly those powered by Artificial Intelligence, are dynamic, adaptive, and even personalized, and testing them requires a change in approach.

Hyper-personalization is the next step in the evolution of user experience. It goes beyond superficial personalization (the selection of a theme or language) into deeply contextual, in-the-moment adaptation. Today’s applications learn from our behavior, preferences, location, and devices. They even infer intent in order to offer each of us a personalized experience.

AI-powered systems require a shift from deterministic validation to probabilistic, behavior-based testing methods. Rather than checking fixed expected outputs, QA needs to evaluate patterns, boundaries, and confidence across a multitude of scenarios. This evolution pushes testing toward continuous validation, adaptive automation, and AI-aware quality strategies.


Hyper-personalization in AI-Driven Interfaces

Hyper-personalization uses sophisticated data analytics, machine learning algorithms, and real-time processing to customize user experiences on an individual basis. Unlike traditional personalization, which is based on fixed rules, it adjusts constantly to changes in user behavior. It typically draws on:

- Behavioral data (clicks, scrolls, time spent)

- Contextual data (location, device, time of day)

- Predictive models (recommendations, intent prediction)

- Continuous learning systems

Examples include:

- An e-commerce platform dynamically rearranges product listings for each user

- A streaming service recommends content based on nuanced viewing patterns

- A fintech app adjusts dashboards based on spending habits

- A SaaS platform changes UI workflows based on user proficiency

In all these cases, the UI itself is no longer fixed; it is generated dynamically.

Read: Your Guide to Hyperautomation.

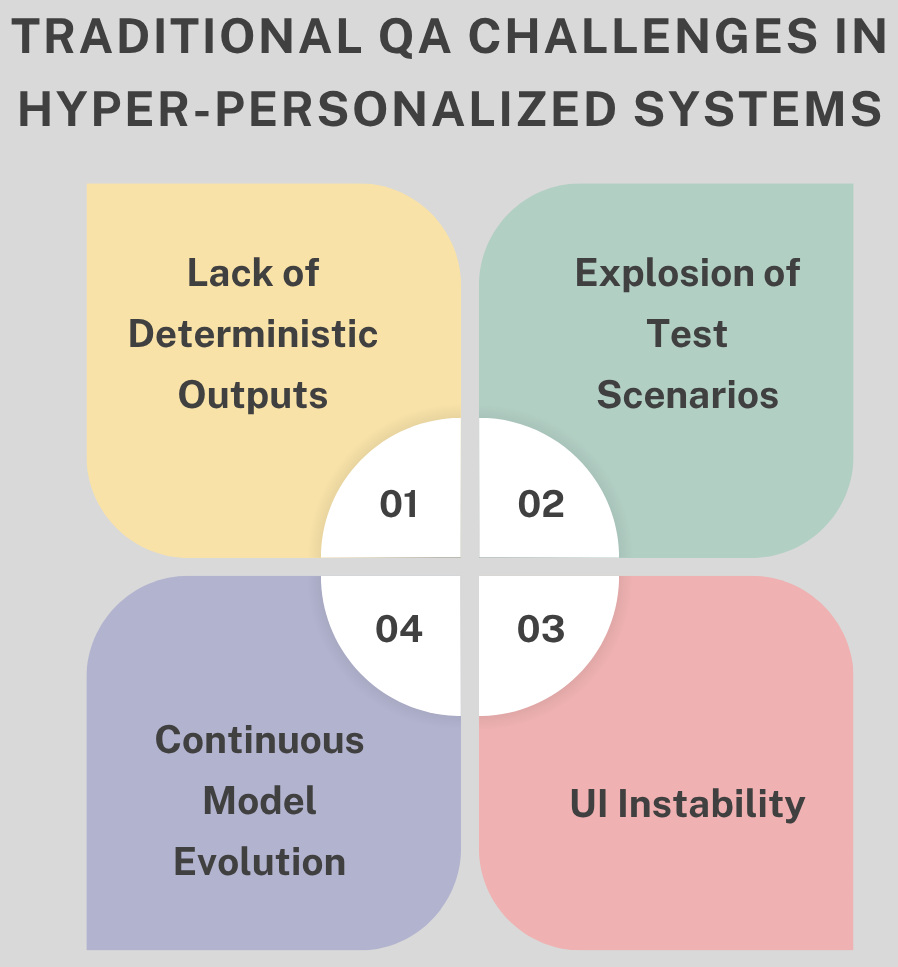

Traditional QA Challenges in Hyper-Personalized Systems

Traditional QA relies on deterministic validation, which is based on a specific input yielding a predictable output. It also presumes stable UI components, controlled environments, and predictable, repeatable test results. Hyper-personalization breaks all of these assumptions by allowing for dynamic behavior that differs among users and contexts.

- Lack of Deterministic Outputs: AI-based systems can produce different responses for the same input based on user history, model retraining, or new external data. For instance, two users of the same app searching for “best restaurants” may be served completely different results based on their previous behavior and location, making it impossible to define a single expected outcome.

- Explosion of Test Scenarios: Each user is presented with a unique combination of content and controls, so the number of possible test scenarios grows exponentially. For example, an e-commerce platform might show different product ordering, discounts, and banners to every user, and it is not practical to create test cases for every possible combination.

- Continuous Model Evolution: AI models continuously evolve through retraining, new data inputs, and feature updates, even without explicit code changes. For example, a recommendation engine might start suggesting different products or content after a model update, even if the application code remains unchanged.

- UI Instability: Traditional automation relies on stable locators and fixed layouts, which break in hyper-personalized systems where interfaces dynamically adapt. For example, a dashboard may rearrange widgets or hide/show sections based on user behavior, causing automation scripts that rely on fixed element positions to fail frequently.

The QA Paradigm Shift

To successfully test hyper-personalized systems, QA has to evolve from strict validation to smart verification. The paradigm shifts from requiring precise outputs to measuring the quality and relevance of outputs.

Read: How to Validate AI-Generated Tests?

Instead of asking “Is this output correct?”, testers need to determine whether the result is reasonable, relevant, and in keeping with the user context. This broader view accounts for the variability and dynamic nature of these systems.

This shifts testing to a new mindset in which a pass is expressed as a probability rather than a yes or no. It also emphasizes validating behavior over statically verifying correctness in narrow cases.
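One way to make a probability-based pass concrete is to repeat the same check many times and pass only when the success rate clears a threshold. The following is a minimal sketch under stated assumptions: `simulated_check`, the 0.9 threshold, and the run count are invented for illustration and do not come from any particular tool.

```python
import random
from typing import Callable


def pass_rate(check: Callable[[], bool], runs: int) -> float:
    """Run a boolean check several times and return the fraction that passed."""
    passed = sum(1 for _ in range(runs) if check())
    return passed / runs


def probabilistic_assert(check: Callable[[], bool], runs: int = 200,
                         threshold: float = 0.9) -> bool:
    """Pass when the observed success rate meets the threshold."""
    return pass_rate(check, runs) >= threshold


# Hypothetical stand-in for a non-deterministic system under test:
# it yields a relevant result roughly 95% of the time.
rng = random.Random(42)


def simulated_check() -> bool:
    return rng.random() < 0.95


result = probabilistic_assert(simulated_check)
print("suite passes:", result)
```

The threshold and number of runs are a team decision; a stricter threshold needs more runs before the observed rate is trustworthy.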

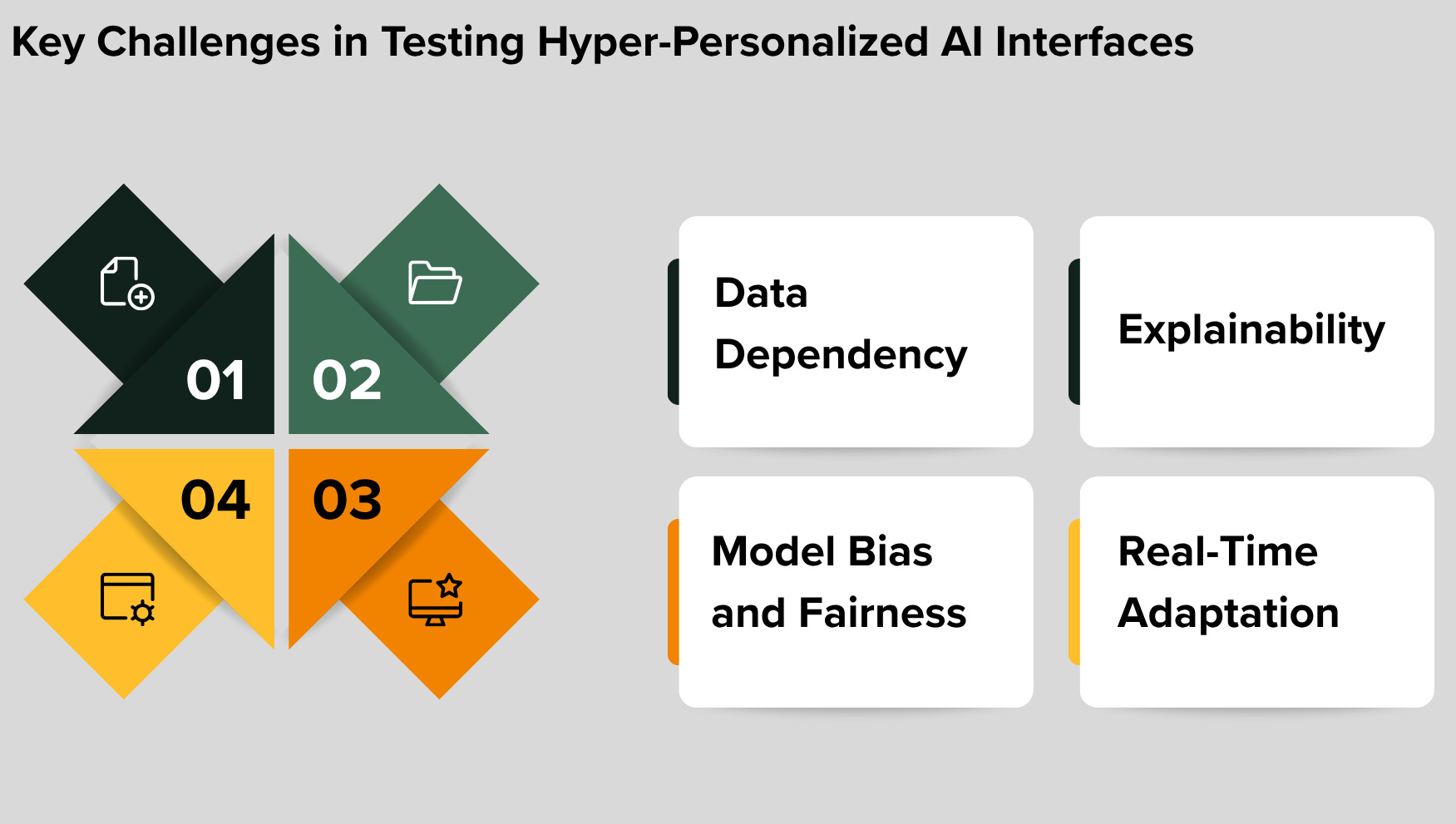

Key Challenges in Testing Hyper-Personalized AI Interfaces

Testing hyper-personalized AI interfaces introduces complexities that go far beyond traditional QA practices. These systems are dynamic, data-driven, and continuously evolving, requiring entirely new testing approaches and thinking.

Data Dependency

Hyper-personalization depends on rich, diverse data about your users. Without realistic datasets, test scenarios might not accurately mirror the real world, and their usefulness decreases.

To solve this problem, the QA team must simulate different user personas, behaviors, and usage patterns. For instance, if you are building a travel app, you want to test with users of different income levels, travel histories, and preferences to make sure that recommendations are meaningful across segments.

Read: Top Test Cases for Flight Booking Systems: A Checklist.

Model Bias and Fairness

AI-based personalization may also carry unintentional bias that leads to disparate user experiences. The system may produce more relevant or better results for some users while excluding or disadvantaging others.

Testing should deliberately check fairness and inclusiveness across users and scenarios. As an example, a hiring platform should validate that job recommendations are free from discriminatory bias against particular demographic groups, so that some users are not locked out of job opportunities.
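A simple starting point for a fairness check is to compare how often each group receives a favorable outcome. The sketch below computes per-group rates and the ratio of the lowest to the highest; the group labels, the audit records, and the 0.8 review threshold are assumptions made for illustration, and a real audit would pick metrics together with data scientists and domain experts.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def positive_rate_by_group(records: List[Tuple[str, bool]]) -> Dict[str, float]:
    """Fraction of users in each group who received the favorable outcome."""
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for group, favorable in records:
        totals[group] += 1
        if favorable:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparity_ratio(rates: Dict[str, float]) -> float:
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody received the outcome, so no group is favored
    return min(rates.values()) / highest


# Hypothetical audit data: (group label, was a senior role recommended?)
records = ([("A", True)] * 8 + [("A", False)] * 2
           + [("B", True)] * 4 + [("B", False)] * 6)

rates = positive_rate_by_group(records)
ratio = disparity_ratio(rates)
flagged = ratio < 0.8  # assumed review threshold for this sketch
print(rates, ratio, flagged)
```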

Explainability

AI systems are often black boxes; it is hard to know when and how a decision was made. This opacity presents a serious problem for QA teams attempting to verify that the system is behaving as it should.

To address this, testers require tools that offer insight into decision-making processes. For instance, a recommendation engine should provide traceability indicating why a specific product or piece of content was recommended to the user.

Read: Explainability Techniques for LLMs & AI Agents: Methods, Tools & Best Practices.

Real-Time Adaptation

Hyper-personalized systems can update dynamically, even during a single user session. As a result, the output keeps changing in response to the user’s interactions.

Testing must account for this temporal variability by validating behavior over time and not just at a single point. A music streaming app is one example: when a user skips or likes a song, playlists need to update instantly, and QA needs to test that as it happens.
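Validating behavior over time can be sketched as replaying a sequence of session events and checking an invariant after every step, instead of asserting once at the end. In the example below, `ToyPlaylist` is a hypothetical stand-in for the personalization engine, and the invariant (a liked genre never moves down, a skipped genre never moves up) is an assumed expectation.

```python
from typing import Dict, List, Tuple


class ToyPlaylist:
    """Hypothetical stand-in for a personalization engine under test."""

    def __init__(self, genres: List[str]) -> None:
        self.scores: Dict[str, float] = {genre: 1.0 for genre in genres}

    def record(self, genre: str, event: str) -> None:
        # Likes raise a genre's score; skips lower it (never below zero).
        delta = 0.5 if event == "like" else -0.5
        self.scores[genre] = max(0.0, self.scores[genre] + delta)

    def ranking(self) -> List[str]:
        # Highest score first; ties broken alphabetically for stable output.
        return sorted(self.scores, key=lambda g: (-self.scores[g], g))


def check_session(playlist: ToyPlaylist, events: List[Tuple[str, str]]) -> List[str]:
    """Replay events and report every step where the ranking moved the wrong way."""
    violations = []
    for step, (genre, event) in enumerate(events):
        before = playlist.ranking().index(genre)
        playlist.record(genre, event)
        after = playlist.ranking().index(genre)
        if event == "like" and after > before:
            violations.append(f"step {step}: liked {genre} moved down")
        if event == "skip" and after < before:
            violations.append(f"step {step}: skipped {genre} moved up")
    return violations


events = [("jazz", "skip"), ("rock", "like"), ("rock", "like")]
playlist = ToyPlaylist(["jazz", "pop", "rock"])
violations = check_session(playlist, events)
print(violations, playlist.ranking())
```

Against a real application, `record` and `ranking` would be replaced by UI or API interactions; the per-step check stays the same.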

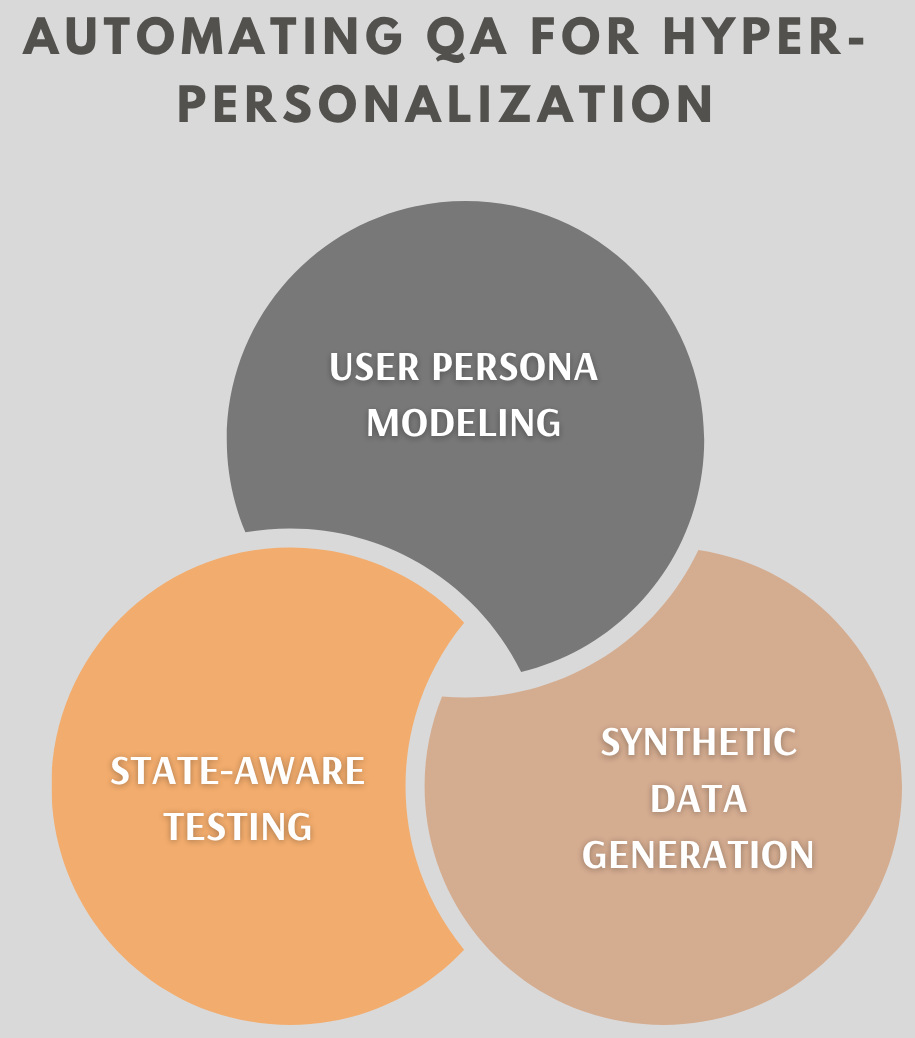

Automating QA for Hyper-Personalization

To automate testing effectively in hyper-personalized systems, QA teams must rethink the core foundations of automation. Instead of static scripts, automation must become adaptive, context-aware, and data-driven.

User Persona Modeling

Instead of validating generic user flows, QA should create specific personas that reflect the different types of users working with the system. These personas allow simulating real-world diversity, ensuring that testing is more representative of how the system is used in practice.

Each persona should include well-defined behaviors, historical data, and boundaries for the expected experience to aid validation. For instance, a new-user persona should exercise onboarding flows while an advanced-user persona exercises advanced features, and each experience needs to be validated against that persona’s expectations.
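A persona can be expressed as plain data: who the user is, what they have done, and which parts of the UI they should or should not see. The sketch below is one possible shape; the `Persona` fields and the element names such as `onboarding_tour` and `bulk_export` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Persona:
    """A hypothetical test persona with history and expectation boundaries."""
    name: str
    history: List[str]
    must_see: Set[str] = field(default_factory=set)      # elements that should appear
    must_not_see: Set[str] = field(default_factory=set)  # elements that should stay hidden


def validate_ui(persona: Persona, visible: Set[str]) -> List[str]:
    """Return readable problems; an empty list means the UI fits the persona."""
    problems = [f"{persona.name}: missing '{e}'"
                for e in sorted(persona.must_see - visible)]
    problems += [f"{persona.name}: unexpected '{e}'"
                 for e in sorted(persona.must_not_see & visible)]
    return problems


new_user = Persona("new_user", history=[],
                   must_see={"onboarding_tour"}, must_not_see={"bulk_export"})
power_user = Persona("power_user", history=["created_report"] * 50,
                     must_see={"bulk_export"}, must_not_see={"onboarding_tour"})

# Element sets a UI driver might report for each persona (made up for this sketch).
new_user_problems = validate_ui(new_user, {"onboarding_tour", "dashboard"})
power_user_problems = validate_ui(power_user, {"dashboard", "onboarding_tour"})
print(new_user_problems)
print(power_user_problems)
```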

Synthetic Data Generation

Hyper-personalized systems need a lot of heterogeneous, realistic data to produce good results. Relying on a single static dataset will give you incomplete test results.

The use of synthetic data makes it possible to simulate many different behaviors, uncommon edge cases, and complex user journeys at scale. For example, you can generate different purchase histories and browsing patterns so that an e-commerce platform tests recommendations across several segments of customers.
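As a rough illustration, synthetic purchase histories can be generated per customer segment with a seeded random generator so that every test run sees the same data. The segment names, catalog items, and the `generate_users` helper below are all assumptions for the example.

```python
import random
from typing import Dict, List

# Hypothetical catalog per customer segment.
SEGMENT_CATALOG: Dict[str, List[str]] = {
    "budget": ["socks", "notebook", "phone case"],
    "premium": ["smartwatch", "espresso machine", "headphones"],
}


def generate_users(catalog: Dict[str, List[str]], users_per_segment: int,
                   purchases_per_user: int, seed: int = 0) -> List[dict]:
    """Build synthetic users with random purchase histories for each segment."""
    rng = random.Random(seed)  # fixed seed keeps the test data reproducible
    users = []
    for segment, items in catalog.items():
        for i in range(users_per_segment):
            users.append({
                "user_id": f"{segment}-{i}",
                "segment": segment,
                "purchases": [rng.choice(items) for _ in range(purchases_per_user)],
            })
        # Edge case: a cold-start user with no purchase history.
        users.append({"user_id": f"{segment}-cold", "segment": segment, "purchases": []})
    return users


users = generate_users(SEGMENT_CATALOG, users_per_segment=3, purchases_per_user=5)
print(len(users), users[0])
```

Each generated user can then be fed to the recommendation flow, with assertions scoped to the user's segment.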

Read: Test Data Generation Automation.

State-aware Testing

Testing should cover not just inputs and outputs but also the state of the system at the moment of processing: user history, the model version in use, and other contextual factors. This ensures that validation is representative of how the system operates over time and across conditions.

An automation solution must record state transitions and validate behavior across different states. For example, a streaming app changes what it recommends as a user watches more shows, so the tests need to verify this progression.
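One way to sketch state-aware validation is to make the state explicit (here, model version plus viewing history) and check the system against the expected behavior for each state. Everything in the example is hypothetical: the `SessionState` fields, the rule that personalization starts after three shows, and `toy_system`, which deliberately contains a bug in its "v2" branch so the check has something to find.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class SessionState:
    model_version: str
    shows_watched: int


def expected_strategy(state: SessionState) -> str:
    # Assumed product rule: fewer than three watched shows means trending
    # content; from the third show onward the user gets personalized picks.
    return "trending" if state.shows_watched < 3 else "personalized"


def validate_progression(states: List[SessionState],
                         system: Callable[[SessionState], str]
                         ) -> List[Tuple[SessionState, str]]:
    """Return every state where the system's strategy differs from the expectation."""
    failures = []
    for state in states:
        actual = system(state)
        if actual != expected_strategy(state):
            failures.append((state, actual))
    return failures


def toy_system(state: SessionState) -> str:
    # Hypothetical system under test; the v2 cutoff is off by one on purpose.
    cutoff = 3 if state.model_version == "v1" else 4
    return "trending" if state.shows_watched < cutoff else "personalized"


states = [SessionState(version, n) for version in ("v1", "v2") for n in range(5)]
failures = validate_progression(states, toy_system)
print(failures)
```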

Automation Strategies for AI-driven UI Testing

Testing AI-driven interfaces requires a shift from rigid automation to intelligent, adaptive strategies that can handle dynamic behavior. The focus moves toward validating intent, context, and experience rather than fixed outputs.

Behavior-Driven Testing

Here, testing is not about validating specific outputs but about whether the system behaves appropriately in context. That means assessing relevance, logical adaptation of the UI to user needs, and outcomes instead of strict adherence to fixed values.

How can that be achieved? QA should use semantic validation along with context-aware assertions, which evaluate meaning rather than static values. That is, instead of expecting a specific item in the recommendation engine results, the results should be validated for relevance to user preferences.
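A context-aware assertion can be as simple as checking what share of the recommended items falls into categories the user cares about, without naming any specific item. The sketch below assumes a hypothetical item-to-category `catalog` and a 0.7 relevance threshold chosen only for illustration.

```python
from typing import Dict, List, Set


def relevance_ratio(recommended: List[str], catalog: Dict[str, str],
                    preferred: Set[str]) -> float:
    """Share of recommended items whose category matches the user's preferences."""
    if not recommended:
        return 0.0
    hits = sum(1 for item in recommended if catalog.get(item) in preferred)
    return hits / len(recommended)


# Hypothetical item-to-category mapping and recommendation output.
catalog = {
    "yoga mat": "fitness",
    "dumbbells": "fitness",
    "running shoes": "fitness",
    "blender": "kitchen",
}
recommended = ["yoga mat", "dumbbells", "running shoes", "blender"]

ratio = relevance_ratio(recommended, catalog, preferred={"fitness"})
is_relevant = ratio >= 0.7  # assumed threshold; the exact items are not asserted
print(ratio, is_relevant)
```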

Read: What is Behavior Driven Testing?

AI-assisted Test Automation

Notably, AI itself is a strong enabler for testing AI-driven systems because it abstracts away complexity and variability. AI-assisted tools can understand UI context, adapt to layout changes, and validate content in a less mechanical manner.

Capabilities include Vision AI, which recognizes the same UI elements irrespective of layout changes, and NLP, which verifies text relevance. For example, an AI tool can validate that a dynamically placed “Buy Now” button remains visible and clickable, even if it is repositioned.

Read: Self-healing Tests.

Exploratory Automation

AI-driven software does not follow the fixed paths that traditional scripted test cases assume. Exploratory automation lets tests discover new scenarios and observe novel behavior as the system changes.

Automation frameworks should support multiple paths, detect anomalies, and provide evolving or dynamic test flows. For example, a test bot can navigate a SaaS platform, attempting various user journeys and reporting unexpected behavior or UI changes.
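A test bot of this kind can be approximated as a seeded random walk over the application's screens that records anything suspicious it encounters. In the sketch below, the `APP` screen graph, the screen names, and the two anomaly rules (error screens and dead ends) are all invented for illustration; a real bot would discover screens through a UI driver.

```python
import random
from typing import Dict, List, Optional, Set, Tuple

# Hypothetical screen graph: each screen maps to the screens reachable from it.
APP: Dict[str, List[str]] = {
    "home": ["search", "settings"],
    "search": ["results", "home"],
    "results": ["detail", "search"],
    "detail": ["checkout", "results"],
    "checkout": ["error_500"],
    "settings": ["home"],
    "error_500": [],
}


def find_problem(screen: str, app: Dict[str, List[str]]) -> Optional[str]:
    """Classify a screen as an anomaly, or return None when it looks healthy."""
    if screen.startswith("error"):
        return screen
    if not app.get(screen):
        return f"dead end: {screen}"
    return None


def explore(app: Dict[str, List[str]], start: str, walks: int,
            max_steps: int, seed: int = 0) -> Tuple[Set[str], Set[str]]:
    """Random-walk the app and return (screens inspected, anomalies found)."""
    rng = random.Random(seed)  # seeded so a failing walk can be replayed
    inspected: Set[str] = set()
    anomalies: Set[str] = set()
    for _ in range(walks):
        screen = start
        for _ in range(max_steps):
            inspected.add(screen)
            problem = find_problem(screen, app)
            if problem:
                anomalies.add(problem)
                break
            screen = rng.choice(app[screen])
    return inspected, anomalies


inspected, anomalies = explore(APP, "home", walks=50, max_steps=20)
print(sorted(inspected), sorted(anomalies))
```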

Validating Recommendations and Personalization Logic

- Relevance Testing: Testing should not focus on validating individual items, but rather on ensuring that recommendations fit the right category, context, and user intention. If a user shows interest in fitness equipment, recommendations should be related to fitness, such as shoes or apparel, rather than unrelated categories. The idea is to ensure that recommendations register as intuitive and helpful from an end user’s standpoint.

- Diversity and Coverage: Recommendations should not be repetitive or overly narrow, as this can limit user experience and discovery. Testing must ensure a healthy mix of options across categories and perspectives. For instance, a streaming platform should not repeatedly suggest the same genre but instead include a variety of content types.

- Consistency: Even though outputs may vary, they should follow consistent and logical patterns aligned with user behavior. For example, if a user consistently engages with financial news, the platform should continue prioritizing similar content trends over time. Sudden, unexplained shifts in recommendations would indicate potential issues in the personalization logic.
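Diversity and consistency can both be turned into simple numeric checks. The sketch below measures diversity as the share of distinct categories in a recommendation list, and consistency as the Jaccard overlap between the topics recommended on two consecutive days; the sample data and the choice of these two metrics are assumptions for illustration.

```python
from typing import Dict, List


def category_diversity(recs: List[str], catalog: Dict[str, str]) -> float:
    """Distinct categories divided by list length (1.0 = every item differs)."""
    if not recs:
        return 0.0
    return len({catalog[item] for item in recs}) / len(recs)


def jaccard_overlap(first: List[str], second: List[str]) -> float:
    """Size of the intersection over the size of the union of two lists."""
    set_a, set_b = set(first), set(second)
    if not set_a and not set_b:
        return 1.0  # two empty lists are trivially consistent
    return len(set_a & set_b) / len(set_a | set_b)


# Hypothetical streaming catalog: title -> genre.
genres = {"Show A": "drama", "Show B": "drama",
          "Show C": "comedy", "Show D": "documentary"}
diversity = category_diversity(["Show A", "Show B", "Show C", "Show D"], genres)

# Hypothetical topics recommended to the same user on consecutive days.
monday = ["markets", "rates", "earnings", "crypto"]
tuesday = ["markets", "rates", "earnings", "bonds"]
consistency = jaccard_overlap(monday, tuesday)

print(diversity, consistency)
```

A test would assert that diversity stays above a floor and that consistency does not drop sharply between runs without a known cause such as a model update.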

Continuous Testing in AI Systems

- Model Monitoring: Tracks how AI systems behave in production over time. It involves continuously measuring performance, evaluating output quality, and detecting model drift that may degrade accuracy or relevance. This helps teams identify when a model no longer aligns with expected behavior.

- Feedback Loops: Integrate insights from real users, production data, and automated test results to improve system quality. By continuously feeding this information back into the system, teams can refine models and validate whether changes lead to better outcomes. This creates a self-improving testing and validation cycle.

- Canary Testing: Lets teams deploy AI models incrementally to a small subset of users before a full rollout. This approach helps compare outputs between new and existing models while minimizing risk. It also enables early detection of anomalies, ensuring issues are addressed before widespread impact.
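The canary comparison described above can be sketched as a gate that compares one engagement metric between the existing model and the canary. The `Cohort` type, the use of click-through rate as the metric, and the 5% tolerance are assumptions for this example.

```python
from dataclasses import dataclass


@dataclass
class Cohort:
    """Aggregated engagement for the users served by one model version."""
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0


def canary_gate(baseline: Cohort, canary: Cohort,
                max_relative_drop: float = 0.05) -> bool:
    """Return True when the canary's CTR is within the allowed drop from baseline."""
    if baseline.ctr == 0:
        return True  # nothing to regress from
    drop = (baseline.ctr - canary.ctr) / baseline.ctr
    return drop <= max_relative_drop


baseline = Cohort(impressions=10_000, clicks=500)    # CTR 5.0%
bad_canary = Cohort(impressions=1_000, clicks=42)    # CTR 4.2%
good_canary = Cohort(impressions=1_000, clicks=49)   # CTR 4.9%

print(canary_gate(baseline, bad_canary), canary_gate(baseline, good_canary))
```

This sketch ignores sample size; a production gate would also need to check that the difference is statistically meaningful before blocking or promoting a rollout.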

Ethical and Compliance Testing

- Bias Detection: Aims to recognize unfair or discriminatory patterns in AI-based decisions. Testing should assess whether the system introduces demographic bias, content exclusion, or disparities in user experience. This helps keep personalization fair and inclusive across groups of users.

- Privacy Validation: Protects user data and ensures that the app complies with privacy regulations. This means verifying that data usage is compliant, that the correct consent has been obtained from users, and that data is handled safely. This is essential to safeguard user trust and mitigate legal risks.

Building a Scalable QA Strategy

- The combination of deterministic and probabilistic testing enables teams to validate not just fixed system behaviors, but also dynamic AI outcomes. Deterministic checks make sure that core functionality is still running, while a probabilistic approach to results checking ensures that the outputs are within acceptable, meaningful boundaries.

- AI-assisted automation lets testing evolve with the applications being validated. These tools interpret context, account for UI variability, and intelligently evaluate outputs instead of depending on brittle scripts.

- Tests centered on behavior and outcomes move testing from overspecified checks for validity to user-facing quality assurance. QA does not check if the system returns specific results, but whether it is overall logical and useful for the end user.

- Investing in data engineering is essential for practical and scalable testing. The quality and coverage of the test data matter because they allow tests to exercise both normal and edge-case scenarios that model real-world behavior.

- Integrating testing into the AI lifecycle ensures continuous validation as models evolve. This means embedding QA processes into data pipelines, model training, and deployment stages to catch issues early and maintain system reliability over time.

Read: The Role of Testing in Deployment Strategy.

The Role of Modern Testing Tools

Modern testing tools must evolve to support the dynamic nature of AI-driven and hyper-personalized systems. They need capabilities such as AI-based validation, vision-based UI testing, natural language test creation, and self-healing automation to remain effective in constantly changing environments.

testRigor, for example, enables human-readable test cases and visual UI validation, and significantly reduces maintenance effort in dynamic interfaces. This becomes especially critical when UI elements frequently change, as traditional automation approaches would otherwise require constant updates and quickly become unsustainable.

Read: All-Inclusive Guide to Test Case Creation in testRigor.

Conclusion

Hyper-personalization is transforming software into adaptive, ever-evolving ecosystems, fundamentally reshaping how testing must be approached. Traditional QA is no longer enough, and the future lies in intelligent automation, behavior-driven validation, AI-assisted testing, and continuous verification, where tools like testRigor play a key role in enabling resilient, low-maintenance automation for dynamic UIs.

Testing these systems is not about controlling variability but about understanding and validating it effectively. Organizations that embrace this shift will be the ones that successfully redefine quality in the age of AI.

Frequently Asked Questions (FAQs)

- Is behavior-based testing truly reliable when there is no fixed “correct” output to validate against?

Behavior-based testing relies on defining acceptable boundaries and relevance rather than exact matches. However, it requires strong domain understanding to avoid subjective judgments and inconsistent results.

- Are probabilistic validation approaches introducing ambiguity into QA instead of reducing risk?

Probabilistic validation reflects real-world AI behavior more accurately than rigid checks. That said, it can introduce ambiguity if thresholds and evaluation criteria are not clearly defined.

- How can QA teams confidently detect subtle model bias without deep expertise in data science?

QA teams can use predefined fairness metrics and testing frameworks to identify obvious bias patterns. However, deeper bias detection often requires collaboration with data scientists and domain experts.
