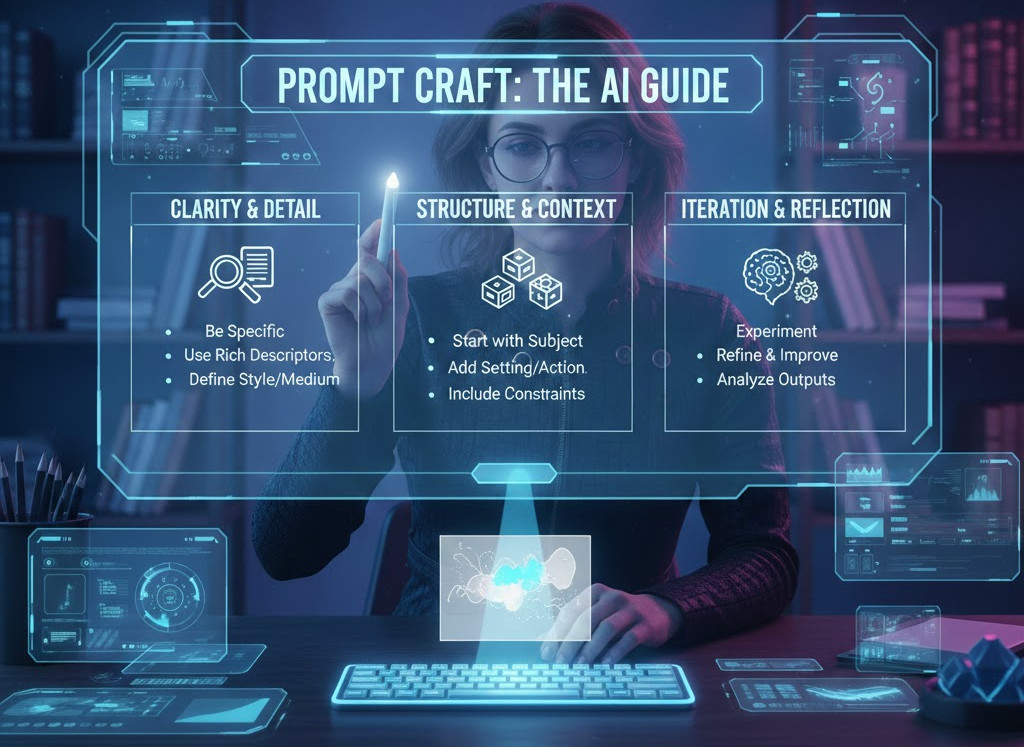

How to Write Good Prompts for AI?


“We are the authors of intelligence. Let’s write with care.”

Artificial intelligence has changed the way humans interact with machines. For years, interactions were prescriptive, confined to menus, buttons, and command-based APIs. Today, interaction is linguistic. We address machines in natural language, and the machines answer with text that often reads as reasoning, explanation, or creativity.

This shift has led some people to assume that AI is now “understanding” us. It does not.

In fact, AI mirrors the structure and order of human expression, along with its limits. The prompt is the intermediate language between human and machine reasoning. When that translation is weak, performance degrades. When it is strong, AI is remarkably effective.

So, prompting isn’t a cosmetic talent. It is the art of being precise enough about intent that a non-human intelligence can effectively execute it.


What is a Prompt in AI

Prompting is not simply a technical engagement with AI, but a philosophical one in which we must render human intent in the most formalized language possible. It reveals the gap between what we want, what we mean, and what we are able to express.

Language as a Proxy for Thought

Human language is a flawed approximation of thought, reducing complex mental structures to simple chains of words. In human conversation, shared context, culture, and intuition help the listener reconstruct what the words leave unsaid. AI has none of that common ground, so language is the only way to convey meaning. Every ambiguity, assumption, and omission in a prompt passes through unresolved and directly affects the generated text. Prompting forces a philosophical reckoning:

If I cannot express my intent clearly, do I actually understand it? Many prompt failures are not failures of the AI. They are failures of articulation.

Meaning vs. Probability

Humans speak meaning, AI speaks probability. When you write a prompt, you have an intent in mind, but the AI sees your words as probabilistic signals filtered through learned patterns. This is a fundamental mismatch between what humans expect and how machines behave. Well-formed prompting closes this gap by minimizing ambiguity and making constraints explicit.

The Illusion of Intelligence

The output of AI often sounds smart even when it gets things wrong, which creates a dangerous illusion in which eloquence is confused with accuracy. Fluency can also conceal shallow reasoning, falsely providing confidence in the answer.

Skill in prompting therefore includes noticing when output lacks depth, identifying hallucinated confidence, and distinguishing coherence from truth. As AI gets more fluent, the onus on humans to critically assess its output only grows.

What is a Good Prompt for AI

Prompting is a cognitive discipline: it requires thinking on purpose, not casually. The human has to disambiguate intention, organize their thinking, and settle on a form of words before engaging the machine. In a way, prompting is as much about shaping human thought as it is about guiding artificial intelligence.

Read: Understanding Pair Prompting in AI.

Prompting Forces Thought Compression

To write a prompt is to compress context, goal, constraint, and expectation into a small piece of language. The quality of this compression determines how well the AI is able to respond to intent. Poor prompts compress poorly and either eliminate or distort crucial information, while good prompts preserve structure and meaning. That’s why good engineers, architects, and testers often make great prompters: they are trained to break complex systems down into fine-grained specifications.

Read: Cognitive Computing in Test Automation.

The Role of Mental Models

Each successful prompt rests on the prompter’s internal mental model of the problem, which determines how the request is framed. When that mental model is weak, the prompt is vague and the output is generic. When it is strong, the prompt is well-defined and the output is actionable. Prompting is not teaching the AI; it is a test of whether you already understand the problem.

The Question that Defines Prompt Quality

Before you blame AI, consider whether you can explain the problem clearly to another human without ambiguity. If the answer is no, the same problem will confuse the AI in exactly the same way. This question serves as a consistent indicator of prompt quality: the quality of a prompt tracks the clarity of the problem behind it. Clear thinking yields clear prompts, and clear prompts yield more accurate outcomes. If clarity is missing, no amount of quick tweaking will make up for it.

Why AI Prompts Fail

Most prompt failures are systematic rather than random. They arise from the human tendency to leave intent unstated, not from any lack of capability in the AI.

- Vague Goals: Prompts like “improve this” and “make it better” establish no standard for success. And without clear guidelines or measurements of improvement, the AI never really knows what to optimize toward and settles for generic responses.

- Unstated Constraints: Humans often assume constraints like time, budget, risk tolerance, or regulatory limits are self-evident. AI assumes nothing unless it is explicitly stated, leading to answers that may be technically sound but practically unusable.

- Overloaded Prompts: Prompts like “analyze, design, and compare at the same time” weaken focus. Good prompting breaks up a complex prompt into smaller, well-scoped steps to avoid losing depth and precision.
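The three failure patterns above can be approximated with a rough pre-flight check. The following is a minimal sketch, not a real tool: the function name `lint_prompt`, the word lists, and the thresholds are all assumptions chosen for illustration, and simple keyword matching will miss many cases.

```python
import re

# Hypothetical word lists for illustration only; tune them for your own domain.
VAGUE_PHRASES = ("improve this", "make it better", "make this better", "fix this")
TASK_VERBS = ("analyze", "design", "compare", "summarize", "rewrite", "test")
CONSTRAINT_HINTS = ("within", "must", "only", "budget", "deadline", "limit", "for ")


def lint_prompt(prompt: str) -> list[str]:
    """Return warnings for the three failure patterns described above."""
    text = prompt.lower()
    warnings = []

    # Vague goal: a known vague phrase, or too few words to state a success criterion.
    if any(phrase in text for phrase in VAGUE_PHRASES) or len(text.split()) < 5:
        warnings.append("vague goal")

    # Unstated constraints: no word hinting at a limit, audience, or boundary.
    if not any(hint in text for hint in CONSTRAINT_HINTS):
        warnings.append("unstated constraints")

    # Overloaded prompt: three or more distinct task verbs in a single request.
    verbs_found = {verb for verb in TASK_VERBS if re.search(rf"\b{verb}\b", text)}
    if len(verbs_found) >= 3:
        warnings.append("overloaded prompt")

    return warnings


if __name__ == "__main__":
    print(lint_prompt("Make it better"))
    print(lint_prompt("Analyze, design, and compare the three caching options"))
```

A check like this cannot judge whether a prompt is good. It only flags the cheapest signs that intent has been left implicit, which is where a human review should start.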

Bad vs. Good Prompt for AI: Examples

There’s usually a big difference between a prompt that works and one that doesn’t. The examples below show how adding clarity, constraints, and intent turns a poorly defined request into an executable specification.

| Scenario | Bad Prompt | Good Prompt |
|---|---|---|
| Vague improvement request | “Make this better.” | “Rewrite this document for senior engineering leaders, improving clarity and structure while preserving the original technical meaning.” |
| Unfocused analysis | “Explain why this failed.” | “Analyze the likely causes of this failure using only the provided logs. State assumptions explicitly if evidence is missing.” |
| Overly broad design task | “Design a scalable system.” | “Design a scalable authentication system for 1M users per day with GDPR compliance, prioritizing simplicity over novelty.” |
| Ambiguous comparison | “Compare these tools.” | “Compare these tools for a mid-sized QA team focusing on maintenance effort, learning curve, and long-term cost.” |
| Poorly defined testing task | “Test the login feature.” | “Test login with a valid user and verify the dashboard loads within two seconds without errors.” |
| Undefined planning request | “Create a roadmap.” | “Create a six-month QA automation roadmap for a startup with five engineers and a limited budget.” |

Why the Bad Prompts Fail

Bad prompts fail because they separate intent from structure. They rely on implicit assumptions, assumed context, or subjective language that the AI cannot infer. The model therefore fills the gaps with a generic pattern and generates output that makes sense but is only weakly related to what is actually needed.

Why the Good Prompts Work

Good prompts work because they act as specifications rather than requests. They make intention explicit, structure constraints, and provide a straightforward measure of what “success” looks like. This reduces ambiguity, shrinks the solution space, and lets the AI operate predictably instead of guessing.

Prompting as an Engineering Specification

Prompting should be treated with the same formality as other engineering documents, not like casual conversation. Just as in software projects, vague requirements give rise to unpredictable and unusable outcomes. A good prompt is a specification that expresses intent, constraints, and success criteria. When prompt design is treated as engineering work, reliability improves markedly.

A practical example of this philosophy can be seen in tools like testRigor, where prompts are not casual queries but executable specifications. Test scenarios are written in structured natural language that encodes intent, constraints, and expected behavior explicitly. When a tester writes “login as a valid user and verify the dashboard loads within two seconds,” the language is not decorative; it is the control surface.

The reliability of the result depends entirely on the precision of the prompt, reinforcing the idea that prompting is closer to requirements engineering than conversation.

Read: Talk to Chatbots: How to Become a Prompt Engineer.

Prompts as Specifications

In engineering, fuzzy requirements are a recipe for disaster, and the same goes for AI prompts. A strong prompt is similar to a technical spec, design brief, or test charter that explicitly describes what is in scope (and out of scope), inputs, technologies, outputs, constraints, and acceptance criteria. With this level of precision, the AI can work within the stated guidelines instead of having to guess at intent.
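One way to make the specification idea concrete is to hold the parts of a prompt in a small data structure and render them into text. This is a minimal sketch under stated assumptions: the `PromptSpec` class and its field names are hypothetical, chosen to mirror the elements listed above, and the example values are taken from the authentication prompt in the comparison table.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """A prompt written as a small specification (field names are illustrative)."""

    task: str
    in_scope: list[str] = field(default_factory=list)
    out_of_scope: list[str] = field(default_factory=list)
    inputs: list[str] = field(default_factory=list)
    outputs: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)
    acceptance_criteria: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the spec as plain text, skipping any section left empty."""
        sections = [
            ("In scope", self.in_scope),
            ("Out of scope", self.out_of_scope),
            ("Inputs", self.inputs),
            ("Outputs", self.outputs),
            ("Constraints", self.constraints),
            ("Acceptance criteria", self.acceptance_criteria),
        ]
        lines = [f"Task: {self.task}"]
        for title, items in sections:
            if items:
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


if __name__ == "__main__":
    spec = PromptSpec(
        task="Design a scalable authentication system.",
        constraints=["1M users per day", "GDPR compliance"],
        acceptance_criteria=["Prioritizes simplicity over novelty"],
    )
    print(spec.render())
```

The structure itself does the useful work: an empty `constraints` or `acceptance_criteria` list is visible before the prompt is ever sent, which is harder to notice in free-form text.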

Context is State

AI conversations are naturally stateful to some extent, since each new prompt changes the current context of the conversation. Poor state handling results in drift, contradiction, and misalignment over time. Good prompts maintain state by summarizing the decisions that have been made and restating goals or constraints when needed. Read: AI Context Explained: Why Context Matters in Artificial Intelligence.
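The state-keeping habit described here can be sketched as a small helper that prepends the goal, constraints, and decisions so far to every new request. The `ConversationState` class and its method names are assumptions made for this illustration, and the example goal is borrowed from the roadmap prompt in the comparison table.

```python
from dataclasses import dataclass, field


@dataclass
class ConversationState:
    """Tracks what a long conversation has already settled (illustrative only)."""

    goal: str
    constraints: list[str] = field(default_factory=list)
    decisions: list[str] = field(default_factory=list)

    def record_decision(self, decision: str) -> None:
        """Remember a decision so later prompts can restate it."""
        self.decisions.append(decision)

    def build_prompt(self, request: str) -> str:
        """Prefix the new request with the goal, constraints, and decisions so far."""
        parts = [f"Goal: {self.goal}"]
        if self.constraints:
            parts.append("Constraints: " + "; ".join(self.constraints))
        if self.decisions:
            parts.append("Decisions so far: " + "; ".join(self.decisions))
        parts.append(f"Next request: {request}")
        return "\n".join(parts)


if __name__ == "__main__":
    state = ConversationState(
        goal="Create a six-month QA automation roadmap",
        constraints=["five engineers", "limited budget"],
    )
    state.record_decision("Start with login and checkout flows")
    print(state.build_prompt("Propose milestones for months one and two."))
```

Restating state on every turn costs a few extra lines of prompt, but it means a contradiction shows up in the text you send rather than in the answer you get back.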

Asking vs. Directing

Engineering distinguishes decisively between exploration and execution, and prompting ought to do likewise. Exploratory prompts deliberately expand the solution space, whereas directive prompts narrow it toward the target solution. Confusing these modes frequently leads to responses that are either too unfocused or too narrow.

Better AI Prompt: Language Matters

The precision of the language determines how strong a signal the AI receives. AI systems do not act on intentions as such; they respond to the linguistic patterns used to express those intentions. Minor variations in wording can lead to large differences in the depth, focus, and structure of the reasoning. Serious prompting treats language as a control mechanism rather than an aesthetic flourish.

Why Words Matter More Than You Think

AI responds to linguistic signals in which verbs stand for action, adjectives for evaluation, and the structure itself represents the flow of reasoning. If you substitute, say, “analyze” for “explain”, the expected depth and rigor of the answer changes. Likewise, “failure modes” works better than “issues” because it signals a more methodical, mechanistic approach.

Domain Language as a Control Surface

Domain-specific terms activate deeper and more relevant knowledge patterns in the model. They also signal an assumed level of knowledge and cut out introductory or explanatory material. Prompting accuracy and output quality both rise sharply with domain fluency. Read: Why Testers Require Domain Knowledge?

Grammar as Structure, Not Style

Grammar is not just about being right or wrong; it is about how ideas are divided and linked logically. Poor sentence structure breaks reasoning paths and leads to inconclusive answers. Clear grammar results in clearer inference and closer alignment with intention.

Iteration as Alignment Mechanism

Good prompting is seldom one-shot and should be treated as an alignment process rather than a single instruction. Each cycle brings the machine’s interpretation and the human’s intent closer together. As changes are made, ambiguity shrinks and relevance grows. Iteration isn’t the enemy of efficiency; it’s how we find alignment.

Read: What Is “Vibe Slopping”? The Hidden Risk Behind AI-Powered Coding.

Prompting Is Rarely One-Shot

AI output improves gradually through refinement: clarifying misunderstandings, narrowing the scope, and adding constraints. Each iteration sharpens the focus. Over time, this helps the AI’s responses converge on the user’s original intent.

The Power of Critique

Direct critique of the AI’s output is usually more useful than rewriting the entire prompt. Pointing out what is too general, what is missing, and which assumptions are wrong gives the model precise, targeted feedback. Expert users shape and guide output through feedback rather than tossing it all away.

Evaluating AI Output

Assessing the results of AI requires active judgment, not passive reception. Fluency and conviction together give a deceptive appearance of correctness to answers that are plain wrong or poorly conceived. Rigorous examination keeps AI in the role of decision support rather than unquestioned advisor. Even well-written prompts can produce misleading output if it goes unscrutinized.

Read: How to use AI to test AI.

Confidence is Not Correctness

AI can often generate answers with high confidence, even when the answer is wrong or incomplete. Strong evaluation requires domain understanding, skepticism, and judgment. Good prompting also means knowing when to question, or even reject, an output.

Hallucinations Are a Human-Model Interaction Problem

Hallucinations often occur when prompts call for certainty where it does not exist or when constraints are vague. They can also appear when a prompt asks for information that cannot be verified, or relies on implicit context that the model cannot plausibly reconstruct. Careful prompting can reduce the risk of hallucination by explicitly asking the AI to state its assumptions, flag its uncertainties, and curtail speculative reasoning. Read: What are AI Hallucinations? How to Test?
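The mitigation described above amounts to appending explicit uncertainty instructions to a prompt. A minimal sketch follows; the function name `add_guardrails` and the wording of the three rules are assumptions for illustration, and no wording guarantees that a model will comply.

```python
# Illustrative rules only; adjust the wording to your own use case.
GUARDRAILS = (
    "State every assumption you make.",
    "Mark any claim you cannot verify from the provided material as uncertain.",
    "If the evidence is insufficient, say so instead of speculating.",
)


def add_guardrails(prompt: str, guardrails: tuple[str, ...] = GUARDRAILS) -> str:
    """Append explicit uncertainty instructions to a prompt as a bulleted list."""
    bullet_list = "\n".join(f"- {rule}" for rule in guardrails)
    return f"{prompt.strip()}\n\nWhen answering:\n{bullet_list}"


if __name__ == "__main__":
    base = "Analyze the likely causes of this failure using only the provided logs."
    print(add_guardrails(base))
```

Instructions like these reduce the pressure to sound certain, but the output still needs the human evaluation described in this section.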

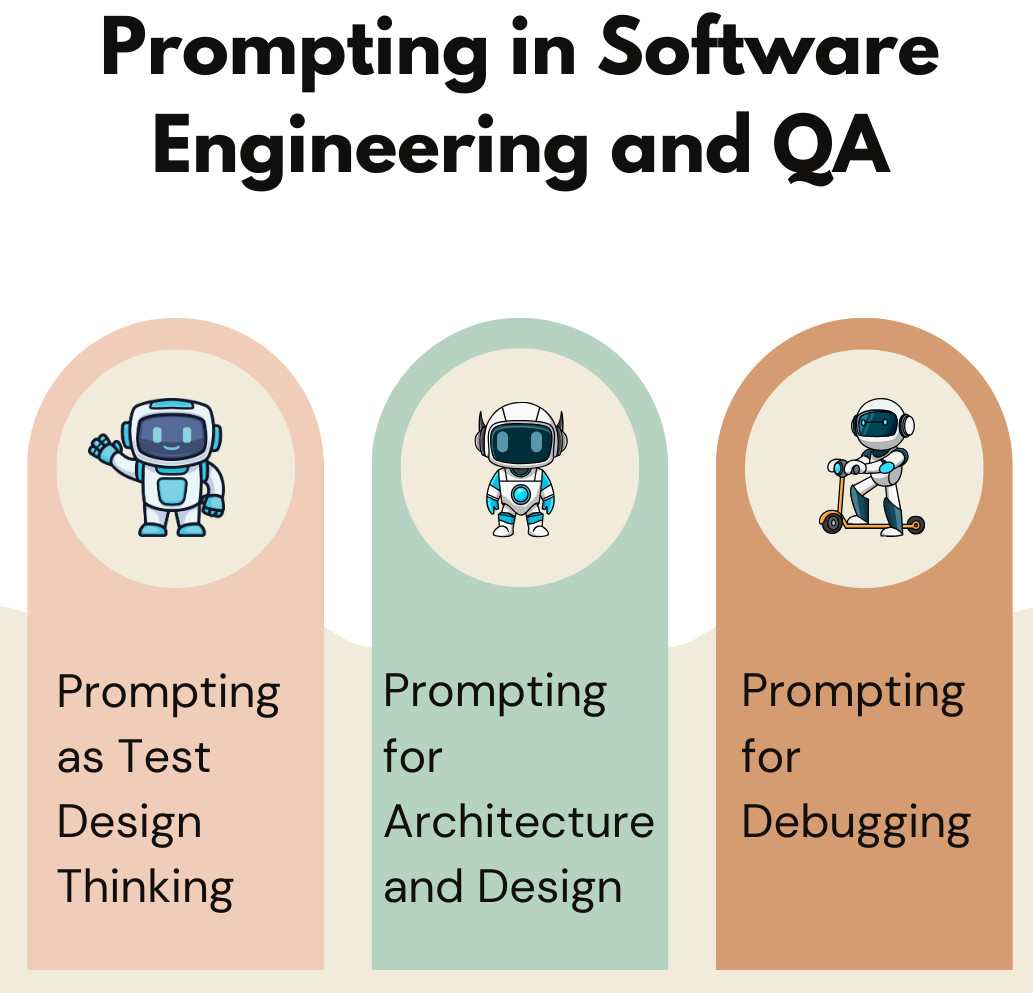

Prompting in Software Engineering and QA

Prompting matters especially in software engineering and testing, where precision and structure are already core skills. In these fields, prompts are formal problem statements and not casual questions. With well-formed prompts, AI can support testing, debugging, and design work more reliably. Aligned with engineering thinking, prompting ceases to be a risk and turns into a force multiplier.

Prompting as Test Design Thinking

The best prompts are like good test cases: they have clear preconditions, relevant actions from the user’s perspective, and an expected result. This structure eliminates ambiguity and steers the AI toward useful possibilities. Testers are often strong at prompting because they think intuitively about edge cases, constraints, and observable outcomes.

This is why many QA professionals find tools like testRigor intuitive. The platform enforces test-design discipline by requiring prompts that resemble high-quality test cases: clear preconditions, user-level actions, and verifiable outcomes. Ambiguous instructions fail fast, while precise prompts produce stable automation. In this sense, testRigor does not abstract away thinking; it demands better thinking upfront, exactly as good prompting does. Read: How to use AI effectively in QA.

Prompting for Architecture and Design

Architectural prompts need to state scale, constraints, and trade-offs explicitly in order to be successful. Without this, AI will come up with ideas that are either too idealized or not implementable. Clear architectural framing also ensures the output remains anchored in real-world feasibility.

Prompting for Debugging

Good debugging prompts describe just enough symptoms to be clear without burying them in detail. The intent is to screen out irrelevant noise that can cloud the analysis. Bad prompts, by contrast, lead to wild guessing rather than diagnosis.

Read more: Prompt Engineering in QA and Software Testing.

Prompting in Autonomous and Agentic Systems

As AI systems gain autonomy and agency, prompting moves from a usability concern to a safety-critical one. Prompts increasingly influence not only responses, but also planning, decision-making, and the execution of actions. Mistakes made at the prompt stage can propagate to later phases and cause increasing damage. In these systems, reliability, control, and alignment are governed by prompt quality.

Even in semi-autonomous systems like testRigor, where AI decides how to locate elements or adapt to UI changes, prompt quality functions as policy. Clear boundaries (what must be verified, what constitutes failure, and what can change) govern how far autonomy extends. This mirrors the broader truth of agentic systems: prompts are not instructions alone; they are behavioral constraints. Read: Different Evals for Agentic AI: Methods, Metrics & Best Practices.

Why Prompt Quality Matters More as Autonomy Increases

When AI acts and plans on its own, a weak prompt can encode a flawed intent that carries over into every action. These errors compound over time and can hide failures within a course of action that appears consistent overall. Strong prompts encode well-defined goals, bound the level of autonomy, and keep the system aligned with human interests.

Prompts as Policy, Not Just Instructions

In agentic systems, prompts effectively serve as implicit policies and not just plain task instructions. They are how guardrails, accepted behaviors, and operational boundaries are established. Poorly crafted prompts thereby create a systemic risk: unsafe or unclear policies get baked into autonomous behavior.

Prompting as a Meta-Skill and a Leadership Skill

Prompting has emerged as a meta-skill shaping how people think, communicate, and lead. It is where clarity, decision-making, and technical fluency intersect. Leaders who prompt well are also better at translating intent into action, whether they are doing so with humans or machines. As AI becomes integrated into the workday, the prompts you write increasingly reflect what kind of leader you are.

Prompting Improves Thinking

Writing a prompt demands clear focus, prioritization, and precision, because your ideas need to be organized into an explicit structure. This discipline reveals reasoning gaps and bad assumptions early on. It’s no wonder, then, that those who prompt well often get ideas across more clearly, think them through more closely, and lead more effectively.

Prompting as Organizational Capability

Teams with strong prompting skills use AI more effectively and with less rework. Clear prompts improve decision quality by reducing ambiguity and misalignment. As AI adoption grows, prompt literacy is becoming a core organizational competency rather than an individual advantage.

Conclusion

AI is not a substitute for human thought; it reflects the clarity and discipline of that thought. Good prompts are not clever tricks but a conscious statement of intent, where clear thinking leads to useful AI results. The future will belong to those who learn to speak precisely to a form of intelligence that doesn’t think quite like them.
