Top QA Trends for 2026


Software quality assurance is no longer a mere supporting function; it now acts as a strategic cornerstone of digital transformation. As organizations shorten release cycles, embrace AI-driven development, and scale across cloud-native ecosystems, QA is undergoing one of the most significant transformations in its history.

The year 2026 represents a tipping point. Traditional testing practices, including manual validation, script-heavy automation, and siloed quality assurance (QA) teams, are gradually becoming a thing of the past. They are being replaced by an intelligent, autonomous, and deeply integrated quality engineering ecosystem.


The State of QA in 2026

- QA is no longer a phase: It is embedded across the entire SDLC. Quality assurance now begins at the earliest stages of development and continues through deployment, ensuring continuous quality rather than late-stage fixes.

- Testing is no longer just validation: It is now prediction and prevention. Modern QA leverages AI and analytics to identify potential issues before they occur, shifting the focus from detecting defects to proactively avoiding them.

- QA teams are no longer execution-focused: They are becoming decision-makers. QA professionals are increasingly influencing product and engineering decisions by providing insights, risk assessments, and quality-driven recommendations.

The digital landscape is evolving, and so is the software testing market. As businesses expand their digital footprint, there is an ever-growing need for sophisticated test automation.

Simultaneously, AI and automation are revolutionizing the way testing is performed by significantly cutting down manual effort and speeding up delivery cycles. This transition empowers QA teams to escape mind-numbing execution and spend more time on strategic initiatives, innovation, and quality engineering excellence.

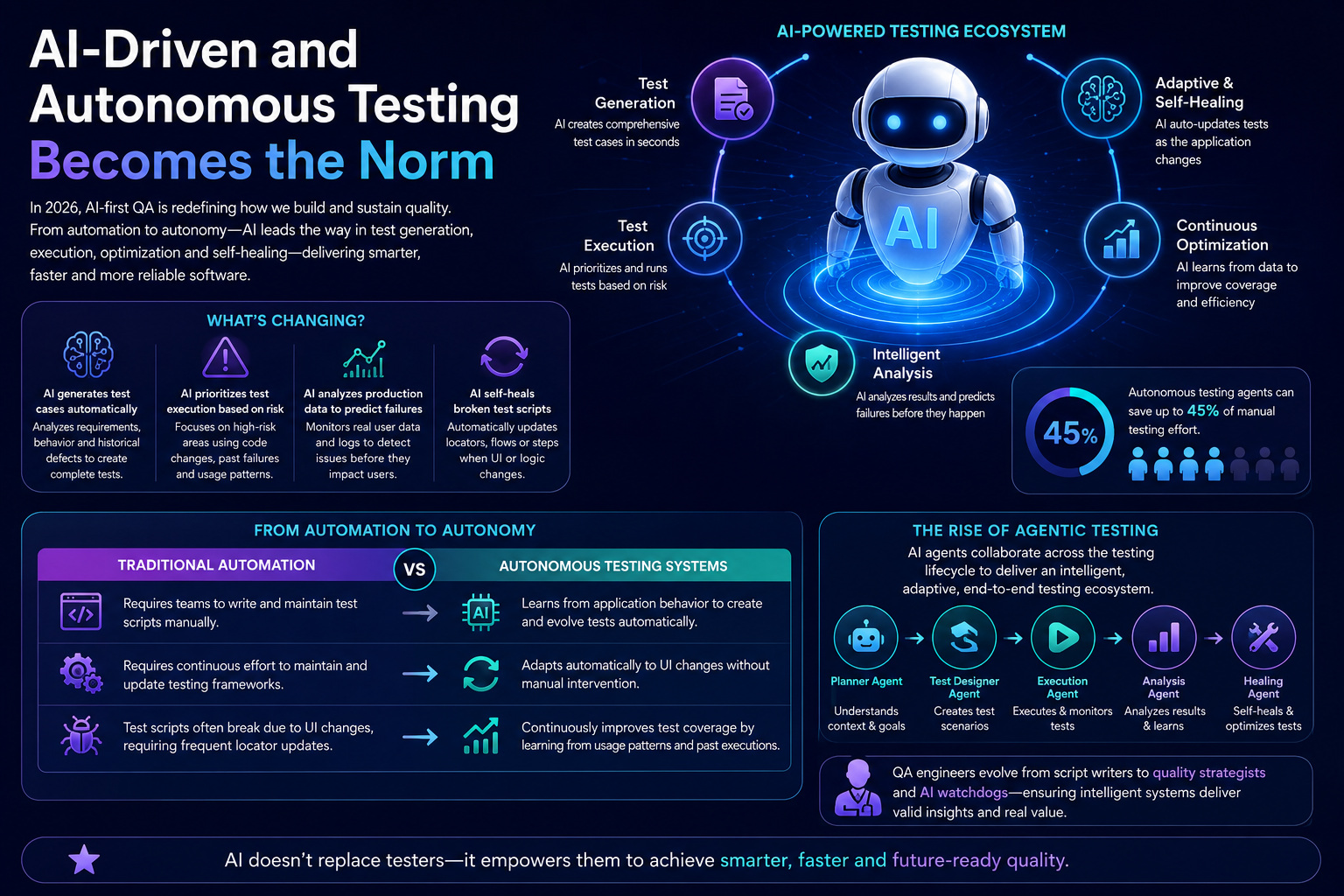

AI-Driven and Autonomous Testing Becomes the Norm

In 2026, the most significant shift is “AI-first” QA, where AI re-imagines how we build and sustain quality. AI has moved from assisting testers to leading test generation, execution, and optimization. This evolution is converting QA from a lagging function into a leading, intelligence-driven discipline.

What’s Changing?

- AI generates test cases automatically: AI can analyze requirements, user behavior, and historical defects, then generate complete test cases without lengthy manual work from testers, which speeds up the test design phase considerably.

- AI prioritizes test execution based on risk: Rather than running all tests to the same degree, AI pinpoints high-risk areas by analyzing code changes, previous failures, and usage patterns to ensure that critical tests are always run first.

- AI analyzes production data to predict failures: AI can monitor user interactions and production logs, detecting problematic trends and predicting failures before they impact users.

- AI self-heals broken test scripts: With frameworks that leverage AI, locators, flows, or test steps can be updated automatically whenever the application UI changes, thereby reducing maintenance overhead.
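
To make risk-based prioritization concrete, here is a minimal sketch that ranks tests by how much they overlap with changed files and how often they failed recently. The `CaseRecord` fields, the equal 0.5 weights, and the sample data are assumptions made for illustration; an AI-driven tool would typically learn such weights from history rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CaseRecord:
    name: str
    covered_files: frozenset[str]  # files this test exercises
    recent_failure_rate: float     # share of recent runs that failed, 0.0 to 1.0


def risk_score(case: CaseRecord, changed_files: set[str]) -> float:
    """Hypothetical heuristic: weigh change overlap and failure history equally."""
    if case.covered_files:
        overlap = len(case.covered_files & changed_files) / len(case.covered_files)
    else:
        overlap = 0.0
    return 0.5 * overlap + 0.5 * case.recent_failure_rate


def prioritize(cases: list[CaseRecord], changed_files: set[str]) -> list[CaseRecord]:
    """Return test cases ordered from highest to lowest risk score."""
    return sorted(cases, key=lambda c: risk_score(c, changed_files), reverse=True)


cases = [
    CaseRecord("test_login", frozenset({"auth.py"}), 0.10),
    CaseRecord("test_checkout", frozenset({"cart.py", "payment.py"}), 0.30),
    CaseRecord("test_profile", frozenset({"profile.py"}), 0.00),
]
ordered = prioritize(cases, changed_files={"payment.py"})
print([c.name for c in ordered])
# prints ['test_checkout', 'test_login', 'test_profile']
```

The checkout test runs first because it touches a changed file and has the worst recent failure history.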

Autonomous testing agents are becoming more widespread among organizations, as they can potentially save up to 45% of manual testing effort.

From Automation to Autonomy

| Traditional Automation | Autonomous Testing Systems |
|---|---|
| Traditional automation requires teams to write and maintain test scripts manually. | Autonomous testing systems learn from application behavior to create and evolve tests automatically. |
| Test scripts often break due to UI changes, requiring frequent locator updates. | These systems adapt automatically to UI changes without requiring manual intervention. |
| It requires continuous effort to maintain and update testing frameworks over time. | They continuously improve test coverage by learning from usage patterns and past executions. |
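
The locator breakage described above can be pictured with a small fallback strategy. This is a rule-based stand-in, not how any particular AI tool works: the page is modeled as a list of attribute dictionaries, and `find_with_healing` plus the sample locators are hypothetical names chosen for the sketch.

```python
from typing import Optional

# A toy "page": each element is a dict of attributes. A real framework would
# query a live DOM instead; this stand-in keeps the sketch runnable.
Element = dict[str, str]


def find(page: list[Element], attribute: str, value: str) -> Optional[Element]:
    """Return the first element whose attribute equals value, or None."""
    for element in page:
        if element.get(attribute) == value:
            return element
    return None


def find_with_healing(
    page: list[Element], locators: list[tuple[str, str]]
) -> tuple[Element, tuple[str, str]]:
    """Try locators in order (primary first) and report which one matched."""
    for attribute, value in locators:
        element = find(page, attribute, value)
        if element is not None:
            return element, (attribute, value)
    raise LookupError(f"No locator matched: {locators}")


# After a UI change the button's id was renamed, but its visible text survived.
page = [
    {"tag": "input", "id": "email"},
    {"tag": "button", "id": "btn-primary", "text": "Submit"},
]
element, used = find_with_healing(page, [("id", "submit-btn"), ("text", "Submit")])
print(used)  # prints ('text', 'Submit')
```

A test runner could log that the fallback was used and promote it to the primary locator, which is the maintenance step that self-healing tools aim to automate.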

The Rise of Agentic Testing

The paradigm is shifting towards agent-based testing, where multiple AI agents work together, each covering a different part of the testing lifecycle. Specialized agents for test generation, execution, and analysis cooperate to form an intelligent, adaptive testing ecosystem.

This is also changing the role of QA engineers from script writers to quality strategists who define how and where testing should happen. Rather than executing tests manually, they act as overseers of the AI, ensuring that intelligent systems function as intended and generate valid insights.

Read: Different Evals for Agentic AI: Methods, Metrics & Best Practices.

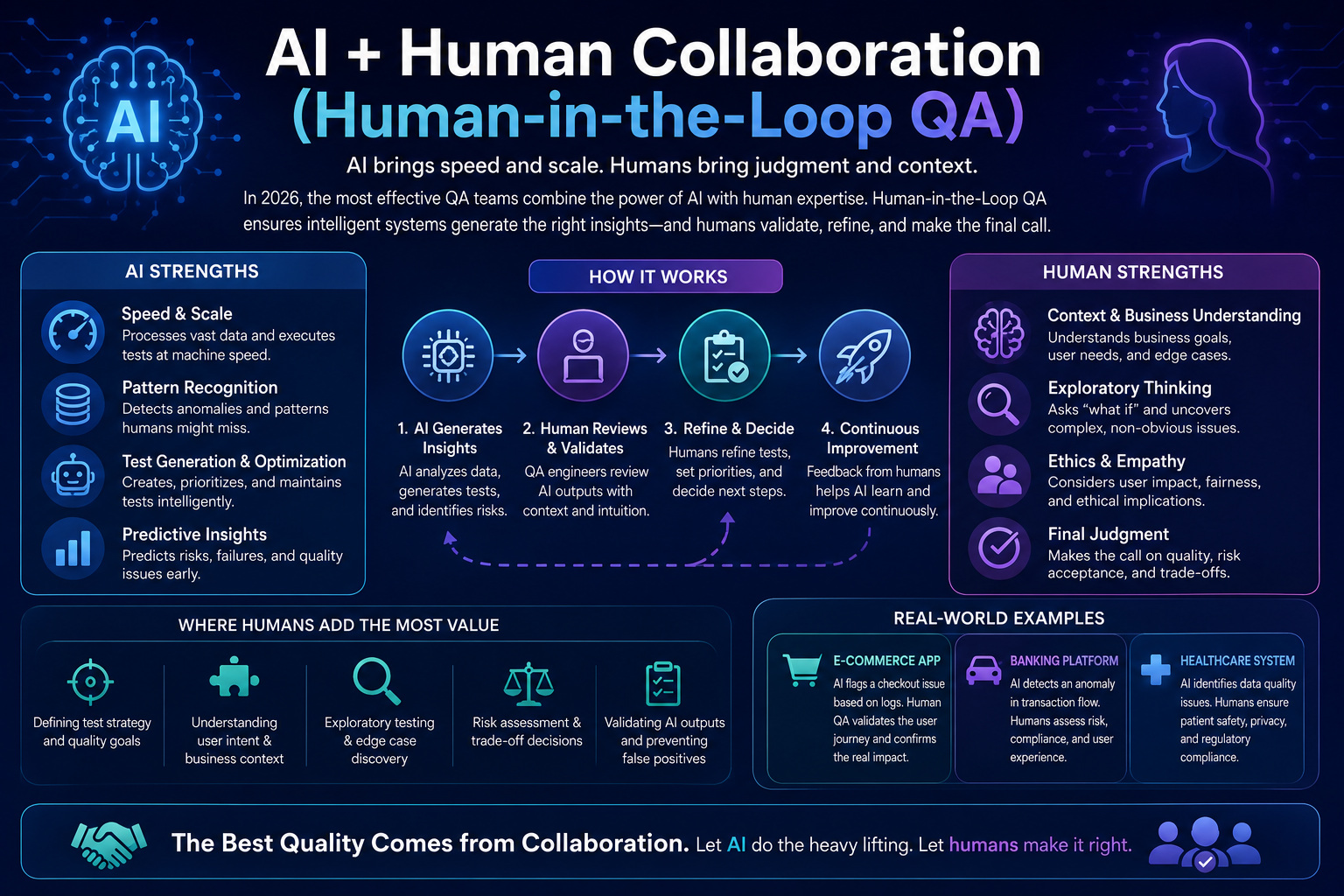

AI + Human Collaboration (Human-in-the-Loop QA)

Amid all the hype, AI is not taking over QA; it is complementing and amplifying the role of quality engineering. Replacing QA professionals entirely with AI can result in serious malfunctions and financial losses. Even AI-driven testing systems cannot replace human intelligence; experts are still needed to drive the tools, validate the results, and ensure their effectiveness.

Read: How to Keep Human In The Loop (HITL) During Gen AI Testing?

Why Human-in-the-Loop Matters

| AI Capabilities | Human Strengths |
|---|---|
| AI can generate test cases rapidly, significantly reducing test creation time. | Humans understand business context and user intent beyond what data alone can provide. |
| AI can identify patterns across large datasets and detect anomalies efficiently. | Humans can validate complex edge cases that require intuition and domain knowledge. |
| AI can predict potential failures using historical and real-time data analysis. | Humans ensure usability and alignment with business goals and customer expectations. |

The New QA Workflow

AI creates test scenarios from application behavior, user flows, and historical defect patterns, saving hours of manual test design. QA professionals then review these scenarios and add the domain knowledge, edge cases, and business context that AI is not designed to consider.

Once the scenarios are validated, AI runs the tests at scale and analyzes the results with speed and precision, detecting anomalies, patterns, and potential risks. QA engineers then interpret these results: they apply critical thinking, put the numbers in context, and decide what the findings actually mean for the product.
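
One way to picture this workflow is a review gate between generation and execution. The sketch below is only an illustration: the `Scenario` and `Review` types and the sample scenarios are assumptions, not part of any specific tool.

```python
from dataclasses import dataclass
from enum import Enum


class Review(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Scenario:
    title: str
    source: str                      # "ai" or "human"
    review: Review = Review.PENDING
    reviewer_note: str = ""


def record_review(scenario: Scenario, approved: bool, note: str = "") -> None:
    """Store a human reviewer's decision on a scenario."""
    scenario.review = Review.APPROVED if approved else Review.REJECTED
    scenario.reviewer_note = note


def runnable(scenarios: list[Scenario]) -> list[Scenario]:
    """Only human-written or human-approved scenarios reach execution."""
    return [
        s for s in scenarios
        if s.source == "human" or s.review is Review.APPROVED
    ]


scenarios = [
    Scenario("Checkout with expired card", source="ai"),
    Scenario("Refund after partial shipment", source="human"),
    Scenario("Login with 10,000-character password", source="ai"),
]
record_review(scenarios[0], approved=True, note="Matches a known support issue")
record_review(scenarios[2], approved=False, note="Blocked earlier by input limits")

print([s.title for s in runnable(scenarios)])
# prints ['Checkout with expired card', 'Refund after partial shipment']
```

Keeping the reviewer's note alongside the decision preserves the business context that the generated scenario lacked.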

QAOps: The Convergence of QA, DevOps, and Observability

QAOps is redefining how modern teams build, test, and operate software by merging quality into every stage of delivery. In 2026, it’s no longer optional; it’s the backbone of fast, reliable, and scalable engineering.

What is QAOps?

QAOps unifies testing, development, operations, and monitoring in a seamless and continuous workflow. Instead of treating quality as a final checkpoint, it moves validation throughout the entire software lifecycle. This change ensures that testing does not remain siloed and becomes a shared responsibility.

Why does it matter? Because modern systems are very distributed, very cloud-native, and very much alive. Conventional testing methodologies are simply not equipped to cope with the pace and complexity of microservices-led architectures. With QAOps, testing becomes continuous, feedback loops close in real-time, and quality gets ensured proactively. Therefore, issues are identified and fixed before users ever see them.

Key Capabilities

- Continuous testing in CI/CD pipelines ensures that every code change is automatically validated as part of the build and deployment process.

- Real-time monitoring and alerting enable teams to track application performance and detect issues instantly in production environments.

- Feedback loops from production to development ensure that insights from real user behavior, logs, and system metrics are continuously fed back into the development cycle.
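
The capabilities above can be combined into a single release gate that reads both CI results and production signals. This is a minimal sketch; the `PipelineSignals` fields and the default thresholds are hypothetical values chosen for illustration, and each team would set its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PipelineSignals:
    tests_failed: int        # from the CI test run
    line_coverage: float     # 0.0 to 1.0, from the CI test run
    prod_error_rate: float   # 0.0 to 1.0, from production monitoring


def gate(
    signals: PipelineSignals,
    min_coverage: float = 0.80,
    max_error_rate: float = 0.01,
) -> list[str]:
    """Return the reasons to block a release; an empty list means proceed."""
    reasons = []
    if signals.tests_failed > 0:
        reasons.append(f"{signals.tests_failed} test(s) failed")
    if signals.line_coverage < min_coverage:
        reasons.append(
            f"coverage {signals.line_coverage:.1%} is below {min_coverage:.1%}"
        )
    if signals.prod_error_rate > max_error_rate:
        reasons.append(
            f"production error rate {signals.prod_error_rate:.1%} "
            f"exceeds {max_error_rate:.1%}"
        )
    return reasons


signals = PipelineSignals(tests_failed=0, line_coverage=0.85, prod_error_rate=0.03)
reasons = gate(signals)
print(reasons or "release can proceed")
```

Here the build is green, yet the gate still blocks the release because the production error rate is above the threshold, which is the feedback loop from production to development in miniature.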

Read: Continuous Integration and Continuous Testing: How to Establish?

Shift-Left + Shift-Right = Shift-Everywhere Testing

- Shift-Left: Validates quality from the very beginning of the development lifecycle, starting with requirement analysis. It includes practices such as static code analysis and automated unit testing that find defects early, before they become expensive to fix. Teams that detect problems early reduce rework, cost, and risk.

- Shift-Right: Focuses on testing and validating in production, under real-world conditions. It relies on monitoring, chaos engineering (deliberately injecting failures), and analysis of actual user behavior to surface unknowns. This ensures systems are resilient, reliable, and in line with what users expect.

- Shift-Everywhere: The combination of Shift-Left and Shift-Right transforms testing into a continuous process. It becomes context-aware by adapting to real-time data and system behavior. Ultimately, testing evolves into a data-driven practice that consistently improves product quality and user experience.

Testing AI-Generated Code

As AI coding assistants like GitHub Copilot become mainstream, they are accelerating development at an unprecedented pace. However, this shift introduces new testing challenges that traditional QA approaches are not fully equipped to handle.

New Risks

- Hidden logic flaws can be introduced in AI-generated code, making defects harder to identify using traditional testing methods.

- Security vulnerabilities may arise because AI models can replicate insecure coding patterns from their training data.

- The lack of explainability makes it difficult for developers and QA teams to understand the reasoning behind the generated code.

New Testing Approaches

Teams are using AI-specific code review strategies and improving static analysis techniques to overcome these challenges. QA teams need to validate not just whether the code works, but also whether it conforms to the expected logic and intent.

In addition, behavioral validation is becoming essential to ensure that AI-generated outputs behave correctly under real-world scenarios. QA teams must now validate not only code correctness but also the quality and reliability of AI-driven decisions.
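
Behavioral validation can be as simple as checking properties that must hold for any input, rather than a handful of fixed examples. In the sketch below, `generated_dedupe` is a stand-in for an AI-generated function under review, and the three properties are assumptions about what "remove duplicates, keep order" should mean.

```python
import random


def generated_dedupe(items: list[int]) -> list[int]:
    """Stand-in for an AI-generated function under review (hypothetical)."""
    seen = set()
    result = []
    for item in items:
        if item not in seen:
            seen.add(item)
            result.append(item)
    return result


def check_properties(func, trials: int = 200, seed: int = 0) -> list[str]:
    """Run func on random inputs and collect every violated property."""
    rng = random.Random(seed)  # fixed seed keeps failures reproducible
    violations = []
    for _ in range(trials):
        data = [rng.randint(0, 9) for _ in range(rng.randint(0, 15))]
        out = func(list(data))
        if len(out) != len(set(out)):
            violations.append(f"duplicates remain for {data}")
        if set(out) != set(data):
            violations.append(f"elements lost or invented for {data}")
            continue  # the order check below assumes the same elements
        if out != sorted(out, key=data.index):
            violations.append(f"first-occurrence order broken for {data}")
    return violations


print(len(check_properties(generated_dedupe)))  # prints 0
```

A plausible-looking alternative such as `lambda xs: sorted(set(xs))` passes the first two properties but fails the order check, which is the kind of hidden logic flaw that example-based tests can miss.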

Continuous Security Testing (DevSecOps)

Security is no longer treated as a separate phase; it is deeply embedded in QA practices across the lifecycle. This shift is driven by increasing cyber threats, stricter regulatory compliance requirements, and growing concerns around data privacy.

As a result, organizations are integrating automated security scans directly into CI/CD pipelines and adopting continuous vulnerability assessment. Runtime security monitoring further ensures that threats are detected and addressed even after deployment, with many teams already embracing shift-left security practices. Read more about DevSecOps.

To support this evolution, techniques like SAST, DAST, and SCA are becoming standard in modern testing strategies. Security testing is now continuous, automated, and risk-based, enabling teams to proactively identify and mitigate vulnerabilities.
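
A risk-based approach usually means that not every finding blocks a release. The sketch below merges findings from several scanners and blocks only on those at or above a chosen severity. The `Finding` format and the sample rules are hypothetical; real SAST, DAST, and SCA tools each have their own report formats that would need to be mapped into something like this.

```python
from dataclasses import dataclass

SEVERITY_ORDER = {"low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass(frozen=True)
class Finding:
    tool: str       # "sast", "dast", or "sca"
    rule: str       # scanner-specific rule or advisory name
    severity: str   # one of the SEVERITY_ORDER keys


def blocking_findings(findings: list[Finding], threshold: str = "high") -> list[Finding]:
    """Return findings whose severity is at or above the threshold."""
    minimum = SEVERITY_ORDER[threshold]
    return [f for f in findings if SEVERITY_ORDER[f.severity] >= minimum]


findings = [
    Finding("sast", "hardcoded-credential", "critical"),
    Finding("sca", "outdated-dependency", "medium"),
    Finding("dast", "missing-security-header", "low"),
]
blockers = blocking_findings(findings)
print([f.rule for f in blockers])  # prints ['hardcoded-credential']
```

Lower-severity findings are still reported, but they go to a backlog instead of stopping the pipeline.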

Cloud-Native and Scalable Testing Infrastructure

Testing infrastructure has rapidly moved to the cloud because that’s how modern applications are built. This transition has been mainly powered by the need to scale, cost-effective solutions, and globally distributed teams. Cloud-native environments let teams spin up and scale resources as needed for testing and experimentation without making a large upfront investment.

The key trends are cloud-based test environments for on-demand execution and seamless cross-browser and cross-device testing. These capabilities let tests run in parallel at massive scale, which can substantially reduce test cycle time.

As a result, teams get shorter feedback loops and closer collaboration across development, QA, and operations.
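
The effect of parallel execution can be sketched with Python's standard `concurrent.futures` module. The `run_suite` function is a hypothetical stand-in that only sleeps; in practice it would dispatch a suite to a cloud browser or device and wait for the result.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_suite(name: str) -> tuple[str, bool]:
    """Stand-in for dispatching one suite to a remote browser or device."""
    time.sleep(0.1)  # simulates waiting on a remote environment
    return name, True


suites = ["chrome-desktop", "firefox-desktop", "safari-mobile", "edge-desktop"]

start = time.perf_counter()
with ThreadPoolExecutor(max_workers=4) as pool:
    # map() preserves input order, so results line up with the suite names.
    results = dict(pool.map(run_suite, suites))
elapsed = time.perf_counter() - start

print(results)
print(f"wall-clock time: {elapsed:.2f}s for {len(suites)} suites")
```

Because the suites spend their time waiting rather than computing, running them concurrently keeps the wall-clock time close to that of the slowest suite instead of the sum of all of them.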

Read: Test Scalability.

Observability-Driven Testing

Observability is becoming a key driver of QA by enabling teams to deeply understand system behavior in real time. It relies on logs, metrics, and traces to provide a complete picture of how applications perform across environments. This visibility helps teams move beyond surface-level testing to truly data-informed quality engineering.

Observability transforms QA by helping identify root causes faster, enabling proactive testing, and improving debugging efficiency. QA teams now rely heavily on production data to enhance test coverage and uncover real-world issues that traditional testing might miss. As a result, testing becomes more accurate, continuous, and aligned with actual user experiences.
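
As a small illustration of using production data to enhance coverage, the sketch below compares endpoints seen in production traffic with the endpoints a test suite exercises. The endpoint names and the `coverage_gaps` helper are hypothetical; in practice the request list would come from logs or traces.

```python
from collections import Counter


def coverage_gaps(
    production_requests: list[str],
    tested_endpoints: set[str],
    top_n: int = 3,
) -> list[tuple[str, int]]:
    """Return the most-used production endpoints that no test exercises."""
    traffic = Counter(production_requests)
    untested = [
        (endpoint, hits)
        for endpoint, hits in traffic.most_common()
        if endpoint not in tested_endpoints
    ]
    return untested[:top_n]


production_requests = (
    ["/checkout"] * 50 + ["/search"] * 30 + ["/export"] * 12 + ["/login"] * 8
)
tested_endpoints = {"/checkout", "/login"}

print(coverage_gaps(production_requests, tested_endpoints))
# prints [('/search', 30), ('/export', 12)]
```

Ranking the gaps by real traffic tells the team which missing tests would protect the most users.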

Performance Engineering as a Continuous Discipline

Performance testing is evolving into performance engineering, a change in focus from infrequent validation to continuous performance assurance. Instead of running load tests only at fixed intervals, teams validate performance at multiple points in the development lifecycle.

This move puts a heavy focus on real-time performance monitoring, scalability testing, and user experience metrics. As a result, teams can catch performance problems early, scale systems reliably, and give users a consistent experience.
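
Continuous performance assurance often comes down to checking a latency percentile against a budget on every build. The sketch below uses the standard library's `statistics.quantiles`; the sample latencies and the budget values are made up for illustration.

```python
import statistics


def p95(samples_ms: list[float]) -> float:
    """95th percentile of the samples (requires at least two data points)."""
    # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
    return statistics.quantiles(samples_ms, n=100)[94]


def within_budget(samples_ms: list[float], budget_ms: float) -> bool:
    """True when the 95th-percentile latency does not exceed the budget."""
    return p95(samples_ms) <= budget_ms


# Hypothetical response times in milliseconds collected during a test run.
samples_ms = [float(ms) for ms in range(1, 101)]

print(within_budget(samples_ms, budget_ms=100.0))  # prints True
print(within_budget(samples_ms, budget_ms=90.0))   # prints False
```

Checking a high percentile rather than the average keeps slow outliers, which users notice most, from hiding behind a healthy mean.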

Accessibility Testing Becomes Mandatory

Accessibility testing has transitioned from optional to mandatory in modern software quality practices. This trend is fueled by laws, the principles of inclusive design, and the quest to achieve a better user experience for all users.

Organizations are now automating accessibility checks and building them into CI/CD pipelines. Testing is also more focused on UX validation, which helps ensure applications are usable and accessible in the real world.
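
A single automated accessibility check is enough to show how such rules fit into a pipeline. The sketch below uses only Python's built-in `html.parser` to flag images that have no `alt` attribute at all. It is deliberately narrow: an empty `alt=""` is accepted because that is how decorative images are marked, and a full audit covers far more than this one rule.

```python
from html.parser import HTMLParser


class MissingAltChecker(HTMLParser):
    """Collects the src of every <img> tag that lacks an alt attribute."""

    def __init__(self) -> None:
        super().__init__()
        self.violations: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attributes = dict(attrs)
            if "alt" not in attributes:
                self.violations.append(attributes.get("src") or "<no src>")


html = """
<img src="logo.png" alt="Company logo">
<img src="chart.png">
<img src="divider.png" alt="">
"""

checker = MissingAltChecker()
checker.feed(html)
print(checker.violations)  # prints ['chart.png']
```

A CI job could fail the build whenever `violations` is non-empty, while manual review still judges whether the alt text that does exist is meaningful.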

Exploratory Testing Makes a Comeback

Interestingly, while automation continues to grow, exploratory testing is becoming even more important in modern QA practices. This is because AI cannot fully replicate human intuition, especially when it comes to understanding unexpected behaviors and nuanced user experiences.

Edge cases often require creativity and out-of-the-box thinking that automated scripts may overlook. Additionally, validating user experience demands a human perspective to assess usability, emotion, and real-world interaction.

As a result, the role of QA is shifting toward critical thinking and deeper exploration of unknown scenarios. Testers are now expected to validate real user behavior and uncover issues that go beyond predefined test cases.

Read: Testing AI Tone, Empathy, and Context Awareness.

Ethical AI Testing and Governance

As AI continues to expand, the importance of its ethical behavior and effects is becoming more prominent. It is necessary to actively address key areas such as bias detection and prevention, transparency and explainability, and fairness in order for AI systems to be responsible and trustworthy.

QA has become an essential part of validating AI outputs for regulatory compliance and protecting users from harmful behavior. This goes beyond functional testing; it extends to ethics, accountability, and governance.
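
Bias detection can start with a simple group-level comparison. The sketch below computes the gap in positive-outcome rates between groups, a measure often called the demographic parity difference. The group names, outcomes, and the 0.2 threshold are all hypothetical, and a single metric like this is a starting signal rather than proof of fairness.

```python
def positive_rate(outcomes: list[int]) -> float:
    """Share of positive (1) outcomes in a list of 0/1 decisions."""
    return sum(outcomes) / len(outcomes)


def parity_gap(outcomes_by_group: dict[str, list[int]]) -> float:
    """Largest difference in positive-outcome rate between any two groups."""
    rates = [positive_rate(outcomes) for outcomes in outcomes_by_group.values()]
    return max(rates) - min(rates)


# Hypothetical model decisions (1 = approved) split by a protected attribute.
decisions = {
    "group_a": [1, 1, 0, 1],
    "group_b": [1, 0, 0, 0],
}

gap = parity_gap(decisions)
print(gap)  # prints 0.5

MAX_ALLOWED_GAP = 0.2  # assumed threshold; set per policy and regulation
print("needs review" if gap > MAX_ALLOWED_GAP else "within threshold")
# prints needs review
```

A failed check like this does not say why the gap exists; it flags the model for the human review and governance process described above.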

Read: AI Compliance for Software.

Testing for Emerging Technologies (IoT, AR/VR, Blockchain)

- IoT Testing: Focuses on ensuring seamless device interoperability across a wide range of connected systems. It also involves validating real-time data accuracy and reliability as devices continuously exchange information.

- AR/VR Testing: Emphasizes validating user interactions within immersive and highly dynamic environments. It also requires performance validation to ensure smooth rendering, responsiveness, and a realistic user experience.

- Blockchain Testing: Involves validating smart contracts to ensure they execute accurately and without errors. It also prioritizes security testing to protect against vulnerabilities in decentralized and distributed systems.

Conclusion

The QA landscape is changing in 2026 as AI continues to revolutionize the way testing is performed, pushing teams toward continuous, intelligent quality. QA is transitioning from an operational function to a strategic one.

Human intelligence remains indispensable for critical thinking and ground-truthing. Teams that adapt will deliver higher quality, faster; those that do not risk falling behind in an increasingly complex world.

Frequently Asked Questions (FAQs)

- Why is accessibility testing becoming mandatory?

Accessibility testing is becoming mandatory due to stricter regulations, inclusive design practices, and the need to ensure applications are usable for all users.

- Why is observability important in software testing?

Observability helps QA teams monitor logs, metrics, and traces in real time, allowing faster root-cause analysis, proactive issue detection, and improved test coverage based on actual production behavior.

- What challenges come with AI-generated code?

AI-generated code can introduce hidden logic flaws, security vulnerabilities, and explainability issues that require advanced validation and testing strategies.

- What is Shift-Everywhere testing?

Shift-Everywhere testing combines Shift-Left and Shift-Right testing approaches to enable continuous quality validation across the entire SDLC and production environments.
