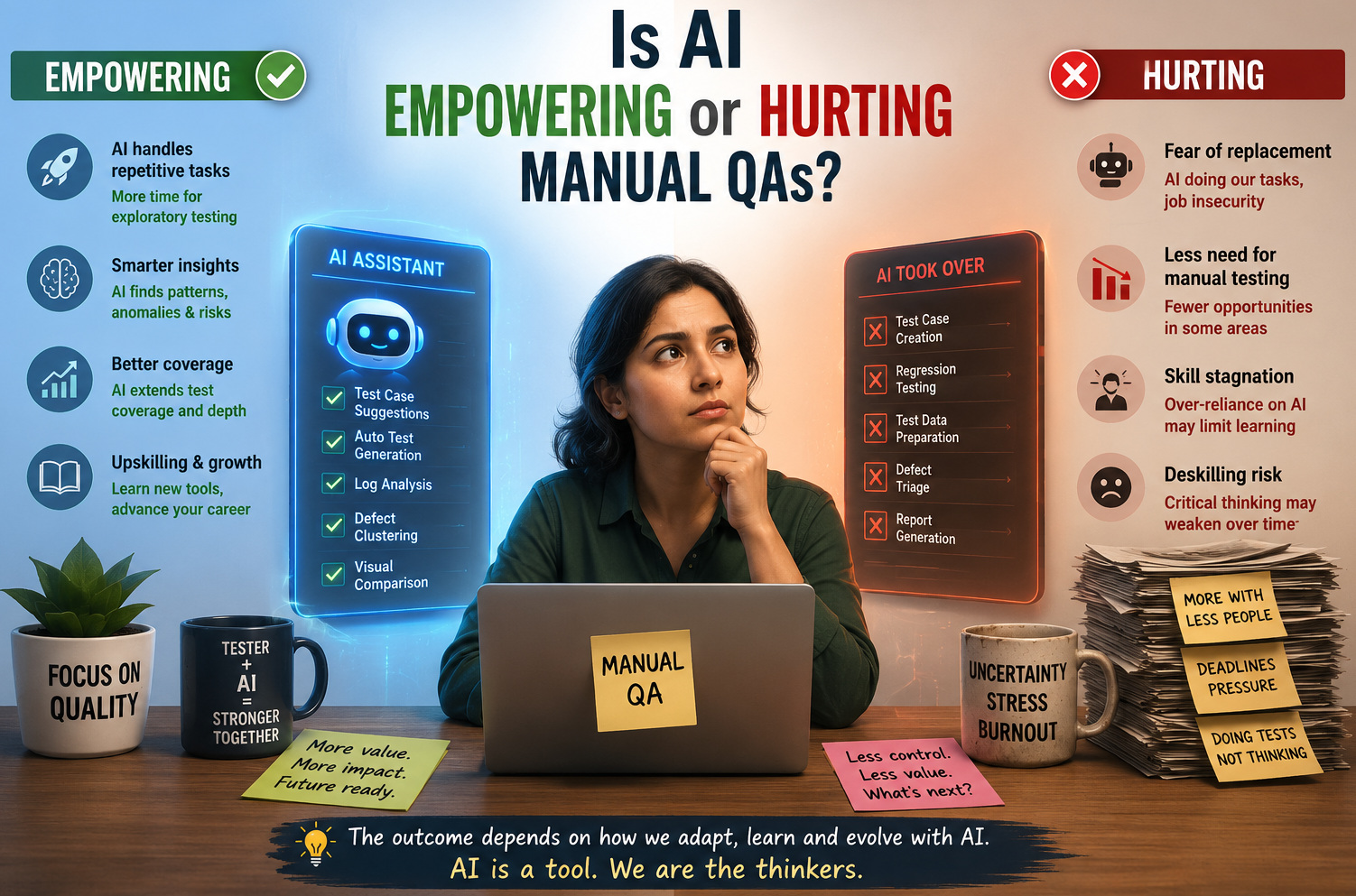

Is AI Empowering or Hurting Manual QAs?


One of the biggest discussions in QA lately is whether Artificial Intelligence will help manual testers or quietly set them up for replacement. For many manual QAs, the answer is layered. There is no denying the benefits AI offers: test execution in minutes, better defect tracking, and relief from repetitive tasks that previously took hours.

But with that excitement comes a very real sense of the unknown.

As machines automate repetitive validation and data-heavy analysis, the question naturally arises: Where do human testers fit in?

The reality isn’t as drastic, though. AI is not here to destroy manual QA; it is changing it. The role is moving away from execution-heavy work and towards exploratory testing, validation of user experience, and scenarios whose logic machines fail to replicate. Manual testers who learn to work alongside AI, rather than compete with it, will find themselves more valuable than ever.

At the end of the day, AI is merely a tool, and what it does depends entirely on how testers elect to evolve with it and use it.


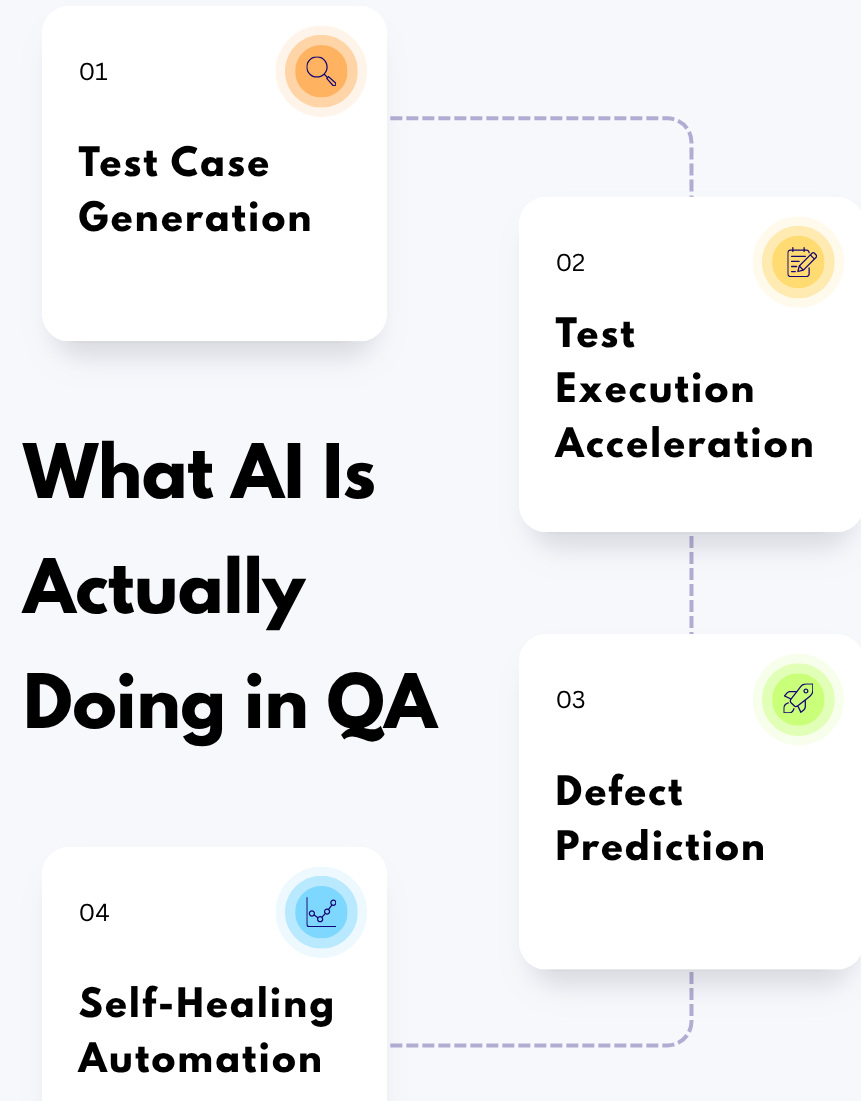

What AI is Actually Doing in QA

Before we get ahead of ourselves, let us clarify something: AI is not replacing QA; it is changing the game. It takes over repetitive and mundane tasks such as regression checks, test data generation, and defect pattern analysis, which frees QA engineers to spend more time on exploratory testing, critical thinking, and enhancing user experiences. Here’s what AI is currently good at:

Test Case Generation

AI can rapidly convert requirements into organized test cases, propose edge cases, and increase coverage in less time. For a login requirement, for example, AI may create tests for valid and invalid credentials, password limits, or session timeouts, but it will likely miss real-world scenarios (like a user switching devices before their session times out).

Read: Creating Your First Codeless Test Cases.

| Can | But |
|---|---|
| Convert requirements into test cases | Lacks deep business context |
| Suggest edge cases | Can miss real-world scenarios |
| Improve coverage quickly | May generate generic or irrelevant cases |
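To make the login example concrete, here is a minimal Python sketch of the kind of table-driven cases an AI tool might propose from a login requirement. Everything in it is an assumption made for illustration: the `login` function, the `USERS` store, the 64-character password limit, and the case names are hypothetical and do not come from any particular tool.

```python
from dataclasses import dataclass

MAX_PASSWORD_LENGTH = 64  # assumed limit, for illustration only

# Hypothetical in-memory user store; a real system would never keep plain passwords.
USERS = {"alice": "correct-horse"}


def login(username: str, password: str) -> str:
    """Toy login check that returns a status string."""
    if not password or len(password) > MAX_PASSWORD_LENGTH:
        return "rejected"
    if USERS.get(username) == password:
        return "ok"
    return "denied"


@dataclass(frozen=True)
class LoginCase:
    name: str
    username: str
    password: str
    expected: str


# The structured, predictable cases a generator is likely to produce.
GENERATED_CASES = [
    LoginCase("valid credentials", "alice", "correct-horse", "ok"),
    LoginCase("wrong password", "alice", "wrong", "denied"),
    LoginCase("unknown user", "bob", "correct-horse", "denied"),
    LoginCase("empty password", "alice", "", "rejected"),
    LoginCase("password over limit", "alice", "x" * (MAX_PASSWORD_LENGTH + 1), "rejected"),
]


def run_cases(cases):
    """Return the names of cases whose actual result differs from the expected one."""
    return [c.name for c in cases if login(c.username, c.password) != c.expected]


if __name__ == "__main__":
    print("failing cases:", run_cases(GENERATED_CASES))
```

Notice what the list leaves out: nothing here models a user switching devices mid-session. A scenario like that usually has to come from a tester who knows how the product is really used.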

Test Execution Acceleration

AI-powered tools can execute thousands of tests in parallel, dramatically reducing regression cycle time. For instance, a full regression suite that once took 8 hours can be completed in under an hour, but faster execution doesn’t guarantee meaningful validation.

Read: How to execute test cases in parallel in testRigor?

| Can | But |
|---|---|
| Run thousands of tests quickly | Speed ≠ quality |
| Optimize test suites | May skip critical validations |
| Reduce execution time | Human validation is still needed |
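As a rough illustration of why parallel runs shrink regression time, the sketch below fans a batch of independent tests out over a thread pool using Python's standard library. The `run_test` function is a hypothetical stand-in (it sleeps briefly and fails every seventh test), and the worker count is an arbitrary assumption, not a recommendation.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_test(test_id: int) -> tuple:
    """Hypothetical test: sleeps to simulate work, fails when the id is a multiple of 7."""
    time.sleep(0.01)
    return test_id, test_id % 7 != 0


def run_suite(test_ids, workers: int = 8) -> dict:
    """Run independent tests concurrently and return {test_id: passed}."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(run_test, test_ids))


if __name__ == "__main__":
    results = run_suite(range(1, 22))
    failed = sorted(tid for tid, passed in results.items() if not passed)
    print("failed tests:", failed)
```

The suite finishes sooner, but the pass/fail flags are only as meaningful as the checks inside each test. That is the "Speed ≠ quality" row in the table above.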

Defect Prediction

AI can examine previous defect data and code modifications to flag at-risk areas before testing even begins. If a payment module changes often or has a history of defects, AI could mark it as high-risk, but predictions alone can be inaccurate when data is outdated or incomplete.

Read: How to Turn Defects into Insights?

| Can | But |
|---|---|
| Analyze historical defects | Depends heavily on data quality |
| Identify risk areas | False positives are common |
| Predict potential issues | May miss new or unknown risks |
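Real defect-prediction tools use far richer models, but the underlying idea can be sketched with a simple weighted score over historical defects and recent code changes. The module names, counts, and weights below are invented for illustration and are not taken from any real project or tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModuleHistory:
    name: str
    past_defects: int    # defects logged against the module (hypothetical data)
    recent_changes: int  # commits touching the module recently (hypothetical data)


def risk_score(module: ModuleHistory, defect_weight: float = 0.6, change_weight: float = 0.4) -> float:
    """Assumed heuristic: weighted sum of defect history and recent churn."""
    return defect_weight * module.past_defects + change_weight * module.recent_changes


def rank_by_risk(modules):
    """Return modules ordered from highest to lowest risk score."""
    return sorted(modules, key=risk_score, reverse=True)


MODULES = [
    ModuleHistory("payments", past_defects=12, recent_changes=9),
    ModuleHistory("profile", past_defects=2, recent_changes=1),
    ModuleHistory("search", past_defects=5, recent_changes=14),
    ModuleHistory("new_checkout", past_defects=0, recent_changes=0),  # brand new, no history
]

if __name__ == "__main__":
    for module in rank_by_risk(MODULES):
        print(f"{module.name}: {risk_score(module):.1f}")
```

The `new_checkout` entry scores zero simply because it has no history. This is how a data-driven prediction can miss new or unknown risks, and why a tester's judgment still belongs in the loop.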

Self-Healing Automation

AI-based automation frameworks can fix broken locators automatically or adapt to minor UI changes, lowering the maintenance effort. To illustrate, if a button’s ID changes only slightly, the test might still pass, but this can hide real UI issues that users might run into.

Read: AI-Based Self-Healing for Test Automation.

| Can | But |
|---|---|
| Fix broken locators | Can hide real issues |
| Adapt to UI changes | Over-reliance reduces reliability |
| Reduce maintenance effort | May lead to false confidence in tests |
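One simple way to picture self-healing is fuzzy matching on element IDs. The sketch below uses Python's standard `difflib` module as a stand-in for whatever matching a real framework performs. The `heal_locator` function, the 0.8 similarity cutoff, and the element IDs are all assumptions made for the example.

```python
import difflib


def heal_locator(old_id: str, current_ids: list, cutoff: float = 0.8) -> tuple:
    """Return (element_id, healed).

    healed is True when the original ID was missing and a similar ID was
    substituted, so that a human can review the substitution later.
    """
    if old_id in current_ids:
        return old_id, False
    # Pick the single closest ID whose similarity ratio is at least `cutoff`.
    matches = difflib.get_close_matches(old_id, current_ids, n=1, cutoff=cutoff)
    if matches:
        return matches[0], True
    return None, False


if __name__ == "__main__":
    element_id, healed = heal_locator("submit-btn", ["submit-button", "cancel-btn"])
    if healed:
        print(f"locator healed: 'submit-btn' -> '{element_id}' (flag for review)")
```

Returning the `healed` flag is a deliberate choice. A test that silently passes after a substitution creates exactly the false confidence the table warns about, so each healed locator should be surfaced for a tester to confirm.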

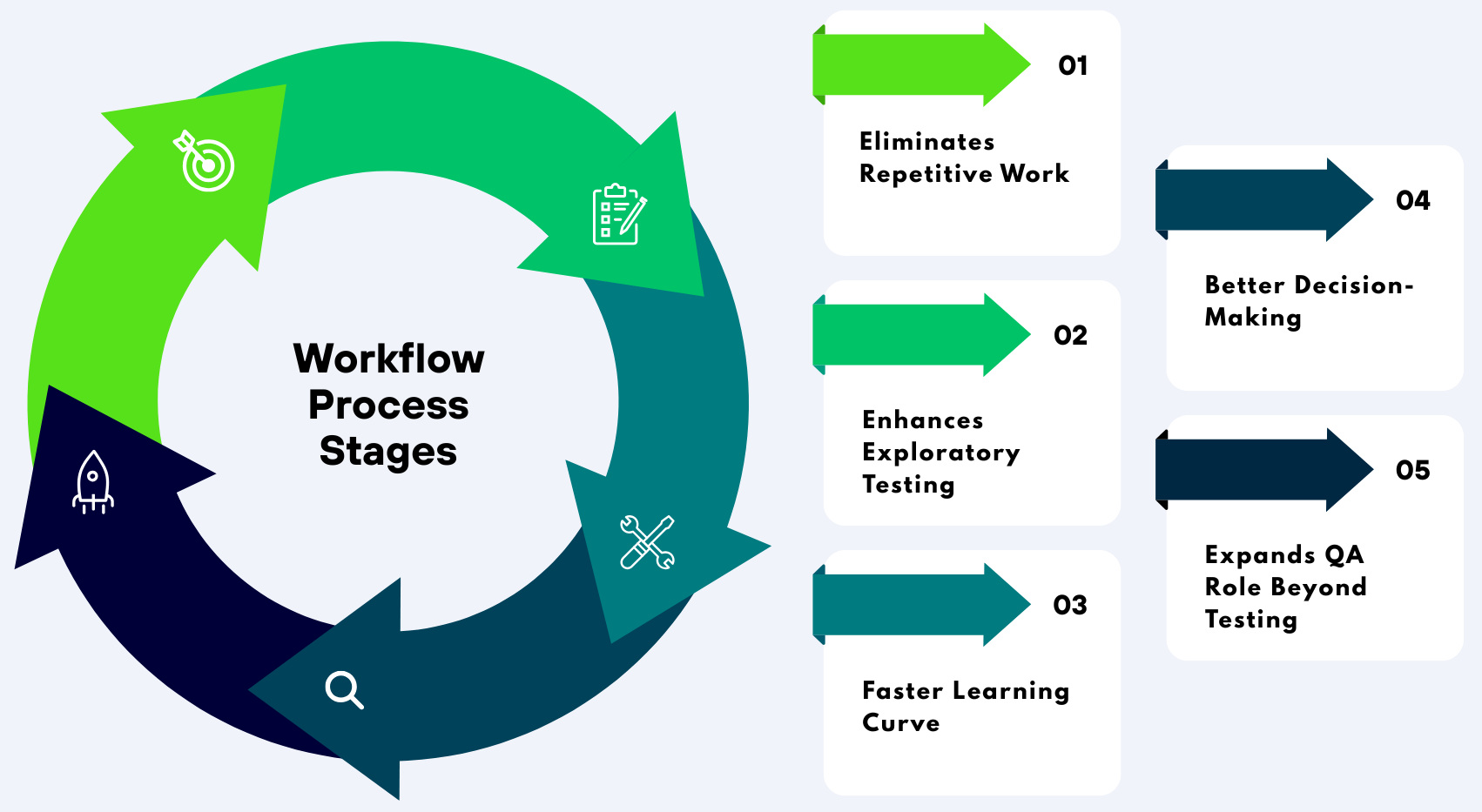

Where AI Empowers Manual QAs

AI is pushing QA in a direction that is beneficial for manual testers, not harmful. It does not eliminate their duties; it eliminates low-value work and amplifies what humans do best: thinking, innovating, and understanding users.

Eliminates Repetitive Work

Manual QAs tend to dedicate a big part of their time to repetitive tasks such as regression testing, setting up data, and constantly validating the same workflows. For example, verifying a checkout flow across multiple builds can become monotonous and time-consuming when done manually.

AI can handle bulk test runs, generate large volumes of test data, and execute pattern-based validations. For example, an AI tool can execute hundreds of regression scenarios overnight and prepare the test datasets, so testers can leave those cases to automation and focus on the more complex ones.

Enhances Exploratory Testing

When AI handles structured and predictable tasks, manual testers gain more bandwidth to dive into exploratory testing. This allows them to uncover edge cases, usability gaps, and unexpected behaviors that automated systems often overlook.

For example, while AI may validate that a form submission works, a human tester can notice confusing UI flows or poor error messaging. These real-world insights, like how a first-time user might struggle with navigation, are areas where human intuition outperforms AI.

Read: How to Automate Exploratory Testing with AI in testRigor.

Faster Learning Curve

Testers can leverage AI-powered tools that suggest test cases, explain defects, and even generate documentation from requirements. This cuts down the time new testers need to get up to speed with a system and start making meaningful contributions.

For instance, a junior QA can use AI to rapidly generate test scenarios for a feature and to ask for explanations of failed test cases. This speeds up onboarding and helps teams test in a consistent way across projects.

Better Decision-Making

AI analyzes different data points, including risk analysis, test coverage gaps, and failure trends, to help testers make smarter decisions. This means QAs no longer test on instinct alone; data guides where their testing effort goes.

For instance, if AI flags a module with high defect density, testers can take the hint and validate it more deeply. This lets the team spend time and effort in areas that have a greater impact instead of spreading effort evenly across every feature.

Read: Can You Trust an AI That Can’t Explain Its Decisions? A Guide to Explainable AI Testing.

Expands QA Role Beyond Testing

AI is pushing manual QAs to move beyond testing and into higher-level, quality-focused roles. This shift spans areas such as quality engineering and business validation, along with contributions to product-level decision-making.

For example, a QA no longer only validates whether a feature works, but also checks whether it really solves a user problem. This transformation places testers at the center, as critical contributors to the success of the product and not just gatekeepers for defects.

Where AI Hurts Manual QAs

- Skill Obsolescence Risk: A QA engineer who only runs existing test cases, without learning new tools and methods, can be easily replaced. Think of a case where an AI tool generates and executes standard regression tests quicker and more accurately than you ever could. Stagnation is a real danger because the first tasks to be automated are precisely the repetitive ones deemed low-skill.

- Over-Reliance on Tools: A lot of teams take AI output for granted and do not question it or apply any critical thinking. For example, if an AI tool marks a test as passed, testers may not check the actual user experience. Such blind trust lets defects go unnoticed and ends in a poor-quality release.

- Job Market Shift: The QA job market is changing; companies are looking for testers who have automation skills, a basic understanding of AI tools, and domain knowledge. For instance, a job advertisement that used to require only manual testing experience may now ask for exposure to test automation frameworks and tools powered by AI. Consequently, purely manual roles are fading away.

- Pressure to Upskill: While AI makes workflows more flexible, adapting to it can be overwhelming, especially for testers who are not tech-savvy. Adopting automation tools, or judging whether an AI-based test platform works properly, can involve a steep learning curve. But the industry has moved on anyway, and continuous upskilling is more of a need than an option.

Read: Test Automation Tool For Manual Testers.

What Manual QAs Do Better Than AI

- Business Understanding: AI can be trained on requirements, but it does not automatically understand business flows and customer expectations. Unlike AI, manual QAs are able to relate features to business impact and find the gaps that affect users.

- Exploratory Thinking: AI is pattern-based and relies on set logic, which limits its ability to think outside the box. It cannot fully simulate real user behavior, whereas humans break patterns, explore unpredictably, and thereby uncover hidden issues.

- Usability Testing: AI does not experience frustration, nor can it discern whether an interface is intuitive or confusing. Manual testers can draw on their experience to judge the overall user experience and pinpoint where ease of use suffers.

- Contextual Decision-Making: AI does not possess the judgment to assess complex situations through intuition or to apply experience-based reasoning. Manual QAs draw on the wider context and on past experience to determine what really matters.

- Communication & Collaboration: QA is not just about testing, but also about continuous and close communication with developers, product managers, and stakeholders. Manual testers play an essential part in resolving ambiguity and aligning everyone involved, a role that AI cannot take over.

The Future of Manual QA

Manual QA will not disappear, but it is definitely changing. The role is transitioning from mere execution to deeper analysis, from simple validation to strategic thinking, and from isolated testing tasks to full ownership of product quality. By embracing this shift, testers will serve a broader role than simply QA.

Instead of asking whether AI will replace manual QA, the more useful question is how it can elevate your capabilities as a tester. The strongest teams already combine AI for speed and efficiency with human intelligence for judgment and insight. This balance is what defines modern QA success.

Read: How to Utilize Human-AI Collaboration for Enhancing Software Development.

Closing Thought

AI is like a super assistant: it will either help or hurt your career, depending on how you use it. If you ignore it, you fall behind; if you depend on it blindly, you lose control over quality. The true advantage lies in combining your own judgment with smart use of AI.

The future does not belong to AI. It belongs to QAs who know how to leverage it.

Frequently Asked Questions (FAQs)

- Will manual testing completely disappear in the future?

Manual testing is unlikely to disappear because software quality involves human judgment, creativity, usability evaluation, and business understanding that AI cannot fully replicate. However, the nature of manual QA roles will continue to evolve toward more analytical and strategic responsibilities.

- What skills should manual QAs learn to stay relevant in the AI era?

Manual QAs should focus on learning AI-assisted testing tools, basic automation concepts, API testing, data analysis, domain knowledge, exploratory testing, and communication skills. Understanding how AI works in testing workflows will become increasingly valuable.

- Can AI identify usability and user experience problems effectively?

AI can detect patterns and inconsistencies, but it struggles to understand emotions, frustration, confusion, and real human behavior. Human testers are still far better at identifying usability issues and evaluating overall user satisfaction.
