Testing Conversational UX in AI-Powered Apps


Conversational interfaces have gradually but profoundly transformed the way users interact with software, shifting from formal structure to fluid dialogue. Traditional UIs present structured inputs in predetermined flows; conversational systems, by contrast, must deal with ambiguity, topic changes, and an effectively unbounded range of expressions.

This change requires a different mindset about testing: one that focuses less on following predefined paths and more on how well the system understands the meaning behind human input and stays consistent.

Testing conversational UX is about more than just proving functional correctness: it’s about assessing a system’s ability to understand, respond, and adapt, all in real time. Since the outputs are probabilistic, not deterministic, testers will have to evaluate relevance, tone, continuity, and recovery from misunderstanding rather than accuracy alone.

The end goal is to make the interaction feel natural and human-like, so testing should hinge as much on experience quality as on technical validation.


What Is Conversational UX?

Before testing conversational UX, it helps to understand what sets it apart from traditional interfaces. Conversational UX (CUX) concerns how systems interact with users through natural language, in text or voice, to create a more human-like interaction. These interactions are dynamic, context-driven, and highly dependent on how well the system understands the user’s intent and responds to it. Its key elements include:

- Intent recognition: the system accurately identifies what the user wants despite variations in language.

- Context awareness: the system remembers prior interactions and maintains continuity in the conversation.

- Dialogue flow management: interactions progress smoothly without rigid structures.

- Tone and personality: responses feel natural and aligned with the system’s purpose or brand.

- Error handling: the system recovers gracefully from misunderstandings or ambiguous inputs.

For example, one user might say, “I need a refund.” Another might say, “This product isn’t working. What do I do?” A third might ask, “Can you help me get my money back?”

All of these express a similar need, but each requires interpretation by the system. Testing conversational UX means validating how well the system understands and handles this variability.

Read: UX Testing: What, Why, How, with Examples.

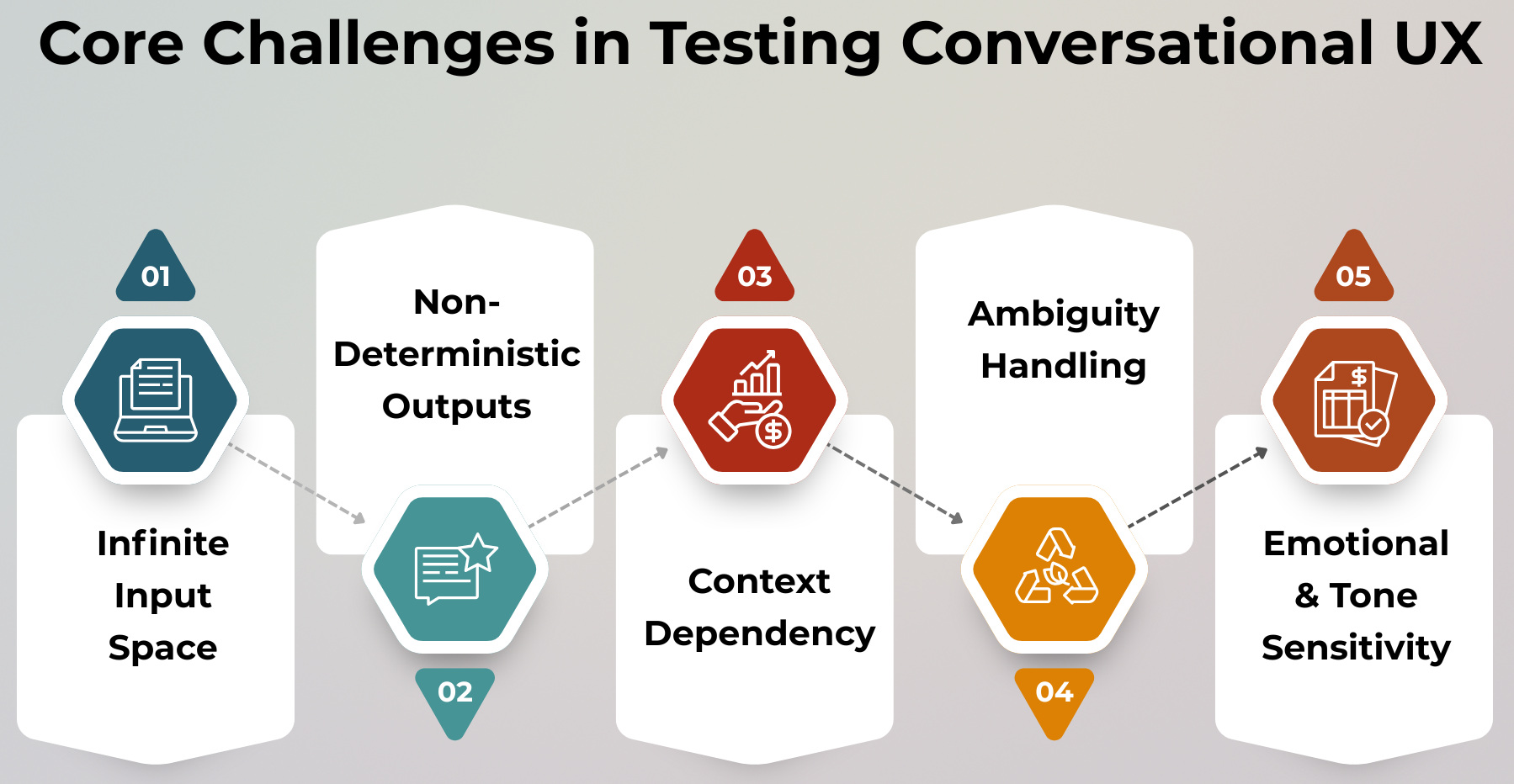

Conversational UX Testing: Challenges

Testing conversational systems adds an entirely new layer of complexity that simply does not exist with traditional, deterministic software. Instead of confirming static inputs and outputs, testers need to assess dynamic, context-dependent, and human-like interactions.

Infinite Input Space

- A user could say, for instance, “I want to cancel my order.”

- Another user might ask, “Can I return what I purchased?”

- A user might instead ask, “I don’t want this anymore, how do I stop it?”

Each of these requires the system to map a different phrasing to the same intent. Because the space of possible phrasings is effectively unbounded, exhaustive testing is impossible.
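Since the input space cannot be enumerated, a practical approach is to sample paraphrases for each intent and measure accuracy against a threshold. The following is a minimal sketch, assuming a hypothetical `classify_intent` function (here a keyword stub standing in for the real NLU call) and an illustrative 0.9 threshold:

```python
# Hypothetical stand-in for the system under test: a real test would call
# the chatbot's NLU endpoint instead of this keyword matcher.
def classify_intent(utterance: str) -> str:
    text = utterance.lower()
    if any(word in text for word in ("cancel", "return", "stop", "don't want")):
        return "cancel_order"
    return "unknown"


# A sampled paraphrase set: coverage can never be exhaustive, so the test
# measures accuracy over a representative sample instead.
PARAPHRASES = {
    "cancel_order": [
        "I want to cancel my order.",
        "Can I return what I purchased?",
        "I don't want this anymore, how do I stop it?",
    ],
}


def intent_accuracy(cases: dict[str, list[str]]) -> float:
    """Fraction of utterances mapped to their expected intent."""
    total = hits = 0
    for expected, utterances in cases.items():
        for utterance in utterances:
            total += 1
            hits += classify_intent(utterance) == expected
    return hits / total if total else 0.0


if __name__ == "__main__":
    accuracy = intent_accuracy(PARAPHRASES)
    print(f"intent accuracy: {accuracy:.2f}")
    assert accuracy >= 0.9, "intent accuracy below the assumed 0.9 threshold"
```

As real user logs accumulate, the paraphrase set can grow with phrasings the system previously misclassified.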

Read: UI Testing: What You Need to Know to Get Started.

Non-Deterministic Outputs

Many conversational AI models are probabilistic and can generate different responses to the same input. This makes traditional exact-match assertions unreliable, so tests must assess semantic correctness instead.

For instance, given the same user message:

- One response could be, “Sure, what seems to be the issue?”

- Another response could be, “I’m here to help. Can you tell me more about the problem?”

Both are correct, even though they are not identical, which means testing must focus on meaning rather than wording.
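One way to test meaning rather than wording is to assert on properties a good reply must have instead of on an exact string. This is a hypothetical example: `check_clarifying_reply` and its three rules (ends with a question, avoids error-like wording, stays short) are assumptions a team would replace with its own criteria.

```python
import re


def check_clarifying_reply(reply: str) -> list[str]:
    """Return the properties the reply violates (an empty list means it passes)."""
    failures = []
    if not reply.strip().endswith("?"):
        failures.append("reply should ask the user a follow-up question")
    if re.search(r"\binvalid\b|\berror\b", reply, re.IGNORECASE):
        failures.append("reply should not sound like a system error")
    if len(reply.split()) > 40:
        failures.append("reply should stay short")
    return failures


# Both wordings from the example satisfy the same properties.
for reply in (
    "Sure, what seems to be the issue?",
    "I'm here to help. Can you tell me more about the problem?",
):
    assert check_clarifying_reply(reply) == [], reply
```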

Read: Generative AI vs. Deterministic Testing: Why Predictability Matters.

Context Dependency

Conversational exchanges are rarely standalone; they unfold over multiple turns in which each response may depend on earlier inputs. Testers must therefore validate that the system maintains context across messages and uses it correctly.

- The user may say, “I want to book a ticket.”

- The system might reply, “Where to?”

- The user then says, “Delhi.”

Here, the final response relies entirely on what was exchanged earlier, and a test must confirm that the system links “Delhi” correctly to the booking intent.
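A multi-turn test replays the whole exchange and then inspects the resulting state rather than a single reply. In this sketch, `BookingBot` is a hypothetical stateful stub with a single `destination` slot; against a real system the same assertions would target the bot's session or API response.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class BookingBot:
    """Hypothetical stateful bot, used only to illustrate a multi-turn test."""
    intent: Optional[str] = None
    slots: dict[str, str] = field(default_factory=dict)

    def reply(self, message: str) -> str:
        text = message.lower()
        if "book" in text and "ticket" in text:
            self.intent = "book_ticket"
            return "Where to?"
        if self.intent == "book_ticket" and "destination" not in self.slots:
            # A bare answer such as "Delhi." only makes sense in context.
            self.slots["destination"] = message.strip().rstrip(".")
            return f"Booking a ticket to {self.slots['destination']}. When do you want to travel?"
        return "Sorry, could you rephrase that?"


def test_destination_is_linked_to_booking() -> None:
    bot = BookingBot()
    assert bot.reply("I want to book a ticket.") == "Where to?"
    bot.reply("Delhi.")
    # The one-word answer must fill the booking slot, not start a new request.
    assert bot.intent == "book_ticket"
    assert bot.slots == {"destination": "Delhi"}


test_destination_is_linked_to_booking()
```

The same bot should reject “Delhi.” when it arrives with no prior context, which is worth a test of its own.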

Read: Testing AI Tone, Empathy, and Context Awareness.

Ambiguity Handling

In practice, users often give ambiguous or incomplete inputs. The system should ask for clarification rather than make assumptions that lead the interaction off-track. Handling ambiguity well is key to a smooth and helpful conversation.

- A user might say, “I need help.”

- Alternatively, a user might say something like, “Something’s wrong.”

In either case, a good system should respond with clarifying questions such as “Sure, can you tell me what you need help with?” rather than jumping to conclusions about the intent.
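A common way to avoid jumping to conclusions is a confidence threshold: when the NLU's top intent score falls below it, the bot asks a clarifying question instead of acting. This sketch assumes a hypothetical `nlu` callable that returns an `(intent, confidence)` pair; `fake_nlu`, its scores, and the 0.6 threshold are illustrative only.

```python
from typing import Callable

CLARIFY = "Sure, can you tell me what you need help with?"


def respond(utterance: str,
            nlu: Callable[[str], tuple[str, float]],
            threshold: float = 0.6) -> str:
    """Ask for clarification instead of guessing when confidence is low."""
    intent, confidence = nlu(utterance)
    if confidence < threshold:
        return CLARIFY
    return f"Handling intent: {intent}"


def fake_nlu(utterance: str) -> tuple[str, float]:
    # Hypothetical scores; a real NLU model would supply these.
    scores = {
        "I need help.": ("get_help", 0.35),
        "Something's wrong.": ("report_issue", 0.40),
        "I want a refund for order 123.": ("refund", 0.93),
    }
    return scores.get(utterance, ("unknown", 0.0))


for vague in ("I need help.", "Something's wrong."):
    assert respond(vague, fake_nlu) == CLARIFY
assert respond("I want a refund for order 123.", fake_nlu) == "Handling intent: refund"
```

Testing then means checking both sides of the threshold: vague inputs must trigger clarification, and clear ones must not.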

Read: Chatbots Testing: Automation Strategies.

Emotional and Tone Sensitivity

The tone of responses significantly impacts user experience, even when the functional outcome is the same. Conversational systems must communicate in a way that feels natural, polite, and empathetic rather than robotic or abrupt.

- A system might say, “Invalid input. Try again.”

- A better alternative would be, “I didn’t quite get that, could you rephrase?”

While both serve the same purpose, the second response creates a more positive and human-like interaction, which testing must evaluate qualitatively.
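Tone remains mostly a matter for human review, but a simple lint can catch the most obviously abrupt phrasings before a reviewer sees them. The pattern list here is a hypothetical starting point, not an established standard:

```python
import re

# Hypothetical phrasings a team might flag as abrupt. This only catches
# obvious cases; judging tone still requires human review.
ABRUPT_PATTERNS = [
    r"\binvalid (input|request)\b",
    r"^try again\.?$",
    r"\berror\b",
]


def tone_warnings(reply: str) -> list[str]:
    """Return the abrupt patterns that match any sentence of the reply."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", reply) if s.strip()]
    return [
        pattern for pattern in ABRUPT_PATTERNS
        if any(re.search(pattern, s, re.IGNORECASE) for s in sentences)
    ]


print(tone_warnings("Invalid input. Try again."))
print(tone_warnings("I didn't quite get that, could you rephrase?"))
```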

What Exactly Are You Testing?

- Intent Recognition: Focuses on whether the system correctly understands what the user is trying to achieve. This is the foundation of any conversational interaction because every response depends on accurate intent mapping.

- Entity Extraction: Evaluates whether the system can identify and pull out important details from user input, such as a destination, date, or order number. These details are essential for completing tasks accurately.

- Dialogue Flow: Checks whether the system guides the user through a logical and coherent interaction. The conversation should feel structured without being rigid.

- Context Management: Ensures that the system remembers previous parts of the conversation and uses them appropriately. Without this, conversations become fragmented and frustrating.

- Response Quality: Evaluates whether the system’s replies are relevant, clear, and natural. This goes beyond correctness and focuses on how the response feels to the user.

- Error Handling: Tests how the system behaves when it cannot understand or process the input. A good system should recover gracefully instead of failing abruptly.

- Personalization: Checks whether the system adapts responses based on user preferences, history, or behavior. This adds a layer of intelligence and improves the overall experience.

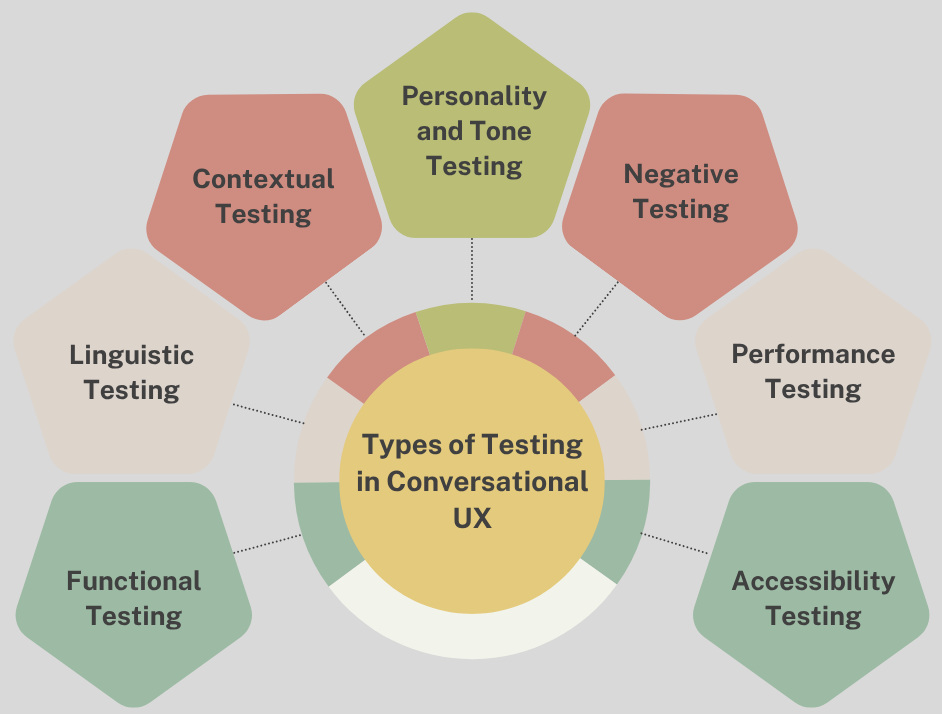

Types of Testing in Conversational UX

Conversational UX testing spans multiple layers, each addressing a different aspect of how the system behaves and interacts. A comprehensive approach ensures both technical correctness and a natural, human-like user experience.

Functional Testing

As with any traditional application, the system must first do what it is supposed to do, which includes completing end-to-end workflows correctly.

For example, a user may say, “I want to book a ticket,” and the system must carry the request through booking, payment, and confirmation. Even if every step works technically, a poor conversational flow or confusing responses will still degrade the experience.
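A functional check should confirm that what the bot *says* happened actually happened. This sketch cross-checks a booking ID quoted in the reply against a backend snapshot; the `BK` plus four digits ID format, the `status` field, and `confirmation_matches_backend` itself are all hypothetical.

```python
import re


def confirmation_matches_backend(reply: str, bookings: dict[str, dict]) -> bool:
    """Check that a booking ID quoted in the bot's reply exists and is paid."""
    match = re.search(r"\bBK\d{4}\b", reply)
    if match is None:
        return False
    booking = bookings.get(match.group())
    return booking is not None and booking.get("status") == "paid"


# Hypothetical backend snapshot, e.g. fetched from a bookings API after the chat.
bookings = {"BK0042": {"destination": "Delhi", "status": "paid"}}

assert confirmation_matches_backend("Done! Your booking BK0042 is confirmed.", bookings)
# A reply that sounds successful but has no matching record must fail.
assert not confirmation_matches_backend("Done! Your booking BK0099 is confirmed.", bookings)
assert not confirmation_matches_backend("Your ticket is booked!", bookings)
```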

Read: Functional Testing Types: An In-Depth Look.

Linguistic Testing

Linguistic testing focuses on how well the system handles the many ways users can phrase the same request. It ensures that different wordings of the same thing are not interpreted differently.

For instance, a user could type “Cancel order,” “Cancel my order,” or “pls cancel my order.” Whatever the variation in wording, slang, or phrasing, all of these should map to the same intent.
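This kind of check fits metamorphic testing well: apply transformations that should not change the meaning, then assert that the predicted intent does not change either. The perturbations below (case, punctuation, padding, a “pls” prefix) are illustrative, and `classify_intent` is a hypothetical stub for the real NLU call.

```python
import string


def perturb(utterance: str) -> list[str]:
    """Generate surface variations that should not change the intent."""
    stripped = utterance.translate(str.maketrans("", "", string.punctuation))
    return [utterance, utterance.lower(), utterance.upper(), stripped,
            f"  {utterance}  ", f"pls {utterance.lower()}"]


def classify_intent(utterance: str) -> str:
    # Hypothetical stand-in for the real NLU call.
    return "cancel_order" if "cancel" in utterance.lower() else "unknown"


def inconsistent_variants(utterance: str) -> list[str]:
    """Return variants whose predicted intent differs from the original's."""
    expected = classify_intent(utterance)
    return [v for v in perturb(utterance) if classify_intent(v) != expected]


for base in ("Cancel order.", "Cancel my order", "Can you cancel my order?"):
    assert inconsistent_variants(base) == [], base
```

The advantage of this design is that no expected label is needed for each variant; the original utterance's prediction serves as the oracle.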

Read: Natural Language Processing for Software Testing.

Contextual Testing

Contextual testing evaluates how well the system handles multi-turn conversations and maintains continuity, ensuring that it recalls previous inputs and responds accordingly.

For example, a user might say, “I wanna get food.” The system could ask, “What type of cuisine?” The user then says, “Italian.” Instead of treating “Italian” as an independent request, the system should continue the flow based on this context.

Personality and Tone Testing

This type of testing checks whether tone and personality stay consistent across the system’s interactions. When the tone shifts unexpectedly, the system feels unreliable or unnatural.

For example, a system might politely say, “Sure, I’d be happy to help,” in one exchange and then abruptly respond with “Invalid request.” in another. Such inconsistencies confuse users and erode their trust in the system.

Negative Testing

Negative testing explores how the system behaves when faced with unexpected or problematic inputs. The goal is to ensure the system handles errors gracefully without breaking the experience.

For example, a user might say, “asdfghjkl.” A user might also say, “I want to cancel and book at the same time.” The system should respond with clarification or guidance instead of producing incorrect or meaningless output.
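Negative cases lend themselves to a reusable suite: feed the bot a list of problematic inputs and record any crash or empty reply. The input list, `run_negative_suite`, and `toy_bot` are hypothetical; checking whether a reply is actually *helpful* still needs the kinds of checks discussed elsewhere in this article.

```python
from typing import Callable

NEGATIVE_INPUTS = [
    "asdfghjkl",
    "I want to cancel and book at the same time.",
    "",
    "   ",
    "🙂🙂🙂",
    "a" * 5000,
]


def run_negative_suite(bot: Callable[[str], str]) -> dict[str, str]:
    """Feed problematic inputs to the bot and collect failures keyed by input."""
    failures: dict[str, str] = {}
    for text in NEGATIVE_INPUTS:
        key = text[:30]  # truncate long inputs for readable reports
        try:
            reply = bot(text)
        except Exception as exc:  # a crash is always a failure
            failures[key] = f"raised {type(exc).__name__}"
            continue
        if not reply or not reply.strip():
            failures[key] = "empty reply"
    return failures


def toy_bot(text: str) -> str:
    # Hypothetical bot that falls back to guidance or a clarifying question.
    if "cancel" in text.lower() and "book" in text.lower():
        return "Would you like to cancel first, or book first?"
    return "Sorry, I didn't catch that. Could you rephrase?"


assert run_negative_suite(toy_bot) == {}
```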

Read: Positive and Negative Testing: Key Scenarios, Differences, and Best Practices.

Performance Testing

Conversational systems must respond quickly to keep the rhythm of interaction natural; even small delays can disrupt the flow.

For example, if a user asks, “My order status?” and the system takes several seconds to reply, the conversational flow breaks down. Performance testing verifies that responses arrive within an acceptable time.
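Latency is usually judged by a high percentile rather than the average, because occasional slow replies are what users notice. A minimal sketch that measures the 95th percentile with Python's `statistics.quantiles`; `toy_bot` and the one-second budget are assumptions, not recommended values.

```python
import statistics
import time
from typing import Callable


def p95_latency(bot: Callable[[str], str], prompt: str, runs: int = 20) -> float:
    """Return the 95th-percentile response time, in seconds, over repeated calls."""
    if runs < 2:
        raise ValueError("need at least two samples to estimate a percentile")
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        bot(prompt)
        samples.append(time.perf_counter() - start)
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return statistics.quantiles(samples, n=20)[-1]


def toy_bot(prompt: str) -> str:
    time.sleep(0.01)  # hypothetical 10 ms of processing time
    return "Your order is out for delivery."


LATENCY_BUDGET_S = 1.0  # assumed budget; derive yours from UX research
assert p95_latency(toy_bot, "My order status?") < LATENCY_BUDGET_S
```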

Accessibility Testing

Accessibility testing verifies that a wide range of users, including people who speak different languages or have different abilities, can use the conversational system.

For instance, a non-native speaker might say, “I want help with order problems,” and a user with a disability may rely on voice input instead of typing. The system needs to handle this diversity gracefully, since users’ needs vary widely.

Automation in Conversational UX Testing

Automation is essential for scaling conversational UX testing, but it has inherent limits. Some aspects can be verified objectively with automated checks, while others depend heavily on human judgment and qualitative evaluation.

| What Can Be Automated | What Cannot Be Fully Automated |
|---|---|
| Intent classification validation (e.g., mapping “cancel my order” to the correct intent) | Tone evaluation (e.g., whether the response feels polite or harsh) |
| Entity extraction checks (e.g., identifying “Mumbai” as destination and “tomorrow” as date) | Emotional intelligence (e.g., detecting empathy in responses) |
| Regression testing for known conversational flows | Naturalness of responses (e.g., whether replies feel human-like) |
| API-level validation for backend integrations | Subtle conversational nuances and user experience quality |
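The entity-extraction row is a good candidate for automation because expected values can be stated exactly. The sketch uses a hypothetical rule-based `extract_entities` and a tiny city list in place of a real NLU model; passing today's date in explicitly keeps relative dates like “tomorrow” deterministic in tests.

```python
import re
from datetime import date, timedelta

CITIES = {"mumbai", "delhi", "bengaluru"}  # hypothetical gazetteer


def extract_entities(utterance: str, today: date) -> dict[str, object]:
    """Hypothetical rule-based extractor standing in for the real NLU."""
    entities: dict[str, object] = {}
    words = re.findall(r"[a-z]+", utterance.lower())
    for i, word in enumerate(words):
        # Treat a known city right after "to" as the destination.
        if word in CITIES and i > 0 and words[i - 1] == "to":
            entities["destination"] = word.title()
        if word == "tomorrow":
            entities["date"] = today + timedelta(days=1)
    return entities


today = date(2024, 5, 1)
got = extract_entities("Book a flight to Mumbai tomorrow", today)
assert got == {"destination": "Mumbai", "date": date(2024, 5, 2)}
```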

AI-Assisted Testing

- Synthetic Data Generation: AI can generate multiple variations of user inputs to simulate real-world language diversity. For example, phrases like “Book a ticket,” “Reserve a seat,” and “I need a flight” can all be created automatically to test intent recognition.

- Semantic Similarity Evaluation: Instead of relying on exact matches, AI can evaluate how similar a response is to the expected meaning. This allows testing systems to validate correctness based on intent and relevance rather than identical wording.

- Conversation Simulation: AI agents can simulate real user interactions across multiple turns of conversation. This helps in testing dialogue flow, context handling, and system behavior under realistic conversational scenarios.
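Semantic similarity evaluation usually compares embedding vectors with cosine similarity and passes when the score clears a threshold. The sketch keeps the embedding pluggable; the default `bag_of_words` is only a lexical stand-in (an assumption for illustration), and in practice a sentence-embedding model would be passed as `embed`. The 0.5 threshold is likewise illustrative.

```python
import math
import re
from collections import Counter
from typing import Callable, Dict

Vector = Dict[str, float]


def bag_of_words(text: str) -> Vector:
    """Toy 'embedding': word counts. Replace with a sentence-embedding model."""
    return {w: float(c) for w, c in Counter(re.findall(r"[a-z']+", text.lower())).items()}


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(value * b.get(word, 0.0) for word, value in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def semantically_close(actual: str, expected: str,
                       embed: Callable[[str], Vector] = bag_of_words,
                       threshold: float = 0.5) -> bool:
    """Pass when the similarity of the two texts meets the threshold."""
    return cosine(embed(actual), embed(expected)) >= threshold


assert semantically_close("Your order has been cancelled.", "Your order was cancelled.")
assert not semantically_close("Your order has been cancelled.", "The weather is sunny today.")
```

The toy measure shows the limitation of word overlap: it scores the two valid replies from the non-determinism example (“Sure, what seems to be the issue?” and “I’m here to help. Can you tell me more about the problem?”) at roughly 0.22, so it would wrongly fail them. Catching paraphrases like these is exactly why real semantic checks rely on embeddings.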

Read: How to use AI to test AI.

Using testRigor to Test Conversational Chatbots

- Natural Language Test Creation: Tests can be written in plain English to simulate real user conversations (e.g., “user asks to cancel order” → “system responds with refund options”), making it easier to model realistic dialogue flows.

- Intent-based Validation: Instead of checking exact responses, testRigor allows validation based on meaning and expected outcomes, aligning well with non-deterministic chatbot behavior.

- End-to-end Conversation Testing: Multi-turn conversations can be automated to validate context handling (e.g., remembering user inputs across steps like booking, cancellation, or support queries).

- AI-powered Assertions: testRigor can validate whether responses are relevant, correct, and aligned with expected intent, even when wording varies.

- Integration with Backend Validation: Chatbot responses can be verified against actual system actions (e.g., ticket created, order canceled), ensuring functional correctness alongside conversational quality.

By combining conversational validation with functional checks, testRigor enables teams to test chatbots not just for correctness, but for real-world usability, reliability, and user experience.

Read: Chatbot Testing Using AI – How To Guide.

Testing Voice-Based Conversational UX

- Challenges: Mispronunciations or speech-recognition errors can cause the system to misread the user’s intent. Different accents and dialects can lead to inconsistent transcriptions of the same request. Background noise may interfere with voice capture and audio processing.

- Testing Focus Areas: Verify speech-to-text accuracy so that user input is transcribed correctly. Check that response times are short enough to keep the conversation natural. Test interrupt handling to ensure the system copes when users speak over it or cut a response short.
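Speech-to-text accuracy is commonly measured with word error rate (WER): the word-level edit distance between a reference transcript and the recognizer's output, divided by the number of reference words. This sketch lowercases and splits on whitespace only; a real pipeline would normalize punctuation and numbers first, and the acceptable WER is a team decision.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in the reference."""
    ref, hyp = reference.lower().split(), hypothesis.lower().split()
    # prev[j] holds the edit distance between the current ref prefix and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(prev[j] + 1,             # deletion
                          curr[j - 1] + 1,         # insertion
                          prev[j - 1] + (r != h))  # substitution or match
        prev = curr
    # With an empty reference, any output at all counts as a full error.
    return prev[-1] / len(ref) if ref else float(len(hyp) > 0)


print(word_error_rate("cancel my order", "cancel my border"))
```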

Conclusion

Testing conversational UX requires a shift from static validation toward evaluating meaning, context, and the quality of the user experience in fleeting interactions. As systems behave more like humans, testing must combine automation with qualitative judgment to ensure responses are both sound and appropriate in tone. Ultimately, the goal is interactions that are natural, dependable, and seamless across diverse user queries and real-world conditions.

Frequently Asked Questions (FAQs)

- How do you define success in conversational UX testing?

Success is defined by how naturally and effectively users can complete their goals through conversation, measured using metrics like task completion rate, user satisfaction, fallback rate, and conversation abandonment.
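Several of these metrics can be computed directly from conversation logs. This sketch assumes a hypothetical log record; its field names are illustrative, and fallback rate is measured here per turn, which is one common definition among several. User satisfaction typically comes from surveys and is left out.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    """Hypothetical log record; field names are illustrative."""
    completed_task: bool
    fallback_turns: int  # turns where the bot answered with a fallback
    total_turns: int
    abandoned: bool


def summarize(logs: list[Conversation]) -> dict[str, float]:
    if not logs:
        raise ValueError("no conversations to summarize")
    n = len(logs)
    turns = sum(c.total_turns for c in logs)
    return {
        "task_completion_rate": sum(c.completed_task for c in logs) / n,
        "fallback_rate": sum(c.fallback_turns for c in logs) / turns if turns else 0.0,
        "abandonment_rate": sum(c.abandoned for c in logs) / n,
    }


logs = [
    Conversation(True, 0, 4, False),
    Conversation(True, 1, 6, False),
    Conversation(False, 3, 5, True),
    Conversation(False, 0, 2, True),
]
print(summarize(logs))
```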

- How do you test conversational AI across multiple languages?

Testing requires multilingual datasets, native language validation, cultural context checks, and validation of intent mapping consistency across different linguistic structures.

- How often should conversational AI systems be tested?

They should be tested continuously, especially after model updates, new feature releases, or changes in training data, as even small updates can impact behavior.
