What are Vibe Coding Tests? The Future of AI-Driven QA


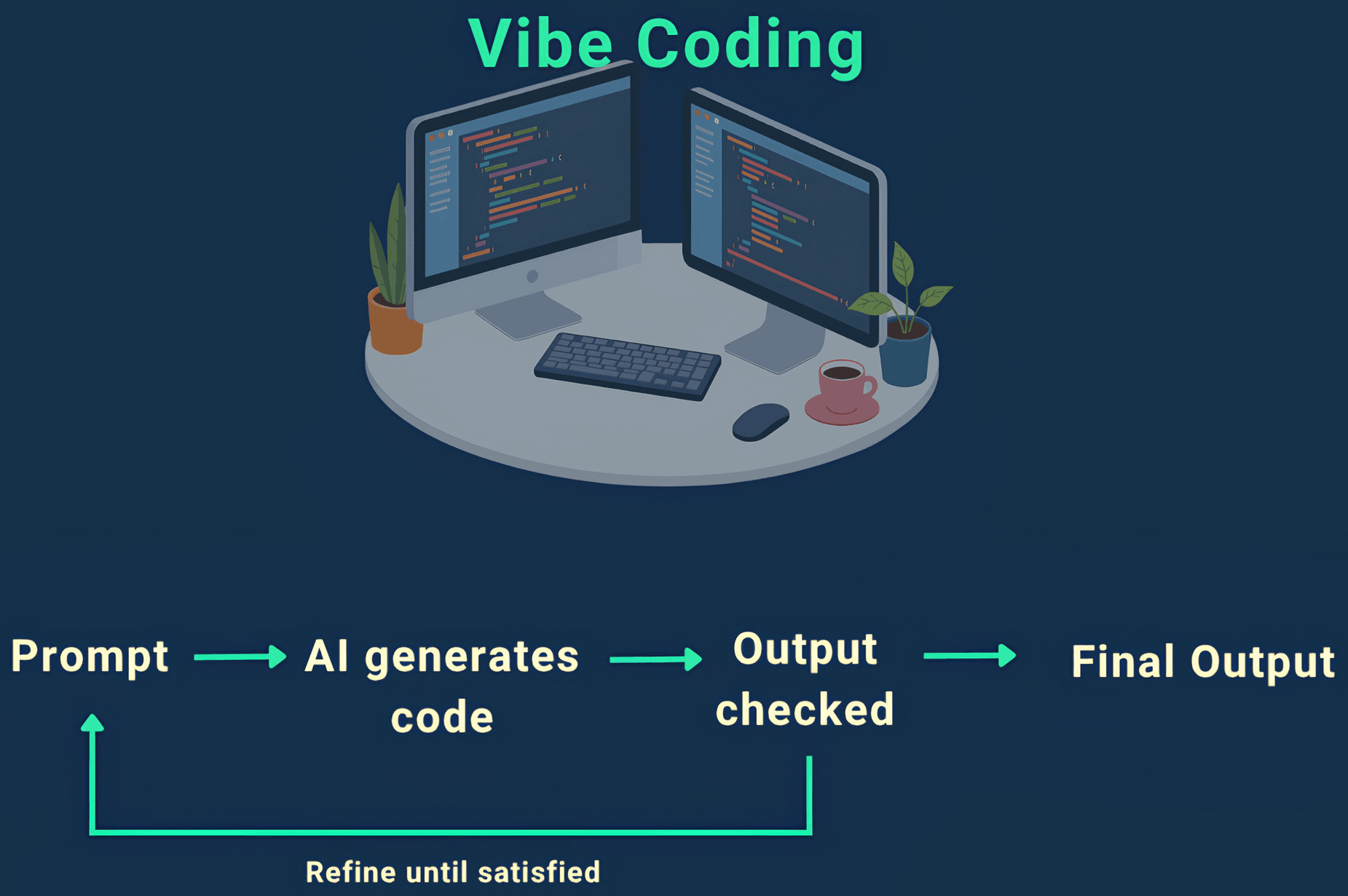

Not long ago, building software meant writing everything yourself – every condition, every UI element, every edge case. Today, that’s changing fast. Developers are increasingly leaning on AI to generate code by simply describing what they want. Instead of focusing on syntax and structure, they focus on intent.

This shift is often called vibe coding. You give the system a direction – what the feature should do, how it should behave – and it fills in the details. It’s faster, more flexible, and lowers the barrier to building complex functionality. Teams can move from idea to working feature in a fraction of the time it used to take.

But this speed introduces a new kind of uncertainty. When code is generated on the fly and can vary slightly each time, the old ways of testing don’t always hold up. You can’t rely on fixed outputs or rigid assertions when the system itself is designed to be flexible.

That’s why testing needs to evolve alongside development. To keep up with vibe coding, QA teams need to shift their focus – from checking exact results to validating whether the system behaves the way users expect.


What Is Vibe Coding?

Vibe coding is a way of building software where the focus shifts from writing exact code to describing what you want the system to do. Instead of carefully crafting every function or UI element, developers give prompts, guidelines, or examples, and let AI generate the implementation.

Think of it as working at a higher level of abstraction. You’re not worrying about how something is built line by line, but rather what the end result should look like.

For example, instead of coding a search feature from scratch, you might describe the expected behavior: “Users should be able to search for products and see relevant results ranked by popularity.” The system then generates the logic, UI, and even edge-case handling based on that input.

Read: What is Vibe Coding?

This approach is becoming more common with the rise of AI-assisted development tools, low-code platforms, and prompt-driven workflows. It speeds up development and allows teams to iterate quickly without getting bogged down in implementation details.

However, there’s a catch: the same flexibility that speeds up development makes the resulting applications harder to test.

Key Challenges in Testing Applications Built with Vibe Coding

Testing applications created through vibe coding comes with its own set of challenges. The issue isn’t just how the code is written; it’s how the system behaves once that code is generated and evolves over time.

Unpredictable Application Behavior

Since the underlying code is generated based on prompts or intent, the application may not behave the same way every time. Even small changes in prompts can lead to noticeable differences in workflows or outputs.

No Fixed Expected Results

In many cases, there isn’t a single correct output to validate against. The application might return different responses or render slightly different UI elements while still working as expected. This makes it hard to define strict test assertions.

Frequent Breakage of Automated Tests

Tests written against one version of the generated code may fail when the code is regenerated or updated. This happens even when the functionality is still correct, leading to unnecessary test failures.

Unstable UI Elements and Flows

Applications built this way often have dynamic UI structures. Elements may change labels, positions, or identifiers, which breaks traditional locator-based automation.
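To make the locator problem concrete, here is a minimal sketch using only Python’s standard `html.parser`. The two HTML snippets and the helper functions are hypothetical; they stand in for two regenerations of the same checkout screen, where the button’s `id` and casing changed but its visible label did not.

```python
from html.parser import HTMLParser


class ButtonCollector(HTMLParser):
    """Collects (id, visible text) for every <button> in a document."""

    def __init__(self):
        super().__init__()
        self.buttons = []        # list of (id, text) tuples
        self._current_id = None  # id of the button currently being parsed
        self._in_button = False
        self._text_parts = []

    def handle_starttag(self, tag, attrs):
        if tag == "button":
            self._in_button = True
            self._current_id = dict(attrs).get("id")
            self._text_parts = []

    def handle_data(self, data):
        if self._in_button:
            self._text_parts.append(data)

    def handle_endtag(self, tag):
        if tag == "button" and self._in_button:
            # Collapse whitespace so layout changes do not affect the label.
            text = " ".join("".join(self._text_parts).split())
            self.buttons.append((self._current_id, text))
            self._in_button = False


def collect_buttons(html):
    parser = ButtonCollector()
    parser.feed(html)
    parser.close()
    return parser.buttons


def find_button_by_id(html, button_id):
    """Locator tied to an implementation detail (the generated id)."""
    return any(bid == button_id for bid, _ in collect_buttons(html))


def find_button_by_text(html, label):
    """Locator tied to what the user sees (case-insensitive label)."""
    return any(text.lower() == label.lower() for _, text in collect_buttons(html))


# Hypothetical markup from two generations of the same screen.
VERSION_1 = '<div><button id="btn-submit-42">Place order</button></div>'
VERSION_2 = '<section><button id="cta_primary">Place Order</button></section>'

for name, page in [("v1", VERSION_1), ("v2", VERSION_2)]:
    print(name,
          "by id:", find_button_by_id(page, "btn-submit-42"),
          "by text:", find_button_by_text(page, "place order"))
```

The id-based lookup finds the button only in the first version, while the label-based lookup finds it in both. Real UI automation involves far more than this, but the same trade-off applies.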

Difficult Validation of Business Logic

Because the implementation is not explicitly written and reviewed line by line, it can be harder to ensure that all business rules are consistently followed across different scenarios.

Higher Dependency on End-to-End Testing

Unit-level validation becomes less reliable when the internal logic is generated and can change. As a result, teams have to rely more on end-to-end testing, which is slower and harder to maintain.

Increased Test Maintenance Effort

As the generated code evolves, tests need frequent updates to stay relevant. QA teams can end up spending more time fixing tests than validating new functionality.

Challenges in Debugging Failures

When a test fails, it’s not always clear whether the issue is in the generated code, the prompt that created it, or the test itself. This makes root cause analysis more time-consuming.

External Dependencies Add Instability

If the application relies on AI services or APIs, changes outside your control can impact behavior, making tests less stable.

Lack of Visibility into Implementation

Since developers are not always writing the code directly, QA teams may have limited insight into how things are implemented, making it harder to design targeted tests.

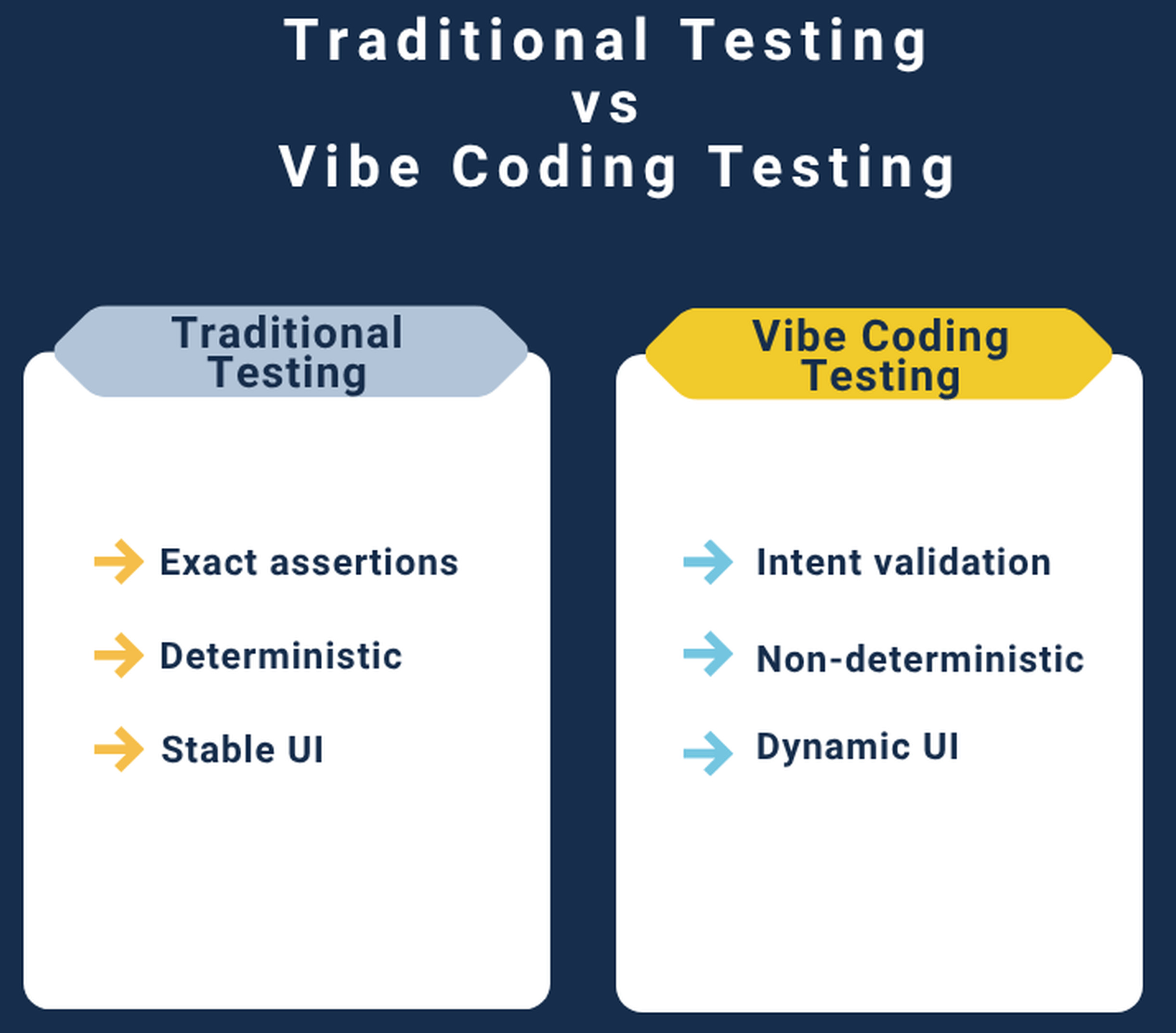

Why Traditional Testing Struggles Here

Traditional testing was built for systems that behave the same way every time. You write a test, define an exact expected result, and the system either passes or fails. That model works well when the underlying code is stable and predictable.

Vibe coding breaks that assumption. When features are generated based on prompts or AI models, the output isn’t always identical – even if the input looks the same. You might get slightly different wording, a different UI structure, or a variation in how a workflow is handled. From a user’s perspective, everything still works fine. But from a traditional test’s point of view, it looks like a failure.

This leads to a few common problems.

First, tests become brittle. Even small, harmless changes can cause failures, forcing teams to constantly update their test scripts.

Second, maintenance effort goes up. QA teams spend more time fixing tests than actually validating functionality.

There’s also the issue of flakiness. Tests may pass in one run and fail in another without any real issue in the product. This makes it harder to trust test results and slows down development cycles.
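One simple way to tell a flaky test from a genuinely failing one is to rerun it and look at the pass rate. The sketch below is illustrative only: the labels in `classify` and the stand-in test are assumptions, not part of any particular tool.

```python
def pass_rate(test_fn, runs=20):
    """Run test_fn repeatedly and return the fraction of runs that passed."""
    passed = 0
    for _ in range(runs):
        try:
            test_fn()
            passed += 1
        except AssertionError:
            pass
    return passed / runs


def classify(rate):
    """Label a test by its pass rate (the three labels are an assumption)."""
    if rate == 1.0:
        return "stable"
    if rate == 0.0:
        return "failing"
    return "flaky"


def make_alternating_test():
    """Stand-in for a test with an exact-match assertion on generated text.

    The simulated greeting alternates between two wordings that are both
    acceptable to a user, so the strict assertion fails on every other run.
    """
    calls = {"n": 0}

    def test():
        calls["n"] += 1
        greeting = "Welcome back!" if calls["n"] % 2 else "Good to see you again!"
        assert greeting == "Welcome back!"

    return test


rate = pass_rate(make_alternating_test(), runs=10)
print(rate, classify(rate))
```

Here the stand-in test passes on exactly half of its runs, so it is labeled flaky. In that situation the assertion is usually the thing to fix, not the product.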

Finally, traditional testing struggles with defining what “correct” even means in these systems. When multiple outcomes can be valid, strict assertions don’t capture the full picture. You need a way to evaluate whether the system is behaving appropriately, not just whether it matches a predefined output.

Vibe Coding Testing vs. Traditional Testing

What a Good Testing Strategy Looks Like for Vibe-Coded Applications

Testing applications built through vibe coding requires a shift in mindset. Instead of trying to validate every detail of the implementation, the focus should be on whether the application works the way users expect it to.

Focus on User Journeys, Not Individual Steps

Start by testing complete workflows – what a user is trying to achieve from start to finish. Whether it’s logging in, searching for something, or completing a transaction, the goal is to make sure the journey works smoothly, even if the underlying steps vary slightly.

Validate Outcomes, Not Exact Outputs

Rather than checking for exact text or structure, tests should verify whether the result makes sense. For example, instead of matching a specific response, check if the response is relevant and solves the user’s request.
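As a rough illustration, take the search requirement from earlier: users should see relevant results ranked by popularity. The sketch below checks those two properties instead of comparing against a fixed list. The result shape (`title`, `popularity`) and the "title mentions the query" relevance rule are simplifying assumptions.

```python
def check_search_outcome(query, results):
    """Return a list of problems; an empty list means the outcome is acceptable.

    `results` is a list of dicts with hypothetical keys "title" and "popularity".
    """
    problems = []
    if not results:
        problems.append("no results returned")
        return problems

    # Relevance (simplified assumption): every title mentions the query term.
    term = query.lower()
    for item in results:
        if term not in item["title"].lower():
            problems.append(f"irrelevant result: {item['title']!r}")

    # Ranking: popularity must never increase further down the list.
    scores = [item["popularity"] for item in results]
    if any(earlier < later for earlier, later in zip(scores, scores[1:])):
        problems.append("results are not ranked by popularity")

    return problems


# Two different but equally valid result sets, plus one that is wrong.
run_a = [{"title": "Trail Running Shoes", "popularity": 98},
         {"title": "Kids' Shoes", "popularity": 75}]
run_b = [{"title": "Leather shoes (brown)", "popularity": 80},
         {"title": "Canvas Shoes", "popularity": 80}]
bad = [{"title": "Garden Hose", "popularity": 90},
       {"title": "Running Shoes", "popularity": 95}]

for name, results in [("run_a", run_a), ("run_b", run_b), ("bad", bad)]:
    print(name, check_search_outcome("shoes", results))
```

Both valid runs produce an empty problem list even though their contents differ, while the bad run is flagged for an irrelevant item and for incorrect ranking.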

Use Flexible Assertions

Strict comparisons don’t work well here. Tests should allow for acceptable variations while still catching real issues. This could mean validating keywords, intent, or overall correctness instead of exact matches.
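Here is a minimal sketch of what a flexible assertion might look like for an order confirmation message. The message wordings, the accepted confirmation verbs, and the `assert_order_confirmation` helper are all hypothetical; the point is that the check pins down what must be true (a confirmation, an order number, the right total) and ignores everything else.

```python
import re


def normalize(text):
    """Lowercase and collapse whitespace so cosmetic differences are ignored."""
    return " ".join(text.lower().split())


def assert_order_confirmation(message, expected_total):
    """Accept any wording that confirms the order, carries an order number,
    and shows the expected total."""
    text = normalize(message)
    assert re.search(r"\b(confirmed|placed|received)\b", text), "no confirmation wording"
    assert re.search(r"#\d+", text), "no order number"
    amounts = [float(m) for m in re.findall(r"\$(\d+(?:\.\d{1,2})?)", text)]
    assert any(abs(a - expected_total) < 0.005 for a in amounts), "total not shown"


messages = [
    "Your order #1042 is confirmed. Total: $59.90",
    "Thanks! We've received order #1042 and charged $59.9 to your card.",
    "Something went wrong with order #1042. You were not charged.",
]

for message in messages:
    try:
        assert_order_confirmation(message, expected_total=59.90)
        print("PASS:", message)
    except AssertionError as err:
        print(f"FAIL ({err}):", message)
```

The first two messages pass despite different wording and number formatting. The third fails because it never confirms the order, which is a real issue rather than a cosmetic one.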

Test at the Behavior Level

Since the internal code can change, it’s more reliable to test what the system does from the outside. Treat the application like a black box and focus on inputs and observable behavior.

Keep Tests Readable and Intent-Driven

Tests should clearly describe what is being validated in plain language. This makes them easier to maintain and ensures they stay aligned with business requirements, even as the implementation changes.

Continuously Update Based on Real Usage

As prompts and generated code evolve, testing should evolve too. Regularly review test coverage based on how users are actually interacting with the system.

Balance Coverage with Practicality

It’s not realistic to cover every possible variation. Focus on high-impact scenarios and critical paths, and avoid over-testing edge cases that don’t significantly affect users.

How AI-Assisted Testing Can Help

Testing vibe-coded applications requires tools that can handle change, variation, and intent-driven behavior. This is where testRigor stands out.

Unlike traditional automation tools that depend heavily on fixed locators, rigid scripts, and exact assertions, testRigor is built around how users actually interact with an application. Tests are written in plain English, making them easier to create, understand, and update as the application evolves.

One of the biggest advantages is the tool’s focus on visible behavior rather than implementation details. Since vibe-coded applications can change under the hood, relying on internal structure becomes a weak strategy. testRigor avoids this by interacting with the UI the way a real user would, which makes tests far more stable.

It also reduces the problem of brittle tests. Because it doesn’t rely on hardcoded selectors or exact text matches, small UI changes or variations in output don’t immediately break your test suite. This is especially important for applications where layouts or responses can shift slightly over time.

Another key benefit is speed. Teams can quickly write and update tests without needing deep programming knowledge. This makes it easier for QA, product managers, and even non-technical stakeholders to contribute to testing – something that becomes increasingly valuable in fast-moving, prompt-driven development environments.

This makes testRigor a great tool for vibe testing.

Testing Vibe-Coded Applications With testRigor

One of the biggest advantages of testRigor is how easily it connects testing with actual requirements. In vibe-coded applications, where implementation can change frequently, relying on specs becomes far more reliable than relying on code-level details.

1. Generate Test Cases Based on a Prompt (Specification)

With testRigor, you can write tests directly from business requirements or product specs, using plain English. Instead of translating requirements into complex automation scripts, you simply describe what the system should do from a user’s perspective.

For example, a requirement like:

“User logs in, searches for a product, and sees relevant results.”

A requirement like this can be turned into a test almost as-is (thanks to reusable rules). There’s no need to worry about locators, IDs, or underlying implementation.

Read: Eliminate testing with Executable Specifications

2. Generate Test Based on a Description (Prompt)

testRigor makes testing AI features easier by allowing you to write tests that simulate real prompts and verify the results from a user’s point of view. Instead of asserting exact matches, you can check whether the outcome is relevant, accurate, and aligned with the expected intent.

For example, if you’re testing a chatbot, you don’t need to validate the exact wording of a response. You can write a test that checks whether the response answers the user’s question, includes key information, or avoids incorrect details. This makes tests far more resilient to variation. Similarly, for AI-driven features like recommendations or content generation, testRigor lets you focus on whether the output is useful and appropriate, rather than identical every time.

- Testing Chatbots Using testRigor

- Top 10 OWASP for LLMs: How to Test with testRigor?

- Testing Graphs Using testRigor’s AI

- Testing Images Using testRigor’s AI

- Testing AI-Generated Content Using testRigor
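The chatbot checks described above (answers the question, includes key information, avoids incorrect details) can be sketched in plain Python. This is not how testRigor works internally; it is a generic illustration, and the return-policy facts, phrasings, and `evaluate_response` helper are made up for the example. Substring matching is a crude proxy for meaning, so treat it as a starting point.

```python
def evaluate_response(response, must_mention, must_not_mention):
    """Check a chatbot reply against required facts and forbidden claims.

    must_mention: dict mapping a fact name to a list of acceptable phrasings.
    must_not_mention: list of phrases that would make the reply incorrect.
    Returns (missing_facts, forbidden_hits); both empty means the reply passes.
    """
    text = " ".join(response.lower().split())
    missing = [
        fact for fact, phrasings in must_mention.items()
        if not any(p.lower() in text for p in phrasings)
    ]
    hits = [p for p in must_not_mention if p.lower() in text]
    return missing, hits


# Hypothetical policy: returns within 30 days, receipt required.
MUST_MENTION = {
    "return window": ["30 days", "thirty days"],
    "receipt": ["receipt", "proof of purchase"],
}
MUST_NOT_MENTION = ["60 days", "no returns"]

replies = [
    "You can return any item within 30 days as long as you have the receipt.",
    "Returns are accepted for thirty days. Please bring proof of purchase.",
    "We accept returns within 60 days.",
]

for reply in replies:
    print(evaluate_response(reply, MUST_MENTION, MUST_NOT_MENTION), "<-", reply)
```

The first two replies use different wording but both pass. The third is flagged because it omits the required facts and states an incorrect return window.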

testRigor can also create multiple test cases for you if you provide a descriptive prompt that sets the stage well: what the application is about, what its modules are, what needs to be verified, and so on. This is much like giving an LLM a detailed prompt so that it generates better test cases.

Take a look at this guide that further explains different ways to create tests in plain English in testRigor: All-Inclusive Guide to Test Case Creation in testRigor

Another useful feature of testRigor is that it explains its decisions (explainable AI, or XAI), which helps overcome the fear associated with testing AI with AI. Read: How testRigor Supports XAI in QA

In short, testRigor aligns well with the nature of vibe-coded applications. It focuses on what matters – the user experience – while staying flexible enough to handle the variability that comes with AI-generated code.

Best Practices for Teams Testing Vibe-Coded Applications

Testing vibe-coded applications isn’t just about using the right tool; it also requires a shift in how teams approach QA. Here are some practical ways to make testing more effective and manageable.

Start With Critical User Flows

Focus on the most important paths first – what users absolutely need to be able to do. This ensures that core functionality is always covered, even if other parts of the system are still evolving.

Avoid Over-Specifying Expected Results

Trying to validate every detail will make tests brittle. Instead, define what “good enough” looks like and validate against that. This keeps tests stable while still catching real issues.

Write Tests in Plain, Intent-Driven Language

Tests should clearly describe what is being validated from a user’s perspective. This makes them easier to understand, review, and update as the application changes.

Prioritize End-to-End Testing

Since the underlying code can change frequently, testing complete workflows is more reliable than focusing too much on individual components.

Continuously Review and Refine Tests

As prompts, models, and features evolve, testing should evolve too. Regularly revisit test cases to make sure they still reflect real user behavior. A tool that validates user-visible behavior rather than implementation details should reduce how often those tests need maintenance.

Collaborate Across Teams

QA, developers, and product teams should work closely together. When testing is based on a shared understanding of requirements, it becomes easier to validate intent rather than just implementation.

Don’t Aim for Perfect Coverage

It’s not practical to test every possible variation. Focus on high-impact scenarios and common use cases instead of trying to cover everything.

Monitor Production Behavior

Real user interactions can reveal gaps that tests might miss. Use production insights to improve and expand your test coverage over time.
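As a small sketch of this idea, the snippet below compares the flows seen in production against the flows a test suite covers and lists the gaps by frequency. The event names and the shape of the data are hypothetical; in practice they would come from your own analytics or logs.

```python
from collections import Counter


def uncovered_flows(production_events, tested_flows):
    """Return flows seen in production but not covered by any test,
    most frequent first, as (flow, count) pairs."""
    counts = Counter(production_events)
    return [(flow, n) for flow, n in counts.most_common() if flow not in tested_flows]


# Hypothetical flow names extracted from production logs.
events = ["search", "checkout", "search", "apply_coupon",
          "apply_coupon", "login", "reorder", "apply_coupon"]
tested = {"login", "search", "checkout"}

for flow, count in uncovered_flows(events, tested):
    print(f"{flow}: seen {count} times, no test coverage")
```

In this made-up data, `apply_coupon` is the most frequent untested flow, so it would be the first candidate for a new test.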

Conclusion

As vibe coding continues to grow, testing will need to stay just as flexible. The teams that adapt early will be better positioned to deliver reliable, high-quality applications – no matter how the code is created.

Dealing with this new wave of development takes a different testing mindset and the right tools. By focusing on intent, user journeys, and real outcomes, teams can keep up with the pace of vibe-coded development without sacrificing quality.

Tools like testRigor help bridge this gap. They allow teams to write tests in plain language, validate behavior instead of implementation, and handle the variability that comes with AI-driven systems.

Frequently Asked Questions (FAQs)

What are vibe coding tests?

Vibe coding tests are a way to validate applications built using AI or prompt-driven development. Instead of checking exact outputs, these tests focus on whether the system behaves correctly and delivers the expected user experience.

Why is testing vibe-coded applications different from traditional testing?

Traditional testing relies on fixed outputs and predictable behavior. Vibe-coded applications can produce different but valid results, so testing needs to focus more on intent, relevance, and overall functionality rather than exact matches.

How do you handle changing outputs in vibe coding tests?

The key is to avoid strict assertions. Instead of checking for exact text or structure, tests should verify whether the output makes sense, is accurate, and meets the user’s goal. Flexible validation is essential.

How does testRigor compare to Claude Code for testing vibe-coded systems?

Claude Code is helpful for generating test ideas and scripts, but testRigor is designed to execute and maintain those tests reliably. It focuses on user-visible behavior, handles UI and output changes better, and reduces test maintenance – making it more suitable for testing vibe-coded applications in practice.
