Who is Responsible for Testing AI-Generated Code?


“Accountability breeds response-ability.” —Stephen Covey.

With the emergence of AI-enhanced software development, the traditional accountability chain in software engineering has changed. When tools such as Copilot or ChatGPT generate code, authorship is obscured, and developers act more as reviewers than as original creators.

This raises an important question: if humans are not fully responsible for writing the code, can they be fully responsible for its correctness, i.e., testing?

Clearly, the issue of who owns the testing of AI-generated code should lead to a reframing of ownership throughout the engineering lifecycle. Developers need to verify the intent and behavior of AI-generated code, and QA teams need to take a more risk-based and contextual approach to testing.

In the end, quality is a joint responsibility, distributed across every node in human-AI interaction rather than stationed at a single point.


The Illusion of Just Another Tool

Most organizations think of their AI coding assistant as just another tool in the productivity toolbox, like a fancy IDE or framework, but that assumption can get you into trouble. AI assistants are not like traditional tools that follow explicitly programmed logic; they generate code based on learned patterns.

So, AI can create code that seems plausible but may contain hidden bugs, edge-case failures, or even insecure and outdated practices. It may also hallucinate non-existent APIs or behaviors, which can make problems more difficult to catch through casual review.

As a consequence, AI-generated code presents another form of risk: one that’s less obvious but far more insidious. Testing, then, needs to transition away from validating the intention of a person, toward testing the machine-derived assumptions.

The Traditional Responsibility Model

To understand the shift, it’s important to first look at how responsibility was traditionally defined in software development. In the past, roles were clearly separated, and accountability followed a predictable, structured path. This clarity made it easier to assign ownership, track issues, and maintain quality across the development lifecycle. In a traditional software lifecycle:

- Developers were responsible for writing correct code

- QA engineers were responsible for validating behavior

- Architects ensured design integrity

- Product owners ensured requirement clarity

Responsibility was shared across teams, but accountability was still easily traceable. A defect could usually be traced to a misunderstanding, a logic error, a missed test case, or an integration issue.

The key point is that every defect had a human cause. With AI-generated code, that source becomes obscured, and it is harder to establish where responsibility lies.

Read: AI Agents in Software Testing.

The Rise of AI-Generated Code

AI-generated code is no longer an experimental toy; it is now part of the day-to-day development workflows of organizations. Developers increasingly use AI tools to speed things up and offload the heavy lifting.

They are used to help write boilerplate code, create functions based on natural language prompts, identify problems in the code, recommend optimizations, and even generate test cases. Now developers often aren’t writing code from scratch; they’re reviewing, changing, and approving what AI produces.

This transformation redefines the essence of development work. It shifts from creation to curation, from writing to validating, and from direct execution to oversight and instruction.

This change in how software is developed has implications for testing that are not yet fully realized. Tests now have to deal not only with human business logic, but also with the assumptions and ambiguity that come with AI-created code.

Read: How to Validate AI-Generated Tests?

The Responsibility Gap Introduced by AI

Adding AI is like introducing a new actor into the system, one that has no intent, foresight, or accountability. Despite these limitations, it produces code that runs in production systems and causes real-world effects. This presents an unusual challenge: the output has real impact, but the tool that produced it cannot be held responsible.

This results in a growing responsibility gap in modern engineering workflows. AI writes the code, humans approve it, systems run it, and users feel the effects. Each link is connected, yet accountability is diffused throughout the chain.

The question of ownership becomes difficult to establish when something goes awry. The responsibility is no longer neatly associated with a single entity, but dispersed across human-machine work. This uncertainty compels organizations to reassess and redefine what accountability means in development powered by AI.

Read: How to use AI to test AI.

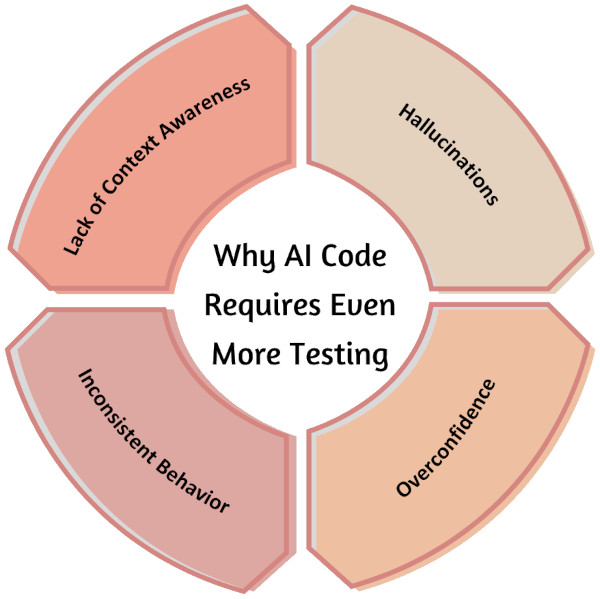

Why AI Code Requires Even More Testing

There is a popular misconception that AI-generated code is automatically smarter and thus requires less testing. In fact, the reverse is true; AI adds new levels of uncertainty so that more and better validation is required. With AI being a co-creator in development, testing needs to evolve to mitigate risks that are less visible yet all the more complex.

- Lack of Context Awareness: Since AI does not have a complete understanding of the system, it generates code based on learned patterns. This means it can easily miss edge cases, misunderstand requirements, or overlook integration dependencies that a human developer would normally take into account. For example, AI creates a payment processing function that works on the success path but does not handle failed retries or timeouts, resulting in jarring and inconsistent user experiences.

- Hallucinations: AI can produce outputs that appear correct but are actually fabricated or incorrect. This includes non-existent APIs, invalid logic, or assumptions that don’t align with the system. For instance, AI suggests using a function like getUserSessionToken() from a library that doesn’t actually provide such a method, causing runtime failures.

- Overconfidence: AI-generated code tends to be clean, structured, and convincing in appearance, which can lead to less critical review. The downside is that teams are more likely to accept flawed logic without proper validation. For example, a developer accepts an AI-generated sorting algorithm because it appears efficient, but its handling of less common cases (for example, duplicates and large datasets) is broken.

- Inconsistent Behavior: AI outputs are not always deterministic; the same prompt does not necessarily produce matching results every time it is run. This makes consistency and predictability difficult. For example, one day the AI generates an API response handler with proper error handling, while the next day, for the same prompt, it omits validation checks for null responses.
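The hallucination example above can be partly guarded against with a cheap automated check. The Python sketch below tests whether a module actually exposes a name before any code relies on it. The helper `api_exists` is a hypothetical name introduced for illustration, and the standard-library `json` module merely stands in for whatever library the generated code targets; `getUserSessionToken` is the made-up method from the example.

```python
import importlib


def api_exists(module_name: str, attribute: str) -> bool:
    """Return True if the module imports and exposes the given attribute."""
    try:
        module = importlib.import_module(module_name)
    except ImportError:
        # The module itself may be hallucinated or simply not installed.
        return False
    return hasattr(module, attribute)


# A real standard-library function is found...
print(api_exists("json", "loads"))
# ...while a made-up name, standing in for a hallucinated API, is not.
print(api_exists("json", "getUserSessionToken"))
```

A check like this only confirms that a name exists. It says nothing about whether the function behaves the way the generated code assumes, so it complements review and tests rather than replacing them.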

Code and Testing Ownership in an AI Era

Since AI has become embedded in the process of software development, the question of ownership is more complex than in the past. The traditional demarcation between roles starts to get fuzzy, creating ambiguity of responsibility for quality.

What it means to own testing: accountability among developers, QA engineers, and organizations in the era of AI.

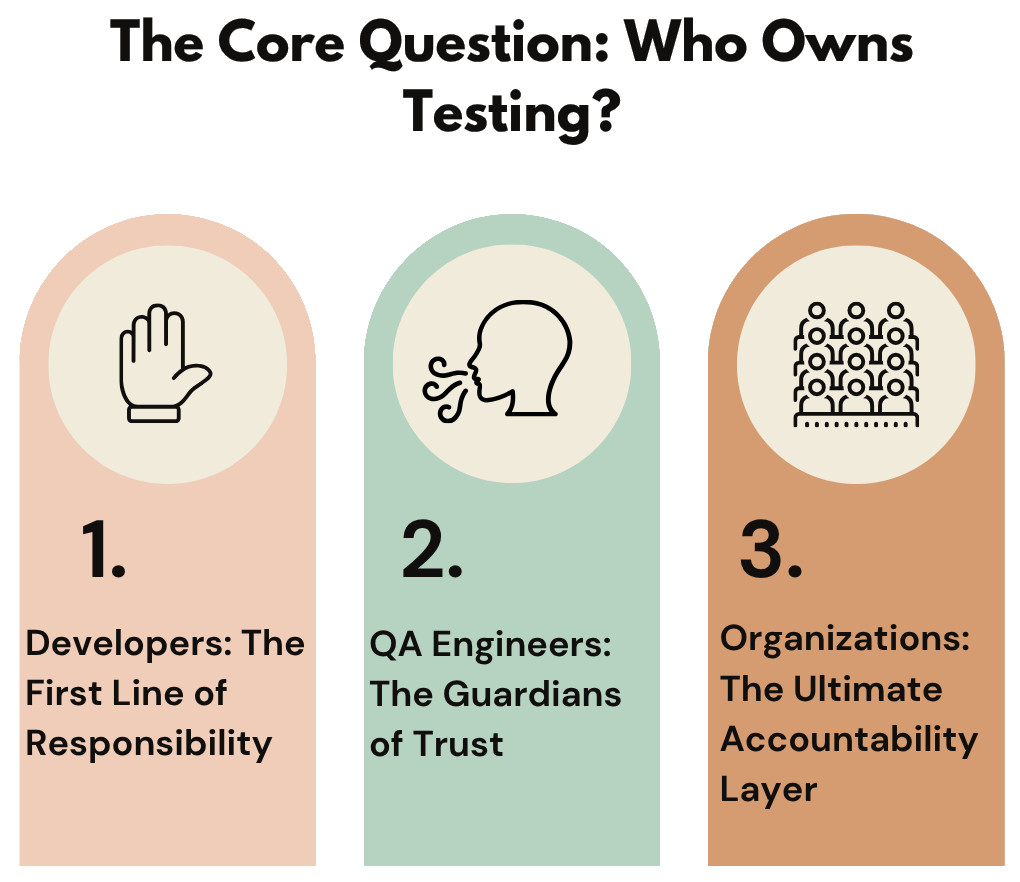

Developers: The First Line of Responsibility

Even if the code is produced through AI, it is up to developers to be the immediate owners of its quality. Responsibility for utilizing AI tools, evaluating generated output, and incorporating it into the system rests with them. That makes their role critical to ensuring that AI-generated code is not blindly accepted, but thoroughly validated instead.

Developer Responsibilities in an AI Era

- Validate AI-generated code before committing

- Fully understand the logic instead of copy-pasting

- Write additional tests where coverage is insufficient

- Question and verify AI assumptions

Read: A Tester’s Guide to Working Effectively with Developers.

QA Engineers: The Guardians of Trust

As development speeds up and AI increases code volume, QA becomes even more crucial. More code produced faster means more surface area for defects and vulnerabilities. QA needs to be the last line of defense that keeps quality in check at this breakneck speed.

QA Responsibilities in AI-Driven Development

- Thoroughly test AI-generated features

- Validate edge cases that AI may overlook

- Focus more on exploratory and scenario-based testing

- Identify patterns in AI-related defects

- Understand AI tool behavior and common failure modes

- Design tests targeting AI-specific risks

Organizations: The Ultimate Accountability Layer

At a high level, accountability lies with the organization, not the AI. It is up to the companies to decide whether to use AI tools and determine how they are integrated into development workflows. That means they set the criteria to ensure quality, security, and compliance.

Organizational Responsibilities

- Define clear policies for AI usage

- Establish mandatory review and approval guidelines

- Ensure security, compliance, and governance standards

- Provide training for teams using AI tools

- Define acceptable trust levels for AI-generated code

- Enforce testing and quality benchmarks across teams

The Myth of “AI will Test its Own Code”

Because AI can generate code, write tests, and validate outputs, it is tempting to believe that it can cover the entire development lifecycle on its own. The idea is attractive because it promises speed and efficiency with little human intervention.

For example, a team lets AI build both a feature and its test suite, assuming that end-to-end coverage can be achieved without manual work.

But this notion is fundamentally mistaken because AI does not possess genuine intent, understanding, contextual intelligence, or responsibility. It doesn’t “know” why a feature exists, or how users may engage with it in unpredictable ways in practice. For example, AI produces a login flow that works technically but gives no consideration to scenarios such as account lockout or unexpected user behavior.

Read: Does AI Confabulate or Hallucinate?

AI-generated tests also inherit the same limitations as the code they validate. They often reinforce the same assumptions instead of challenging them, which reduces their effectiveness.

This can lead to situations where:

- Tests mirror the same flawed logic as the generated code

- Critical edge cases are not covered

- Incorrect behavior is validated as correct

If AI generates both a function and its test, the test may confirm only the expected (but flawed) logic and ignore failure scenarios.

This creates a dangerous feedback loop where issues remain hidden despite passing tests. The system appears stable, but underlying defects go undetected until real users encounter them.

This loop typically looks like:

- AI writes the code

- AI generates the tests

- Tests pass successfully

- Bugs still exist in production

For instance, an AI-generated pricing calculation passes all tests but produces incorrect totals under rare discount conditions, discovered only after customer complaints.
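The pricing scenario above can be sketched in a few lines. The Python example below is illustrative only: `total_price`, the uncapped-discount flaw, and the rule that a total must never be negative are all assumptions made for the sketch, not details from a real system. It contrasts a test that mirrors the implementation with one derived independently from a requirement.

```python
from decimal import Decimal


def total_price(unit_price: Decimal, quantity: int, discount_pct: Decimal) -> Decimal:
    # Hypothetical stand-in for generated code. Hidden flaw: the discount
    # is never capped, so values above 100 produce a negative total.
    return unit_price * quantity * (Decimal(1) - discount_pct / Decimal(100))


def mirrored_test() -> bool:
    # Restates the implementation's own formula on a happy-path input,
    # so it passes whether or not the formula is right.
    expected = Decimal("10") * 2 * (Decimal(1) - Decimal("10") / Decimal(100))
    return total_price(Decimal("10"), 2, Decimal("10")) == expected


def requirement_test() -> bool:
    # Derived from an assumed business rule rather than from the code:
    # a customer total must never be negative, even with stacked discounts.
    return total_price(Decimal("10"), 2, Decimal("120")) >= 0


print("mirrored test passes:", mirrored_test())
print("requirement test passes:", requirement_test())
```

The mirrored test passes while the requirement-based test fails, which is the feedback loop in miniature: the suite is green, but only because it asks the code to agree with itself.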

AI Code and Testing: Shared Responsibility Model

The most practical approach in an AI-driven development environment is to adopt a shared responsibility model. Instead of eliminating ownership, AI redistributes it across multiple stakeholders who must collaboratively ensure quality and reliability.

- Developers: They remain responsible for the code that enters the system, regardless of whether it is AI-generated. Their role shifts toward validating, understanding, and approving the output before integration.

  - Developers must carefully validate and approve AI-generated code before committing it.

  - They need to ensure unit-level correctness by thoroughly reviewing logic and writing necessary tests.

- QA Engineers: They play a critical role in ensuring that the system behaves correctly under real-world conditions. Their focus expands to identifying risks and gaps that AI and developers may overlook.

  - QA engineers must validate overall behavior and ensure the quality of AI-generated features.

  - They are responsible for identifying gaps, edge cases, and potential risks in the system.

- AI Tools: These tools act as enablers that enhance productivity but do not take ownership of outcomes. They should be treated as assistants rather than decision-makers in the development process.

  - AI tools should assist in generating code and tests, but not replace human judgment.

  - They can accelerate development speed, but they do not guarantee correctness or quality.

- Organizations: They hold the ultimate responsibility for how AI is adopted and governed within development workflows. They must create a structure that ensures accountability across teams.

  - Organizations must define governance policies for the use of AI in development.

  - They are responsible for ensuring accountability, quality standards, and compliance across all teams.

AI-Generated Code Testing: Strategies

Knowing the risks of AI-generated code is just the start. What matters is how teams change their testing approaches to meet these new challenges. In the world of AI, traditional testing methods alone are no longer enough. Testing needs to be much more intentional, risk-based, and adaptive so that trustworthiness can be demonstrated.

- Risk-Based Testing for AI Code: Testing must focus on areas where AI-generated code is more prone to failure and to introducing hidden defects. These are typically the areas that involve core business logic, integrations, and high-impact user flows. For example, AI-generated payment processing logic requires more testing than simple UI components.

- Edge Case and Negative Testing: Edge case testing is important because AI struggles to capture outlying conditions. Negative testing reveals how the system reacts to bad or out-of-range inputs. For instance, test how an AI-generated form handler responds to null values, excessively large inputs, or invalid formats.

- Exploratory Testing Approaches: Since AI may introduce behavior that scripted test cases do not cover, exploratory testing becomes highly relevant. Testers explore the system to find unexpected behavior and latent defects. For example, a tester manually navigates through an AI-generated workflow to uncover inconsistent state transitions.

- Security and Performance Validation: AI-generated code can contain vulnerabilities or inefficient logic that degrades system performance. Dedicated testing may be needed to meet security and scalability standards. For example, pinpointing SQL injection vulnerabilities in generated queries or observing performance bottlenecks under heavy load.

- Regression Testing: Frequent AI-generated changes can introduce unexpected side effects in existing functionality. Regression testing ensures that anything new does not break what was previously working. For instance, when AI-generated enhancements are merged into the product, a complete regression suite is executed to confirm that existing workflows still behave as before.
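As a minimal sketch of the negative testing described in the list above, the Python example below feeds missing, null, oversized, and malformed input to a form handler. `validate_signup`, its `email` field, and the 254-character limit are hypothetical choices made for illustration; a real handler would have its own rules.

```python
def validate_signup(form: dict) -> list[str]:
    """Hypothetical form handler: returns validation errors (empty if valid)."""
    errors = []
    email = form.get("email")
    if not isinstance(email, str) or not email.strip():
        errors.append("email is required")
    elif len(email) > 254:
        errors.append("email is too long")
    elif email.count("@") != 1 or email.startswith("@") or email.endswith("@"):
        errors.append("email format is invalid")
    return errors


# Negative cases: each input is bad in a different way and must be rejected.
NEGATIVE_CASES = [
    ({}, "email is required"),                 # field missing
    ({"email": None}, "email is required"),    # null value
    ({"email": "   "}, "email is required"),   # whitespace only
    ({"email": "a" * 10_000 + "@example.com"}, "email is too long"),
    ({"email": "not-an-email"}, "email format is invalid"),
]

for form, expected_error in NEGATIVE_CASES:
    assert validate_signup(form) == [expected_error], form

# One positive case confirms the handler is not simply rejecting everything.
assert validate_signup({"email": "user@example.com"}) == []
print("all negative and positive cases behaved as expected")
```

Keeping the bad inputs in a table makes it easy for a reviewer to see which failure modes are covered and to add new ones as AI-related defect patterns are discovered.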

The Future of Accountability

As AI continues to take on a larger role in software creation, the question of accountability will become even more critical. Organizations must proactively redefine responsibility models to ensure quality, trust, and control in increasingly AI-driven systems.

- AI will contribute to writing larger portions of software systems, reducing direct human authorship.

- System complexity and associated risks will increase as AI-generated components grow in scale.

- Strong governance frameworks and standardized practices will become essential for managing AI usage.

- Human accountability will remain central, as ultimate responsibility cannot be delegated to AI.

Read: How to Keep Human In The Loop (HITL) During Gen AI Testing?

Wrapping Up

AI-generated code rearranges traditional ownership in software development: testing becomes a shared responsibility among developers, QA engineers, and the organization, rather than being assigned to a single role. AI speeds up development but also increases risk. It introduces new complexities such as hallucinations, missing context, and behavior that does not match requirements, all of which call for different and deeper testing strategies.

In the end, human accountability is critical, and organizations need strong governance and validation models that give their leaders well-founded confidence in their AI-assisted systems.

FAQs

- Can AI-generated code introduce legal or licensing risks? Yes, AI-generated code may unintentionally replicate patterns from copyrighted sources. This can expose organizations to compliance and intellectual property risks if not reviewed carefully.

- How can teams measure the quality of AI-generated code effectively? Teams should go beyond traditional metrics like code coverage and include AI-specific indicators such as output consistency. Monitoring defect patterns linked to AI-generated components is also essential.

- Should AI-generated code be treated differently in code reviews? Yes, it requires more rigorous and structured reviews compared to human-written code. Reviewers must validate assumptions, logic accuracy, and hidden dependencies.
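One way to make the output-consistency indicator from the FAQ above concrete is to run several generated variants of the same function on identical inputs and count how often they agree. In the Python sketch below, `outcome`, `consistency_rate`, `variant_a`, and `variant_b` are hypothetical names; the two variants imitate the earlier example of a response handler that sometimes omits the null check.

```python
from typing import Any, Callable, Sequence


def outcome(fn: Callable[[Any], Any], value: Any) -> tuple:
    """Run fn(value) and summarize the result or the exception type."""
    try:
        return ("ok", repr(fn(value)))
    except Exception as exc:
        return ("error", type(exc).__name__)


def consistency_rate(variants: Sequence[Callable[[Any], Any]],
                     inputs: Sequence[Any]) -> float:
    """Fraction of inputs on which every variant yields the same outcome."""
    agreeing = sum(
        1 for value in inputs
        if len({outcome(fn, value) for fn in variants}) == 1
    )
    return agreeing / len(inputs)


def variant_a(response):
    # Handles a null response explicitly.
    if response is None:
        return 0
    return len(response["items"])


def variant_b(response):
    # Omits the null check, so a None response raises TypeError.
    return len(response["items"])


inputs = [{"items": [1, 2]}, {"items": []}, None]
rate = consistency_rate([variant_a, variant_b], inputs)
print(f"variants agree on {rate:.0%} of inputs")
```

A low rate does not say which variant is right, only that the generated outputs disagree and need human review before one of them is accepted.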
